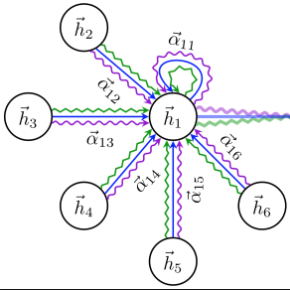

Adequate strategizing of agents behaviors is essential to solving cooperative MARL problems. One intuitively beneficial yet uncommon method in this domain is predicting agents future behaviors and planning accordingly. Leveraging this point, we propose a two-level hierarchical architecture that combines a novel information-theoretic objective with a trajectory prediction model to learn a strategy. To this end, we introduce a latent policy that learns two types of latent strategies: individual $z_A$, and relational $z_R$ using a modified Graph Attention Network module to extract interaction features. We encourage each agent to behave according to the strategy by conditioning its local $Q$ functions on $z_A$, and we further equip agents with a shared $Q$ function that conditions on $z_R$. Additionally, we introduce two regularizers to allow predicted trajectories to be accurate and rewarding. Empirical results on Google Research Football (GRF) and StarCraft (SC) II micromanagement tasks show that our method establishes a new state of the art being, to the best of our knowledge, the first MARL algorithm to solve all super hard SC II scenarios as well as the GRF full game with a win rate higher than $95\%$, thus outperforming all existing methods. Videos and brief overview of the methods and results are available at: //sites.google.com/view/hier-strats-marl/home.

相關內容

We introduce a reinforcement learning framework for economic design problems. We model the interaction between the designer of the economic environment and the participants as a Stackelberg game: the designer (leader) sets up the rules, and the participants (followers) respond strategically. We model the followers via no-regret dynamics, which converge to a Bayesian Coarse-Correlated Equilibrium (B-CCE) of the game induced by the leader. We embed the followers' no-regret dynamics in the leader's learning environment, which allows us to formulate our learning problem as a POMDP. We call this POMDP the Stackelberg POMDP. We prove that the optimal policy of the Stackelberg POMDP achieves the same utility as the optimal leader's strategy in our Stackelberg game. We solve the Stackelberg POMDP using an actor-critic method, where the critic can access the joint information of all agents. Finally, we show that we are able to learn optimal leader strategies in a variety of settings, including scenarios where the leader is participating in or designing normal-form games, as well as settings with incomplete information that capture common aspects of indirect mechanism design such as limited communication and turn-taking play by agents.

Real-world cooperation often requires intensive coordination among agents simultaneously. This task has been extensively studied within the framework of cooperative multi-agent reinforcement learning (MARL), and value decomposition methods are among those cutting-edge solutions. However, traditional methods that learn the value function as a monotonic mixing of per-agent utilities cannot solve the tasks with non-monotonic returns. This hinders their application in generic scenarios. Recent methods tackle this problem from the perspective of implicit credit assignment by learning value functions with complete expressiveness or using additional structures to improve cooperation. However, they are either difficult to learn due to large joint action spaces or insufficient to capture the complicated interactions among agents which are essential to solving tasks with non-monotonic returns. To address these problems, we propose a novel explicit credit assignment method to address the non-monotonic problem. Our method, Adaptive Value decomposition with Greedy Marginal contribution (AVGM), is based on an adaptive value decomposition that learns the cooperative value of a group of dynamically changing agents. We first illustrate that the proposed value decomposition can consider the complicated interactions among agents and is feasible to learn in large-scale scenarios. Then, our method uses a greedy marginal contribution computed from the value decomposition as an individual credit to incentivize agents to learn the optimal cooperative policy. We further extend the module with an action encoder to guarantee the linear time complexity for computing the greedy marginal contribution. Experimental results demonstrate that our method achieves significant performance improvements in several non-monotonic domains.

While multi-agent trust region algorithms have achieved great success empirically in solving coordination tasks, most of them, however, suffer from a non-stationarity problem since agents update their policies simultaneously. In contrast, a sequential scheme that updates policies agent-by-agent provides another perspective and shows strong performance. However, sample inefficiency and lack of monotonic improvement guarantees for each agent are still the two significant challenges for the sequential scheme. In this paper, we propose the \textbf{A}gent-by-\textbf{a}gent \textbf{P}olicy \textbf{O}ptimization (A2PO) algorithm to improve the sample efficiency and retain the guarantees of monotonic improvement for each agent during training. We justify the tightness of the monotonic improvement bound compared with other trust region algorithms. From the perspective of sequentially updating agents, we further consider the effect of agent updating order and extend the theory of non-stationarity into the sequential update scheme. To evaluate A2PO, we conduct a comprehensive empirical study on four benchmarks: StarCraftII, Multi-agent MuJoCo, Multi-agent Particle Environment, and Google Research Football full game scenarios. A2PO consistently outperforms strong baselines.

We consider the problem of multi-agent navigation and collision avoidance when observations are limited to the local neighborhood of each agent. We propose InforMARL, a novel architecture for multi-agent reinforcement learning (MARL) which uses local information intelligently to compute paths for all the agents in a decentralized manner. Specifically, InforMARL aggregates information about the local neighborhood of agents for both the actor and the critic using a graph neural network and can be used in conjunction with any standard MARL algorithm. We show that (1) in training, InforMARL has better sample efficiency and performance than baseline approaches, despite using less information, and (2) in testing, it scales well to environments with arbitrary numbers of agents and obstacles. We illustrate these results using four task environments, including one with predetermined goals for each agent, and one in which the agents collectively try to cover all goals.

In this paper, we study the Tiered Reinforcement Learning setting, a parallel transfer learning framework, where the goal is to transfer knowledge from the low-tier (source) task to the high-tier (target) task to reduce the exploration risk of the latter while solving the two tasks in parallel. Unlike previous work, we do not assume the low-tier and high-tier tasks share the same dynamics or reward functions, and focus on robust knowledge transfer without prior knowledge on the task similarity. We identify a natural and necessary condition called the "Optimal Value Dominance" for our objective. Under this condition, we propose novel online learning algorithms such that, for the high-tier task, it can achieve constant regret on partial states depending on the task similarity and retain near-optimal regret when the two tasks are dissimilar, while for the low-tier task, it can keep near-optimal without making sacrifice. Moreover, we further study the setting with multiple low-tier tasks, and propose a novel transfer source selection mechanism, which can ensemble the information from all low-tier tasks and allow provable benefits on a much larger state-action space.

This work extends an existing virtual multi-agent platform called RoboSumo to create TripleSumo -- a platform for investigating multi-agent cooperative behaviors in continuous action spaces, with physical contact in an adversarial environment. In this paper we investigate a scenario in which two agents, namely `Bug' and `Ant', must team up and push another agent `Spider' out of the arena. To tackle this goal, the newly added agent `Bug' is trained during an ongoing match between `Ant' and `Spider'. `Bug' must develop awareness of the other agents' actions, infer the strategy of both sides, and eventually learn an action policy to cooperate. The reinforcement learning algorithm Deep Deterministic Policy Gradient (DDPG) is implemented with a hybrid reward structure combining dense and sparse rewards. The cooperative behavior is quantitatively evaluated by the mean probability of winning the match and mean number of steps needed to win.

Climate-induced disasters are and will continue to be on the rise, and thus search-and-rescue (SAR) operations, where the task is to localize and assist one or several people who are missing, become increasingly relevant. In many cases the rough location may be known and a UAV can be deployed to explore a given, confined area to precisely localize the missing people. Due to time and battery constraints it is often critical that localization is performed as efficiently as possible. In this work we approach this type of problem by abstracting it as an aerial view goal localization task in a framework that emulates a SAR-like setup without requiring access to actual UAVs. In this framework, an agent operates on top of an aerial image (proxy for a search area) and is tasked with localizing a goal that is described in terms of visual cues. To further mimic the situation on an actual UAV, the agent is not able to observe the search area in its entirety, not even at low resolution, and thus it has to operate solely based on partial glimpses when navigating towards the goal. To tackle this task, we propose AiRLoc, a reinforcement learning (RL)-based model that decouples exploration (searching for distant goals) and exploitation (localizing nearby goals). Extensive evaluations show that AiRLoc outperforms heuristic search methods as well as alternative learnable approaches, and that it generalizes across datasets, e.g. to disaster-hit areas without seeing a single disaster scenario during training. We also conduct a proof-of-concept study which indicates that the learnable methods outperform humans on average. Code and models have been made publicly available at //github.com/aleksispi/airloc.

Communication in multi-agent reinforcement learning has been drawing attention recently for its significant role in cooperation. However, multi-agent systems may suffer from limitations on communication resources and thus need efficient communication techniques in real-world scenarios. According to the Shannon-Hartley theorem, messages to be transmitted reliably in worse channels require lower entropy. Therefore, we aim to reduce message entropy in multi-agent communication. A fundamental challenge is that the gradients of entropy are either 0 or infinity, disabling gradient-based methods. To handle it, we propose a pseudo gradient descent scheme, which reduces entropy by adjusting the distributions of messages wisely. We conduct experiments on two base communication frameworks with six environment settings and find that our scheme can reduce message entropy by up to 90% with nearly no loss of cooperation performance.

Recently, deep multiagent reinforcement learning (MARL) has become a highly active research area as many real-world problems can be inherently viewed as multiagent systems. A particularly interesting and widely applicable class of problems is the partially observable cooperative multiagent setting, in which a team of agents learns to coordinate their behaviors conditioning on their private observations and commonly shared global reward signals. One natural solution is to resort to the centralized training and decentralized execution paradigm. During centralized training, one key challenge is the multiagent credit assignment: how to allocate the global rewards for individual agent policies for better coordination towards maximizing system-level's benefits. In this paper, we propose a new method called Q-value Path Decomposition (QPD) to decompose the system's global Q-values into individual agents' Q-values. Unlike previous works which restrict the representation relation of the individual Q-values and the global one, we leverage the integrated gradient attribution technique into deep MARL to directly decompose global Q-values along trajectory paths to assign credits for agents. We evaluate QPD on the challenging StarCraft II micromanagement tasks and show that QPD achieves the state-of-the-art performance in both homogeneous and heterogeneous multiagent scenarios compared with existing cooperative MARL algorithms.

Graph Neural Networks (GNNs), which generalize deep neural networks to graph-structured data, have drawn considerable attention and achieved state-of-the-art performance in numerous graph related tasks. However, existing GNN models mainly focus on designing graph convolution operations. The graph pooling (or downsampling) operations, that play an important role in learning hierarchical representations, are usually overlooked. In this paper, we propose a novel graph pooling operator, called Hierarchical Graph Pooling with Structure Learning (HGP-SL), which can be integrated into various graph neural network architectures. HGP-SL incorporates graph pooling and structure learning into a unified module to generate hierarchical representations of graphs. More specifically, the graph pooling operation adaptively selects a subset of nodes to form an induced subgraph for the subsequent layers. To preserve the integrity of graph's topological information, we further introduce a structure learning mechanism to learn a refined graph structure for the pooled graph at each layer. By combining HGP-SL operator with graph neural networks, we perform graph level representation learning with focus on graph classification task. Experimental results on six widely used benchmarks demonstrate the effectiveness of our proposed model.