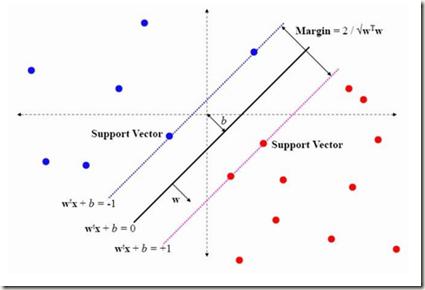

Speaker Verification (SV) systems consist mainly of two stages: feature extraction and classification. In this paper, we explore these two modules with the aim of improving the performance of a speaker verification system under noisy conditions. On the one hand, the choice of the most appropriate acoustic features is a crucial factor for performing robust speaker verification. The acoustic parameters used in the proposed system are: Mel Frequency Cepstral Coefficients (MFCC), their first and second derivatives (Deltas and Delta-Deltas), Bark Frequency Cepstral Coefficients (BFCC), Perceptual Linear Predictive (PLP), and Relative Spectral Transform - Perceptual Linear Predictive (RASTA-PLP). A complete comparison of different combinations of these features is discussed. On the other hand, the major weakness of a conventional Support Vector Machine (SVM) classifier is its reliance on generic, traditional kernel functions to compute the distances among data points, even though the kernel function has a great influence on SVM performance. In this work, we propose combining two SVM-based classifiers that use different kernel functions, a linear kernel and a Gaussian Radial Basis Function (RBF) kernel, with a Logistic Regression (LR) classifier. The combination is carried out by means of a parallel structure, in which different voting rules for taking the final decision are considered. Results show that a significant improvement in the performance of the SV system is achieved by using the combined features with the combined classifiers, both with clean speech and in the presence of noise. Finally, to further improve the system in noisy environments, the inclusion of a multiband noise removal technique as a preprocessing stage is proposed.
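The parallel combination described above can be pictured as three classifiers that each return an accept/reject decision for a trial, followed by a voting rule. The abstract does not say which voting rules were compared, so the three rules below (`majority`, `unanimous`, `any`), the function name `fuse`, and the example decisions are illustrative assumptions rather than the authors' configuration.

```python
def fuse(decisions, rule="majority"):
    """Fuse per-classifier accept (True) / reject (False) decisions into one.

    The rule names are hypothetical; they only illustrate how different
    voting rules can change the final decision of a parallel ensemble.
    """
    votes = sum(bool(d) for d in decisions)
    if rule == "majority":
        return votes * 2 > len(decisions)   # strictly more than half accept
    if rule == "unanimous":
        return votes == len(decisions)      # every classifier must accept
    if rule == "any":
        return votes >= 1                   # a single accept is enough
    raise ValueError(f"unknown voting rule: {rule}")


# Hypothetical decisions for one verification trial from the three classifiers.
trial = {"svm_linear": True, "svm_rbf": False, "logistic_regression": True}
decisions = list(trial.values())

for rule in ("majority", "unanimous", "any"):
    print(rule, fuse(decisions, rule))
```

With three binary voters the majority rule can never tie, which is one practical reason to combine an odd number of classifiers.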

Related content

Hyper-redundant Robotic Manipulators (HRMs) offer great dexterity and flexibility of operation, but solving Inverse Kinematics (IK) is challenging. In this work, we introduce VO-FABRIK, an algorithm combining Forward and Backward Reaching Inverse Kinematics (FABRIK) for repeatable deterministic IK computation, and an approach inspired from velocity obstacles to perform path planning under collision and joint limits constraints. We show preliminary results on an industrial HRM with 19 actuated joints. Our algorithm achieves good performance where a state-of-the-art IK solver fails.
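For readers unfamiliar with FABRIK, the sketch below shows the basic unconstrained iteration on a planar chain of point joints. It is not VO-FABRIK: the velocity-obstacle planning, collision handling, and joint limits mentioned in the abstract are omitted, and the function names, tolerance, and iteration cap are assumptions chosen for illustration.

```python
import math


def _step_toward(a, b, length):
    """Return the point at distance `length` from `a` along the ray a -> b."""
    d = math.dist(a, b)
    if d == 0.0:
        # Degenerate case: the points coincide, so pick an arbitrary direction.
        return (a[0] + length, a[1])
    t = length / d
    return (a[0] + t * (b[0] - a[0]), a[1] + t * (b[1] - a[1]))


def fabrik(joints, target, tol=1e-6, max_iter=200):
    """Basic planar FABRIK. `joints` lists (x, y) positions, base first."""
    pts = [tuple(p) for p in joints]
    lengths = [math.dist(pts[i], pts[i + 1]) for i in range(len(pts) - 1)]
    base = pts[0]

    if math.dist(base, target) > sum(lengths):
        # Target out of reach: stretch the chain straight toward it.
        for i, length in enumerate(lengths):
            pts[i + 1] = _step_toward(pts[i], target, length)
        return pts

    for _ in range(max_iter):
        if math.dist(pts[-1], target) <= tol:
            break
        # Forward reaching: put the end effector on the target, work to the base.
        pts[-1] = tuple(target)
        for i in range(len(pts) - 2, -1, -1):
            pts[i] = _step_toward(pts[i + 1], pts[i], lengths[i])
        # Backward reaching: put the base back in place, work to the end effector.
        pts[0] = base
        for i in range(len(lengths)):
            pts[i + 1] = _step_toward(pts[i], pts[i + 1], lengths[i])
    return pts


# Three unit-length links lying along the x-axis, reaching for (1, 2).
chain = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0), (3.0, 0.0)]
solved = fabrik(chain, (1.0, 2.0))
print("end effector:", solved[-1])
```

Because every pass is a fixed sequence of projections with no random initialization, the same start configuration and target always give the same solution, which matches the "repeatable deterministic" property the abstract highlights.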

A Kochen-Specker (KS) set is a finite collection of vectors on the two-sphere containing no antipodal pairs for which it is impossible to assign 0s and 1s such that no two orthogonal vectors are assigned 1 and exactly one vector in every triplet of mutually orthogonal vectors is assigned 1. The existence of KS sets lies at the heart of Kochen and Specker's argument against non-contextual hidden variable theories and the Conway-Kochen free will theorem. Identifying small KS sets can simplify these arguments and may contribute to the understanding of the role played by contextuality in quantum protocols. In this paper we derive a weak lower bound of 10 vectors for the size of any KS set by studying the opposite notion of large non-KS sets and using a probability argument that is independent of the graph structure of KS sets. We also point out an interesting connection with a generalisation of the moving sofa problem around a right-angled hallway on the two-sphere.

In this paper, we apply the Paired-Explicit Runge-Kutta (P-ERK) schemes by Vermeire et al. (2019, 2022) to dynamically partitioned systems arising from adaptive mesh refinement. The P-ERK schemes enable multirate time-integration with no changes in the spatial discretization methodology, making them readily implementable in existing codes that employ a method-of-lines approach. We show that speedup compared to a range of state-of-the-art Runge-Kutta methods can be realized, despite additional overhead due to the dynamic re-assignment of flagging variables and restricting nonlinear stability properties. The effectiveness of the approach is demonstrated for a range of simulation setups for viscous and inviscid convection-dominated compressible flows for which we provide a reproducibility repository. In addition, we perform a thorough investigation of the nonlinear stability properties of the Paired-Explicit Runge-Kutta schemes regarding limitations due to the violation of monotonicity properties of the underlying spatial discretization. Furthermore, we present a novel approach for estimating the relevant eigenvalues of large Jacobians required for the optimization of stability polynomials.

This paper introduces a new formulation that finds the optimum for the Moving-Target Traveling Salesman Problem (MT-TSP), which seeks to find a shortest path for an agent that starts at a depot, visits a set of moving targets exactly once within their assigned time-windows, and returns to the depot. The formulation relies on the key idea that when the targets move along lines, their trajectories become convex sets within the space-time coordinate system. The problem then reduces to finding the shortest path within a graph of convex sets, subject to some speed constraints. We compare our formulation with the current state-of-the-art Mixed Integer Conic Program (MICP) solver for the MT-TSP. The experimental results show that our formulation outperforms the MICP for instances with up to 20 targets, with up to two orders of magnitude reduction in runtime, and up to a 60% tighter optimality gap. We also show that the solution cost from the convex relaxation of our formulation provides significantly tighter lower bounds for the MT-TSP than the ones from the MICP.
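To make the space-time picture concrete, the sketch below computes the earliest time at which an agent with a speed limit can meet one target moving along a line. This is only a single-leg building block, not the graph-of-convex-sets formulation from the abstract, and it assumes the agent is strictly faster than the target; the function name and arguments are hypothetical. With relative offset q at departure, target velocity v, and agent speed s, the meeting delay tau solves |q + v tau| = s tau, a quadratic in tau.

```python
import math


def earliest_intercept(agent_pos, t0, agent_speed, target_p0, target_vel):
    """Earliest time t >= t0 at which the agent can reach the moving target.

    The target is at target_p0 at time 0 and moves with constant velocity
    target_vel. Assumes agent_speed > |target_vel| (an illustrative assumption).
    """
    vx, vy = target_vel
    # Offset from the agent to the target at the departure time t0.
    qx = target_p0[0] + vx * t0 - agent_pos[0]
    qy = target_p0[1] + vy * t0 - agent_pos[1]

    a = vx * vx + vy * vy - agent_speed ** 2   # negative when the agent is faster
    if a >= 0:
        raise ValueError("this sketch assumes the agent is faster than the target")
    b = 2.0 * (qx * vx + qy * vy)
    c = qx * qx + qy * qy

    # With a < 0 and c >= 0 the roots have opposite signs; this is the
    # non-negative one.
    tau = (-b - math.sqrt(b * b - 4.0 * a * c)) / (2.0 * a)
    return t0 + tau


# Agent at the origin with speed 2; target starts at (3, 0) and moves at (0, 1).
t_meet = earliest_intercept((0.0, 0.0), 0.0, 2.0, (3.0, 0.0), (0.0, 1.0))
print(round(t_meet, 3))  # 1.732, i.e. sqrt(3)
```

A time-window check then reduces to testing whether the returned time falls inside the target's assigned interval.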

This paper explores the potential of Physics-Informed Neural Networks (PINNs) to serve as Reduced Order Models (ROMs) for simulating the flow field within stirred tank reactors (STRs). We solve the two-dimensional stationary Navier-Stokes equations within a geometrically intricate domain and explore methodologies that allow us to integrate additional physical insights into the model. These approaches include imposing the Dirichlet boundary conditions (BCs) strongly and employing domain decomposition (DD), with both overlapping and non-overlapping subdomains. We adapt the Extended Physics-Informed Neural Network (XPINN) approach to solve different sets of equations in distinct subdomains based on the diverse flow characteristics present in each region. Our exploration results in a hierarchy of models spanning various levels of complexity, where the best models exhibit l1 prediction errors of less than 1% for both pressure and velocity. To illustrate the reproducibility of our approach, we track the errors over repeated independent training runs of the best identified model and show its reliability. Subsequently, by incorporating the stirring rate as a parametric input, we develop a fast-to-evaluate model of the flow capable of interpolating across a wide range of Reynolds numbers. Although we exclusively restrict ourselves to STRs in this work, we conclude that the steps taken to obtain the presented model hierarchy can be transferred to other applications.

This paper introduces BLaIR, a series of pretrained sentence embedding models specialized for recommendation scenarios. BLaIR is trained to learn correlations between item metadata and potential natural language context, which is useful for retrieving and recommending items. To pretrain BLaIR, we collect Amazon Reviews 2023, a new dataset comprising over 570 million reviews and 48 million items from 33 categories, significantly expanding beyond the scope of previous versions. We evaluate the generalization ability of BLaIR across multiple domains and tasks, including a new task named complex product search, referring to retrieving relevant items given long, complex natural language contexts. Leveraging large language models like ChatGPT, we correspondingly construct a semi-synthetic evaluation set, Amazon-C4. Empirical results on the new task, as well as conventional retrieval and recommendation tasks, demonstrate that BLaIR exhibits strong text and item representation capacity. Our datasets, code, and checkpoints are available at: //github.com/hyp1231/AmazonReviews2023.

Most continual segmentation methods tackle the problem as a per-pixel classification task. However, such a paradigm is very challenging, and we find query-based segmenters with built-in objectness have inherent advantages compared with per-pixel ones, as objectness has strong transfer ability and forgetting resistance. Based on these findings, we propose CoMasTRe by disentangling continual segmentation into two stages: forgetting-resistant continual objectness learning and well-researched continual classification. CoMasTRe uses a two-stage segmenter learning class-agnostic mask proposals at the first stage and leaving recognition to the second stage. During continual learning, a simple but effective distillation is adopted to strengthen objectness. To further mitigate the forgetting of old classes, we design a multi-label class distillation strategy suited for segmentation. We assess the effectiveness of CoMasTRe on PASCAL VOC and ADE20K. Extensive experiments show that our method outperforms per-pixel and query-based methods on both datasets. Code will be available at //github.com/jordangong/CoMasTRe.

Computer models play a crucial role in numerous scientific and engineering domains. To ensure the accuracy of simulations, it is essential to properly calibrate the input parameters of these models through statistical inference. While Bayesian inference is the standard approach for this task, employing Markov Chain Monte Carlo methods often encounters computational hurdles due to the costly evaluation of likelihood functions and slow mixing rates. Although variational inference (VI) can be a fast alternative to traditional Bayesian approaches, VI has limited applicability due to boundary issues and local optima problems. To address these challenges, we propose flexible VI methods based on deep generative models that do not require parametric assumptions on the variational distribution. We embed a surjective transformation in our framework to avoid posterior truncation at the boundary. Additionally, we provide theoretical conditions that guarantee the success of the algorithm. Furthermore, our temperature annealing scheme can prevent being trapped in local optima through a series of intermediate posteriors. We apply our method to infectious disease models and a geophysical model, illustrating that the proposed method can provide fast and accurate inference compared to its competitors.

This paper presents an exhaustive quantitative and qualitative evaluation of Large Language Models (LLMs) for Knowledge Graph (KG) construction and reasoning. We employ eight distinct datasets that encompass aspects including entity, relation and event extraction, link prediction, and question answering. Empirically, our findings suggest that GPT-4 outperforms ChatGPT in the majority of tasks and even surpasses fine-tuned models in certain reasoning and question-answering datasets. Moreover, our investigation extends to the potential generalization ability of LLMs for information extraction, which culminates in the presentation of the Virtual Knowledge Extraction task and the development of the VINE dataset. Drawing on these empirical findings, we further propose AutoKG, a multi-agent-based approach employing LLMs for KG construction and reasoning, which aims to chart the future of this field and offer exciting opportunities for advancement. We anticipate that our research can provide invaluable insights for future undertakings of KG. Code and datasets will be available at //github.com/zjunlp/AutoKG.

Many tasks in natural language processing can be viewed as multi-label classification problems. However, most of the existing models are trained with the standard cross-entropy loss function and use a fixed prediction policy (e.g., a threshold of 0.5) for all the labels, which completely ignores the complexity and dependencies among different labels. In this paper, we propose a meta-learning method to capture these complex label dependencies. More specifically, our method utilizes a meta-learner to jointly learn the training policies and prediction policies for different labels. The training policies are then used to train the classifier with the cross-entropy loss function, and the prediction policies are further implemented for prediction. Experimental results on fine-grained entity typing and text classification demonstrate that our proposed method can obtain more accurate multi-label classification results.
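The difference between a fixed prediction policy and label-specific ones can be shown with a small sketch. In the abstract the policies are learned by a meta-learner; here the per-label thresholds, label names, and probabilities are simply made-up values, and `predict_labels` is a hypothetical helper.

```python
def predict_labels(probs, thresholds, default=0.5):
    """Return the labels whose probability meets that label's threshold.

    `probs` maps label -> predicted probability; `thresholds` maps
    label -> decision threshold. Labels missing from `thresholds`
    fall back to `default`.
    """
    return [label for label, p in probs.items()
            if p >= thresholds.get(label, default)]


# Hypothetical classifier output for one entity mention.
probs = {"person": 0.92, "artist": 0.41, "politician": 0.07}

# Fixed policy: the same 0.5 threshold for every label.
fixed = predict_labels(probs, {})

# Label-specific policy: a lower (made-up) threshold for the fine-grained
# label "artist", standing in for what a learned prediction policy might pick.
per_label = predict_labels(probs, {"artist": 0.35})

print(fixed)      # ['person']
print(per_label)  # ['person', 'artist']
```

The fixed policy drops the fine-grained label even though it co-occurs naturally with its parent type, which is the kind of label dependency the abstract says a single threshold ignores.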