We consider the problem of computing a sparse binary representation of an image. To be precise, given an image and an overcomplete, non-orthonormal basis, we aim to find a sparse binary vector indicating the minimal set of basis vectors that, when added together, best reconstruct the given input. We formulate this problem with an $L_2$ loss on the reconstruction error and an $L_0$ (or, equivalently for binary vectors, an $L_1$) penalty on the binary vector to enforce sparsity. This yields a Quadratic Unconstrained Binary Optimization (QUBO) problem, which is NP-hard to solve in general. The contribution of this work is twofold. First, we present a method for unsupervised, unnormalized dictionary feature learning that best matches the data at a desired sparsity level. Second, we solve the binary sparse coding problem on the Loihi 1 neuromorphic chip, using stochastic networks of neurons to traverse the non-convex energy landscape. The solutions are benchmarked against the classical heuristic simulated annealing. We demonstrate that neuromorphic computing is suitable for sampling low-energy solutions of binary sparse coding QUBO models. Although Loihi 1 is capable of sampling very sparse solutions of these QUBO models, the implementation needs improvement to be competitive with simulated annealing.
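To make the formulation concrete, the following is a minimal sketch of how the $L_2$ reconstruction loss plus an $L_0$ penalty on a binary vector can be folded into a QUBO matrix, together with a plain simulated annealing baseline. The names `build_qubo`, `energy`, and `simulated_annealing`, the penalty weight `lam`, and the geometric cooling schedule are illustrative assumptions, not the authors' implementation. The construction relies only on the identity $a_i^2 = a_i$ for binary $a_i$.

```python
import numpy as np

def build_qubo(D, x, lam):
    """Return symmetric Q with a^T Q a + x^T x == ||x - D a||^2 + lam * sum(a)
    for every binary vector a. D holds one dictionary element per column."""
    G = D.T @ D                       # Gram matrix of the dictionary
    Q = G.copy()
    # For binary a, a_i^2 == a_i, so all linear terms move onto the diagonal.
    Q[np.diag_indices_from(Q)] = np.diag(G) - 2.0 * (D.T @ x) + lam
    return Q

def energy(Q, a):
    """QUBO objective a^T Q a."""
    return float(a @ Q @ a)

def simulated_annealing(Q, n_sweeps=200, t_start=2.0, t_end=0.01, seed=0):
    """Single-bit-flip simulated annealing (hypothetical schedule parameters)."""
    rng = np.random.default_rng(seed)
    n = Q.shape[0]
    a = rng.integers(0, 2, size=n).astype(float)
    e = energy(Q, a)
    best, best_e = a.copy(), e
    for t in np.geomspace(t_start, t_end, n_sweeps):
        for i in rng.permutation(n):
            delta = 1.0 - 2.0 * a[i]  # +1 when flipping 0 -> 1, -1 otherwise
            # Energy change of flipping bit i (valid because Q is symmetric).
            d_e = 2.0 * delta * (Q[i] @ a) + Q[i, i]
            if d_e <= 0 or rng.random() < np.exp(-d_e / t):
                a[i] += delta
                e += d_e
                if e < best_e:
                    best, best_e = a.copy(), e
    return best, best_e
```

A larger `lam` raises the cost of every active bit and therefore pushes the minimizer toward sparser codes; the constant $x^\top x$ is dropped from the QUBO because it does not affect the minimizer.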

Related content

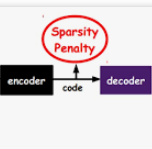

Neuromorphic processors have garnered considerable interest in recent years for their potential in energy-efficient and high-speed computing. The Locally Competitive Algorithm (LCA) has been utilized for power-efficient sparse coding on neuromorphic processors, including the first Loihi processor. With the Loihi 2 processor enabling custom neuron models and graded spike communication, more complex implementations of LCA are possible. We present a new implementation of LCA designed for the Loihi 2 processor and perform an initial set of benchmarks comparing it to LCA on CPU and GPU devices. In these experiments, LCA on Loihi 2 is orders of magnitude more efficient and faster for large sparsity penalties, while maintaining similar reconstruction quality. We find that this performance improvement increases as the LCA parameters are tuned towards greater representation sparsity. Our study highlights the potential of neuromorphic processors, particularly Loihi 2, in enabling intelligent, autonomous, real-time processing on small robots and satellites, where there are strict SWaP (small, lightweight, and low power) requirements. By demonstrating the superior performance of LCA on Loihi 2 compared to conventional computing devices, our study suggests that Loihi 2 could be a valuable tool in advancing these types of applications. Overall, our study highlights the potential of neuromorphic processors for efficient and accurate data processing on resource-constrained devices.
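For readers unfamiliar with LCA, here is a minimal CPU sketch of the commonly used soft-threshold form of the algorithm, integrated with forward Euler steps. It is not the Loihi 2 implementation described above; the function names, the time constant `tau`, the step count, and the assumption of unit-norm dictionary columns are all illustrative choices.

```python
import numpy as np

def soft_threshold(u, lam):
    """Map membrane potentials u to sparse activations."""
    return np.sign(u) * np.maximum(np.abs(u) - lam, 0.0)

def lca(D, x, lam=0.1, tau=10.0, n_steps=500):
    """Sparse code of x over dictionary D (columns assumed unit norm).

    Dynamics: tau * du/dt = D^T x - u - (D^T D - I) a, with a = soft_threshold(u).
    """
    b = D.T @ x                                   # feed-forward drive
    inhibit = D.T @ D - np.eye(D.shape[1])        # lateral competition weights
    u = np.zeros(D.shape[1])
    for _ in range(n_steps):
        a = soft_threshold(u, lam)
        u += (b - u - inhibit @ a) / tau          # forward Euler update
    return soft_threshold(u, lam)
```

In this sketch `lam` plays the role of the sparsity penalty: raising it silences more units, which is the regime where the abstract reports the largest gains on Loihi 2.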

Epidemiological models must be calibrated to ground truth for downstream tasks such as producing forward projections or running what-if scenarios. The meaning of calibration changes in case of a stochastic model since output from such a model is generally described via an ensemble or a distribution. Each member of the ensemble is usually mapped to a random number seed (explicitly or implicitly). With the goal of finding not only the input parameter settings but also the random seeds that are consistent with the ground truth, we propose a class of Gaussian process (GP) surrogates along with an optimization strategy based on Thompson sampling. This Trajectory Oriented Optimization (TOO) approach produces actual trajectories close to the empirical observations instead of a set of parameter settings where only the mean simulation behavior matches with the ground truth.

In the context of the high-dimensional Gaussian linear regression for ordered variables, we study the variable selection procedure via the minimization of the penalized least-squares criterion. We focus on model selection where the penalty function depends on an unknown multiplicative constant commonly calibrated for prediction. We propose a new proper calibration of this hyperparameter to simultaneously control predictive risk and false discovery rate. We obtain non-asymptotic theoretical bounds on the False Discovery Rate with respect to the hyperparameter and we provide an algorithm to calibrate it. It is based on completely observable quantities in view of applications. Our algorithm is validated by an extensive simulation study and is compared with some existing variable selection procedures. Finally, we propose a study to generalize our approach in complete variable selection.

Recently, Eldan, Koehler, and Zeitouni (2020) showed that Glauber dynamics mixes rapidly for general Ising models so long as the difference between the largest and smallest eigenvalues of the coupling matrix is at most $1 - \epsilon$ for any fixed $\epsilon > 0$. We give evidence that Glauber dynamics is in fact optimal for this "general-purpose sampling" task. Namely, we give an average-case reduction from hypothesis testing in a Wishart negatively-spiked matrix model to approximately sampling from the Gibbs measure of a general Ising model for which the difference between the largest and smallest eigenvalues of the coupling matrix is at most $1 + \epsilon$ for any fixed $\epsilon > 0$. Combined with results of Bandeira, Kunisky, and Wein (2019) that analyze low-degree polynomial algorithms to give evidence for the hardness of the former spiked matrix problem, our results in turn give evidence for the hardness of general-purpose sampling improving on Glauber dynamics. We also give a similar reduction to approximating the free energy of general Ising models, and again infer evidence that simulated annealing algorithms based on Glauber dynamics are optimal in the general-purpose setting.
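As a reference point for the sampler discussed above, the following is a minimal sketch of single-site Glauber dynamics for an Ising model with Gibbs measure proportional to $\exp(\tfrac{1}{2}\sigma^\top J \sigma + h^\top \sigma)$ on $\sigma \in \{\pm 1\}^n$. The function names and the convention for the external field `h` are assumptions made for illustration; `spectral_width` computes the eigenvalue gap of the coupling matrix that the abstract's condition refers to.

```python
import numpy as np

def spectral_width(J):
    """Difference between the largest and smallest eigenvalues of symmetric J."""
    ev = np.linalg.eigvalsh(J)        # eigenvalues in ascending order
    return float(ev[-1] - ev[0])

def glauber_step(sigma, J, h, rng):
    """Resample one uniformly chosen spin from its conditional distribution."""
    i = rng.integers(len(sigma))
    # Local field from all other spins (the diagonal term is a constant).
    field = J[i] @ sigma - J[i, i] * sigma[i] + h[i]
    p_up = 1.0 / (1.0 + np.exp(-2.0 * field))
    sigma[i] = 1 if rng.random() < p_up else -1
    return sigma

def glauber_sample(J, h, n_steps, seed=0):
    """Run n_steps of Glauber dynamics from a uniformly random start."""
    rng = np.random.default_rng(seed)
    sigma = rng.choice([-1, 1], size=J.shape[0])
    for _ in range(n_steps):
        glauber_step(sigma, J, h, rng)
    return sigma
```

One would check `spectral_width(J)` against the $1 - \epsilon$ threshold before relying on rapid mixing; the sketch itself makes no mixing-time guarantee.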

Spiking neural networks (SNNs) are brain-inspired energy-efficient models that encode information in spatiotemporal dynamics. Recently, deep SNNs trained directly have shown great success in achieving high performance on classification tasks with very few time steps. However, how to design a directly-trained SNN for the regression task of object detection remains a challenging problem. To address this problem, we propose EMS-YOLO, a novel directly-trained SNN framework for object detection, which is the first attempt to train a deep SNN with surrogate gradients for object detection rather than with ANN-SNN conversion strategies. Specifically, we design a full-spike residual block, EMS-ResNet, which can effectively extend the depth of the directly-trained SNN with low power consumption. Furthermore, we theoretically analyze and prove that EMS-ResNet can avoid gradient vanishing or exploding. The results demonstrate that our approach outperforms the state-of-the-art ANN-SNN conversion methods (at least 500 time steps) with far fewer time steps (only 4 time steps). It is shown that our model can achieve comparable performance to the ANN with the same architecture while consuming 5.83 times less energy on the frame-based COCO Dataset and the event-based Gen1 Dataset.

Optimal Transport has sparked vivid interest in recent years, in particular thanks to the Wasserstein distance, which provides a geometrically sensible and intuitive way of comparing probability measures. For computational reasons, the Sliced Wasserstein (SW) distance was introduced as an alternative to the Wasserstein distance, and has seen uses for training generative Neural Networks (NNs). While convergence of Stochastic Gradient Descent (SGD) has been observed practically in such a setting, there is to our knowledge no theoretical guarantee for this observation. Leveraging recent works on convergence of SGD on non-smooth and non-convex functions by Bianchi et al. (2022), we aim to bridge that knowledge gap, and provide a realistic context under which fixed-step SGD trajectories for the SW loss on NN parameters converge. More precisely, we show that the trajectories approach the set of (sub)-gradient flow equations as the step decreases. Under stricter assumptions, we show a much stronger convergence result for noised and projected SGD schemes, namely that the long-run limits of the trajectories approach a set of generalised critical points of the loss function.
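To illustrate the loss under study, here is a minimal Monte Carlo sketch of the squared Sliced Wasserstein-2 distance between two empirical measures with the same number of points. The function name, the number of projections `n_proj`, and the equal-sample-size assumption are illustrative; the sort operation in this estimator is one source of the non-smoothness that the convergence analysis has to handle.

```python
import numpy as np

def sliced_wasserstein_sq(X, Y, n_proj=100, seed=0):
    """Monte Carlo estimate of SW_2^2 between point clouds X and Y.

    X and Y have shape (n, d) with the same n (assumption of this sketch).
    """
    rng = np.random.default_rng(seed)
    theta = rng.normal(size=(n_proj, X.shape[1]))
    theta /= np.linalg.norm(theta, axis=1, keepdims=True)  # unit directions
    # In 1D, optimal transport between equal-size samples matches sorted points.
    px = np.sort(X @ theta.T, axis=0)
    py = np.sort(Y @ theta.T, axis=0)
    return float(np.mean((px - py) ** 2))
```

In a training loop, `X` would be the generator output for a batch and `Y` a batch of data, with gradients taken with respect to the network parameters that produce `X`.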

Spiking neural networks (SNNs) are bio-plausible computing models with high energy efficiency. The temporal dynamics of neurons and synapses enable them to detect temporal patterns and generate sequences. While Backpropagation Through Time (BPTT) is traditionally used to train SNNs, it is not suitable for online learning in embedded applications due to its high computation and memory cost as well as extended latency. Previous works have proposed online learning algorithms, but they often utilize highly simplified spiking neuron models without synaptic dynamics and reset feedback, resulting in subpar performance. In this work, we present Spatiotemporal Online Learning for Synaptic Adaptation (SOLSA), specifically designed for online learning of SNNs composed of Leaky Integrate and Fire (LIF) neurons with exponentially decayed synapses and soft reset. The algorithm not only learns the synaptic weights but also adapts the temporal filters associated with the synapses. Compared to the BPTT algorithm, SOLSA has a much lower memory requirement and achieves a more balanced temporal workload distribution. Moreover, SOLSA incorporates enhancement techniques such as scheduled weight update, early-stop training, and adaptive synapse filters, which speed up convergence and enhance learning performance. When compared to other non-BPTT-based SNN learning algorithms, SOLSA demonstrates an average learning accuracy improvement of 14.2%. Furthermore, compared to BPTT, SOLSA achieves a 5% higher average learning accuracy with a 72% reduction in memory cost.
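The neuron model named above can be sketched in a few lines. This is a generic discrete-time LIF neuron with exponentially decaying synaptic traces and soft reset, written under assumed decay factors `alpha` (membrane) and `beta` (synapse); it shows only the forward dynamics and is not the SOLSA learning rule or the authors' exact equations.

```python
import numpy as np

def simulate_lif(spikes_in, w, alpha=0.9, beta=0.8, v_th=1.0):
    """Forward pass of one LIF neuron.

    spikes_in: array of shape (T, n_in) with 0/1 input spikes.
    w: synaptic weights of shape (n_in,).
    Returns the output spike train as an int array of shape (T,).
    """
    syn = np.zeros(spikes_in.shape[1])   # per-synapse filtered input
    v = 0.0                              # membrane potential
    out = np.zeros(spikes_in.shape[0], dtype=int)
    for t, s in enumerate(spikes_in):
        syn = beta * syn + s             # exponentially decaying synapse
        v = alpha * v + float(w @ syn)   # leaky integration
        if v >= v_th:
            out[t] = 1
            v -= v_th                    # soft reset: subtract the threshold
    return out
```

Soft reset keeps the residual potential above threshold instead of clearing it to zero, so the reset itself feeds back into later time steps; this is the reset feedback that simplified neuron models omit.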

This work describes the investigation of neuromorphic computing-based spiking neural network (SNN) models used to filter data from sensor electronics in high energy physics experiments conducted at the High Luminosity Large Hadron Collider. We present our approach for developing a compact neuromorphic model that filters out the sensor data based on the particle's transverse momentum with the goal of reducing the amount of data being sent to the downstream electronics. The incoming charge waveforms are converted to streams of binary-valued events, which are then processed by the SNN. We present our insights on the various system design choices - from data encoding to optimal hyperparameters of the training algorithm - for an accurate and compact SNN optimized for hardware deployment. Our results show that an SNN trained with an evolutionary algorithm and an optimized set of hyperparameters obtains a signal efficiency of about 91% with nearly half as many parameters as a deep neural network.

Consider the community detection problem in random hypergraphs under the non-uniform hypergraph stochastic block model (HSBM), where each hyperedge appears independently with some given probability depending only on the labels of its vertices. We establish, for the first time in the literature, a sharp threshold for exact recovery under this non-uniform case, subject to minor constraints; in particular, we consider the model with multiple communities ($K \geq 2$). One crucial point here is that by aggregating information from all the uniform layers, we may obtain exact recovery even in cases when this may appear impossible if each layer were considered alone. Two efficient algorithms that successfully achieve exact recovery above the threshold are provided. The theoretical analysis of our algorithms relies on the concentration and regularization of the adjacency matrix for non-uniform random hypergraphs, which could be of independent interest. We also address some open problems regarding parameter knowledge and estimation.

A mainstream type of current self-supervised learning methods pursues a general-purpose representation that can be well transferred to downstream tasks, typically by optimizing on a given pretext task such as instance discrimination. In this work, we argue that existing pretext tasks inevitably introduce biases into the learned representation, which in turn leads to biased transfer performance on various downstream tasks. To cope with this issue, we propose Maximum Entropy Coding (MEC), a more principled objective that explicitly optimizes on the structure of the representation, so that the learned representation is less biased and thus generalizes better to unseen downstream tasks. Inspired by the principle of maximum entropy in information theory, we hypothesize that a generalizable representation should be the one that admits the maximum entropy among all plausible representations. To make the objective end-to-end trainable, we propose to leverage the minimal coding length in lossy data coding as a computationally tractable surrogate for the entropy, and further derive a scalable reformulation of the objective that allows fast computation. Extensive experiments demonstrate that MEC learns a more generalizable representation than previous methods based on specific pretext tasks. It achieves state-of-the-art performance consistently on various downstream tasks, including not only ImageNet linear probe, but also semi-supervised classification, object detection, instance segmentation, and object tracking. Interestingly, we show that existing batch-wise and feature-wise self-supervised objectives can be seen as equivalent to low-order approximations of MEC. Code and pre-trained models are available at //github.com/xinliu20/MEC.