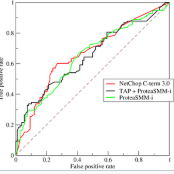

The Gaussian mechanism (GM) is a widely used tool for achieving differential privacy (DP), and a large body of work has been devoted to its analysis. We argue that the three prevailing interpretations of the GM, namely $(\varepsilon, \delta)$-DP, $f$-DP and Rényi DP, can be expressed using a single parameter $\psi$, which we term the sensitivity index. $\psi$ uniquely characterises the GM and its properties by encapsulating its two fundamental quantities: the sensitivity of the query and the magnitude of the noise perturbation. With strong links to the ROC curve and the hypothesis-testing interpretation of DP, $\psi$ offers the practitioner a powerful method for interpreting, comparing and communicating the privacy guarantees of Gaussian mechanisms.
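As a rough illustration of how one parameter can drive all three interpretations, the sketch below assumes that the sensitivity index is the ratio $\psi = \Delta/\sigma$ of the query's sensitivity $\Delta$ to the noise standard deviation $\sigma$. The abstract does not state this definition, so treat it as an assumption. The three formulas are the standard results for the Gaussian mechanism; the function names are hypothetical.

```python
import math
from statistics import NormalDist

_STD_NORMAL = NormalDist()  # standard normal, mean 0 and standard deviation 1


def sensitivity_index(sensitivity: float, sigma: float) -> float:
    """ASSUMPTION: psi = Delta / sigma (not spelled out in the abstract)."""
    return sensitivity / sigma


def delta_for_epsilon(psi: float, epsilon: float) -> float:
    """Tight delta of the Gaussian mechanism at a given epsilon ((eps, delta)-DP view)."""
    phi = _STD_NORMAL.cdf
    return phi(psi / 2 - epsilon / psi) - math.exp(epsilon) * phi(-psi / 2 - epsilon / psi)


def renyi_epsilon(psi: float, alpha: float) -> float:
    """Renyi DP guarantee of order alpha > 1 for the Gaussian mechanism."""
    return alpha * psi ** 2 / 2


def tradeoff_type2_error(psi: float, type1_error: float) -> float:
    """f-DP view: lowest type II error of any test with the given type I error."""
    return _STD_NORMAL.cdf(_STD_NORMAL.inv_cdf(1 - type1_error) - psi)


if __name__ == "__main__":
    psi = sensitivity_index(sensitivity=1.0, sigma=2.0)
    print("psi:", psi)
    print("delta at epsilon=1:", delta_for_epsilon(psi, 1.0))
    print("Renyi epsilon at alpha=2:", renyi_epsilon(psi, 2.0))
    print("type II error at type I error 0.05:", tradeoff_type2_error(psi, 0.05))
```

Under this assumption, two Gaussian mechanisms with the same ratio $\Delta/\sigma$ give identical values from all three functions, which is the sense in which a single number could summarise the guarantee.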

Related content

In Algorithmic Game Theory (AGT), designing efficient algorithms to compute Nash equilibria poses considerable challenges. We make progress in the field and shed new light on the intersection between Algorithmic Game Theory and Integer Programming. We introduce ZERO Regrets, a general cutting plane algorithm to compute, enumerate, and select Pure Nash Equilibria (PNEs) in Integer Programming Games, a class of simultaneous and non-cooperative games. We present a theoretical foundation for our algorithmic reasoning and provide a polyhedral characterization of the convex hull of the Pure Nash Equilibria. We introduce the concept of equilibrium inequality and devise an equilibrium separation oracle to separate non-equilibrium strategies from PNEs. We test ZERO Regrets on two paradigmatic classes of games: the Knapsack Game and the Network Formation Game, a well-studied game in AGT. Our algorithm successfully solves relevant instances of both games and shows promising applications for equilibria selection.

Recent research in differential privacy has demonstrated that (sub)sampling can amplify the level of protection. For example, for $\epsilon$-differential privacy and simple random sampling with sampling rate $r$, the actual privacy guarantee is approximately $r\epsilon$ if a value of $\epsilon$ is used to protect the output from the sample. In this paper, we study whether this amplification effect can be exploited systematically to improve the accuracy of the privatized estimate. Specifically, assuming the agency has information for the full population, we ask under which circumstances accuracy gains could be expected if the privatized estimate were computed on a random sample instead of the full population. We find that accuracy gains can be achieved for certain regimes. However, gains can typically only be expected if the sensitivity of the output with respect to small changes in the database does not depend too strongly on the size of the database. We focus only on algorithms that achieve differential privacy by adding noise to the final output and illustrate the accuracy implications for two commonly used statistics: the mean and the median. We see our research as a first step towards understanding the conditions required for accuracy gains in practice, and we hope that these findings will stimulate further research broadening the scope of differential privacy algorithms and outputs considered.
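The "approximately $r\epsilon$" statement can be checked numerically. The sketch below uses the commonly cited amplification bound $\epsilon' = \log\left(1 + r\,(e^{\epsilon} - 1)\right)$; whether the paper uses exactly this form is an assumption, and the precise bound depends on the sampling scheme and the neighbouring relation. The function names are hypothetical.

```python
import math


def amplified_epsilon(epsilon: float, rate: float) -> float:
    """Overall guarantee when an epsilon-DP mechanism runs on a sample drawn at the given rate.

    ASSUMPTION: uses the standard bound log(1 + r * (exp(eps) - 1)).
    """
    return math.log1p(rate * math.expm1(epsilon))


def sample_epsilon_for_target(target_epsilon: float, rate: float) -> float:
    """Inverse of the bound: epsilon usable on the sample to reach a target overall guarantee."""
    return math.log1p(math.expm1(target_epsilon) / rate)


if __name__ == "__main__":
    for eps in (0.1, 1.0, 5.0):
        for r in (0.01, 0.1, 0.5):
            print(f"eps={eps}, r={r}: amplified={amplified_epsilon(eps, r):.4f}, r*eps={r * eps:.4f}")
```

For small $\epsilon$ the two printed columns nearly coincide. For larger $\epsilon$ the bound exceeds $r\epsilon$, so the approximation is best read as a small-$\epsilon$ rule of thumb.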

Non-isolated systems have diverse coupling relations with the external environment. These relations generate complex thermodynamics and information transmission between the system and its environment. The framework presented in this research examines the critical role of the internal order of a non-isolated system in shaping the coupling between information and thermodynamics. We characterize the coupling as a generalized encoding process, where the system acts as an information thermodynamics encoder that encodes external information based on thermodynamics. We formalize the encoding process in the context of the nonequilibrium second law of thermodynamics, revealing an intrinsic difference in information thermodynamics characteristics between encoders with and without internal correlations. During the encoding of an external source $\mathsf{Y}$, specific sub-systems in an encoder $\mathsf{X}$ with internal correlations can exceed the information thermodynamics bound on $\left(\mathsf{X},\mathsf{Y}\right)$ and encode more information than system $\mathsf{X}$ does as a whole. We computationally verify this theoretical finding in an Ising model with a random external field and in a neural data set of the human brain during visual perception and recognition. Our analysis demonstrates that stronger internal correlation inside these systems implies a higher probability that specific sub-systems encode more information than the global one. These findings may suggest a new perspective for studying information thermodynamics in diverse physical and biological systems.

The arrival of immersive Virtual and Augmented Reality hardware on the consumer market suggests that seamless multi-modal communication between human participants and autonomous interactive characters is an achievable goal in the near future. This possibility is further reinforced by rapid improvements in the automated analysis of speech, facial expressions and body language, as well as improvements in character animation and speech synthesis techniques. However, we do not have a formal theory that allows us to compare, on one side, interactive social scenarios among human users and autonomous virtual characters and, on the other side, pragmatic inference mechanisms as they occur in non-mediated communication. Grice's and Sperber's model of inferential communication does explain the nature of everyday communication through cognitive mechanisms that support the spontaneous inferences performed in pragmatic communication. However, such a theory is not based on a mathematical framework with a precision comparable to classical information theory. To address this gap, in this article we introduce a Mathematical Theory of Inferential Communication (MaTIC). MaTIC formalises some assumptions of inferential communication, explores its theoretical consequences, and outlines the practical steps needed to use it in different application scenarios.

Gaussian processes (GPs) are non-parametric Bayesian models that are widely used for diverse prediction tasks. Previous work on adding strong privacy protection to GPs via differential privacy (DP) has been limited to protecting only the privacy of the prediction targets (model outputs) but not the inputs. We break this limitation by introducing GPs with DP protection for both model inputs and outputs. We achieve this by using sparse GP methodology and publishing a private variational approximation on known inducing points. The approximation covariance is adjusted to approximately account for the added uncertainty from DP noise. The approximation can be used to compute arbitrary predictions using standard sparse GP techniques. We propose a method for hyperparameter learning using a private selection protocol applied to validation set log-likelihood. Our experiments demonstrate that, given a sufficient amount of data, the method can produce accurate models under strong privacy protection.

Several queries and scores have recently been proposed to explain individual predictions over ML models. Given the need for flexible, reliable, and easy-to-apply interpretability methods for ML models, we foresee the need for developing declarative languages to naturally specify different explainability queries. We do this in a principled way by rooting such a language in a logic, called FOIL, that allows for expressing many simple but important explainability queries, and might serve as a core for more expressive interpretability languages. We study the computational complexity of FOIL queries over two classes of ML models often deemed to be easily interpretable: decision trees and OBDDs. Since the number of possible inputs for an ML model is exponential in its dimension, the tractability of the FOIL evaluation problem is delicate but can be achieved by either restricting the structure of the models or the fragment of FOIL being evaluated. We also present a prototype implementation of FOIL wrapped in a high-level declarative language and perform experiments showing that such a language can be used in practice.

This paper focuses on the expected difference in borrowers' repayment when there is a change in the lender's credit decisions. Classical estimators overlook the confounding effects, and hence the estimation error can be very large. We therefore propose another approach to constructing the estimators such that the error can be greatly reduced. The proposed estimators are shown to be unbiased, consistent, and robust through a combination of theoretical analysis and numerical testing. Moreover, we compare the power of the classical estimators and the proposed estimators in estimating the causal quantities. The comparison is tested across a wide range of models, including linear regression models, tree-based models, and neural network-based models, under different simulated datasets that exhibit different levels of causality, different degrees of nonlinearity, and different distributional properties. Most importantly, we apply our approaches to a large observational dataset provided by a global technology firm that operates in both the e-commerce and the lending business. We find that the relative reduction in estimation error is substantial if the causal effects are accounted for correctly.

Training machine learning models on sensitive user data has raised increasing privacy concerns in many areas. Federated learning is a popular approach for privacy protection that collects local gradient information instead of real data. One way to achieve a strict privacy guarantee is to apply local differential privacy to federated learning. However, previous works do not give a practical solution due to three issues. First, the noisy data is close to its original value with high probability, increasing the risk of information exposure. Second, a large variance is introduced to the estimated average, causing poor accuracy. Last, the privacy budget explodes due to the high dimensionality of weights in deep learning models. In this paper, we propose a novel design of a local differential privacy mechanism for federated learning to address the abovementioned issues. It is capable of making the data more distinct from its original value and introducing lower variance. Moreover, the proposed mechanism bypasses the curse of dimensionality by splitting and shuffling model updates. A series of empirical evaluations on three commonly used datasets, MNIST, Fashion-MNIST and CIFAR-10, demonstrates that our solution can not only achieve superior deep learning performance but also provide a strong privacy guarantee at the same time.

Graph Neural Networks (GNNs) come in many flavors, but should always be either invariant (permutation of the nodes of the input graph does not affect the output) or equivariant (permutation of the input permutes the output). In this paper, we consider a specific class of invariant and equivariant networks, for which we prove new universality theorems. More precisely, we consider networks with a single hidden layer, obtained by summing channels formed by applying an equivariant linear operator, a pointwise non-linearity and either an invariant or equivariant linear operator. Recently, Maron et al. (2019) showed that by allowing higher-order tensorization inside the network, universal invariant GNNs can be obtained. As a first contribution, we propose an alternative proof of this result, which relies on the Stone-Weierstrass theorem for algebras of real-valued functions. Our main contribution is then an extension of this result to the equivariant case, which appears in many practical applications but has been less studied from a theoretical point of view. The proof relies on a new generalized Stone-Weierstrass theorem for algebras of equivariant functions, which is of independent interest. Finally, unlike many previous settings that consider a fixed number of nodes, our results show that a GNN defined by a single set of parameters can approximate uniformly well a function defined on graphs of varying size.
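The invariant and equivariant properties can be checked on a toy example. The sketch below is a single-channel illustration rather than the higher-order tensorized architecture studied in the paper: the equivariant linear step uses only node degrees and the total edge count, followed by a pointwise `tanh` and a sum over nodes. All names and the weights `a`, `b`, `c` are hypothetical.

```python
import math


def permute_graph(adj, perm):
    """Relabel nodes: new node i is old node perm[i]."""
    n = len(adj)
    return [[adj[perm[i]][perm[j]] for j in range(n)] for i in range(n)]


def equivariant_hidden(adj, a=0.3, b=-0.1, c=0.05):
    """One channel: equivariant linear map (degree and total), then pointwise tanh."""
    degrees = [sum(row) for row in adj]
    total = sum(degrees)
    return [math.tanh(a * d + b * total + c) for d in degrees]


def invariant_output(adj, a=0.3, b=-0.1, c=0.05):
    """Summing the hidden node values gives a permutation-invariant scalar."""
    return sum(equivariant_hidden(adj, a, b, c))


if __name__ == "__main__":
    adj = [
        [0, 1, 1, 0],
        [1, 0, 0, 0],
        [1, 0, 0, 1],
        [0, 0, 1, 0],
    ]
    perm = [2, 0, 3, 1]
    permuted = permute_graph(adj, perm)

    hidden = equivariant_hidden(adj)
    hidden_permuted = equivariant_hidden(permuted)
    # Equivariance: permuting the input permutes the hidden node values.
    print(hidden_permuted == [hidden[p] for p in perm])
    # Invariance: the pooled output is unchanged up to floating-point rounding.
    print(abs(invariant_output(adj) - invariant_output(permuted)) < 1e-12)
```

Because none of the functions depends on the number of nodes, the same three parameters apply to graphs of any size, which mirrors the varying-size setting mentioned in the abstract.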

We introduce a new family of deep neural network models. Instead of specifying a discrete sequence of hidden layers, we parameterize the derivative of the hidden state using a neural network. The output of the network is computed using a black-box differential equation solver. These continuous-depth models have constant memory cost, adapt their evaluation strategy to each input, and can explicitly trade numerical precision for speed. We demonstrate these properties in continuous-depth residual networks and continuous-time latent variable models. We also construct continuous normalizing flows, a generative model that can train by maximum likelihood, without partitioning or ordering the data dimensions. For training, we show how to scalably backpropagate through any ODE solver, without access to its internal operations. This allows end-to-end training of ODEs within larger models.
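The forward pass of such a continuous-depth model can be sketched in a few lines. This sketch assumes a fixed-step fourth-order Runge-Kutta integrator in place of the black-box adaptive solver, and a tiny hand-written `tanh` layer as the derivative network. It does not cover training or the memory-efficient backpropagation the abstract refers to. All names and weights are hypothetical.

```python
import math


def rk4_solve(f, h0, t0, t1, steps=100):
    """Integrate dh/dt = f(h, t) from t0 to t1 with fixed-step RK4."""
    h = list(h0)
    t = t0
    dt = (t1 - t0) / steps
    for _ in range(steps):
        k1 = f(h, t)
        k2 = f([x + 0.5 * dt * k for x, k in zip(h, k1)], t + 0.5 * dt)
        k3 = f([x + 0.5 * dt * k for x, k in zip(h, k2)], t + 0.5 * dt)
        k4 = f([x + dt * k for x, k in zip(h, k3)], t + dt)
        h = [
            x + dt / 6.0 * (a + 2.0 * b + 2.0 * c + d)
            for x, a, b, c, d in zip(h, k1, k2, k3, k4)
        ]
        t += dt
    return h


def make_dynamics(weights, biases):
    """Derivative network: dh/dt = tanh(W h + b), a stand-in for a learned model."""
    def f(h, t):
        return [
            math.tanh(sum(w * x for w, x in zip(row, h)) + b)
            for row, b in zip(weights, biases)
        ]
    return f


if __name__ == "__main__":
    dynamics = make_dynamics(weights=[[0.0, -1.0], [1.0, 0.0]], biases=[0.0, 0.0])
    # The hidden state at t=1 plays the role of the output of a stack of layers.
    print(rk4_solve(dynamics, h0=[1.0, 0.0], t0=0.0, t1=1.0))
```

Changing `steps` trades accuracy for compute without touching the parameters, a crude fixed-step analogue of the precision-for-speed trade-off described above.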