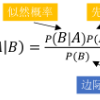

There are now many explainable AI methods for understanding the decisions of a machine learning model. Among these are methods based on counterfactual reasoning, which involve simulating feature changes and observing the impact on the prediction. This article proposes viewing this simulation process as a source of knowledge that can be stored and used later in different ways. The process is illustrated for additive models and, more specifically, for the naive Bayes classifier, whose useful properties for this purpose are shown.
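The idea of storing simulated feature changes can be sketched for a naive Bayes classifier, whose log-odds are additive over features. This is only a minimal illustration under stated assumptions, not the article's implementation: the toy probabilities, the feature names, and the helpers `weight`, `log_odds`, and `simulate_changes` are all hypothetical.

```python
import math

# Hypothetical toy parameters for a two-class naive Bayes classifier with
# categorical features (all numbers are assumed for illustration only).
# cond[feature][value] = (P(value | class 1), P(value | class 0))
prior = (0.4, 0.6)  # (P(class 1), P(class 0))
cond = {
    "contract": {"monthly": (0.7, 0.3), "yearly": (0.3, 0.7)},
    "support": {"yes": (0.2, 0.5), "no": (0.8, 0.5)},
}


def weight(feature, value):
    """Additive contribution of one feature value to the log-odds."""
    p1, p0 = cond[feature][value]
    return math.log(p1 / p0)


def log_odds(x):
    """Log-odds of class 1 versus class 0 for an instance x (feature -> value)."""
    return math.log(prior[0] / prior[1]) + sum(weight(f, v) for f, v in x.items())


def simulate_changes(x):
    """Simulate every single-feature change and store its effect.

    Returns a table mapping (feature, new_value) to the change in log-odds.
    Because the model is additive, each change only involves one weight
    difference and does not require re-scoring the whole instance.
    """
    table = {}
    for feature, current in x.items():
        for value in cond[feature]:
            if value != current:
                table[(feature, value)] = weight(feature, value) - weight(feature, current)
    return table


x = {"contract": "monthly", "support": "no"}
table = simulate_changes(x)
```

The stored `table` can then be queried later, for example to rank single-feature changes by how far they move the prediction, without calling the model again.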

Related content

We present a novel solution procedure for initial boundary value problems. The procedure is based on an action principle, in which coordinate maps are included as dynamical degrees of freedom. This reparametrization invariant action is formulated in an abstract parameter space and an energy density scale associated with the space-time coordinates separates the dynamics of the coordinate maps and of the propagating fields. Treating coordinates as dependent, i.e. dynamical quantities, offers the opportunity to discretize the action while retaining all space-time symmetries and also provides the basis for automatic adaptive mesh refinement (AMR). The presence of unbroken space-time symmetries after discretization also ensures that the associated continuum Noether charges remain exactly conserved. The presence of coordinate maps in addition provides new freedom in the choice of boundary conditions. An explicit numerical example for wave propagation in $1+1$ dimensions is provided, using recently developed regularized summation-by-parts finite difference operators.

We propose a comprehensive framework for policy gradient methods tailored to continuous time reinforcement learning. This is based on the connection between stochastic control problems and randomised problems, enabling applications across various classes of Markovian continuous time control problems, beyond diffusion models, including e.g. regular, impulse and optimal stopping/switching problems. By utilizing change of measure in the control randomisation technique, we derive a new policy gradient representation for these randomised problems, featuring parametrised intensity policies. We further develop actor-critic algorithms specifically designed to address general Markovian stochastic control issues. Our framework is demonstrated through its application to optimal switching problems, with two numerical case studies in the energy sector focusing on real options.

Often the question arises whether $Y$ can be predicted based on $X$ using a certain model. Especially for highly flexible models such as neural networks one may ask whether a seemingly good prediction is actually better than fitting pure noise or whether it has to be attributed to the flexibility of the model. This paper proposes a rigorous permutation test to assess whether the prediction is better than the prediction of pure noise. The test avoids any sample splitting and is based instead on generating new pairings of $(X_i,Y_j)$. It introduces a new formulation of the null hypothesis and rigorous justification for the test, which distinguishes it from previous literature. The theoretical findings are applied both to simulated data and to sensor data of tennis serves in an experimental context. The simulation study underscores how the available information affects the test. It shows that the less informative the predictors, the lower the probability of rejecting the null hypothesis of fitting pure noise and emphasizes that detecting weaker dependence between variables requires a sufficient sample size.
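The general idea of re-pairing $X_i$ with $Y_j$ without sample splitting can be sketched with a generic permutation test. This is not the paper's procedure or its null hypothesis formulation: the simple linear model, the in-sample mean squared error statistic, and the names `in_sample_mse` and `permutation_p_value` are assumptions chosen to keep the sketch self-contained.

```python
import random


def in_sample_mse(x, y):
    """Fit a simple linear regression of y on x and return its in-sample MSE."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((a - mean_x) ** 2 for a in x)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return sum((b - intercept - slope * a) ** 2 for a, b in zip(x, y)) / n


def permutation_p_value(x, y, n_perm=199, seed=0):
    """Compare the fit on the observed pairs with fits on shuffled pairings.

    Each permutation refits the model on (x_i, y_pi(i)), so the flexibility
    of the model is accounted for in the reference distribution.
    """
    rng = random.Random(seed)
    observed = in_sample_mse(x, y)
    y_perm = list(y)
    at_least_as_good = 0
    for _ in range(n_perm):
        rng.shuffle(y_perm)
        if in_sample_mse(x, y_perm) <= observed:
            at_least_as_good += 1
    # The +1 terms count the observed pairing itself as one permutation.
    return (1 + at_least_as_good) / (1 + n_perm)


# Synthetic data with a strong linear dependence (assumed for illustration).
data_rng = random.Random(1)
x = [data_rng.gauss(0, 1) for _ in range(50)]
y = [2 * a + data_rng.gauss(0, 1) for a in x]
p_value = permutation_p_value(x, y)
```

With less informative predictors or a smaller sample, the observed error sits closer to the permuted errors and the p-value grows, which matches the behaviour the abstract describes.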

Scopus and the Web of Science have been the foundation for research in the science of science even though these traditional databases systematically underrepresent certain disciplines and world regions. In response, new inclusive databases, notably OpenAlex, have emerged. While many studies have begun using OpenAlex as a data source, few critically assess its limitations. This study, conducted in collaboration with the OpenAlex team, addresses this gap by comparing OpenAlex to Scopus across a number of dimensions. The analysis concludes that OpenAlex is a superset of Scopus and can be a reliable alternative for some analyses, particularly at the country level. Despite this, issues of metadata accuracy and completeness show that additional research is needed to fully comprehend and address OpenAlex's limitations. Doing so will be necessary to confidently use OpenAlex across a wider set of analyses, including those that are not at all possible with more constrained databases.

Logistic regression is widely used in many areas of knowledge. Several works compare the performance of lasso and maximum likelihood estimation in logistic regression. However, some of these works do not perform simulation studies, and the remaining ones do not consider scenarios in which the ratio of the number of covariates to sample size is high. In this work, we compare the discrimination performance of lasso and maximum likelihood estimation in logistic regression using simulation studies and applications. Variable selection is done both by lasso and, when maximum likelihood estimation is used, by stepwise selection. We consider a wide range of values for the ratio of the number of covariates to sample size. The main conclusion of the work is that lasso has better discrimination performance than maximum likelihood estimation when the ratio of the number of covariates to sample size is high.

Correspondence analysis (CA) is a popular technique to visualize the relationship between two categorical variables. CA uses the data from a two-way contingency table and is affected by the presence of outliers. The supplementary points method is a popular method to handle outliers. Its disadvantage is that the information from entire rows or columns is removed. However, outliers can be caused by cells only. In this paper, a reconstitution algorithm is introduced to cope with such cells. This algorithm can reduce the contribution of cells in CA instead of deleting entire rows or columns. Thus the remaining information in the row and column involved can be used in the analysis. The reconstitution algorithm is compared with two alternative methods for handling outliers, the supplementary points method and MacroPCA. It is shown that the proposed strategy works well.

We consider covariance parameter estimation for Gaussian processes with functional inputs. From an increasing-domain asymptotics perspective, we prove the asymptotic consistency and normality of the maximum likelihood estimator. We extend these theoretical guarantees to encompass scenarios accounting for approximation errors in the inputs, which allows robustness of practical implementations relying on conventional sampling methods or projections onto a functional basis. Loosely speaking, both consistency and normality hold when the approximation error becomes negligible, a condition that is often achieved as the number of samples or basis functions becomes large. These latter asymptotic properties are illustrated through analytical examples, including one that covers the case of non-randomly perturbed grids, as well as several numerical illustrations.

Benchmarks have emerged as the central approach for evaluating Large Language Models (LLMs). The research community often relies on a model's average performance across the test prompts of a benchmark to evaluate the model's performance. This is consistent with the assumption that the test prompts within a benchmark represent a random sample from a real-world distribution of interest. We note that this is generally not the case; instead, we hold that the distribution of interest varies according to the specific use case. We find that (1) the correlation in model performance across test prompts is non-random, (2) accounting for correlations across test prompts can change model rankings on major benchmarks, (3) explanatory factors for these correlations include semantic similarity and common LLM failure points.

Splitting methods are widely used numerical schemes for solving convection-diffusion problems. However, they may lose stability in some situations, particularly when applied to convection-diffusion problems in the presence of an unbounded convective term. In this paper, we propose a new splitting method, called the "Adapted Lie splitting method", which successfully overcomes the observed instability in certain cases. Assuming that the unbounded coefficient belongs to a suitable Lorentz space, we show that the adapted Lie splitting converges with first order under the analytic semigroup framework. Furthermore, we provide numerical experiments to illustrate our newly proposed splitting approach.

Replication studies are increasingly conducted to assess the credibility of scientific findings. Most of these replication attempts target studies with a superiority design, but there is a lack of methodology regarding the analysis of replication studies with alternative types of designs, such as equivalence. In order to fill this gap, we propose two approaches, the two-trials rule and the sceptical TOST procedure, adapted from methods used in superiority settings. Both methods have the same overall Type-I error rate, but the sceptical TOST procedure allows replication success even for non-significant original or replication studies. This leads to a larger project power and other differences in relevant operating characteristics. Both methods can be used for sample size calculation of the replication study, based on the results from the original one. The two methods are applied to data from the Reproducibility Project: Cancer Biology.
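A two-trials rule for equivalence can be sketched by requiring a significant TOST result in both the original and the replication study. This is a minimal sketch under assumptions not stated in the abstract: normally distributed effect estimates with known standard errors, a symmetric equivalence margin, and a one-sided level of 0.025 per study. The function names are hypothetical, and the sceptical TOST procedure is not shown.

```python
from statistics import NormalDist


def tost_p_value(estimate, std_error, margin):
    """Two one-sided tests (TOST) for equivalence within (-margin, margin).

    Assumes the estimate is approximately normal with known standard error.
    The TOST p-value is the larger of the two one-sided p-values.
    """
    norm = NormalDist()
    # H0: true effect <= -margin, rejected for large (estimate + margin) / se.
    p_lower = 1.0 - norm.cdf((estimate + margin) / std_error)
    # H0: true effect >= +margin, rejected for small (estimate - margin) / se.
    p_upper = norm.cdf((estimate - margin) / std_error)
    return max(p_lower, p_upper)


def two_trials_rule(original, replication, margin, alpha=0.025):
    """Declare replication success only if both studies show equivalence.

    `original` and `replication` are (estimate, std_error) pairs; alpha is
    an assumed one-sided significance level applied to each study.
    """
    return all(
        tost_p_value(estimate, std_error, margin) <= alpha
        for estimate, std_error in (original, replication)
    )


# Hypothetical effect estimates, standard errors, and margin.
success = two_trials_rule((0.0, 0.1), (0.05, 0.1), margin=0.5)
failure = two_trials_rule((0.0, 0.1), (0.45, 0.1), margin=0.5)
```

In the second call the replication estimate lies close to the margin, so its TOST is not significant and the rule does not declare success, even though the original study shows clear equivalence.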