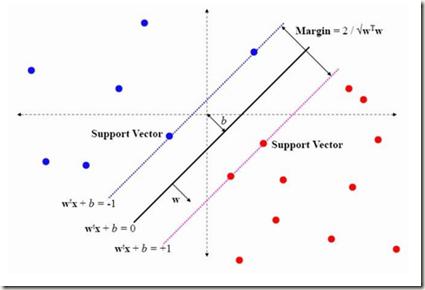

This paper explores how artificial neural networks can be used to find the ontologies most relevant to scientific texts. The basic idea of the presented approach is to select a representative paragraph from a source text file, embed it in a vector space using a pre-trained, fine-tuned transformer, and classify the embedded vector according to its relevance to a target ontology. We considered several classifiers for categorizing the transformer output, in particular random forest, support vector machine, multilayer perceptron, k-nearest neighbors, and Gaussian process classifiers. Their suitability was evaluated in a use case with ontologies and scientific texts concerning catalysis research. In this task, the random forest classifier performed worst and the support vector machine classifier performed best.
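
The classification step can be illustrated with a minimal sketch of one of the listed classifiers, k-nearest neighbors, applied to already-embedded paragraphs. The 3-dimensional vectors, the labels `ontology_A` and `ontology_B`, and the use of cosine similarity are all assumptions made for illustration; the abstract does not specify the transformer, its output dimension, or the distance measure.

```python
import math
from collections import Counter


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def knn_predict(train_vectors, train_labels, query, k=3):
    """Majority vote over the k training vectors most similar to the query."""
    ranked = sorted(
        range(len(train_vectors)),
        key=lambda i: cosine_similarity(train_vectors[i], query),
        reverse=True,
    )
    votes = Counter(train_labels[i] for i in ranked[:k])
    return votes.most_common(1)[0][0]


# Hypothetical toy "embeddings"; real transformer outputs have far more dimensions.
train_vectors = [
    [0.9, 0.1, 0.0],
    [0.8, 0.2, 0.1],
    [0.7, 0.3, 0.0],
    [0.1, 0.9, 0.2],
    [0.0, 0.8, 0.3],
    [0.2, 0.7, 0.1],
]
train_labels = ["ontology_A"] * 3 + ["ontology_B"] * 3

query = [0.85, 0.15, 0.05]  # embedded representative paragraph (made up)
predicted = knn_predict(train_vectors, train_labels, query, k=3)
print(predicted)
```

In practice the vectors would come from the fine-tuned transformer, and any of the other classifiers named in the abstract could replace `knn_predict` behind the same interface.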

Related content

Large language models (LLMs) have complicated internal dynamics, but induce representations of words and phrases whose geometry we can study. Human language processing is also opaque, but neural response measurements can provide (noisy) recordings of activation during listening or reading, from which we can extract similar representations of words and phrases. Here we study the extent to which the geometries induced by these representations share similarities in the context of brain decoding. We find that the larger neural language models get, the more their representations are structurally similar to neural response measurements from brain imaging. Code is available at //github.com/coastalcph/brainlm.

Counterfactual fairness requires that a person would have been classified in the same way by an AI or other algorithmic system if they had a different protected class, such as a different race or gender. This is an intuitive standard, as reflected in the U.S. legal system, but its use is limited because counterfactuals cannot be directly observed in real-world data. On the other hand, group fairness metrics (e.g., demographic parity or equalized odds) are less intuitive but more readily observed. In this paper, we use $\textit{causal context}$ to bridge the gaps between counterfactual fairness, robust prediction, and group fairness. First, we motivate counterfactual fairness by showing that there is not necessarily a fundamental trade-off between fairness and accuracy because, under plausible conditions, the counterfactually fair predictor is in fact accuracy-optimal in an unbiased target distribution. Second, we develop a correspondence between the causal graph of the data-generating process and which, if any, group fairness metrics are equivalent to counterfactual fairness. Third, we show that in three common fairness contexts (measurement error, selection on label, and selection on predictors), counterfactual fairness is equivalent to demographic parity, equalized odds, and calibration, respectively. Counterfactual fairness can sometimes be tested by measuring relatively simple group fairness metrics.
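
To make the "readily observed" group metrics concrete, the following sketch computes a demographic parity gap and equalized odds gaps for a binary classifier and a binary protected attribute. The data are made up, and the function names are illustrative rather than taken from the paper.

```python
def rate(values):
    """Fraction of positive (1) entries; NaN for an empty list."""
    return sum(values) / len(values) if values else float("nan")


def demographic_parity_gap(y_pred, group):
    """|P(Yhat=1 | A=0) - P(Yhat=1 | A=1)| for a binary attribute A."""
    g0 = [p for p, a in zip(y_pred, group) if a == 0]
    g1 = [p for p, a in zip(y_pred, group) if a == 1]
    return abs(rate(g0) - rate(g1))


def equalized_odds_gaps(y_true, y_pred, group):
    """Between-group gaps in true positive rate and false positive rate."""
    gaps = {}
    for label, name in ((1, "tpr_gap"), (0, "fpr_gap")):
        g0 = [p for t, p, a in zip(y_true, y_pred, group) if a == 0 and t == label]
        g1 = [p for t, p, a in zip(y_true, y_pred, group) if a == 1 and t == label]
        gaps[name] = abs(rate(g0) - rate(g1))
    return gaps


# Made-up labels, predictions, and group membership for eight individuals.
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 0, 1, 0, 1, 1, 0, 0]
group = [0, 0, 0, 0, 1, 1, 1, 1]

dp_gap = demographic_parity_gap(y_pred, group)
eo_gaps = equalized_odds_gaps(y_true, y_pred, group)
print(dp_gap)   # 0.0: both groups receive positive predictions at the same rate
print(eo_gaps)  # {'tpr_gap': 0.5, 'fpr_gap': 0.5}: error rates still differ
```

In this toy data demographic parity holds while equalized odds does not, which shows that the two metrics can disagree on the same predictions and so the choice between them matters.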

In recent years, concept-based approaches have emerged as some of the most promising explainability methods to help us interpret the decisions of Artificial Neural Networks (ANNs). These methods seek to discover intelligible visual 'concepts' buried within the complex patterns of ANN activations in two key steps: (1) concept extraction followed by (2) importance estimation. While these two steps are shared across methods, they all differ in their specific implementations. Here, we introduce a unifying theoretical framework that comprehensively defines and clarifies these two steps. This framework offers several advantages as it allows us: (i) to propose new evaluation metrics for comparing different concept extraction approaches; (ii) to leverage modern attribution methods and evaluation metrics to extend and systematically evaluate state-of-the-art concept-based approaches and importance estimation techniques; (iii) to derive theoretical guarantees regarding the optimality of such methods. We further leverage our framework to try to tackle a crucial question in explainability: how to efficiently identify clusters of data points that are classified based on a similar shared strategy. To illustrate these findings and to highlight the main strategies of a model, we introduce a visual representation called the strategic cluster graph. Finally, we present //serre-lab.github.io/Lens, a dedicated website that offers a complete compilation of these visualizations for all classes of the ImageNet dataset.

This paper presents an algorithm for the preprocessing of observation data aimed at improving the robustness of orbit determination tools. Two objectives are fulfilled: obtain a refined solution to the initial orbit determination problem and detect possible outliers in the processed measurements. The uncertainty on the initial estimate is propagated forward in time and progressively reduced by exploiting sensor data available in said propagation window. Differential algebra techniques and a novel automatic domain splitting algorithm for second-order Taylor expansions are used to efficiently propagate uncertainties over time. A multifidelity approach is employed to minimize the computational effort while retaining the accuracy of the propagated estimate. At each observation epoch, a polynomial map is obtained by projecting the propagated states onto the observable space. Domains that do not overlap with the actual measurement are pruned, thus reducing the uncertainty to be further propagated. Measurement outliers are also detected in this step. The refined estimate and retained observations are then used to improve the robustness of batch orbit determination tools. The effectiveness of the algorithm is demonstrated for a geostationary transfer orbit object using synthetic and real observation data from the TAROT network.

We study the problem of communication-efficient distributed vector mean estimation, a commonly used subroutine in distributed optimization and Federated Learning (FL). Rand-$k$ sparsification is a commonly used technique to reduce communication cost, where each client sends $k < d$ of its coordinates to the server. However, Rand-$k$ is agnostic to any correlations that might exist between clients in practical scenarios. The recently proposed Rand-$k$-Spatial estimator leverages the cross-client correlation information at the server to improve Rand-$k$'s performance. Yet, the performance of Rand-$k$-Spatial is suboptimal. We propose the Rand-Proj-Spatial estimator with a more flexible encoding-decoding procedure, which generalizes the encoding of Rand-$k$ by projecting the client vectors to a random $k$-dimensional subspace. We utilize Subsampled Randomized Hadamard Transform (SRHT) as the projection matrix and show that Rand-Proj-Spatial with SRHT outperforms Rand-$k$-Spatial, using the correlation information more efficiently. Furthermore, we propose an approach to incorporate varying degrees of correlation and suggest a practical variant of Rand-Proj-Spatial when the correlation information is not available to the server. Experiments on real-world distributed optimization tasks showcase the superior performance of Rand-Proj-Spatial compared to Rand-$k$-Spatial and other more sophisticated sparsification techniques.
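
As a point of reference, here is a minimal sketch of the baseline Rand-$k$ scheme only, not the Rand-$k$-Spatial or Rand-Proj-Spatial estimators proposed in the paper. Each client sends $k$ uniformly chosen coordinates, and the server rescales by $d/k$ so that the estimate of the mean is unbiased. The client vectors and function names are illustrative assumptions.

```python
import random


def rand_k_encode(vector, k, rng):
    """Client side: keep k coordinates chosen uniformly without replacement."""
    indices = rng.sample(range(len(vector)), k)
    return {i: vector[i] for i in indices}


def rand_k_decode_mean(messages, d, k):
    """Server side: rescale each received coordinate by d/k and average.

    A coordinate is kept with probability k/d, so scaling by d/k makes the
    estimate unbiased for the true mean vector.
    """
    n = len(messages)
    estimate = [0.0] * d
    for message in messages:
        for i, value in message.items():
            estimate[i] += (d / k) * value / n
    return estimate


# Made-up client vectors (n = 3 clients, d = 4 coordinates).
clients = [
    [1.0, 2.0, 3.0, 4.0],
    [2.0, 0.0, 1.0, 4.0],
    [3.0, 4.0, 2.0, 4.0],
]
d, k = 4, 2
rng = random.Random(0)

messages = [rand_k_encode(x, k, rng) for x in clients]
estimate = rand_k_decode_mean(messages, d, k)
true_mean = [sum(col) / len(clients) for col in zip(*clients)]
print(estimate, true_mean)
```

A single estimate can be far from `true_mean` because each client reveals only half of its coordinates. The estimator is unbiased but has high variance, which is the cost that correlation-aware decoders aim to reduce.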

Thematic analysis and other variants of inductive coding are widely used qualitative analytic methods within empirical legal studies (ELS). We propose a novel framework facilitating effective collaboration of a legal expert with a large language model (LLM) for generating initial codes (phase 2 of thematic analysis), searching for themes (phase 3), and classifying the data in terms of the themes (to kick-start phase 4). We employed the framework for an analysis of a dataset (n=785) of facts descriptions from criminal court opinions regarding thefts. The goal of the analysis was to discover classes of typical thefts. Our results show that the LLM, namely OpenAI's GPT-4, generated reasonable initial codes, and it was capable of improving the quality of the codes based on expert feedback. They also suggest that the model performed well in zero-shot classification of facts descriptions in terms of the themes. Finally, the themes autonomously discovered by the LLM appear to map fairly well to the themes arrived at by legal experts. These findings can be leveraged by legal researchers to guide their decisions in integrating LLMs into their thematic analyses, as well as other inductive coding projects.

This paper proposes a generalized Firefly Algorithm (FA) to solve an optimization framework having objective function and constraints as multivariate functions of independent optimization variables. Four representative examples of how the proposed generalized FA can be adopted to solve downlink beamforming problems are shown for classic transmit beamforming, cognitive beamforming, reconfigurable-intelligent-surfaces-aided (RIS-aided) transmit beamforming, and RIS-aided wireless power transfer (WPT). Complexity analyses indicate that in large-antenna regimes the proposed FA approaches require less computational complexity than their corresponding interior point methods (IPMs) do, yet demand a higher complexity than the iterative and the successive convex approximation (SCA) approaches do. Simulation results reveal that the proposed FA attains the same global optimal solution as that of the IPM for an optimization problem in cognitive beamforming. On the other hand, the proposed FA approaches outperform the iterative, IPM, and SCA approaches in terms of obtaining better solutions for optimization problems in classic transmit beamforming, RIS-aided transmit beamforming, and RIS-aided WPT, respectively.
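
For readers unfamiliar with the underlying metaheuristic, the sketch below shows the standard firefly algorithm on an unconstrained toy problem. It is not the generalized, constraint-handling variant the paper proposes. The parameter values, the box bounds, and the `sphere` test function are assumptions chosen only for illustration.

```python
import math
import random


def firefly_minimize(objective, dim, bounds, n_fireflies=15, n_iters=60,
                     beta0=1.0, gamma=0.5, alpha=0.3, seed=0):
    """Standard firefly algorithm for box-bounded minimization."""
    rng = random.Random(seed)
    lo, hi = bounds
    swarm = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(n_fireflies)]
    costs = [objective(x) for x in swarm]
    best = min(range(n_fireflies), key=lambda i: costs[i])
    best_x, best_cost = list(swarm[best]), costs[best]
    history = [best_cost]  # best cost found so far, one entry per iteration

    for _ in range(n_iters):
        for i in range(n_fireflies):
            for j in range(n_fireflies):
                if costs[j] < costs[i]:  # firefly j is "brighter" than i
                    r2 = sum((a - b) ** 2 for a, b in zip(swarm[i], swarm[j]))
                    beta = beta0 * math.exp(-gamma * r2)  # attractiveness decays with distance
                    # Move i toward j with a small random perturbation, clipped to the box.
                    swarm[i] = [
                        min(hi, max(lo, a + beta * (b - a) + alpha * (rng.random() - 0.5)))
                        for a, b in zip(swarm[i], swarm[j])
                    ]
                    costs[i] = objective(swarm[i])
                    if costs[i] < best_cost:
                        best_x, best_cost = list(swarm[i]), costs[i]
        alpha *= 0.97  # gradually reduce the random step size
        history.append(best_cost)
    return best_x, best_cost, history


def sphere(x):
    """Toy objective with its minimum of 0 at the origin."""
    return sum(v * v for v in x)


best_x, best_cost, history = firefly_minimize(sphere, dim=2, bounds=(-5.0, 5.0))
print(best_x, best_cost)
```

The inner double loop over firefly pairs costs on the order of `n_fireflies ** 2` objective evaluations per iteration. That pairwise cost is part of what a complexity comparison of FA against IPM or SCA has to weigh.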

This paper proposes two nonlinear dynamics to solve a constrained distributed optimization problem for resource allocation over a multi-agent network. In this setup, the coupling constraint refers to the resource-demand balance, which is preserved at all times. The proposed solutions can address various model nonlinearities, for example, due to quantization and/or saturation. Further, they make it possible to reach faster convergence or to robustify the solution against impulsive noise or uncertainties. We prove convergence over weakly connected networks using convex analysis and Lyapunov theory. Our findings show that convergence can be reached for general sign-preserving odd nonlinearity. We further propose delay-tolerant mechanisms to handle general bounded heterogeneous time-varying delays over the communication network of agents while preserving all-time feasibility. This work finds application in CPU scheduling and coverage control, among others. This paper advances the state of the art by addressing (i) possible nonlinearity on the agents/links, while handling (ii) resource-demand feasibility at all times, (iii) uniform connectivity instead of all-time connectivity, and (iv) possible heterogeneous and time-varying delays. To the best of our knowledge, no existing work addresses contributions (i)-(iv) altogether. Simulations and comparative analysis are provided to corroborate our contributions.
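
The all-time feasibility property can be illustrated with a simplified discrete-time sketch. It is an assumption-laden stand-in rather than the paper's dynamics: it uses a fixed undirected ring of four agents, quadratic local costs, no delays, and `tanh` as one example of a sign-preserving odd (saturating) nonlinearity. Each agent moves against the nonlinearly mapped difference between its own marginal cost and those of its neighbors. Because the nonlinearity is odd and the links are symmetric, every exchange cancels pairwise and the total allocated resource never changes.

```python
import math


def total_cost(x, a, c):
    """Sum of the agents' quadratic costs a_i * (x_i - c_i)^2."""
    return sum(ai * (xi - ci) ** 2 for xi, ai, ci in zip(x, a, c))


def gradients(x, a, c):
    """Marginal cost of each agent at its current allocation."""
    return [2.0 * ai * (xi - ci) for xi, ai, ci in zip(x, a, c)]


def allocate(x0, a, c, neighbors, step=0.05, n_steps=2000):
    """Euler-discretized gradient-difference dynamics with a tanh nonlinearity."""
    x = list(x0)
    for _ in range(n_steps):
        g = gradients(x, a, c)
        x = [
            x[i] - step * sum(math.tanh(g[i] - g[j]) for j in neighbors[i])
            for i in range(len(x))
        ]
    return x


# Hypothetical cost parameters and an undirected ring 0-1-2-3-0.
a = [1.0, 2.0, 0.5, 1.5]
c = [1.0, 3.0, -1.0, 2.0]
neighbors = [[1, 3], [0, 2], [1, 3], [2, 0]]
x0 = [8.0, 0.0, 0.0, 0.0]  # feasible start: all 8 units of resource at agent 0

x = allocate(x0, a, c, neighbors)
print(x, sum(x))
```

At convergence the marginal costs of all agents coincide, which is the optimality condition for a sum-constrained allocation, while `sum(x)` stays at its initial value up to floating-point rounding.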

This paper studies a diffusion-based framework to address the low-light image enhancement problem. To harness the capabilities of diffusion models, we delve into this intricate process and advocate for the regularization of its inherent ODE-trajectory. To be specific, inspired by the recent research that low curvature ODE-trajectory results in a stable and effective diffusion process, we formulate a curvature regularization term anchored in the intrinsic non-local structures of image data, i.e., global structure-aware regularization, which gradually facilitates the preservation of complicated details and the augmentation of contrast during the diffusion process. This incorporation mitigates the adverse effects of noise and artifacts resulting from the diffusion process, leading to a more precise and flexible enhancement. To additionally promote learning in challenging regions, we introduce an uncertainty-guided regularization technique, which wisely relaxes constraints on the most extreme regions of the image. Experimental evaluations reveal that the proposed diffusion-based framework, complemented by rank-informed regularization, attains distinguished performance in low-light enhancement. The outcomes indicate substantial advancements in image quality, noise suppression, and contrast amplification in comparison with state-of-the-art methods. We believe this innovative approach will stimulate further exploration and advancement in low-light image processing, with potential implications for other applications of diffusion models. The code is publicly available at //github.com/jinnh/GSAD.

Recent contrastive representation learning methods rely on estimating mutual information (MI) between multiple views of an underlying context. E.g., we can derive multiple views of a given image by applying data augmentation, or we can split a sequence into views comprising the past and future of some step in the sequence. Contrastive lower bounds on MI are easy to optimize, but have a strong underestimation bias when estimating large amounts of MI. We propose decomposing the full MI estimation problem into a sum of smaller estimation problems by splitting one of the views into progressively more informed subviews and by applying the chain rule on MI between the decomposed views. This expression contains a sum of unconditional and conditional MI terms, each measuring modest chunks of the total MI, which facilitates approximation via contrastive bounds. To maximize the sum, we formulate a contrastive lower bound on the conditional MI which can be approximated efficiently. We refer to our general approach as Decomposed Estimation of Mutual Information (DEMI). We show that DEMI can capture a larger amount of MI than standard non-decomposed contrastive bounds in a synthetic setting, and learns better representations in a vision domain and for dialogue generation.
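
The decomposition can be written out for the simplest case of a single split. The notation here is an assumption made for illustration: $x$ and $y$ are the two views, $x'$ is a less informed subview obtained as a deterministic function of $x$, and $K$ is the number of samples (one positive plus negatives) used by an InfoNCE-style contrastive bound.

```latex
\begin{align}
I(x; y) &= I(x, x'; y)
  && \text{since } x' \text{ is a function of } x \\
        &= I(x'; y) + I(x; y \mid x')
  && \text{chain rule for mutual information} \\
I_{\mathrm{NCE}} &\le \log K
  && \text{ceiling of a contrastive bound with } K \text{ samples}
\end{align}
```

Because a single contrastive estimate cannot exceed $\log K$, estimating $I(x; y)$ directly saturates when the true value is large. Bounding the two right-hand terms separately lets each estimator target a smaller quantity, so their sum can reach up to $2 \log K$.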