We propose TrendSegment, a methodology for detecting multiple change-points corresponding to linear trend changes in one-dimensional data. A core ingredient of TrendSegment is a new Tail-Greedy Unbalanced Wavelet transform: a conditionally orthonormal, bottom-up transformation of the data through an adaptively constructed unbalanced wavelet basis, which results in a sparse representation of the data. Due to its bottom-up nature, this multiscale decomposition focuses on local features in its early stages and on global features later, which enables the detection of both long and short linear trend segments at once. To reduce the computational complexity, the proposed method merges multiple regions in a single pass over the data. We show the consistency of the estimated number and locations of change-points. The practicality of our approach is demonstrated through simulations and two real data examples, involving Iceland temperature data and the sea ice extent of the Arctic and the Antarctic. Our methodology is implemented in the R package trendsegmentR, available from CRAN.
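The TGUW transform itself is more involved than an abstract can convey. As a rough illustration of the bottom-up idea only, the sketch below starts from short segments and greedily merges the adjacent pair whose merge increases the residual sum of squares of least-squares line fits the least. It is a simplified swap-in, not TGUW: it merges one pair per iteration rather than many regions per pass, uses no wavelet coefficients, and the `threshold` and `init_len` parameters are arbitrary assumptions.

```python
import numpy as np

def linear_rss(t, y):
    """Residual sum of squares of a least-squares line fitted to (t, y)."""
    if len(t) < 3:
        return 0.0  # a line fits one or two points exactly
    A = np.column_stack([np.ones_like(t), t])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    r = y - A @ coef
    return float(r @ r)

def bottom_up_trend_segments(y, threshold, init_len=2):
    """Greedy bottom-up merging of adjacent linear segments (NOT the TGUW transform).

    Repeatedly merges the adjacent pair whose merge increases the total RSS
    the least, and stops once the cheapest merge costs more than `threshold`.
    Returns the start indices of all segments except the first (change-points).
    """
    n = len(y)
    t = np.arange(n, dtype=float)
    bounds = list(range(0, n, init_len)) + [n]  # segment i is [bounds[i], bounds[i+1])
    while len(bounds) > 2:
        costs = []
        for i in range(len(bounds) - 2):
            a, b, c = bounds[i], bounds[i + 1], bounds[i + 2]
            merged = linear_rss(t[a:c], y[a:c])
            split = linear_rss(t[a:b], y[a:b]) + linear_rss(t[b:c], y[b:c])
            costs.append(merged - split)
        i = int(np.argmin(costs))
        if costs[i] > threshold:
            break
        del bounds[i + 1]  # merge segments i and i + 1
    return bounds[1:-1]
```

On a noise-free signal that rises linearly and then falls linearly from index 50, merges within each linear piece cost essentially zero, so every boundary except the kink at index 50 is merged away.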

Related content

Proximity detection determines whether an IoT receiver is within a certain distance of a signal transmitter. Owing to its low cost and popularity, Bluetooth Low Energy (BLE) has been used to detect proximity based on the received signal strength indicator (RSSI). Because RSSI can be markedly influenced by device carriage states, previous works have combined RSSI with inertial measurement unit (IMU) data using deep learning. However, they have not sufficiently accounted for the impact of multipath. Furthermore, because of the special setup, the IMU data collected during training may be biased, which hampers the system's robustness and generalizability; this issue has not been studied before. We propose PRID, an IMU-assisted BLE proximity detection approach that is robust against RSSI fluctuation and IMU data bias. PRID converts RSSI readings into histograms to extract multipath features and uses carriage-state regularization to mitigate overfitting caused by IMU data bias. We further propose PRID-lite, based on a binarized neural network, to substantially cut memory requirements for resource-constrained devices. We have conducted extensive experiments under different multipath environments and data bias levels, as well as on a crowdsourced dataset. Our results show that PRID reduces false detections by over 50% compared with existing approaches. PRID-lite further reduces the PRID model size by over 90% and extends battery life by 60%, at a minor cost in accuracy (7%).
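A minimal sketch of turning a window of RSSI readings into a histogram feature vector. The -100 to -30 dBm range and the 14 bins of 5 dB are our assumptions; the abstract gives no parameters. The rationale, as we read it, is that multipath fading changes the spread and shape of the RSSI distribution, which a histogram preserves and a single mean discards.

```python
import numpy as np

def rssi_histogram(rssi_dbm, lo=-100.0, hi=-30.0, n_bins=14):
    """Normalized histogram of a window of RSSI readings (dBm).

    Readings outside [lo, hi] are clipped into the edge bins so every sample
    is counted. The range and bin count are illustrative assumptions.
    """
    clipped = np.clip(np.asarray(rssi_dbm, dtype=float), lo, hi)
    counts, _ = np.histogram(clipped, bins=n_bins, range=(lo, hi))
    return counts / counts.sum()  # bin frequencies summing to 1
```

For example, `rssi_histogram([-58, -57, -58, -62, -90])` puts 0.6 of the mass in the [-60, -55) bin and 0.2 each in the [-65, -60) and [-90, -85) bins; such a vector could then be fed to a classifier alongside IMU features.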

This paper formulates a general cross-validation framework for signal denoising. The framework is then applied to nonparametric regression methods such as Trend Filtering and Dyadic CART. The resulting cross-validated versions are shown to attain nearly the same rates of convergence as are known for their optimally tuned analogues. No theoretical analyses of cross-validated versions of Trend Filtering or Dyadic CART existed previously. To illustrate the generality of the framework, we also propose and study cross-validated versions of two fundamental estimators: the lasso for high-dimensional linear regression and singular value thresholding for matrix estimation. Our general framework is inspired by the ideas in Chatterjee and Jafarov (2015) and is potentially applicable to a wide range of estimation methods that use tuning parameters.
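The cross-validation idea can be illustrated on a toy denoiser. This sketch is our own simplification, not the paper's scheme: indices are split at random into K folds, held-out entries are replaced by linear interpolation of the training entries, the denoiser is run on the filled-in signal, and the squared error is measured only at held-out positions. A centered moving average with half-width `w` is a hypothetical stand-in for Trend Filtering or Dyadic CART, and `w` plays the role of the tuning parameter.

```python
import numpy as np

def moving_average(y, w):
    """Centered moving average with half-width w (w = 0 returns y unchanged)."""
    n = len(y)
    out = np.empty(n)
    for i in range(n):
        lo, hi = max(0, i - w), min(n, i + w + 1)
        out[i] = y[lo:hi].mean()
    return out

def cv_error(y, w, K=2, seed=0):
    """K-fold cross-validation error of the denoiser moving_average(., w)."""
    rng = np.random.default_rng(seed)
    folds = rng.integers(0, K, size=len(y))
    idx = np.arange(len(y))
    err = 0.0
    for k in range(K):
        test = folds == k
        train = ~test
        # Fill held-out entries from training entries only (linear interpolation),
        # so the fit at held-out positions never sees the held-out values.
        y_fill = y.astype(float).copy()
        y_fill[test] = np.interp(idx[test], idx[train], y[train])
        fit = moving_average(y_fill, w)
        err += np.sum((y[test] - fit[test]) ** 2)
    return err / len(y)

def cv_select(y, widths, K=2, seed=0):
    """Return the half-width with the smallest cross-validation error."""
    return min(widths, key=lambda w: cv_error(y, w, K, seed))
```

Using the same `seed` for every candidate keeps the folds fixed, so the candidates are compared on identical splits. On pure noise, no smoothing (`w = 0`) tends to score worse than moderate smoothing, because the interpolation error adds to the noise at held-out points.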

Images and videos usually contain main subjects: the objects that the photographer wants to highlight. Human viewers can easily identify them, but algorithms often confuse them with other objects. Detecting the main subjects is an important technique for helping machines understand the content of images and videos. We present a new dataset with the goal of training models to understand the layout of the objects and the context of the image, and then to find the main subjects among them. This is achieved in three ways. By gathering images from movie shots created by directors with professional shooting skills, we collect a dataset with strong diversity; specifically, it contains 107,700 images from 21,540 movie shots. We label them with bounding boxes for two classes: subject and non-subject foreground object. We present a detailed analysis of the dataset and compare the task with saliency detection and object detection. ImageSubject is the first dataset that attempts to localize the subject in an image that the photographer wants to highlight. Moreover, we find that the transformer-based detection model achieves the best results among popular model architectures. Finally, we discuss potential applications and conclude with the importance of the dataset.

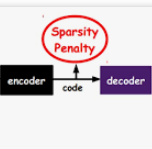

Deep learning methods can classify various kinds of unstructured input data, such as images, language, and voice. As the task of classifying anomalies becomes more important in the real world, various deep learning methods exist for classifying data collected in the real world. The representative approaches are extracting and learning the main features by transfer from pre-trained models, and training an autoencoder-based structure only on normal data and classifying inputs as abnormal through a threshold value. However, if the dataset is imbalanced, even state-of-the-art models do not achieve good performance. This can be addressed by augmenting the normal and abnormal features in imbalanced data into strongly distinguishable features. We use the features of the autoencoder to train latent vectors from low to high dimensionality, so that normal and abnormal data are learned as strongly distinguishable features among the features of the imbalanced data. We propose a latent vector expansion autoencoder model that improves classification performance on imbalanced data. The proposed method improves performance over the basic autoencoder on an imbalanced anomaly dataset.
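The autoencoder-plus-threshold baseline mentioned above can be sketched in a few lines. Assumptions: PCA stands in for a trained autoencoder (a linear autoencoder trained with squared error spans the same subspace as PCA), the anomaly score is the squared reconstruction error, and the threshold is the 99th percentile of scores on normal training data. This is only the baseline, not the proposed latent vector expansion model; class and function names are ours.

```python
import numpy as np

class LinearAutoencoder:
    """PCA used as a linear autoencoder: encode = project onto the top-k directions."""

    def __init__(self, k):
        self.k = k

    def fit(self, X):
        self.mean_ = X.mean(axis=0)
        _, _, Vt = np.linalg.svd(X - self.mean_, full_matrices=False)
        self.W_ = Vt[: self.k].T  # (d, k) tied encoder/decoder weights
        return self

    def reconstruct(self, X):
        Z = (X - self.mean_) @ self.W_     # latent codes
        return Z @ self.W_.T + self.mean_  # decoded inputs

    def score(self, X):
        """Squared reconstruction error per row (higher = more anomalous)."""
        return np.sum((X - self.reconstruct(X)) ** 2, axis=1)

def fit_threshold(model, X_normal, q=0.99):
    """Threshold = q-quantile of reconstruction errors on normal data."""
    return np.quantile(model.score(X_normal), q)

def is_anomaly(model, X, threshold):
    return model.score(X) > threshold
```

Because the model only ever sees normal data, anything far from the normal subspace reconstructs poorly; with few or no anomalies in training, this is the regime where the abstract reports the baseline struggles on imbalanced data.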

In this paper we discuss a reduced basis method for linear evolution PDEs based on the Laplace transform. The main advantage of this approach is that, unlike time-stepping methods such as Runge-Kutta integrators, the Laplace transform allows one to compute the solution directly at a given instant, by approximating the contour integral associated with the inverse Laplace transform with a suitable quadrature formula. In terms of the reduced basis methodology, this yields a significant improvement in the reduction phase (for example, one based on the classical proper orthogonal decomposition, POD), since the number of vectors to which the decomposition applies is drastically reduced: it no longer contains all the intermediate solutions generated along an integration grid by a time-stepping method. We show the effectiveness of the method on some illustrative parabolic PDEs arising from finance and also provide some evidence that the proposed method, when applied to a simple advection equation, does not suffer from the slow decay of singular values that affects methods based on time integration of the Cauchy problem arising from space discretization.
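To make "computing the solution directly at a given instant" concrete, here is a minimal sketch of one well-known quadrature for the inverse Laplace transform, the fixed Talbot rule of Abate and Valkó (our choice; the abstract only says "a suitable quadrature formula"). It is applied to a small linear system $u' = Au$, whose transform is $U(s) = (sI - A)^{-1}u_0$. Each quadrature node costs one linear solve and no time stepping is involved; in a reduced basis setting, the solutions at the nodes would be the snapshots passed to POD. The function names and the default `M = 32` are our assumptions.

```python
import numpy as np

def talbot_inverse(F, t, M=32):
    """Fixed Talbot inversion: approximate f(t) from its Laplace transform F(s).

    Trapezoidal rule with M nodes on the contour s(theta) = r*theta*(cot(theta) + i),
    r = 2M / (5t). F may return a scalar or a NumPy vector.
    """
    r = 2.0 * M / (5.0 * t)
    total = 0.5 * np.real(F(r + 0j)) * np.exp(r * t)  # theta = 0 endpoint, s = r
    for k in range(1, M):
        theta = k * np.pi / M
        cot = 1.0 / np.tan(theta)
        s = r * theta * (cot + 1j)
        sigma = theta + (theta * cot - 1.0) * cot  # from s'(theta), scaled
        total = total + np.real(np.exp(t * s) * F(s) * (1.0 + 1j * sigma))
    return (r / M) * total

def laplace_solution(A, u0):
    """Laplace transform of the solution of u' = A u, u(0) = u0: s -> (sI - A)^{-1} u0."""
    I = np.eye(A.shape[0])
    return lambda s: np.linalg.solve(s * I - A, u0.astype(complex))
```

For `A = np.diag([-1.0, -2.0])` and `u0 = np.array([1.0, 1.0])`, `talbot_inverse(laplace_solution(A, u0), 1.0)` should agree with the exact solution $[e^{-1}, e^{-2}]$ to many digits. The factor $e^{rt} = e^{2M/5}$ amplifies rounding errors, which is why `M` cannot be raised indefinitely in double precision.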

Modelling and forecasting homogeneous age-specific mortality rates of multiple countries could lead to improvements in long-term forecasting. Data fed into joint models are often grouped according to nominal attributes, such as geographic regions, ethnic groups, and socioeconomic status, which may still contain heterogeneity and deteriorate the forecast results. To address this issue, our paper proposes a novel clustering technique, based on functional panel data modelling, that pursues homogeneity among multiple functional time series. Using a functional panel data model with fixed effects, we can extract common functional time series features. These common features can be decomposed into two components: the functional time trend and the mode of variation of functions (functional pattern). The functional time trend reflects the dynamics across time, while the functional pattern captures the fluctuations within curves. The proposed clustering method searches for homogeneous age-specific mortality rates of multiple countries by accounting for both the modes of variation and the temporal dynamics among curves. Through a Monte Carlo simulation, we demonstrate that the proposed clustering technique outperforms other existing methods and can handle complicated cases with slowly decaying eigenvalues. In the empirical data analysis, we find that the clustering results for age-specific mortality rates can be explained by a combination of geographic region, ethnic group, and socioeconomic status. We further show that our model produces more accurate forecasts of age-specific mortality rates than several benchmark methods.

The global financial crisis of 2007-2009 highlighted the crucial role systemic risk plays in ensuring the stability of financial markets. Accurate assessment of systemic risk would enable regulators to introduce suitable policies to mitigate the risk, as well as allow individual institutions to monitor their vulnerability to market movements. One popular measure of systemic risk is the conditional value-at-risk (CoVaR), proposed in Adrian and Brunnermeier (2011). We develop a methodology to estimate CoVaR semi-parametrically within the framework of multivariate extreme value theory. By definition, CoVaR can be viewed as a high quantile of the conditional distribution of one institution's (or the financial system's) potential loss, where the conditioning event corresponds to large losses in the financial system (or the given financial institution). We relate this conditional distribution to the tail dependence function between the system and the institution, and then use parametric modelling of the tail dependence function to address data sparsity in the joint tail regions. We prove consistency of the proposed estimator and illustrate its performance via simulation studies and a real data example.
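A naive empirical CoVaR estimator shows why data sparsity is the central difficulty. Assumptions: losses are positive numbers, the conditioning event is the "at or beyond VaR" variant (the original Adrian and Brunnermeier definition conditions on the loss being equal to its VaR), and the same level `p` is used for both quantiles. This is not the paper's extreme-value estimator; the function name is ours.

```python
import numpy as np

def empirical_covar(target_loss, cond_loss, p=0.05):
    """(1 - p)-quantile of target_loss given cond_loss >= its own (1 - p)-quantile."""
    target_loss = np.asarray(target_loss, dtype=float)
    cond_loss = np.asarray(cond_loss, dtype=float)
    var_cond = np.quantile(cond_loss, 1 - p)       # VaR of the conditioning variable
    tail = target_loss[cond_loss >= var_cond]      # observations in the stress event
    return float(np.quantile(tail, 1 - p))         # high quantile within that event
```

With n = 10,000 observations and p = 0.05, the conditioning set holds only about 500 points, and the estimated quantile rests on roughly the top 25 of them. Modelling the tail dependence function parametrically is meant to borrow strength beyond those few joint-tail observations.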

The transformer is a new kind of neural architecture that encodes input data as powerful features via the attention mechanism. Basically, visual transformers first divide input images into several local patches and then compute both their representations and their relationships. Since natural images are highly complex, with abundant detail and color information, this granularity of patch division is not fine enough to extract features of objects at different scales and locations. In this paper, we point out that attention inside these local patches is also essential for building high-performance visual transformers, and we explore a new architecture, namely Transformer iN Transformer (TNT). Specifically, we regard the local patches (e.g., 16$\times$16) as "visual sentences" and propose to further divide them into smaller patches (e.g., 4$\times$4) as "visual words". The attention of each word is computed with the other words in the given visual sentence, at negligible computational cost. Features of both words and sentences are aggregated to enhance the representation ability. Experiments on several benchmarks demonstrate the effectiveness of the proposed TNT architecture; e.g., we achieve 81.5% top-1 accuracy on ImageNet, which is about 1.7% higher than that of the state-of-the-art visual transformer with similar computational cost. The PyTorch code is available at //github.com/huawei-noah/CV-Backbones, and the MindSpore code is available at //gitee.com/mindspore/models/tree/master/research/cv/TNT.
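The sentence/word split is essentially two levels of non-overlapping patch extraction. A minimal NumPy sketch, assuming a 224×224×3 input with the abstract's example sizes (16×16 sentences, 4×4 words), followed by a parameter-free scaled dot-product attention among the words of each sentence. The actual TNT blocks use learned projections, multiple heads, and fuse word features back into the sentence embeddings; all of that is omitted here, and the function names are ours.

```python
import numpy as np

def to_patches(img, p):
    """Split an (H, W, C) array into non-overlapping p x p patches -> (N, p, p, C)."""
    H, W, C = img.shape
    x = img.reshape(H // p, p, W // p, p, C).transpose(0, 2, 1, 3, 4)
    return x.reshape(-1, p, p, C)

def sentences_and_words(img, P=16, w=4):
    """Return flattened sentences (N, P*P*C) and their words (N, (P//w)**2, w*w*C)."""
    C = img.shape[2]
    sentences = to_patches(img, P)
    words = np.stack([to_patches(s, w).reshape(-1, w * w * C) for s in sentences])
    return sentences.reshape(len(sentences), -1), words

def inner_self_attention(words):
    """Parameter-free scaled dot-product attention among the words of each sentence."""
    d = words.shape[-1]
    scores = words @ words.transpose(0, 2, 1) / np.sqrt(d)  # (N, m, m)
    scores = scores - scores.max(axis=-1, keepdims=True)    # numerical stability
    attn = np.exp(scores)
    attn = attn / attn.sum(axis=-1, keepdims=True)          # rows sum to 1
    return attn @ words, attn
```

A 224×224×3 image yields 196 sentences of 768 values each, and every sentence holds 16 words of 48 values. Attention is computed only within each group of 16 words, so its cost is small compared with attention across all 196 sentences.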

In real-world applications, data often arrive in a growing manner, where the data volume and the number of classes may increase dynamically. This poses a critical challenge for learning: as the data volume or the number of classes grows, one has to promptly adjust the neural model capacity to maintain good performance. Existing methods either ignore the growing nature of data or independently search for an optimal architecture for each given dataset, and thus cannot promptly adjust architectures to changed data. To address this, we present a neural architecture adaptation method, namely Adaptation eXpert (AdaXpert), to efficiently adjust previous architectures on growing data. Specifically, we introduce an architecture adjuster that generates a suitable architecture for each data snapshot, based on the previous architecture and the degree of difference between the current and previous data distributions. Furthermore, we propose an adaptation condition to determine whether adjustment is necessary, thereby avoiding unnecessary and time-consuming adjustments. Extensive experiments on two growth scenarios (increasing data volume and increasing number of classes) demonstrate the effectiveness of the proposed method.
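The abstract does not say how the adaptation condition is computed. Purely as a hypothetical illustration, one could compare feature samples from the previous and current data snapshots with a kernel two-sample statistic, here the biased RBF-kernel maximum mean discrepancy (MMD), and invoke the architecture adjuster only when it exceeds a threshold. The statistic, the kernel parameter `gamma`, and `threshold` are all our assumptions, not AdaXpert's.

```python
import numpy as np

def rbf_mmd2(X, Y, gamma=1.0):
    """Biased estimate of squared MMD between samples X (n, d) and Y (m, d), RBF kernel."""
    def k(A, B):
        d2 = np.sum(A**2, axis=1)[:, None] + np.sum(B**2, axis=1)[None, :] - 2 * A @ B.T
        return np.exp(-gamma * np.maximum(d2, 0.0))  # clip tiny negative distances
    return float(k(X, X).mean() + k(Y, Y).mean() - 2 * k(X, Y).mean())

def needs_adjustment(prev_feats, curr_feats, threshold=0.05, gamma=1.0):
    """Hypothetical adaptation condition: adjust only if the distributions differ enough."""
    return rbf_mmd2(prev_feats, curr_feats, gamma) > threshold
```

A statistic of this kind could also serve as the "degree of difference" input to an adjuster, but whether AdaXpert measures distribution change this way is not stated in the abstract.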

In this paper, we introduce 'Coarse-Fine Networks', a two-stream architecture that benefits from different abstractions of temporal resolution to learn better video representations for long-term motion. Traditional video models process inputs at one (or a few) fixed temporal resolutions without any dynamic frame selection. However, we argue that processing multiple temporal resolutions of the input, and doing so dynamically by learning to estimate the importance of each frame, can greatly improve video representations, especially in the domain of temporal activity localization. To this end, we propose (1) 'Grid Pool', a learned temporal downsampling layer to extract coarse features, and (2) 'Multi-stage Fusion', a spatio-temporal attention mechanism to fuse a fine-grained context with the coarse features. We show that our method can outperform the state of the art for action detection on public datasets including Charades, with a significantly reduced compute and memory footprint.
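The abstract describes Grid Pool only as a learned temporal downsampling layer driven by per-frame importance. A hypothetical sketch of that idea: treat normalized importance scores as a distribution over frames, place `T_out` sampling points at evenly spaced quantiles of that distribution, and linearly interpolate features at those points, so that important frames receive denser samples. The inverse-CDF construction, the names, and the `eps` smoothing are our assumptions; in the real layer the scores would come from a learned network.

```python
import numpy as np

def grid_pool(features, scores, T_out, eps=1e-6):
    """Importance-weighted temporal resampling (hypothetical Grid-Pool-style sketch).

    features: (T, D) per-frame features; scores: (T,) non-negative importances.
    Returns (T_out, D) resampled features and the fractional frame positions used.
    """
    T = features.shape[0]
    w = np.asarray(scores, dtype=float) + eps               # keep the CDF strictly increasing
    cdf = np.concatenate([[0.0], np.cumsum(w) / w.sum()])   # cdf[j] = mass of frames < j
    targets = (np.arange(T_out) + 0.5) / T_out              # evenly spaced quantiles in (0, 1)
    pos = np.interp(targets, cdf, np.arange(T + 1))         # inverse CDF, in [0, T]
    centers = np.clip(pos - 0.5, 0.0, T - 1.0)              # frame-centre coordinates
    lo = np.floor(centers).astype(int)
    hi = np.minimum(lo + 1, T - 1)
    frac = (centers - lo)[:, None]
    return (1.0 - frac) * features[lo] + frac * features[hi], centers
```

With uniform scores over 8 frames and `T_out = 4`, the sampling positions are evenly spaced (0.5, 2.5, 4.5, 6.5); raising the scores of frames 2 and 3 pulls most of the four samples into that region.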