The relevance of determinacy coefficients as indicators for the validity of factor score predictors has regularly been emphasized. Previous simulation studies revealed biased determinacy coefficients for factor score predictors based on categorical variables. Therefore, and because there are different possibilities to compute determinacy coefficients, the present study compared the bias of determinacy coefficients for the best linear factor score predictor and for a correlation-preserving factor score predictor based on confirmatory factor models with observed variables with 2, 4, 6, and 8 categories and maximum likelihood estimation, diagonally weighted least squares estimation, and Bayesian estimation. Positive bias was found when data were based on variables with two categories, when population factors were correlated, and when there were unmodeled cross-loadings. Based on the results, the correction for sampling error, the use of maximum likelihood or Bayesian parameters, and data with at least four categories are recommended to avoid overestimation of parameter-based determinacy coefficients.
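
As a rough illustration of what a parameter-based determinacy coefficient is, the sketch below computes it for the regression (best linear) factor score predictor in the simplest possible case. It assumes a one-factor model with standardized loadings, unit factor variance, and uncorrelated unique factors; none of these restrictions come from the study, which uses correlated factors, and the function name `one_factor_determinacy` is hypothetical. Under these assumptions the squared determinacy is s / (1 + s), where s is the sum of squared loadings divided by the corresponding uniquenesses.

```python
import math


def one_factor_determinacy(loadings):
    """Determinacy of the regression factor score predictor, one-factor model.

    Assumption (not from the study): standardized loadings, unit factor
    variance, uncorrelated unique factors, so uniqueness = 1 - loading**2.
    """
    if not loadings:
        raise ValueError("at least one loading is required")
    s = 0.0
    for lam in loadings:
        if not -1.0 < lam < 1.0:
            raise ValueError("standardized loadings must lie strictly between -1 and 1")
        # Ratio of common variance to unique variance for this indicator.
        s += lam * lam / (1.0 - lam * lam)
    # lambda' Sigma^{-1} lambda reduces to s / (1 + s) (Sherman-Morrison identity).
    return math.sqrt(s / (1.0 + s))
```

With a single indicator the coefficient reduces to the absolute loading, and it increases as indicators are added. Because it is computed from estimated loadings, any bias in those estimates carries over to the coefficient.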

Related content

Mobile digital health (mHealth) studies often collect multiple within-day self-reported assessments of participants' behaviour and health. Indexed by time of day, these assessments can be treated as functional observations of continuous, truncated, ordinal, and binary type. We develop covariance estimation and principal component analysis for such mixed-type functional data. We propose a semiparametric Gaussian copula model that assumes a generalized latent non-paranormal process generating observed mixed-type functional data and defining temporal dependence via a latent covariance. The smooth estimate of latent covariance is constructed via Kendall's Tau bridging method that incorporates smoothness within the bridging step. The approach is then extended with methods for handling both dense and sparse sampling designs, calculating subject-specific latent representations of observed data, latent principal components and principal component scores. Importantly, the proposed framework handles all four mixed types in a unified way. Simulation studies show a competitive performance of the proposed method under both dense and sparse sampling designs. The method is applied to data from 497 participants of the National Institute of Mental Health Family Study of the Mood Disorder Spectrum to characterize the differences in within-day temporal patterns of mood in individuals with the major mood disorder subtypes including Major Depressive Disorder, and Type 1 and 2 Bipolar Disorder.
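
To make the bridging idea concrete, the sketch below covers only the classical continuous-continuous case, where the latent Gaussian copula correlation is recovered from Kendall's tau as sin(pi * tau / 2). It does not cover the truncated, ordinal, or binary bridges, nor the smoothing step described in the abstract. The function names are hypothetical, and tau-a (no tie correction) is used for simplicity.

```python
import math
from itertools import combinations


def kendall_tau_a(x, y):
    """Kendall's tau-a: (concordant - discordant) pairs over all pairs."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have equal length of at least 2")
    total = 0
    for (xi, yi), (xj, yj) in combinations(zip(x, y), 2):
        prod = (xi - xj) * (yi - yj)
        # +1 for a concordant pair, -1 for a discordant pair, 0 for a tie.
        total += (prod > 0) - (prod < 0)
    n = len(x)
    return total / (n * (n - 1) / 2)


def bridge_continuous(tau):
    """Map Kendall's tau to the latent correlation (continuous-continuous case)."""
    return math.sin(math.pi * tau / 2.0)
```

Applying `bridge_continuous(kendall_tau_a(x_s, x_t))` to the observations at two time points `s` and `t` would give one entry of a raw latent correlation matrix, which a method like the one described would then smooth over time.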

We present a finite element scheme for fractional diffusion problems with varying diffusivity and fractional order. We consider a symmetric integral form of these nonlocal equations defined on general geometries and in arbitrary bounded domains. A number of challenges are encountered when discretizing these equations. The first comes from the heterogeneous kernel singularity in the fractional integral operator. The second comes from the dense discrete operator with its quadratic growth in memory footprint and arithmetic operations. An additional challenge comes from the need to handle volume conditions, the generalization of classical local boundary conditions to the nonlocal setting. Satisfying these conditions requires that the effect of the whole domain, including both the interior and exterior regions, can be computed on every interior point in the discretization. Performed directly, this would result in quadratic complexity. To address these challenges, we propose a strategy that decomposes the stiffness matrix into three components. The first is a sparse matrix that handles the singular near-field separately and is computed by adapting singular quadrature techniques available for the homogeneous case to the case of spatially variable order. The second component handles the remaining smooth part of the near-field as well as the far field and is approximated by a hierarchical $\mathcal{H}^{2}$ matrix that maintains linear complexity in storage and operations. The third component handles the effect of the global mesh at every node and is written as a weighted mass matrix whose density is computed by a fast-multipole type method. The resulting algorithm therefore has overall linear space and time complexity. Analysis of the consistency of the stiffness matrix is provided and numerical experiments are conducted to illustrate the convergence and performance of the proposed algorithm.

Autonomous racing control is a challenging research problem as vehicles are pushed to their limits of handling to achieve an optimal lap time; therefore, vehicles exhibit highly nonlinear and complex dynamics. Difficult-to-model effects, such as drifting, aerodynamics, chassis weight transfer, and suspension can lead to infeasible and suboptimal trajectories. While offline planning allows optimizing a full reference trajectory for the minimum lap time objective, such modeling discrepancies are particularly detrimental when using offline planning, as planning model errors compound with controller modeling errors. Gaussian Process Regression (GPR) can compensate for modeling errors. However, previous works primarily focus on modeling error in real-time control without consideration for how the model used in offline planning can affect the overall performance. In this work, we propose a double-GPR error compensation algorithm to reduce model uncertainties; specifically, we compensate both the planner's model and controller's model with two respective GPR-based error compensation functions. Furthermore, we design an iterative framework to re-collect error-rich data using the racing control system. We test our method in the high-fidelity racing simulator Gran Turismo Sport (GTS); we find that our iterative, double-GPR compensation functions improve racing performance and iteration stability in comparison to a single compensation function applied merely for real-time control.

As an efficient alternative to conventional full finetuning, parameter-efficient finetuning (PEFT) is becoming the prevailing method to adapt pretrained language models. In PEFT, a lightweight module is learned on each dataset while the underlying pretrained language model remains unchanged, resulting in multiple compact modules representing diverse skills when applied to various domains and tasks. In this paper, we propose to compose these parameter-efficient modules through linear arithmetic operations in the weight space, thereby integrating different module capabilities. Specifically, we first define addition and negation operators for the module, and then further compose these two basic operators to perform flexible arithmetic. Our approach requires \emph{no additional training} and enables highly flexible module composition. We apply different arithmetic operations to compose the parameter-efficient modules for (1) distribution generalization, (2) multi-tasking, (3) unlearning, and (4) domain transfer. Additionally, we extend our approach to detoxify Alpaca-LoRA, the latest instruction-tuned large language model based on LLaMA. Empirical results demonstrate that our approach produces new and effective parameter-efficient modules that significantly outperform existing ones across all settings.
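
The abstract does not spell out the operator definitions, so the following is only an illustrative sketch of what arithmetic in weight space could look like. It assumes each module is stored as a dictionary of weight deltas relative to the frozen pretrained weights, with identical parameter names across modules. The names `add_modules`, `negate_module`, and `apply_module`, and the interpolation weight, are hypothetical.

```python
def add_modules(a, b, weight=0.5):
    """Elementwise weighted sum of two modules with identical parameter names."""
    if a.keys() != b.keys():
        raise ValueError("modules must share the same parameter names")
    return {
        name: [weight * u + (1.0 - weight) * v for u, v in zip(a[name], b[name])]
        for name in a
    }


def negate_module(a):
    """Flip the sign of every weight delta, e.g. to subtract a learned skill."""
    return {name: [-u for u in values] for name, values in a.items()}


def apply_module(base, module, scale=1.0):
    """Add a scaled weight delta to the frozen pretrained weights."""
    return {
        name: [w + scale * d for w, d in zip(base[name], module[name])]
        for name in base
    }
```

Under these assumptions, multi-tasking would correspond to `apply_module(base, add_modules(m1, m2))`, and unlearning to `apply_module(base, negate_module(m_unwanted), scale)`. No step involves gradient updates, which matches the training-free property claimed in the abstract.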

Recently, there has been a growing interest in mixed-categorical meta-models based on Gaussian process (GP) surrogates. In this setting, several existing approaches use different strategies either by using continuous kernels (e.g., continuous relaxation and Gower distance based GP) or by using a direct estimation of the correlation matrix. In this paper, we present a kernel-based approach that extends continuous exponential kernels to handle mixed-categorical variables. The proposed kernel leads to a new GP surrogate that generalizes both the continuous relaxation and the Gower distance based GP models. We demonstrate, on both analytical and engineering problems, that our proposed GP model gives a higher likelihood and a smaller residual error than the other kernel-based state-of-the-art models. Our method is available in the open-source software SMT.

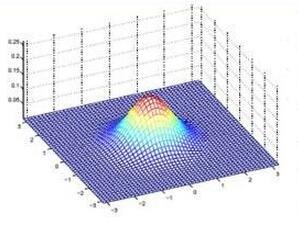

The convexification numerical method with the rigorously established global convergence property is constructed for a problem for the Mean Field Games System of the second order. This is the problem of the retrospective analysis of a game of infinitely many rational players. In addition to traditional initial and terminal conditions, one extra terminal condition is assumed to be known. Carleman estimates and a Carleman Weight Function play the key role. Numerical experiments demonstrate a good performance for complicated functions. Various versions of the convexification have been actively used by this research team for a number of years to numerically solve coefficient inverse problems.

Recently, Meta-Auto-Decoder (MAD) was proposed as a novel reduced order model (ROM) for solving parametric partial differential equations (PDEs), and the best possible performance of this method can be quantified by the decoder width. This paper aims to provide a theoretical analysis related to the decoder width. The solution sets of several parametric PDEs are examined, and the upper bounds of the corresponding decoder widths are estimated. In addition to the elliptic and the parabolic equations on a fixed domain, we investigate the advection equations that present challenges for classical linear ROMs, as well as the elliptic equations with the computational domain shape as a variable PDE parameter. The resulting fast decay rates of the decoder widths indicate the promising potential of MAD in addressing these problems.

Selective inference methods are developed for group lasso estimators for use with a wide class of distributions and loss functions. The method includes the use of exponential family distributions, as well as quasi-likelihood modeling for overdispersed count data, for example, and allows for categorical or grouped covariates as well as continuous covariates. A randomized group-regularized optimization problem is studied. The added randomization allows us to construct a post-selection likelihood which we show to be adequate for selective inference when conditioning on the event of the selection of the grouped covariates. This likelihood also provides a selective point estimator, accounting for the selection by the group lasso. Confidence regions for the regression parameters in the selected model take the form of Wald-type regions and are shown to have bounded volume. The selective inference method for the group lasso is illustrated on data from the National Health and Nutrition Examination Survey, while simulations showcase its behaviour and favorable comparison with other methods.

The problem of selecting optimal backdoor adjustment sets to estimate causal effects in graphical models with hidden and conditioned variables is addressed. Previous work has defined optimality as achieving the smallest asymptotic estimation variance and derived an optimal set for the case without hidden variables. For the case with hidden variables there can be settings where no optimal set exists, and currently only a sufficient graphical optimality criterion of limited applicability has been derived. In the present work optimality is characterized as maximizing a certain adjustment information, which allows the derivation of a necessary and sufficient graphical criterion for the existence of an optimal adjustment set and a definition and algorithm to construct it. Further, the optimal set is valid if and only if a valid adjustment set exists and has higher (or equal) adjustment information than the Adjust-set proposed in Perkovi{\'c} et al. [Journal of Machine Learning Research, 18: 1--62, 2018] for any graph. The results translate to minimal asymptotic estimation variance for a class of estimators whose asymptotic variance follows a certain information-theoretic relation. Numerical experiments indicate that the asymptotic results also hold for relatively small sample sizes and that the optimal adjustment set or minimized variants thereof often yield better variance also beyond that estimator class. Surprisingly, among the randomly created setups more than 90\% fulfill the optimality conditions, indicating that graphical optimality may also hold in many real-world scenarios. Code is available as part of the python package \url{//github.com/jakobrunge/tigramite}.

Since hardware resources are limited, the objective of training deep learning models is typically to maximize accuracy subject to the time and memory constraints of training and inference. We study the impact of model size in this setting, focusing on Transformer models for NLP tasks that are limited by compute: self-supervised pretraining and high-resource machine translation. We first show that even though smaller Transformer models execute faster per iteration, wider and deeper models converge in significantly fewer steps. Moreover, this acceleration in convergence typically outpaces the additional computational overhead of using larger models. Therefore, the most compute-efficient training strategy is to counterintuitively train extremely large models but stop after a small number of iterations. This leads to an apparent trade-off between the training efficiency of large Transformer models and the inference efficiency of small Transformer models. However, we show that large models are more robust to compression techniques such as quantization and pruning than small models. Consequently, one can get the best of both worlds: heavily compressed, large models achieve higher accuracy than lightly compressed, small models.
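
For readers unfamiliar with the two compression techniques named above, the sketch below shows generic textbook versions: global magnitude pruning and symmetric uniform quantization of a flat list of weights. These are assumptions chosen for illustration, not the exact procedures used in the study, and the function names are hypothetical.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the fraction `sparsity` of weights with the smallest magnitude."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be in [0, 1]")
    n_drop = int(len(weights) * sparsity)
    # Indices sorted by increasing magnitude; the first n_drop are removed.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    dropped = set(order[:n_drop])
    return [0.0 if i in dropped else w for i, w in enumerate(weights)]


def quantize_uniform(weights, bits):
    """Symmetric uniform quantization to `bits` bits, returned as floats."""
    if bits < 2:
        raise ValueError("bits must be at least 2")
    levels = 2 ** (bits - 1) - 1  # number of positive quantization levels
    max_abs = max((abs(w) for w in weights), default=0.0)
    if max_abs == 0.0:
        return [0.0 for _ in weights]
    step = max_abs / levels
    # Snap each weight to the nearest multiple of the step size.
    return [round(w / step) * step for w in weights]
```

In this picture, heavy compression means a high `sparsity` or a low `bits` value. The abstract's claim is that large models lose less accuracy than small ones under such settings.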