We consider the symmetric binary perceptron model, a simple model of neural networks that has gathered significant attention in the statistical physics, information theory and probability theory communities, with recent connections made to the performance of learning algorithms in Baldassi et al. '15. We establish that the partition function of this model, normalized by its expected value, converges to a lognormal distribution. As a consequence, we establish several conjectures for this model: (i) it proves the contiguity conjecture of Aubin et al. '19 between the planted and unplanted models in the satisfiable regime; (ii) it establishes the sharp threshold conjecture; (iii) it proves the frozen 1-RSB conjecture in the symmetric case, conjectured first by Krauth-M\'ezard '89 in the asymmetric case. In a recent work of Perkins-Xu '21, the last two conjectures were also established by proving that the partition function concentrates on an exponential scale, under an analytical assumption on a real-valued function. This left open the contiguity conjecture and the lognormal limit characterization, which are established here unconditionally, with the analytical assumption verified. In particular, our proof technique relies on a dense counterpart of the small graph conditioning method, which was developed for sparse models in the celebrated work of Robinson and Wormald.
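To make the normalized partition function concrete, here is a minimal brute-force sketch. It assumes the usual definition of the symmetric binary perceptron, which the abstract does not spell out: $Z$ counts the sign vectors $\sigma \in \{-1,+1\}^n$ satisfying $|\langle g_a, \sigma\rangle| \le \kappa\sqrt{n}$ for each of $m$ independent standard Gaussian vectors $g_a$, so that $\mathbb{E}[Z] = 2^n\,\mathbb{P}(|N(0,1)| \le \kappa)^m$. The function names and the values of `n`, `m` and `kappa` are illustrative choices, and enumeration is only feasible for very small `n`.

```python
import itertools
import math
import random


def count_solutions(G, n, kappa):
    """Count sigma in {-1,+1}^n with |<g, sigma>| <= kappa*sqrt(n) for every row g of G."""
    bound = kappa * math.sqrt(n)
    count = 0
    for sigma in itertools.product((-1, 1), repeat=n):
        if all(abs(sum(g * s for g, s in zip(row, sigma))) <= bound for row in G):
            count += 1
    return count


def expected_count(n, m, kappa):
    """First moment E[Z] = 2^n * P(|N(0,1)| <= kappa)^m for Gaussian constraints."""
    p = math.erf(kappa / math.sqrt(2))  # P(|N(0,1)| <= kappa)
    return 2 ** n * p ** m


# Illustrative (assumed) sizes; the enumeration costs 2^n * m * n operations.
rng = random.Random(0)
n, m, kappa = 12, 4, 1.0
G = [[rng.gauss(0.0, 1.0) for _ in range(n)] for _ in range(m)]

Z = count_solutions(G, n, kappa)
ratio = Z / expected_count(n, m, kappa)  # the normalized partition function
```

The quantity `ratio` is one sample of the normalized partition function; the lognormal limit in the abstract concerns its distribution as $n$ grows with $m/n$ fixed, which this small-scale sketch cannot probe.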

Related content

We give a formulation of the Nielsen-Schreier theorem (subgroups of free groups are free) in homotopy type theory using the presentation of groups as pointed connected 1-truncated types. We show the special case of finite index subgroups holds constructively and the full theorem follows from the axiom of choice. We give an example of a boolean infinity topos where our formulation of the theorem does not hold and show a stronger "untruncated" version of the theorem is provably false in homotopy type theory.

We investigate two fundamental questions intersecting coding theory and combinatorial geometry, with emphasis on their connections. These are the problem of computing the asymptotic density of MRD codes in the rank metric, and the Critical Problem for combinatorial geometries by Crapo and Rota. Using methods from semifield theory, we derive two lower bounds for the density function of full-rank, square MRD codes. The first bound is sharp when the matrix size is a prime number and the underlying field is sufficiently large, while the second bound applies to the binary field. We then take a new look at the Critical Problem for combinatorial geometries, approaching it from a qualitative, often asymptotic, viewpoint. We illustrate the connection between this very classical problem and that of computing the asymptotic density of MRD codes. Finally, we study the asymptotic density of some special families of codes in the rank metric, including the symmetric, alternating and Hermitian ones. In particular, we show that the optimal codes in these three contexts are sparse.

We investigate the problem of approximating the matrix function $f(A)$ by $r(A)$, with $f$ a Markov function, $r$ a rational interpolant of $f$, and $A$ a symmetric Toeplitz matrix. In a first step, we obtain a new upper bound for the relative interpolation error $1-r/f$ on the spectral interval of $A$. By minimizing this upper bound over all interpolation points, we obtain a new, simple and sharp a priori bound for the relative interpolation error. We then consider three different approaches to representing and computing the rational interpolant $r$. Theoretical and numerical evidence is given that any of these methods achieves high precision for a scalar argument, even in the presence of finite precision arithmetic. We finally investigate the problem of efficiently evaluating $r(A)$, where it turns out that the relative error for a matrix argument is small only if we use a partial fraction decomposition for $r$ following Antoulas and Mayo. An important role is played by a new stopping criterion which automatically finds the degree of $r$ leading to a small error, even in the presence of finite precision arithmetic.

Deciding whether a given function is quasiconvex is generally a difficult task. Here, we discuss a number of numerical approaches that can be used in the search for a counterexample to the quasiconvexity of a given function $W$. We will demonstrate these methods using the planar isotropic rank-one convex function \[ W_{\rm magic}^+(F)=\frac{\lambda_{\rm max}}{\lambda_{\rm min}}-\log\frac{\lambda_{\rm max}}{\lambda_{\rm min}}+\log\det F=\frac{\lambda_{\rm max}}{\lambda_{\rm min}}+2\log\lambda_{\rm min}\,, \] where $\lambda_{\rm max}\geq\lambda_{\rm min}$ are the singular values of $F$, as our main example. In a previous contribution, we have shown that quasiconvexity of this function would imply quasiconvexity for all rank-one convex isotropic planar energies $W:\operatorname{GL}^+(2)\rightarrow\mathbb{R}$ with an additive volumetric-isochoric split of the form \[ W(F)=W_{\rm iso}(F)+W_{\rm vol}(\det F)=\widetilde W_{\rm iso}\bigg(\frac{F}{\sqrt{\det F}}\bigg)+W_{\rm vol}(\det F) \] with a concave volumetric part. This example is therefore of particular interest with regard to Morrey's open question whether or not rank-one convexity implies quasiconvexity in the planar case.
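The second equality in the definition of $W_{\rm magic}^+$ can be checked in one line. For $F\in\operatorname{GL}^+(2)$ the determinant is positive and equals the product of the singular values, $\det F=\lambda_{\rm max}\lambda_{\rm min}$, so \[ -\log\frac{\lambda_{\rm max}}{\lambda_{\rm min}}+\log\det F=-\log\lambda_{\rm max}+\log\lambda_{\rm min}+\log\lambda_{\rm max}+\log\lambda_{\rm min}=2\log\lambda_{\rm min}\,, \] and adding $\frac{\lambda_{\rm max}}{\lambda_{\rm min}}$ to both sides gives the stated form.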

We study the off-policy evaluation (OPE) problem in an infinite-horizon Markov decision process with continuous states and actions. We recast the $Q$-function estimation into a special form of the nonparametric instrumental variables (NPIV) estimation problem. We first show that under one mild condition the NPIV formulation of $Q$-function estimation is well-posed in the sense of $L^2$-measure of ill-posedness with respect to the data generating distribution, bypassing a strong assumption on the discount factor $\gamma$ imposed in the recent literature for obtaining the $L^2$ convergence rates of various $Q$-function estimators. Thanks to this new well-posed property, we derive the first minimax lower bounds for the convergence rates of nonparametric estimation of the $Q$-function and its derivatives in both sup-norm and $L^2$-norm, which are shown to be the same as those for the classical nonparametric regression (Stone, 1982). We then propose a sieve two-stage least squares estimator and establish its rate-optimality in both norms under some mild conditions. Our general results on the well-posedness and the minimax lower bounds are of independent interest for studying not only other nonparametric estimators of the $Q$-function but also efficient estimation of the value of any target policy in off-policy settings.

Neural networks with the Rectified Linear Unit (ReLU) nonlinearity are described by a vector of parameters $\theta$, and realized as a piecewise linear continuous function $R_{\theta}: x \in \mathbb R^{d} \mapsto R_{\theta}(x) \in \mathbb R^{k}$. Natural scaling and permutation operations on the parameters $\theta$ leave the realization unchanged, leading to equivalence classes of parameters that yield the same realization. These considerations in turn lead to the notion of identifiability -- the ability to recover (the equivalence class of) $\theta$ from the sole knowledge of its realization $R_{\theta}$. The overall objective of this paper is to introduce an embedding for ReLU neural networks of any depth, $\Phi(\theta)$, that is invariant to scalings and that provides a locally linear parameterization of the realization of the network. Leveraging these two key properties, we derive some conditions under which a deep ReLU network is indeed locally identifiable from the knowledge of the realization on a finite set of samples $x_{i} \in \mathbb R^{d}$. We study the shallow case in more depth, establishing necessary and sufficient conditions for the network to be identifiable from a bounded subset $\mathcal X \subseteq \mathbb R^{d}$.
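The scaling invariance mentioned above can be illustrated on a shallow network. The following is a minimal sketch, assuming a one-hidden-layer ReLU network without biases, $x \mapsto W_2\,\mathrm{relu}(W_1 x)$; it is not the paper's embedding $\Phi(\theta)$. It relies only on the positive homogeneity of ReLU: multiplying the incoming weights of a hidden neuron by $\lambda > 0$ and dividing its outgoing weights by $\lambda$ leaves the realization unchanged. All names and sizes are illustrative.

```python
import random


def relu(v):
    """Apply max(0, t) to every coordinate."""
    return [max(0.0, t) for t in v]


def matvec(W, x):
    """Plain matrix-vector product for a list-of-rows matrix."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def realize(W1, W2, x):
    """Realization of a bias-free one-hidden-layer ReLU network at x."""
    return matvec(W2, relu(matvec(W1, x)))


def rescale(W1, W2, lambdas):
    """Scale row j of W1 by lambdas[j] > 0 and column j of W2 by 1/lambdas[j]."""
    W1s = [[lam * w for w in row] for row, lam in zip(W1, lambdas)]
    W2s = [[w / lam for w, lam in zip(row, lambdas)] for row in W2]
    return W1s, W2s


rng = random.Random(0)
d, h, k = 3, 4, 2  # input, hidden and output dimensions (assumed for illustration)
W1 = [[rng.uniform(-1, 1) for _ in range(d)] for _ in range(h)]
W2 = [[rng.uniform(-1, 1) for _ in range(h)] for _ in range(k)]

W1s, W2s = rescale(W1, W2, [0.5, 2.0, 3.0, 0.25])

x = [rng.uniform(-1, 1) for _ in range(d)]
y = realize(W1, W2, x)
ys = realize(W1s, W2s, x)
max_gap = max(abs(a - b) for a, b in zip(y, ys))  # zero up to rounding error
```

The two parameter vectors differ but produce the same output up to floating-point rounding, which is why identifiability can only be asked for up to such equivalence classes.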

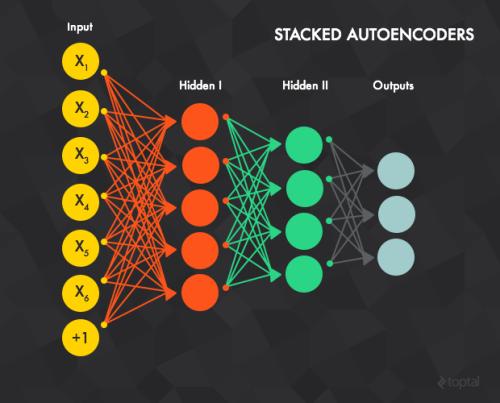

In 1954, Alston S. Householder published Principles of Numerical Analysis, one of the first modern treatments of matrix decomposition that favored a (block) LU decomposition, the factorization of a matrix into the product of lower and upper triangular matrices. Matrix decomposition has since become a core technology in machine learning, largely due to the development of the back propagation algorithm in fitting a neural network. The sole aim of this survey is to give a self-contained introduction to concepts and mathematical tools in numerical linear algebra and matrix analysis in order to seamlessly introduce matrix decomposition techniques and their applications in subsequent sections. However, we clearly realize that we cannot cover all the useful and interesting results concerning matrix decomposition given the limited scope of this discussion; for example, we omit the separate analysis of the Euclidean space, Hermitian space, Hilbert space, and the complex domain. We refer the reader to literature in the field of linear algebra for a more detailed introduction to the related fields.
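As a small illustration of the LU decomposition mentioned above, here is a minimal sketch of Gaussian elimination without pivoting, producing a unit lower triangular $L$ and an upper triangular $U$ with $A = LU$. It assumes every pivot encountered is nonzero; practical libraries use (partial) pivoting for numerical stability, which this sketch omits. The function names and the test matrix are illustrative.

```python
def lu_decompose(A):
    """Return (L, U) with A = L U, L unit lower triangular, U upper triangular.

    No pivoting is performed, so a zero pivot raises ZeroDivisionError.
    """
    n = len(A)
    L = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]
    U = [[float(a) for a in row] for row in A]  # work on a copy of A
    for k in range(n):
        if U[k][k] == 0.0:
            raise ZeroDivisionError("zero pivot: pivoting would be required")
        for i in range(k + 1, n):
            L[i][k] = U[i][k] / U[k][k]  # multiplier that eliminates U[i][k]
            for j in range(k, n):
                U[i][j] -= L[i][k] * U[k][j]
    return L, U


def matmul(X, Y):
    """Product of two list-of-rows matrices."""
    return [[sum(X[i][t] * Y[t][j] for t in range(len(Y)))
             for j in range(len(Y[0]))] for i in range(len(X))]


A = [[4.0, 3.0], [6.0, 3.0]]
L, U = lu_decompose(A)
A_rebuilt = matmul(L, U)
```

Multiplying the factors back together, as `matmul(L, U)` does, is a quick sanity check that the factorization reproduces the original matrix.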

Unsupervised multi-object representation learning depends on inductive biases to guide the discovery of object-centric representations that generalize. However, we observe that methods for learning these representations are either impractical due to long training times and large memory consumption or forgo key inductive biases. In this work, we introduce EfficientMORL, an efficient framework for the unsupervised learning of object-centric representations. We show that optimization challenges caused by requiring both symmetry and disentanglement can in fact be addressed by high-cost iterative amortized inference, provided the framework is designed to minimize its dependence on it. We take a two-stage approach to inference: first, a hierarchical variational autoencoder extracts symmetric and disentangled representations through bottom-up inference, and second, a lightweight network refines the representations with top-down feedback. The number of refinement steps taken during training is reduced following a curriculum, so that at test time with zero steps the model achieves 99.1% of the refined decomposition performance. We demonstrate strong object decomposition and disentanglement on the standard multi-object benchmark while achieving nearly an order of magnitude faster training and test-time inference over the previous state-of-the-art model.

We present the problem of selecting relevant premises for a proof of a given statement. When stated as a binary classification task for pairs (conjecture, axiom), it can be efficiently solved using artificial neural networks. The key difference between our approach to this problem and previous approaches is the use of just functional signatures of premises. To further improve the performance of the model, we use a dimensionality reduction technique to replace long and sparse signature vectors with their compact and dense embedded versions. These are obtained by first defining the concept of a context for each functor symbol, and then training a simple neural network to predict the distribution of other functor symbols in the context of this functor. After training the network, the output of its hidden layer is used to construct a lower dimensional embedding of a functional signature (for each premise) with a distributed representation of features. This allows us to use 512-dimensional embeddings for conjecture-axiom pairs, containing enough information about the original statements to reach an accuracy of 76.45% in the premise selection task, using only simple two-layer densely connected neural networks.

In this paper, we study the optimal convergence rate for distributed convex optimization problems in networks. We model the communication restrictions imposed by the network as a set of affine constraints and provide optimal complexity bounds for four different setups, namely: the function $F(\mathbf{x}) \triangleq \sum_{i=1}^{m}f_i(\mathbf{x})$ is strongly convex and smooth, strongly convex only, smooth only, or just convex. Our results show that Nesterov's accelerated gradient descent on the dual problem can be executed in a distributed manner and obtains the same optimal rates as in the centralized version of the problem (up to constant or logarithmic factors) with an additional cost related to the spectral gap of the interaction matrix. Finally, we discuss some extensions to the proposed setup such as proximal friendly functions, time-varying graphs, and improvement of the condition numbers.
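One common way to express network communication restrictions as affine constraints, sketched here as an assumption rather than as the paper's exact formulation, is to give each of the $m$ agents a local copy $x_i$ and require $Lx = 0$, where $L$ is the Laplacian of the communication graph. For a connected graph, $Lx = 0$ holds exactly when all copies agree, and each row of $L$ only couples an agent with its neighbors. The path graph and scalar local copies below are illustrative choices.

```python
def path_laplacian(m):
    """Graph Laplacian of the path graph 0 - 1 - ... - (m-1)."""
    L = [[0.0] * m for _ in range(m)]
    for i in range(m - 1):
        L[i][i] += 1.0
        L[i + 1][i + 1] += 1.0
        L[i][i + 1] -= 1.0
        L[i + 1][i] -= 1.0
    return L


def apply(L, x):
    """Compute L x; row i only involves agent i and its graph neighbors."""
    return [sum(l * xi for l, xi in zip(row, x)) for row in L]


L = path_laplacian(4)

consensus = [2.5, 2.5, 2.5, 2.5]     # all agents hold the same value
disagreement = [2.5, 2.5, 1.0, 2.5]  # agent 2 deviates

residual_consensus = apply(L, consensus)        # all zeros: constraint satisfied
residual_disagreement = apply(L, disagreement)  # has nonzero entries: constraint violated
```

Because the constraint is linear in the stacked variable, the dual problem can be attacked with gradient methods whose iterations need only products with $L$, that is, only exchanges between neighboring agents.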