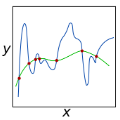

In this work, we propose and analyse a weak Galerkin method for the electrical impedance tomography based on a bounded variation regularization. We use the complete electrode model as the forward system that is approximated by a weak Galerkin method with lowest order. The error estimates are studied for the forward problem, which are used to establish the convergence of this weak Galerkin algorithm for the inverse problem. Numerical examples are presented to verify the effectiveness and efficiency of the weak Galerkin algorithm for the electrical impedance tomography.

相關內容

In recent years, strong expectations have been raised for the possible power of quantum computing for solving difficult optimization problems, based on theoretical, asymptotic worst-case bounds. Can we expect this to have consequences for Linear and Integer Programming when solving instances of practically relevant size, a fundamental goal of Mathematical Programming, Operations Research and Algorithm Engineering? Answering this question faces a crucial impediment: The lack of sufficiently large quantum platforms prevents performing real-world tests for comparison with classical methods. In this paper, we present a quantum analog for classical runtime analysis when solving real-world instances of important optimization problems. To this end, we measure the expected practical performance of quantum computers by analyzing the expected gate complexity of a quantum algorithm. The lack of practical quantum platforms for experimental comparison is addressed by hybrid benchmarking, in which the algorithm is performed on a classical system, logging the expected cost of the various subroutines that are employed by the quantum versions. In particular, we provide an analysis of quantum methods for Linear Programming, for which recent work has provided asymptotic speedup through quantum subroutines for the Simplex method. We show that a practical quantum advantage for realistic problem sizes would require quantum gate operation times that are considerably below current physical limitations.

Large Language Models (LLMs) serve as repositories of extensive world knowledge, enabling them to perform tasks such as question-answering and fact-checking. However, this knowledge can become obsolete as global contexts change. In this paper, we introduce a novel problem in the realm of continual learning: Online Continual Knowledge Learning (OCKL). This problem formulation aims to manage the dynamic nature of world knowledge in LMs under real-time constraints. We propose a new benchmark and evaluation metric designed to measure both the rate of new knowledge acquisition and the retention of previously learned knowledge. Our empirical evaluation, conducted using a variety of state-of-the-art methods, establishes robust base-lines for OCKL. Our results reveal that existing continual learning approaches are unfortunately insufficient for tackling the unique challenges posed by OCKL. We identify key factors that influence the trade-off between knowledge acquisition and retention, thereby advancing our understanding of how to train LMs in a continually evolving environment.

In this article, we propose a modified convex combination of the polynomial reconstructions of odd-order WENO schemes to maintain the central substencil prevalence over the lateral ones in all parts of the solution. New "centered" versions of the classical WENO-Z and its less dissipative counterpart, WENO-Z+, are defined through very simple modifications of the classical nonlinear weights and show significantly superior numerical properties; for instance, a well-known dispersion error for long-term runs is fixed, along with decreased dissipation and better shock-capturing abilities. Moreover, the proposed centered version of WENO-Z+ has no ad-hoc parameters and no dependence on the powers of the grid size. All the new schemes are thoroughly analyzed concerning convergence at critical points, adding to the discussion on the relevance of such convergence to the numerical simulation of typical hyperbolic conservation laws problems. Nonlinear spectral analysis confirms the enhancement achieved by the new schemes over the standard ones.

With the increasing amount of data available to scientists in disciplines as diverse as bioinformatics, physics, and remote sensing, scientific workflow systems are becoming increasingly important for composing and executing scalable data analysis pipelines. When writing such workflows, users need to specify the resources to be reserved for tasks so that sufficient resources are allocated on the target cluster infrastructure. Crucially, underestimating a task's memory requirements can result in task failures. Therefore, users often resort to overprovisioning, resulting in significant resource wastage and decreased throughput. In this paper, we propose a novel online method that uses monitoring time series data to predict task memory usage in order to reduce the memory wastage of scientific workflow tasks. Our method predicts a task's runtime, divides it into k equally-sized segments, and learns the peak memory value for each segment depending on the total file input size. We evaluate the prototype implementation of our method using workflows from the publicly available nf-core repository, showing an average memory wastage reduction of 29.48% compared to the best state-of-the-art approach

Humans perceive the world by concurrently processing and fusing high-dimensional inputs from multiple modalities such as vision and audio. Machine perception models, in stark contrast, are typically modality-specific and optimised for unimodal benchmarks, and hence late-stage fusion of final representations or predictions from each modality (`late-fusion') is still a dominant paradigm for multimodal video classification. Instead, we introduce a novel transformer based architecture that uses `fusion bottlenecks' for modality fusion at multiple layers. Compared to traditional pairwise self-attention, our model forces information between different modalities to pass through a small number of bottleneck latents, requiring the model to collate and condense the most relevant information in each modality and only share what is necessary. We find that such a strategy improves fusion performance, at the same time reducing computational cost. We conduct thorough ablation studies, and achieve state-of-the-art results on multiple audio-visual classification benchmarks including Audioset, Epic-Kitchens and VGGSound. All code and models will be released.

Recent contrastive representation learning methods rely on estimating mutual information (MI) between multiple views of an underlying context. E.g., we can derive multiple views of a given image by applying data augmentation, or we can split a sequence into views comprising the past and future of some step in the sequence. Contrastive lower bounds on MI are easy to optimize, but have a strong underestimation bias when estimating large amounts of MI. We propose decomposing the full MI estimation problem into a sum of smaller estimation problems by splitting one of the views into progressively more informed subviews and by applying the chain rule on MI between the decomposed views. This expression contains a sum of unconditional and conditional MI terms, each measuring modest chunks of the total MI, which facilitates approximation via contrastive bounds. To maximize the sum, we formulate a contrastive lower bound on the conditional MI which can be approximated efficiently. We refer to our general approach as Decomposed Estimation of Mutual Information (DEMI). We show that DEMI can capture a larger amount of MI than standard non-decomposed contrastive bounds in a synthetic setting, and learns better representations in a vision domain and for dialogue generation.

Graphical causal inference as pioneered by Judea Pearl arose from research on artificial intelligence (AI), and for a long time had little connection to the field of machine learning. This article discusses where links have been and should be established, introducing key concepts along the way. It argues that the hard open problems of machine learning and AI are intrinsically related to causality, and explains how the field is beginning to understand them.

Embedding entities and relations into a continuous multi-dimensional vector space have become the dominant method for knowledge graph embedding in representation learning. However, most existing models ignore to represent hierarchical knowledge, such as the similarities and dissimilarities of entities in one domain. We proposed to learn a Domain Representations over existing knowledge graph embedding models, such that entities that have similar attributes are organized into the same domain. Such hierarchical knowledge of domains can give further evidence in link prediction. Experimental results show that domain embeddings give a significant improvement over the most recent state-of-art baseline knowledge graph embedding models.

Graph neural networks (GNNs) are a popular class of machine learning models whose major advantage is their ability to incorporate a sparse and discrete dependency structure between data points. Unfortunately, GNNs can only be used when such a graph-structure is available. In practice, however, real-world graphs are often noisy and incomplete or might not be available at all. With this work, we propose to jointly learn the graph structure and the parameters of graph convolutional networks (GCNs) by approximately solving a bilevel program that learns a discrete probability distribution on the edges of the graph. This allows one to apply GCNs not only in scenarios where the given graph is incomplete or corrupted but also in those where a graph is not available. We conduct a series of experiments that analyze the behavior of the proposed method and demonstrate that it outperforms related methods by a significant margin.

Multi-relation Question Answering is a challenging task, due to the requirement of elaborated analysis on questions and reasoning over multiple fact triples in knowledge base. In this paper, we present a novel model called Interpretable Reasoning Network that employs an interpretable, hop-by-hop reasoning process for question answering. The model dynamically decides which part of an input question should be analyzed at each hop; predicts a relation that corresponds to the current parsed results; utilizes the predicted relation to update the question representation and the state of the reasoning process; and then drives the next-hop reasoning. Experiments show that our model yields state-of-the-art results on two datasets. More interestingly, the model can offer traceable and observable intermediate predictions for reasoning analysis and failure diagnosis.