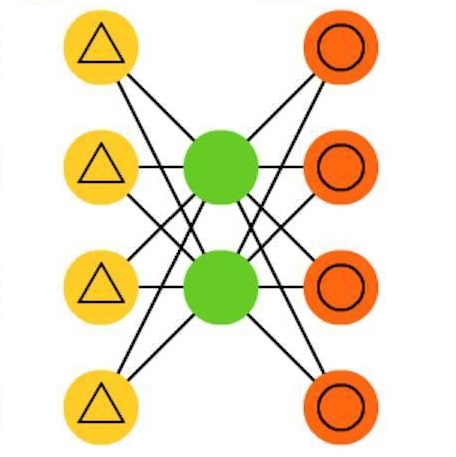

Using a deep autoencoder (DAE) for end-to-end communication in multiple-input multiple-output (MIMO) systems is a novel concept with significant potential. DAE-aided MIMO has been shown to outperform singular-value decomposition (SVD)-based precoded MIMO in terms of bit error rate (BER). This paper proposes embedding the left- and right-singular vectors of the channel matrix into the DAE encoder and decoder to further improve the performance of the MIMO DAE. The SVD-embedded DAE largely outperforms theoretical linear precoding in terms of BER. This is remarkable since it demonstrates that DAEs have significant potential to exceed the limits of current system design by treating the communication system as a single, end-to-end optimization block. Based on the simulation results, at an SNR of 10 dB, the proposed SVD-embedded design achieves a BER of about $10^{-5}$ and reduces the BER by a factor of at least 10 compared with the existing DAE without SVD, and by a factor of up to 18 compared with theoretical linear precoding. We attribute this to the fact that the proposed DAE can match the input and output as an adaptive modulation structure with finite-alphabet input. We also observe that adding residual connections to the DAE further improves the performance.
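
For reference, the SVD-based precoding baseline mentioned above can be written out in its textbook form. The notation is assumed here for illustration and is not taken from the paper: $\mathbf{H}$ is the channel matrix, $\mathbf{s}$ the symbol vector, $\mathbf{x}$ the transmitted vector, and $\mathbf{n}$ the receiver noise.

$$
\mathbf{y} = \mathbf{H}\mathbf{x} + \mathbf{n}, \qquad \mathbf{H} = \mathbf{U}\boldsymbol{\Sigma}\mathbf{V}^{\mathsf{H}}
$$

$$
\mathbf{x} = \mathbf{V}\mathbf{s} \;\Longrightarrow\; \mathbf{U}^{\mathsf{H}}\mathbf{y} = \mathbf{U}^{\mathsf{H}}\mathbf{U}\boldsymbol{\Sigma}\mathbf{V}^{\mathsf{H}}\mathbf{V}\mathbf{s} + \mathbf{U}^{\mathsf{H}}\mathbf{n} = \boldsymbol{\Sigma}\mathbf{s} + \mathbf{U}^{\mathsf{H}}\mathbf{n}
$$

Precoding with the right-singular vectors $\mathbf{V}$ and combining with the left-singular vectors $\mathbf{U}^{\mathsf{H}}$ turns the channel into parallel scalar sub-channels scaled by the singular values. These are the same $\mathbf{U}$ and $\mathbf{V}$ that the abstract describes embedding into the DAE decoder and encoder; how exactly they are embedded is not specified here.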

Related content

We study a new two-time-scale stochastic gradient method for solving optimization problems, where the gradients are computed with the aid of an auxiliary variable under samples generated by time-varying Markov random processes parameterized by the underlying optimization variable. These time-varying samples make gradient directions in our update biased and dependent, which can potentially lead to the divergence of the iterates. In our two-time-scale approach, one scale is to estimate the true gradient from these samples, which is then used to update the estimate of the optimal solution. While these two iterates are implemented simultaneously, the former is updated "faster" (using bigger step sizes) than the latter (using smaller step sizes). Our first contribution is to characterize the finite-time complexity of the proposed two-time-scale stochastic gradient method. In particular, we provide explicit formulas for the convergence rates of this method under different structural assumptions, namely, strong convexity, convexity, the Polyak-Lojasiewicz condition, and general non-convexity. We apply our framework to two problems in control and reinforcement learning. First, we look at the standard online actor-critic algorithm over finite state and action spaces and derive a convergence rate of O(k^(-2/5)), which recovers the best known rate derived specifically for this problem. Second, we study an online actor-critic algorithm for the linear-quadratic regulator and show that a convergence rate of O(k^(-2/3)) is achieved. This is the first time such a result is known in the literature. Finally, we support our theoretical analysis with numerical simulations where the convergence rates are visualized.
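
The two coupled updates described above can be illustrated with a toy sketch. Everything in it is an assumption made for illustration: the scalar quadratic objective, the i.i.d. Gaussian noise (the paper considers Markov samples), and the step-size schedules `alpha` and `beta`, which are not the ones analyzed in the paper.

```python
import random


def two_time_scale_sgd(grad_sample, x0, steps=5000, seed=0):
    """Toy two-time-scale SGD on a scalar problem (illustrative only)."""
    rng = random.Random(seed)
    x = x0   # slow iterate: estimate of the optimal solution
    y = 0.0  # fast iterate: running estimate of the true gradient
    for k in range(steps):
        alpha = 1.0 / (k + 1)            # slow step size (hypothetical schedule)
        beta = 1.0 / (k + 1) ** (2 / 3)  # fast step size, beta >= alpha for all k
        g = grad_sample(x, rng)          # noisy gradient sample
        y += beta * (g - y)              # fast scale: track the gradient
        x -= alpha * y                   # slow scale: move along the estimate
    return x


def noisy_quadratic_grad(x, rng):
    # Gradient of 0.5 * (x - 3)^2 plus Gaussian noise; the minimizer is x = 3.
    return (x - 3.0) + rng.gauss(0.0, 0.1)


x_final = two_time_scale_sgd(noisy_quadratic_grad, x0=0.0)
print(x_final)
```

The gradient tracker `y` uses the larger step size so that it settles quickly relative to `x`, which is the separation of scales the abstract refers to.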

In this paper, we introduce $\mathsf{CO}_3$, an algorithm for communication-efficient federated Deep Neural Network (DNN) training. $\mathsf{CO}_3$ takes its name from three processing steps applied to reduce the communication load when transmitting the local gradients from the remote users to the Parameter Server, namely: (i) gradient quantization through floating-point conversion, (ii) lossless compression of the quantized gradient, and (iii) quantization error correction. We carefully design each of the steps above so as to minimize the loss in the distributed DNN training when the communication overhead is fixed. In particular, in the design of steps (i) and (ii), we adopt the assumption that DNN gradients are distributed according to a generalized normal distribution. This assumption is validated numerically in the paper. For step (iii), we utilize an error feedback with memory decay mechanism to correct the quantization error introduced in step (i). We argue that the memory decay coefficient, similarly to the learning rate, can be optimally tuned to improve convergence. The performance of $\mathsf{CO}_3$ is validated through numerical simulations and is shown to achieve better accuracy and improved stability at a reduced communication payload.
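
A minimal sketch of error feedback with a memory decay coefficient, covering steps (i) and (iii) only, might look as follows. The uniform rounding quantizer is a stand-in for the floating-point conversion used in the paper, the lossless compression of step (ii) is omitted, and the exact form of the update (`decay` multiplying the stored error) is an assumption, since the abstract does not spell it out.

```python
def quantize(values, step=0.25):
    # Stand-in quantizer (assumption): round each entry to a multiple of `step`.
    return [step * round(v / step) for v in values]


class ErrorFeedback:
    """Keeps the quantization error and re-injects a decayed copy next round."""

    def __init__(self, dim, decay=0.9):
        self.decay = decay          # memory decay coefficient (hypothetical name)
        self.memory = [0.0] * dim   # accumulated quantization error

    def compress(self, grad):
        # Add the decayed error from the previous round before quantizing.
        corrected = [g + self.decay * m for g, m in zip(grad, self.memory)]
        sent = quantize(corrected)
        # Store what the quantizer discarded this round.
        self.memory = [c - s for c, s in zip(corrected, sent)]
        return sent


ef = ErrorFeedback(dim=3, decay=1.0)
grads = [[0.1, -0.2, 0.3]] * 10
sent_total = [0.0] * 3
for g in grads:
    sent = ef.compress(g)
    sent_total = [t + s for t, s in zip(sent_total, sent)]
print(sent_total, ef.memory)
```

With `decay=1.0` nothing is forgotten, so the transmitted values plus the remaining memory add up to the sum of the true gradients. A smaller `decay` trades that exactness for less influence from stale errors, which is the tuning knob the abstract compares to the learning rate.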

Hybrid precoding is a cost-efficient technique for millimeter wave (mmWave) massive multiple-input multiple-output (MIMO) communications. This paper proposes a deep learning approach that uses a distributed neural network for hybrid analog-and-digital precoding design with limited feedback. The proposed distributed neural precoding network, called DNet, pursues two objectives. First, DNet realizes channel state information (CSI) compression with a distributed architecture of neural networks, which enables practical deployment on multiple users. Specifically, this neural network is composed of multiple independent sub-networks with the same structure and parameters, which reduces both the number of training parameters and the network complexity. Second, DNet learns to calculate the hybrid precoding from the CSI reconstructed from limited feedback. Unlike existing black-box neural network designs, DNet is specifically designed according to the data form of the matrix calculation of hybrid precoding. Simulation results show that the proposed DNet improves performance by up to nearly 50% compared to traditional limited feedback precoding methods in tests with various CSI compression ratios.

Stochastic optimization algorithms implemented on distributed computing architectures are increasingly used to tackle large-scale machine learning applications. A key bottleneck in such distributed systems is the communication overhead for exchanging information such as stochastic gradients between different workers. Sparse communication with memory and the adaptive aggregation methodology are two successful frameworks among the various techniques proposed to address this issue. In this paper, we exploit the advantages of Sparse communication and Adaptive aggregated Stochastic Gradients to design a communication-efficient distributed algorithm named SASG. Specifically, we determine the workers who need to communicate with the parameter server based on the adaptive aggregation rule and then sparsify the transmitted information. Therefore, our algorithm reduces both the overhead of communication rounds and the number of communication bits in the distributed system. We define an auxiliary sequence and provide convergence results of the algorithm with the help of Lyapunov function analysis. Experiments on training deep neural networks show that our algorithm can significantly reduce the communication overhead compared to the previous methods, with little impact on training and testing accuracy.
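
The "sparse communication with memory" ingredient can be sketched as below. Top-k selection is an assumed choice of sparsifier (the abstract only says the transmitted information is sparsified), the function names are hypothetical, and the adaptive aggregation rule that decides which workers communicate is not covered.

```python
def top_k_sparsify(vec, k):
    # Keep the k entries with the largest magnitude and zero out the rest.
    order = sorted(range(len(vec)), key=lambda i: abs(vec[i]), reverse=True)
    keep = set(order[:k])
    return [v if i in keep else 0.0 for i, v in enumerate(vec)]


def sparse_step(grad, memory, k):
    # Add back what earlier rounds left untransmitted, then sparsify.
    acc = [g + m for g, m in zip(grad, memory)]
    sent = top_k_sparsify(acc, k)
    # Entries that were not sent stay in the worker's local memory.
    new_memory = [a - s for a, s in zip(acc, sent)]
    return sent, new_memory


sent, memory = sparse_step([0.5, -2.0, 0.1, 1.5], [0.0] * 4, k=2)
print(sent, memory)
```

Only the non-zero entries of `sent` would need to be transmitted, which is where the saving in communication bits comes from. The entries that were dropped are not lost, since they remain in `memory` and are added to the next gradient.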

Stochastic gradient Langevin dynamics is one of the most fundamental algorithms for solving the sampling and non-convex optimization problems that appear in several machine learning applications. In particular, its variance-reduced versions have recently gained attention. In this paper, we study two variants of this kind, namely, the Stochastic Variance Reduced Gradient Langevin Dynamics and the Stochastic Recursive Gradient Langevin Dynamics. We prove their convergence to the objective distribution in terms of KL-divergence under the sole assumptions of smoothness and a Log-Sobolev inequality, which are weaker conditions than those used in prior works for these algorithms. With the batch size and the inner loop length set to $\sqrt{n}$, the gradient complexity to achieve an $\epsilon$-precision is $\tilde{O}((n+dn^{1/2}\epsilon^{-1})\gamma^2 L^2\alpha^{-2})$, which is an improvement over all previous analyses. We also show some essential applications of our result to non-convex optimization.
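
A toy sketch of the first variant, Stochastic Variance Reduced Gradient Langevin Dynamics, is given below. It assumes a one-dimensional target proportional to $\exp(-\sum_i f_i(x))$ with quadratic components $f_i(x) = \tfrac{1}{2} c_i (x - a_i)^2$. The data, the step size `eta`, and the function names are all hypothetical. Only the batch size and inner loop length of $\sqrt{n}$ follow the abstract.

```python
import math
import random


def svrg_ld(centers, curvatures, eta=0.01, epochs=5000, seed=0):
    """Toy 1-D SVRG Langevin sampler for f_i(x) = 0.5 * c_i * (x - a_i)^2."""
    rng = random.Random(seed)
    n = len(centers)
    batch = inner = max(1, int(math.sqrt(n)))  # both set to sqrt(n)

    def grad_i(i, x):
        return curvatures[i] * (x - centers[i])

    x, samples = 0.0, []
    for _ in range(epochs):
        snapshot = x
        # Full gradient at the snapshot, computed once per outer loop.
        full_grad = sum(grad_i(i, snapshot) for i in range(n))
        for _ in range(inner):
            idx = rng.sample(range(n), batch)
            # Variance-reduced estimate of the full gradient at x.
            correction = sum(grad_i(i, x) - grad_i(i, snapshot) for i in idx)
            g = full_grad + (n / batch) * correction
            # Langevin step: gradient descent plus injected Gaussian noise.
            x = x - eta * g + math.sqrt(2.0 * eta) * rng.gauss(0.0, 1.0)
            samples.append(x)
    return samples


centers = [0.1 * i for i in range(16)]
curvatures = [0.5 + i / 15 for i in range(16)]
samples = svrg_ld(centers, curvatures)
kept = samples[2000:]  # discard an initial burn-in segment
empirical_mean = sum(kept) / len(kept)
target_mean = sum(c * a for c, a in zip(curvatures, centers)) / sum(curvatures)
print(empirical_mean, target_mean)
```

For this Gaussian target the exact mean is known in closed form, so the empirical mean of the samples gives a quick sanity check. It says nothing about the KL-divergence guarantees proved in the paper.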

This article presents an overview of image transformation with a secret key and its applications. Image transformation with a secret key enables us not only to protect visual information on plain images but also to embed unique features controlled with a key into images. In addition, numerous encryption methods can generate encrypted images that are compressible and learnable for machine learning. Various applications of such transformation have been developed by using these properties. In this paper, we focus on a class of image transformation referred to as learnable image encryption, which is applicable to privacy-preserving machine learning and adversarially robust defense. Detailed descriptions of both transformation algorithms and performances are provided. Moreover, we discuss robustness against various attacks.
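
As one simple illustration of a key-controlled transformation, the sketch below shuffles pixels inside fixed-size blocks using a permutation derived from a key. It is a hypothetical, minimal example rather than any specific algorithm from the article. It assumes a single-channel image stored as a list of rows whose height and width are multiples of `block`, and it uses Python's `random` module, which is not cryptographically secure.

```python
import random


def key_permutation(key, size):
    # Derive a fixed permutation from the key (not cryptographically secure).
    perm = list(range(size))
    random.Random(key).shuffle(perm)
    return perm


def shuffle_blocks(image, key, block=2, inverse=False):
    """Apply the same key-derived pixel permutation inside every block."""
    h, w = len(image), len(image[0])
    perm = key_permutation(key, block * block)
    out = [row[:] for row in image]
    for top in range(0, h, block):
        for left in range(0, w, block):
            coords = [(top + r, left + c)
                      for r in range(block) for c in range(block)]
            for dst, src in enumerate(perm):
                (r_d, c_d), (r_s, c_s) = coords[dst], coords[src]
                if inverse:
                    out[r_s][c_s] = image[r_d][c_d]  # undo the shuffle
                else:
                    out[r_d][c_d] = image[r_s][c_s]  # apply the shuffle
    return out


image = [[4 * r + c for c in range(4)] for r in range(4)]
encrypted = shuffle_blocks(image, key=1234)
decrypted = shuffle_blocks(encrypted, key=1234, inverse=True)
print(encrypted)
print(decrypted == image)
```

Because every block is transformed with the same permutation, block-level structure is preserved, which is one intuition for why such images can remain usable by a model trained on data transformed with the same key.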

Multi-camera vehicle tracking is one of the most complicated tasks in Computer Vision as it involves distinct tasks including Vehicle Detection, Tracking, and Re-identification. Despite the challenges, multi-camera vehicle tracking has immense potential in transportation applications including speed, volume, origin-destination (O-D), and routing data generation. Several recent works have addressed the multi-camera tracking problem. However, most of the effort has gone towards improving accuracy on high-quality benchmark datasets while disregarding lower camera resolutions, compression artifacts, and the overwhelming amount of computational power and time needed to carry out this task at the edge, which makes it prohibitive for large-scale and real-time deployment. Therefore, in this work we shed light on practical issues that should be addressed in the design of a multi-camera tracking system that provides actionable and timely insights. Moreover, we propose a real-time city-scale multi-camera vehicle tracking system that compares favorably to computationally intensive alternatives and handles real-world, low-resolution CCTV instead of idealized and curated video streams. To show its effectiveness, in addition to integrating it into the Regional Integrated Transportation Information System (RITIS), we participated in the 2021 NVIDIA AI City multi-camera tracking challenge, where our method ranked among the top five performers on the public leaderboard.

Few-shot image classification aims to classify unseen classes with limited labeled samples. Recent works benefit from the meta-learning process with episodic tasks and can quickly adapt to new classes from training to testing. Due to the limited number of samples for each task, the initial embedding network for meta-learning becomes an essential component and can largely affect the performance in practice. To this end, many pre-training methods have been proposed, and most of them are trained in a supervised way with limited transfer ability for unseen classes. In this paper, we propose to train a more generalized embedding network with self-supervised learning (SSL), which can provide slow and robust representations for downstream tasks by learning from the data itself. We evaluate our work through extensive comparisons with previous baseline methods on two few-shot classification datasets (i.e., MiniImageNet and CUB). Based on the evaluation results, the proposed method achieves significantly better performance, improving 1-shot and 5-shot tasks by nearly 3% and 4% on MiniImageNet and by nearly 9% and 3% on CUB. Moreover, the proposed method can gain improvements of (15%, 13%) on MiniImageNet and (15%, 8%) on CUB by pretraining using more unlabeled data. Our code will be available at //github.com/phecy/SSL-FEW-SHOT.

Time Series Classification (TSC) is an important and challenging problem in data mining. With the increase of time series data availability, hundreds of TSC algorithms have been proposed. Among these methods, only a few have considered Deep Neural Networks (DNNs) to perform this task. This is surprising as deep learning has seen very successful applications in recent years. DNNs have indeed revolutionized the field of computer vision, especially with the advent of novel deeper architectures such as Residual and Convolutional Neural Networks. Apart from images, sequential data such as text and audio can also be processed with DNNs to reach state-of-the-art performance for document classification and speech recognition. In this article, we study the current state-of-the-art performance of deep learning algorithms for TSC by presenting an empirical study of the most recent DNN architectures for TSC. We give an overview of the most successful deep learning applications in various time series domains under a unified taxonomy of DNNs for TSC. We also provide an open source deep learning framework to the TSC community in which we implemented each of the compared approaches and evaluated them on a univariate TSC benchmark (the UCR/UEA archive) and 12 multivariate time series datasets. By training 8,730 deep learning models on 97 time series datasets, we present the most exhaustive study of DNNs for TSC to date.

We introduce an effective model to overcome the problem of mode collapse when training Generative Adversarial Networks (GAN). Firstly, we propose a new generator objective that better tackles mode collapse. We also apply an independent autoencoder (AE) to constrain the generator and consider its reconstructed samples as "real" samples to slow down the convergence of the discriminator, which reduces the gradient vanishing problem and stabilizes the model. Secondly, from the mappings between latent and data spaces provided by the AE, we further regularize the AE by the relative distance between the latent and data samples to explicitly prevent the generator from falling into mode collapse. This idea arose when we found a new way to visualize mode collapse on the MNIST dataset. To the best of our knowledge, our method is the first to propose and successfully apply the relative distance of latent and data samples for stabilizing GAN. Thirdly, our proposed model, namely Generative Adversarial Autoencoder Networks (GAAN), is stable and suffers from neither gradient vanishing nor mode collapse, as empirically demonstrated on synthetic, MNIST, MNIST-1K, CelebA and CIFAR-10 datasets. Experimental results show that our method can approximate multi-modal distributions well and achieves better results than state-of-the-art methods on these benchmark datasets. Our model implementation is published here: //github.com/tntrung/gaan