This paper explores the difficulties in solving partial differential equations (PDEs) using physics-informed neural networks (PINNs). PINNs use physics as a regularization term in the objective function. However, a drawback of this approach is the requirement for manual hyperparameter tuning, making it impractical in the absence of validation data or prior knowledge of the solution. Our investigations of the loss landscapes and backpropagated gradients in the presence of physics reveal that existing methods produce non-convex loss landscapes that are hard to navigate. Our findings demonstrate that high-order PDEs contaminate backpropagated gradients and hinder convergence. To address these challenges, we introduce a novel method that bypasses the calculation of high-order derivative operators and mitigates the contamination of backpropagated gradients. Consequently, we reduce the dimension of the search space and make learning PDEs with non-smooth solutions feasible. Our method also provides a mechanism to focus on complex regions of the domain. Besides, we present a dual unconstrained formulation based on Lagrange multiplier method to enforce equality constraints on the model's prediction, with adaptive and independent learning rates inspired by adaptive subgradient methods. We apply our approach to solve various linear and non-linear PDEs.

相關內容

Full waveform inversion (FWI) updates the subsurface model from an initial model by comparing observed and synthetic seismograms. Due to high nonlinearity, FWI is easy to be trapped into local minima. Extended domain FWI, including wavefield reconstruction inversion (WRI) and extended source waveform inversion (ESI) are attractive options to mitigate this issue. This paper makes an in-depth analysis for FWI in the extended domain, identifying key challenges and searching for potential remedies torwards practical applications. WRI and ESI are formulated within the same mathematical framework using Lagrangian-based adjoint-state method with a special focus on time-domain formulation using extended sources, while putting connections between classical FWI, WRI and ESI: both WRI and ESI can be viewed as weighted versions of classic FWI. Due to symmetric positive definite Hessian, the conjugate gradient is explored to efficiently solve the normal equation in a matrix free manner, while both time and frequency domain wave equation solvers are feasible. This study finds that the most significant challenge comes from the huge storage demand to store time-domain wavefields through iterations. To resolve this challenge, two possible workaround strategies can be considered, i.e., by extracting sparse frequencial wavefields or by considering time-domain data instead of wavefields for reducing such challenge. We suggest that these options should be explored more intensively for tractable workflows.

Deep neural networks have received significant attention due to their simplicity and flexibility in the fields of engineering and scientific calculation. In this work, we probe into solving a class of elliptic PDEs with multiple scales by means of Fourier-based mixed physics-informed neural networks (called FMPINN), and its solver is configured as a multi-scale DNN model. Unlike the classical PINN method, a dual (flux) variable about the rough coefficient of PDEs is introduced to avoid the ill-condition of neural tangent kernel matrix that resulted from the oscillating coefficient of multi-scale PDEs. Therefore, apart from the physical conservation laws, the discrepancy between the auxiliary variables and the gradients of multi-scale coefficients is incorporated into the cost function, then leveraging the optimization method to yield the satisfactory solution of PDEs by minimizing the defined loss. Additionally, a novel trigonometric activation function is introduced for FMPINN, which is suited for representing the derivatives of complex target functions. Handling the input data by Fourier feature mapping will effectively improve the capacity of deep neural networks to solve high-frequency problems. Finally, by introducing several numerical examples of multi-scale problems in various dimensional Euclidean spaces, we validate the efficiency and robustness of the proposed FMPINN algorithm in both low-frequency and high-frequency oscillation cases.

We present an artificial intelligence (AI) method for automatically computing the melting point based on coexistence simulations in the NPT ensemble. Given the interatomic interaction model, the method makes decisions regarding the number of atoms and temperature at which to conduct simulations, and based on the collected data predicts the melting point along with the uncertainty, which can be systematically improved with more data. We demonstrate how incorporating physical models of the solid-liquid coexistence evolution enhances the AI method's accuracy and enables optimal decision-making to effectively reduce predictive uncertainty. To validate our approach, we compare our results with approximately 20 melting point calculations from the literature. Remarkably, we observe significant deviations in about one-third of the cases, underscoring the need for accurate and reliable AI-based algorithms for materials property calculations.

The ultimate goal of studying the magnetopause position is to accurately determine its location. Both traditional empirical computation methods and the currently popular machine learning approaches have shown promising results. In this study, we propose a Regression-based Physics-Informed Neural Networks (Reg-PINNs) that combines physics-based numerical computation with vanilla machine learning. This new generation of Physics Informed Neural Networks overcomes the limitations of previous methods restricted to solving ordinary and partial differential equations by incorporating conventional empirical models to aid the convergence and enhance the generalization capability of the neural network. Compared to Shue et al. [1998], our model achieves a reduction of approximately 30% in root mean square error. The methodology presented in this study is not only applicable to space research but can also be referenced in studies across various fields, particularly those involving empirical models.

Input gradients have a pivotal role in a variety of applications, including adversarial attack algorithms for evaluating model robustness, explainable AI techniques for generating Saliency Maps, and counterfactual explanations. However, Saliency Maps generated by traditional neural networks are often noisy and provide limited insights. In this paper, we demonstrate that, on the contrary, the Saliency Maps of 1-Lipschitz neural networks, learnt with the dual loss of an optimal transportation problem, exhibit desirable XAI properties: They are highly concentrated on the essential parts of the image with low noise, significantly outperforming state-of-the-art explanation approaches across various models and metrics. We also prove that these maps align unprecedentedly well with human explanations on ImageNet. To explain the particularly beneficial properties of the Saliency Map for such models, we prove this gradient encodes both the direction of the transportation plan and the direction towards the nearest adversarial attack. Following the gradient down to the decision boundary is no longer considered an adversarial attack, but rather a counterfactual explanation that explicitly transports the input from one class to another. Thus, Learning with such a loss jointly optimizes the classification objective and the alignment of the gradient , i.e. the Saliency Map, to the transportation plan direction. These networks were previously known to be certifiably robust by design, and we demonstrate that they scale well for large problems and models, and are tailored for explainability using a fast and straightforward method.

Deep learning methods have gained considerable interest in the numerical solution of various partial differential equations (PDEs). One particular focus is on physics-informed neural networks (PINNs), which integrate physical principles into neural networks. This transforms the process of solving PDEs into optimization problems for neural networks. In order to address a collection of advection-diffusion equations (ADE) in a range of difficult circumstances, this paper proposes a novel network structure. This architecture integrates the solver, which is a multi-scale deep neural network (MscaleDNN) utilized in the PINN method, with a hard constraint technique known as HCPINN. This method introduces a revised formulation of the desired solution for advection-diffusion equations (ADE) by utilizing a loss function that incorporates the residuals of the governing equation and penalizes any deviations from the specified boundary and initial constraints. By surpassing the boundary constraints automatically, this method improves the accuracy and efficiency of the PINN technique. To address the ``spectral bias'' phenomenon in neural networks, a subnetwork structure of MscaleDNN and a Fourier-induced activation function are incorporated into the HCPINN, resulting in a hybrid approach called SFHCPINN. The effectiveness of SFHCPINN is demonstrated through various numerical experiments involving advection-diffusion equations (ADE) in different dimensions. The numerical results indicate that SFHCPINN outperforms both standard PINN and its subnetwork version with Fourier feature embedding. It achieves remarkable accuracy and efficiency while effectively handling complex boundary conditions and high-frequency scenarios in ADE.

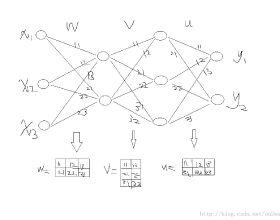

Our comprehension of biological neuronal networks has profoundly influenced the evolution of artificial neural networks (ANNs). However, the neurons employed in ANNs exhibit remarkable deviations from their biological analogs, mainly due to the absence of complex dendritic trees encompassing local nonlinearity. Despite such disparities, previous investigations have demonstrated that point neurons can functionally substitute dendritic neurons in executing computational tasks. In this study, we scrutinized the importance of nonlinear dendrites within neural networks. By employing machine-learning methodologies, we assessed the impact of dendritic structure nonlinearity on neural network performance. Our findings reveal that integrating dendritic structures can substantially enhance model capacity and performance while keeping signal communication costs effectively restrained. This investigation offers pivotal insights that hold considerable implications for the development of future neural network accelerators.

Recently, graph neural networks have been gaining a lot of attention to simulate dynamical systems due to their inductive nature leading to zero-shot generalizability. Similarly, physics-informed inductive biases in deep-learning frameworks have been shown to give superior performance in learning the dynamics of physical systems. There is a growing volume of literature that attempts to combine these two approaches. Here, we evaluate the performance of thirteen different graph neural networks, namely, Hamiltonian and Lagrangian graph neural networks, graph neural ODE, and their variants with explicit constraints and different architectures. We briefly explain the theoretical formulation highlighting the similarities and differences in the inductive biases and graph architecture of these systems. We evaluate these models on spring, pendulum, gravitational, and 3D deformable solid systems to compare the performance in terms of rollout error, conserved quantities such as energy and momentum, and generalizability to unseen system sizes. Our study demonstrates that GNNs with additional inductive biases, such as explicit constraints and decoupling of kinetic and potential energies, exhibit significantly enhanced performance. Further, all the physics-informed GNNs exhibit zero-shot generalizability to system sizes an order of magnitude larger than the training system, thus providing a promising route to simulate large-scale realistic systems.

Graph Neural Networks (GNNs) have received considerable attention on graph-structured data learning for a wide variety of tasks. The well-designed propagation mechanism which has been demonstrated effective is the most fundamental part of GNNs. Although most of GNNs basically follow a message passing manner, litter effort has been made to discover and analyze their essential relations. In this paper, we establish a surprising connection between different propagation mechanisms with a unified optimization problem, showing that despite the proliferation of various GNNs, in fact, their proposed propagation mechanisms are the optimal solution optimizing a feature fitting function over a wide class of graph kernels with a graph regularization term. Our proposed unified optimization framework, summarizing the commonalities between several of the most representative GNNs, not only provides a macroscopic view on surveying the relations between different GNNs, but also further opens up new opportunities for flexibly designing new GNNs. With the proposed framework, we discover that existing works usually utilize naive graph convolutional kernels for feature fitting function, and we further develop two novel objective functions considering adjustable graph kernels showing low-pass or high-pass filtering capabilities respectively. Moreover, we provide the convergence proofs and expressive power comparisons for the proposed models. Extensive experiments on benchmark datasets clearly show that the proposed GNNs not only outperform the state-of-the-art methods but also have good ability to alleviate over-smoothing, and further verify the feasibility for designing GNNs with our unified optimization framework.

Deep learning methods for graphs achieve remarkable performance on many node-level and graph-level prediction tasks. However, despite the proliferation of the methods and their success, prevailing Graph Neural Networks (GNNs) neglect subgraphs, rendering subgraph prediction tasks challenging to tackle in many impactful applications. Further, subgraph prediction tasks present several unique challenges, because subgraphs can have non-trivial internal topology, but also carry a notion of position and external connectivity information relative to the underlying graph in which they exist. Here, we introduce SUB-GNN, a subgraph neural network to learn disentangled subgraph representations. In particular, we propose a novel subgraph routing mechanism that propagates neural messages between the subgraph's components and randomly sampled anchor patches from the underlying graph, yielding highly accurate subgraph representations. SUB-GNN specifies three channels, each designed to capture a distinct aspect of subgraph structure, and we provide empirical evidence that the channels encode their intended properties. We design a series of new synthetic and real-world subgraph datasets. Empirical results for subgraph classification on eight datasets show that SUB-GNN achieves considerable performance gains, outperforming strong baseline methods, including node-level and graph-level GNNs, by 12.4% over the strongest baseline. SUB-GNN performs exceptionally well on challenging biomedical datasets when subgraphs have complex topology and even comprise multiple disconnected components.