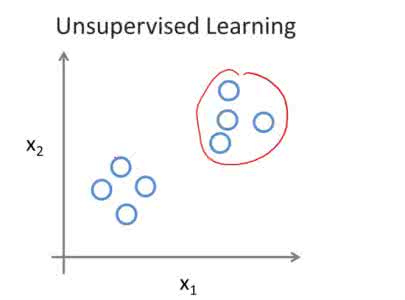

Recent deep learning models such as ChatGPT, which are trained with the back-propagation algorithm, have exhibited remarkable performance. However, the disparity between biological brain processes and the back-propagation algorithm has been noted. The Forward-Forward algorithm, which trains deep learning models solely through the forward pass, has emerged to address this. Although the Forward-Forward algorithm cannot replace back-propagation due to limitations such as requiring special inputs and loss functions, it has the potential to be useful in special situations where back-propagation is difficult to use. To work around this limitation and verify usability, we propose an Unsupervised Forward-Forward algorithm. Using an unsupervised learning model enables training with the usual loss functions and inputs without restriction. This approach leads to stable learning and enables versatile use across various datasets and tasks. From a usability perspective, given the characteristics of the Forward-Forward algorithm and the advantages of the proposed method, we anticipate its practical application even in scenarios such as federated learning, where deep learning layers need to be trained separately in physically distributed environments.

Related content

Feature attributions are ubiquitous tools for understanding the predictions of machine learning models. However, the calculation of popular methods for scoring input variables such as SHAP and LIME suffers from high instability due to random sampling. Leveraging ideas from multiple hypothesis testing, we devise attribution methods that ensure the most important features are ranked correctly with high probability. Given SHAP estimates from KernelSHAP or Shapley Sampling, we demonstrate how to retrospectively verify the number of stable rankings. Further, we introduce efficient sampling algorithms for SHAP and LIME that guarantee the $K$ highest-ranked features have the proper ordering. Finally, we show how to adapt these local feature attribution methods for the global importance setting.

GFlowNets are probabilistic models that sequentially generate compositional structures through a stochastic policy. Among GFlowNets, temperature-conditional GFlowNets can introduce temperature-based controllability for exploration and exploitation. We propose \textit{Logit-scaling GFlowNets} (Logit-GFN), a novel architectural design that greatly accelerates the training of temperature-conditional GFlowNets. It is based on the idea that previously proposed approaches introduced numerical challenges in deep network training, since different temperatures may give rise to very different gradient profiles as well as magnitudes of the policy's logits. We find that the challenge is greatly reduced if a learned function of the temperature is used to scale the policy's logits directly. Logit-GFN also improves GFlowNets by giving them better generalization in offline learning and better mode discovery in online learning, which we verify empirically on various biological and chemical tasks. Our code is available at \url{//github.com/dbsxodud-11/logit-gfn}.
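The logit-scaling idea can be illustrated with a minimal sketch. It is not the authors' implementation: `scale_from_temperature` is a hypothetical fixed stand-in (here simply `1 / temperature`) for the learned function of the temperature, and the logits are made-up numbers rather than the output of a policy network.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def scale_from_temperature(temperature):
    """Hypothetical stand-in for the learned scaling function.

    In Logit-GFN this would be a trained network; a fixed 1/T is
    assumed here purely for illustration.
    """
    return 1.0 / temperature


def temperature_conditioned_policy(logits, temperature):
    """Scale the policy logits directly, then normalize to probabilities."""
    s = scale_from_temperature(temperature)
    return softmax([s * x for x in logits])


if __name__ == "__main__":
    logits = [2.0, 1.0, 0.0]  # made-up policy logits
    for t in (0.5, 1.0, 2.0):
        print(t, temperature_conditioned_policy(logits, t))
```

Under this stand-in, lower temperatures sharpen the action distribution (exploitation) and higher temperatures flatten it (exploration), while the underlying logits stay unchanged.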

In the rapidly evolving field of autonomous driving, precise segmentation of LiDAR data is crucial for understanding complex 3D environments. Traditional approaches often rely on disparate, standalone codebases, hindering unified advancements and fair benchmarking across models. To address these challenges, we introduce MMDetection3D-lidarseg, a comprehensive toolbox designed for the efficient training and evaluation of state-of-the-art LiDAR segmentation models. We support a wide range of segmentation models and integrate advanced data augmentation techniques to enhance robustness and generalization. Additionally, the toolbox provides support for multiple leading sparse convolution backends, optimizing computational efficiency and performance. By fostering a unified framework, MMDetection3D-lidarseg streamlines development and benchmarking, setting new standards for research and application. Our extensive benchmark experiments on widely-used datasets demonstrate the effectiveness of the toolbox. The codebase and trained models have been made publicly available, promoting further research and innovation in the field of LiDAR segmentation for autonomous driving.

Current methods to prevent crypto asset fraud are based on the analysis of transaction graphs within blockchain networks. While effective for identifying transaction patterns indicative of fraud, this analysis does not capture the semantics of transactions and is constrained to blockchain data. Consequently, preventive methods based on transaction graphs are inherently limited. In response to these limitations, we propose the Kosmosis approach, which aims to incrementally construct a knowledge graph as new blockchain and social media data become available. During construction, it aims to extract the semantics of transactions and connect blockchain addresses to their real-world entities by fusing blockchain and social media data in a knowledge graph. This enables novel preventive methods against rug pulls as a form of crypto asset fraud. To demonstrate the effectiveness and practical applicability of the Kosmosis approach, we examine a series of real-world rug pulls from 2021. Through this case study, we illustrate how Kosmosis can aid in identifying and preventing such fraudulent activities by leveraging the insights from the constructed knowledge graph.

Deep learning is a powerful set of techniques for detecting complex patterns in data. However, when the causal structure of that process is underspecified, deep learning models can be brittle, lacking robustness to shifts in the distribution of the data-generating process. In this paper, we turn to loop polarity analysis as a tool for specifying the causal structure of a data-generating process, in order to encode a more robust understanding of the relationship between system structure and system behavior within the deep learning pipeline. We use simulated epidemic data based on an SIR model to demonstrate how measuring the polarity of the different feedback loops that compose a system can lead to more robust inferences on the part of neural networks, improving the out-of-distribution performance of a deep learning model and infusing a system-dynamics-inspired approach into the machine learning development pipeline.
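For readers unfamiliar with the SIR model mentioned above, the following is a minimal sketch of how such epidemic data could be simulated. It uses forward-Euler integration of the standard SIR equations; the parameter values and the function name `simulate_sir` are illustrative assumptions, not the paper's setup. The comments mark the feedback loops as they are usually described in system dynamics.

```python
def simulate_sir(beta, gamma, s0, i0, r0, dt, steps):
    """Forward-Euler simulation of the standard SIR model.

    s, i, r are population fractions; beta is the transmission rate and
    gamma the recovery rate. Returns a list of (s, i, r) tuples.
    """
    s, i, r = s0, i0, r0
    trajectory = [(s, i, r)]
    for _ in range(steps):
        # Reinforcing loop: more infected people cause more new infections.
        # Balancing loop: new infections deplete the susceptible pool.
        new_infections = beta * s * i * dt
        # Balancing loop: recoveries drain the infected pool.
        new_recoveries = gamma * i * dt
        s -= new_infections
        i += new_infections - new_recoveries
        r += new_recoveries
        trajectory.append((s, i, r))
    return trajectory


if __name__ == "__main__":
    # Illustrative parameter values only.
    traj = simulate_sir(beta=0.3, gamma=0.1, s0=0.99, i0=0.01, r0=0.0,
                        dt=0.1, steps=1000)
    peak_infected = max(i for _, i, _ in traj)
    print("peak infected fraction:", peak_infected)
```

Changing `beta` or `gamma` between training and test data is one simple way to produce the kind of distribution shift the abstract refers to.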

The fusion of causal models with deep learning, which introduces increasingly intricate data sets such as the causal associations within images or between textual components, has surfaced as a focal research area. Nonetheless, the broadening of original causal concepts and theories to such complex, non-statistical data has been met with serious challenges. In response, our study proposes redefinitions of causal data into three distinct categories from the standpoint of causal structure and representation: definite data, semi-definite data, and indefinite data. Definite data chiefly pertains to statistical data used in conventional causal scenarios, while semi-definite data refers to a spectrum of data formats germane to deep learning, including time-series, images, text, and others. Indefinite data is an emergent research sphere that we infer from the progression of data forms. To comprehensively present these three data paradigms, we elaborate on their formal definitions, differences manifested in datasets, resolution pathways, and development of research. We summarize key tasks and achievements pertaining to definite and semi-definite data from myriad research undertakings, and present a roadmap for indefinite data, beginning with its current research conundrums. Lastly, we classify and scrutinize the key datasets presently utilized within these three paradigms.

As an effective strategy, data augmentation (DA) alleviates data scarcity scenarios where deep learning techniques may fail. It is widely applied in computer vision and was then introduced to natural language processing, where it achieves improvements in many tasks. One of the main focuses of DA methods is to improve the diversity of training data, thereby helping the model to better generalize to unseen testing data. In this survey, we frame DA methods into three categories based on the diversity of augmented data: paraphrasing, noising, and sampling. Our paper sets out to analyze DA methods in detail according to the above categories. Further, we also introduce their applications in NLP tasks as well as the challenges.
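As a rough illustration of the noising category, the sketch below applies two commonly used token-level perturbations, random deletion and random swap. The function names and the deletion probability are assumptions chosen for this example; the survey itself covers many more methods.

```python
import random


def random_deletion(tokens, p, rng):
    """Drop each token independently with probability p.

    Keeps at least one token so the augmented sentence is never empty
    (unless the input itself is empty).
    """
    if not tokens:
        return []
    kept = [t for t in tokens if rng.random() >= p]
    return kept if kept else [rng.choice(tokens)]


def random_swap(tokens, rng):
    """Swap two randomly chosen positions; the bag of words is unchanged."""
    out = list(tokens)
    if len(out) < 2:
        return out
    i, j = rng.sample(range(len(out)), 2)
    out[i], out[j] = out[j], out[i]
    return out


if __name__ == "__main__":
    rng = random.Random(0)  # seeded for repeatable augmentation
    sentence = "data augmentation improves the diversity of training data".split()
    print(random_deletion(sentence, p=0.2, rng=rng))
    print(random_swap(sentence, rng=rng))
```

Both perturbations change the surface form only slightly, which is why noising is generally treated as a low-diversity form of augmentation compared with paraphrasing or sampling.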

Current deep learning research is dominated by benchmark evaluation. A method is regarded as favorable if it empirically performs well on the dedicated test set. This mentality is seamlessly reflected in the resurfacing area of continual learning, where consecutively arriving sets of benchmark data are investigated. The core challenge is framed as protecting previously acquired representations from being catastrophically forgotten due to the iterative parameter updates. However, comparison of individual methods is still treated in isolation from real-world application and typically judged by monitoring accumulated test set performance. The closed world assumption remains predominant. It is assumed that during deployment a model is guaranteed to encounter data that stems from the same distribution as used for training. This poses a massive challenge as neural networks are well known to provide overconfident false predictions on unknown instances and break down in the face of corrupted data. In this work we argue that notable lessons from open set recognition, the identification of statistically deviating data outside of the observed dataset, and the adjacent field of active learning, where data is incrementally queried such that the expected performance gain is maximized, are frequently overlooked in the deep learning era. Based on these forgotten lessons, we propose a consolidated view to bridge continual learning, active learning and open set recognition in deep neural networks. Our results show that this not only benefits each individual paradigm, but also highlights the natural synergies in a common framework. We empirically demonstrate improvements when alleviating catastrophic forgetting, querying data in active learning, and selecting task orders, while exhibiting robust open world application where previously proposed methods fail.

Graph representation learning resurges as a trending research subject owing to the widespread use of deep learning for Euclidean data, which inspires various creative designs of neural networks in the non-Euclidean domain, particularly graphs. With the success of these graph neural networks (GNN) in the static setting, we approach further practical scenarios where the graph dynamically evolves. Existing approaches typically resort to node embeddings and use a recurrent neural network (RNN, broadly speaking) to regulate the embeddings and learn the temporal dynamics. These methods require the knowledge of a node in the full time span (including both training and testing) and are less applicable to the frequent change of the node set. In some extreme scenarios, the node sets at different time steps may completely differ. To resolve this challenge, we propose EvolveGCN, which adapts the graph convolutional network (GCN) model along the temporal dimension without resorting to node embeddings. The proposed approach captures the dynamism of the graph sequence by using an RNN to evolve the GCN parameters. Two architectures are considered for the parameter evolution. We evaluate the proposed approach on tasks including link prediction, edge classification, and node classification. The experimental results indicate a generally higher performance of EvolveGCN compared with related approaches. The code is available at \url{//github.com/IBM/EvolveGCN}.

While existing machine learning models have achieved great success for sentiment classification, they typically do not explicitly capture sentiment-oriented word interaction, which can lead to poor results for fine-grained analysis at the snippet level (a phrase or sentence). Factorization Machines provide a possible approach to learning element-wise interaction for recommender systems, but they are not directly applicable to our task due to their inability to model contexts and word sequences. In this work, we develop two Position-aware Factorization Machines which consider word interaction, context and position information. Such information is jointly encoded in a set of sentiment-oriented word interaction (SWI) vectors. Compared to traditional word embeddings, SWI vectors explicitly capture sentiment-oriented word interaction and simplify the parameter learning. Experimental results show that while they have comparable performance with state-of-the-art methods for document-level classification, they benefit the snippet/sentence-level sentiment analysis.
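For context, the sketch below shows the standard second-order Factorization Machine that the Position-aware variants build on; it does not implement the position-aware or context-aware extensions from the abstract. The parameter values are made up, and the function names are chosen for this example. The prediction is $w_0 + \sum_i w_i x_i + \sum_{i<j} \langle v_i, v_j \rangle x_i x_j$, and the second function uses the well-known reformulation that computes the pairwise term in time linear in the number of features.

```python
def fm_predict_naive(x, w0, w, v):
    """Second-order FM prediction with an explicit loop over feature pairs."""
    n = len(x)
    k = len(v[0])
    linear = sum(w[i] * x[i] for i in range(n))
    pairwise = 0.0
    for i in range(n):
        for j in range(i + 1, n):
            dot = sum(v[i][f] * v[j][f] for f in range(k))
            pairwise += dot * x[i] * x[j]
    return w0 + linear + pairwise


def fm_predict_fast(x, w0, w, v):
    """Same prediction via 0.5 * sum_f [(sum_i v_if x_i)^2 - sum_i (v_if x_i)^2]."""
    n = len(x)
    k = len(v[0])
    linear = sum(w[i] * x[i] for i in range(n))
    pairwise = 0.0
    for f in range(k):
        s = sum(v[i][f] * x[i] for i in range(n))
        s_sq = sum((v[i][f] * x[i]) ** 2 for i in range(n))
        pairwise += 0.5 * (s * s - s_sq)
    return w0 + linear + pairwise


if __name__ == "__main__":
    # Made-up features and parameters: 3 features, 2 latent factors.
    x = [1.0, 0.0, 2.0]
    w0 = 0.5
    w = [0.1, -0.2, 0.3]
    v = [[1.0, 2.0], [0.5, -1.0], [2.0, 0.0]]
    print(fm_predict_naive(x, w0, w, v), fm_predict_fast(x, w0, w, v))
```

Each feature's latent vector `v[i]` plays a role loosely analogous to the interaction vectors described above, although the plain model has no notion of word order or context.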