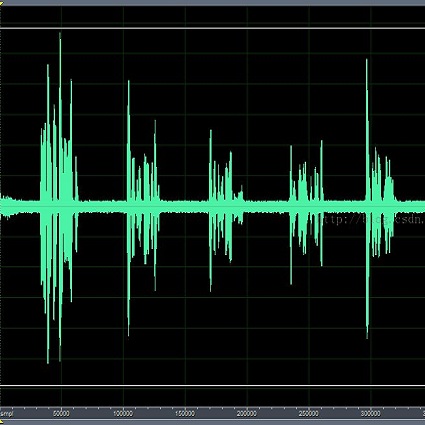

In this work, we extend our previously proposed offline SpatialNet for long-term streaming multichannel speech enhancement in both static and moving speaker scenarios. SpatialNet exploits spatial information, such as the spatial/steering direction of speech, for discriminating between target speech and interferences, and achieved outstanding performance. The core of SpatialNet is a narrow-band self-attention module used for learning the temporal dynamic of spatial vectors. Towards long-term streaming speech enhancement, we propose to replace the offline self-attention network with online networks that have linear inference complexity w.r.t signal length and meanwhile maintain the capability of learning long-term information. Three variants are developed based on (i) masked self-attention, (ii) Retention, a self-attention variant with linear inference complexity, and (iii) Mamba, a structured-state-space-based RNN-like network. Moreover, we investigate the length extrapolation ability of different networks, namely test on signals that are much longer than training signals, and propose a short-signal training plus long-signal fine-tuning strategy, which largely improves the length extrapolation ability of the networks within limited training time. Overall, the proposed online SpatialNet achieves outstanding speech enhancement performance for long audio streams, and for both static and moving speakers. The proposed method will be open-sourced in //github.com/Audio-WestlakeU/NBSS.

相關內容

The rapidly developing Large Vision Language Models (LVLMs) have shown notable capabilities on a range of multi-modal tasks, but still face the hallucination phenomena where the generated texts do not align with the given contexts, significantly restricting the usages of LVLMs. Most previous work detects and mitigates hallucination at the coarse-grained level or requires expensive annotation (e.g., labeling by proprietary models or human experts). To address these issues, we propose detecting and mitigating hallucinations in LVLMs via fine-grained AI feedback. The basic idea is that we generate a small-size sentence-level hallucination annotation dataset by proprietary models, whereby we train a hallucination detection model which can perform sentence-level hallucination detection, covering primary hallucination types (i.e., object, attribute, and relationship). Then, we propose a detect-then-rewrite pipeline to automatically construct preference dataset for training hallucination mitigating model. Furthermore, we propose differentiating the severity of hallucinations, and introducing a Hallucination Severity-Aware Direct Preference Optimization (HSA-DPO) for mitigating hallucination in LVLMs by incorporating the severity of hallucinations into preference learning. Extensive experiments demonstrate the effectiveness of our method.

In this paper, we propose a novel efficient digital twin (DT) data processing scheme to reduce service latency for multicast short video streaming. Particularly, DT is constructed to emulate and analyze user status for multicast group update and swipe feature abstraction. Then, a precise measurement model of DT data processing is developed to characterize the relationship among DT model size, user dynamics, and user clustering accuracy. A service latency model, consisting of DT data processing delay, video transcoding delay, and multicast transmission delay, is constructed by incorporating the impact of user clustering accuracy. Finally, a joint optimization problem of DT model size selection and bandwidth allocation is formulated to minimize the service latency. To efficiently solve this problem, a diffusion-based resource management algorithm is proposed, which utilizes the denoising technique to improve the action-generation process in the deep reinforcement learning algorithm. Simulation results based on the real-world dataset demonstrate that the proposed DT data processing scheme outperforms benchmark schemes in terms of service latency.

The training-conditional coverage performance of the conformal prediction is known to be empirically sound. Recently, there have been efforts to support this observation with theoretical guarantees. The training-conditional coverage bounds for jackknife+ and full-conformal prediction regions have been established via the notion of $(m,n)$-stability by Liang and Barber~[2023]. Although this notion is weaker than uniform stability, it is not clear how to evaluate it for practical models. In this paper, we study the training-conditional coverage bounds of full-conformal, jackknife+, and CV+ prediction regions from a uniform stability perspective which is known to hold for empirical risk minimization over reproducing kernel Hilbert spaces with convex regularization. We derive coverage bounds for finite-dimensional models by a concentration argument for the (estimated) predictor function, and compare the bounds with existing ones under ridge regression.

A sequence of predictions is calibrated if and only if it induces no swap regret to all down-stream decision tasks. We study the Maximum Swap Regret (MSR) of predictions for binary events: the swap regret maximized over all downstream tasks with bounded payoffs. Previously, the best online prediction algorithm for minimizing MSR is obtained by minimizing the K1 calibration error, which upper bounds MSR up to a constant factor. However, recent work (Qiao and Valiant, 2021) gives an ${\Omega}(T^{0.528})$ lower bound for the worst-case expected K1 calibration error incurred by any randomized algorithm in T rounds, presenting a barrier to achieving better rates for MSR. Several relaxations of MSR have been considered to overcome this barrier, via external regret (Kleinberg et al., 2023) and regret bounds depending polynomially on the number of actions in downstream tasks (Noarov et al., 2023; Roth and Shi, 2024). We show that the barrier can be surpassed without any relaxations: we give an efficient randomized prediction algorithm that guarantees $O(TlogT)$ expected MSR. We also discuss the economic utility of calibration by viewing MSR as a decision-theoretic calibration error metric and study its relationship to existing metrics.

Motivated by multi-domain Service Function Chain (SFC) orchestration, we define the Shortest-Longest Path (SLP) problem, prove its hardness, and design an efficient Fully Polynomial Time Approximation Scheme (FPTAS) using the scaling and rounding technique to compute an approximation solution with provable performance guarantee. The SLP problem and its solution algorithm have theoretical significance in multicriteria optimization and also have application potential in QoS routing and multi-domain network resource allocation scenarios.

We present a novel Graph-based debiasing Algorithm for Underreported Data (GRAUD) aiming at an efficient joint estimation of event counts and discovery probabilities across spatial or graphical structures. This innovative method provides a solution to problems seen in fields such as policing data and COVID-$19$ data analysis. Our approach avoids the need for strong priors typically associated with Bayesian frameworks. By leveraging the graph structures on unknown variables $n$ and $p$, our method debiases the under-report data and estimates the discovery probability at the same time. We validate the effectiveness of our method through simulation experiments and illustrate its practicality in one real-world application: police 911 calls-to-service data.

In this paper, we tackle the task of generating Prediction Intervals (PIs) in high-risk scenarios by proposing enhancements for learning Interval Type-2 (IT2) Fuzzy Logic Systems (FLSs) to address their learning challenges. In this context, we first provide extra design flexibility to the Karnik-Mendel (KM) and Nie-Tan (NT) center of sets calculation methods to increase their flexibility for generating PIs. These enhancements increase the flexibility of KM in the defuzzification stage while the NT in the fuzzification stage. To address the large-scale learning challenge, we transform the IT2-FLS's constraint learning problem into an unconstrained form via parameterization tricks, enabling the direct application of deep learning optimizers. To address the curse of dimensionality issue, we expand the High-Dimensional Takagi-Sugeno-Kang (HTSK) method proposed for type-1 FLS to IT2-FLSs, resulting in the HTSK2 approach. Additionally, we introduce a framework to learn the enhanced IT2-FLS with a dual focus, aiming for high precision and PI generation. Through exhaustive statistical results, we reveal that HTSK2 effectively addresses the dimensionality challenge, while the enhanced KM and NT methods improved learning and enhanced uncertainty quantification performances of IT2-FLSs.

Graph Neural Networks (GNNs) offer a compact and computationally efficient way to learn embeddings and classifications on graph data. GNN models are frequently large, making distributed minibatch training necessary. The primary contribution of this paper is new methods for reducing communication in the sampling step for distributed GNN training. Here, we propose a matrix-based bulk sampling approach that expresses sampling as a sparse matrix multiplication (SpGEMM) and samples multiple minibatches at once. When the input graph topology does not fit on a single device, our method distributes the graph and use communication-avoiding SpGEMM algorithms to scale GNN minibatch sampling, enabling GNN training on much larger graphs than those that can fit into a single device memory. When the input graph topology (but not the embeddings) fits in the memory of one GPU, our approach (1) performs sampling without communication, (2) amortizes the overheads of sampling a minibatch, and (3) can represent multiple sampling algorithms by simply using different matrix constructions. In addition to new methods for sampling, we introduce a pipeline that uses our matrix-based bulk sampling approach to provide end-to-end training results. We provide experimental results on the largest Open Graph Benchmark (OGB) datasets on $128$ GPUs, and show that our pipeline is $2.5\times$ faster than Quiver (a distributed extension to PyTorch-Geometric) on a $3$-layer GraphSAGE network. On datasets outside of OGB, we show a $8.46\times$ speedup on $128$ GPUs in per-epoch time. Finally, we show scaling when the graph is distributed across GPUs and scaling for both node-wise and layer-wise sampling algorithms.

In this paper, we investigate the retrieval-augmented generation (RAG) based on Knowledge Graphs (KGs) to improve the accuracy and reliability of Large Language Models (LLMs). Recent approaches suffer from insufficient and repetitive knowledge retrieval, tedious and time-consuming query parsing, and monotonous knowledge utilization. To this end, we develop a Hypothesis Knowledge Graph Enhanced (HyKGE) framework, which leverages LLMs' powerful reasoning capacity to compensate for the incompleteness of user queries, optimizes the interaction process with LLMs, and provides diverse retrieved knowledge. Specifically, HyKGE explores the zero-shot capability and the rich knowledge of LLMs with Hypothesis Outputs to extend feasible exploration directions in the KGs, as well as the carefully curated prompt to enhance the density and efficiency of LLMs' responses. Furthermore, we introduce the HO Fragment Granularity-aware Rerank Module to filter out noise while ensuring the balance between diversity and relevance in retrieved knowledge. Experiments on two Chinese medical multiple-choice question datasets and one Chinese open-domain medical Q&A dataset with two LLM turbos demonstrate the superiority of HyKGE in terms of accuracy and explainability.

In this paper, we propose a novel Feature Decomposition and Reconstruction Learning (FDRL) method for effective facial expression recognition. We view the expression information as the combination of the shared information (expression similarities) across different expressions and the unique information (expression-specific variations) for each expression. More specifically, FDRL mainly consists of two crucial networks: a Feature Decomposition Network (FDN) and a Feature Reconstruction Network (FRN). In particular, FDN first decomposes the basic features extracted from a backbone network into a set of facial action-aware latent features to model expression similarities. Then, FRN captures the intra-feature and inter-feature relationships for latent features to characterize expression-specific variations, and reconstructs the expression feature. To this end, two modules including an intra-feature relation modeling module and an inter-feature relation modeling module are developed in FRN. Experimental results on both the in-the-lab databases (including CK+, MMI, and Oulu-CASIA) and the in-the-wild databases (including RAF-DB and SFEW) show that the proposed FDRL method consistently achieves higher recognition accuracy than several state-of-the-art methods. This clearly highlights the benefit of feature decomposition and reconstruction for classifying expressions.