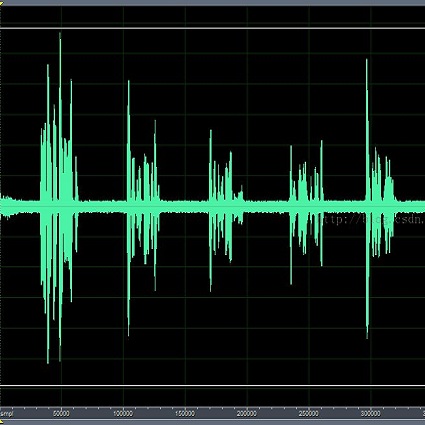

Within the area of speech enhancement, there is an ongoing interest in the creation of neural systems which explicitly aim to improve the perceptual quality of the processed audio. In concert with this is the topic of non-intrusive (i.e. without clean reference) speech quality prediction, for which neural networks are trained to predict human-assigned quality labels directly from distorted audio. When combined, these areas allow for the creation of powerful new speech enhancement systems which can leverage large real-world datasets of distorted audio, by taking inference of a pre-trained speech quality predictor as the sole loss function of the speech enhancement system. This paper aims to identify a potential pitfall with this approach, namely hallucinations which are introduced by the enhancement system 'tricking' the speech quality predictor.

Related content

We consider an asynchronous decentralized learning system, which consists of a network of connected devices trying to learn a machine learning model without any centralized parameter server. The users in the network have their own local training data, which is used for learning across all the nodes in the network. The learning method consists of two processes, evolving simultaneously without any necessary synchronization. The first process is the model update, where the users update their local model via a fixed number of stochastic gradient descent steps. The second process is model mixing, where the users communicate with each other via randomized gossiping to exchange their models and average them to reach consensus. In this work, we investigate the staleness criteria for such a system, which is a sufficient condition for convergence of individual user models. We show that for network scaling, i.e., when the number of user devices $n$ is very large, if the gossip capacity of individual users scales as $\Omega(\log n)$, we can guarantee the convergence of user models in finite time. Furthermore, we show that any distributed opportunistic scheme can guarantee bounded staleness only with $\Omega(n)$ scaling.
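The two processes described in this abstract (local model updates and gossip-based model mixing) can be illustrated with a toy simulation. This is a minimal sketch under stated assumptions, not the paper's algorithm: each user holds a scalar model and a hypothetical quadratic local loss, gradients are exact rather than stochastic, and the names `local_update`, `gossip_mix`, and `simulate` are invented for illustration.

```python
import random

def local_update(model, target, steps=5, lr=0.1):
    """Model update: a fixed number of gradient steps on the
    assumed local loss 0.5 * (model - target)**2."""
    for _ in range(steps):
        model -= lr * (model - target)
    return model

def gossip_mix(models, i, j):
    """Model mixing: users i and j exchange models and average them."""
    avg = 0.5 * (models[i] + models[j])
    models[i] = avg
    models[j] = avg

def simulate(targets, init=None, events=2000, p_update=0.5, seed=0):
    """Interleave updates and gossip at random, with no global synchronization.

    Each event wakes one random user, which either trains locally
    (probability p_update) or gossips with one random peer.
    """
    rng = random.Random(seed)
    n = len(targets)
    models = list(init) if init is not None else [0.0] * n
    for _ in range(events):
        i = rng.randrange(n)
        if rng.random() < p_update:
            models[i] = local_update(models[i], targets[i])
        else:
            j = rng.randrange(n - 1)
            if j >= i:
                j += 1  # pick a peer different from i
            gossip_mix(models, i, j)
    return models
```

Setting `p_update=0.0` isolates the mixing process: pairwise averaging preserves the network-wide mean, so all models drift toward the average of their starting values.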

Survival prediction is a complex ordinal regression task that aims to predict the survival coefficient ranking among a cohort of patients, typically achieved by analyzing patients' whole slide images. Existing deep learning approaches mainly adopt multiple instance learning or graph neural networks under weak supervision. Most of them are unable to uncover the diverse interactions between different types of biological entities (e.g., cell clusters and tissue blocks) across multiple scales, even though such interactions are crucial for patient survival prediction. In light of this, we propose a novel multi-scale heterogeneity-aware hypergraph representation framework. Specifically, our framework first constructs a multi-scale heterogeneity-aware hypergraph and assigns each node its biological entity type. It then mines diverse interactions between nodes on the graph structure to obtain a global representation. Experimental results demonstrate that our method outperforms state-of-the-art approaches on three benchmark datasets. Code is publicly available at //github.com/Hanminghao/H2GT.

With the rising prevalence of deepfakes, there is a growing interest in developing generalizable detection methods for various types of deepfakes. While effective in their specific modalities, traditional detection methods fall short in addressing the generalizability of detection across diverse cross-modal deepfakes. This paper aims to explicitly learn potential cross-modal correlation to enhance deepfake detection towards various generation scenarios. Our approach introduces a correlation distillation task, which models the inherent cross-modal correlation based on content information. This strategy helps to prevent the model from overfitting merely to audio-visual synchronization. Additionally, we present the Cross-Modal Deepfake Dataset (CMDFD), a comprehensive dataset with four generation methods to evaluate the detection of diverse cross-modal deepfakes. The experimental results on CMDFD and FakeAVCeleb datasets demonstrate the superior generalizability of our method over existing state-of-the-art methods. Our code and data can be found at //github.com/ljj898/CMDFD-Dataset-and-Deepfake-Detection.

Smishing, also known as SMS phishing, is a type of fraudulent communication in which an attacker disguises SMS communications to deceive a target into providing their sensitive data. Smishing attacks use a variety of tactics; however, they share the goal of stealing money or personally identifying information (PII) from a victim. In response to these attacks, a wide variety of anti-smishing tools have been developed to block or filter these communications. Despite this, the number of phishing attacks continues to rise. In this paper, we developed a test bed for measuring the effectiveness of popular anti-smishing tools against fresh smishing attacks. To collect fresh smishing data, we introduce Smishtank.com, a collaborative online resource for reporting and collecting smishing data sets. The SMS messages were validated by a security expert and an in-depth qualitative analysis was performed on the collected messages to provide further insights. To compare tool effectiveness, we experimented with 20 smishing and benign messages across 3 key segments of the SMS messaging delivery ecosystem. Our results revealed significant room for improvement in all 3 areas against our smishing set. Most anti-phishing apps and bulk messaging services didn't filter smishing messages beyond the carrier blocking. The 2 apps that blocked the most smishing messages also blocked 85-100% of benign messages. Finally, while carriers did not block any benign messages, they were only able to reach a 25-35% blocking rate for smishing messages. Our work provides insights into the performance of anti-smishing tools and the roles they play in the message blocking process. This paper enables the research community and industry to be better informed on the current state of anti-smishing technology on the SMS platform.

We introduce the task of human action anomaly detection (HAAD), which aims to identify anomalous motions in an unsupervised manner given only the pre-determined normal category of training action samples. Compared to prior human-related anomaly detection tasks which primarily focus on unusual events from videos, HAAD involves the learning of specific action labels to recognize semantically anomalous human behaviors. To address this task, we propose a normalizing flow (NF)-based detection framework where the sample likelihood is effectively leveraged to indicate anomalies. As action anomalies often occur in some specific body parts, in addition to the full-body action feature learning, we incorporate extra encoding streams into our framework for a finer modeling of body subsets. Our framework is thus multi-level to jointly discover global and local motion anomalies. Furthermore, to account for potentially jittery data introduced during recording, we apply the discrete cosine transform, converting the action samples from the temporal to the frequency domain to mitigate the issue of data instability. Extensive experimental results on two human action datasets demonstrate that our method outperforms the baselines formed by adapting state-of-the-art human activity AD approaches to our task of HAAD.
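The temporal-to-frequency conversion mentioned in this abstract can be illustrated on a single joint coordinate tracked over time. This is a minimal sketch, not the authors' pipeline: it implements an orthonormal DCT-II and its inverse in plain Python, and the `low_pass` helper (dropping high-frequency coefficients to suppress jitter) is an assumption added for illustration; the abstract does not say how the frequency-domain representation is used downstream.

```python
import math

def dct2(signal):
    """Orthonormal DCT-II of a 1-D sequence (time -> frequency)."""
    n = len(signal)
    coeffs = []
    for k in range(n):
        total = sum(x * math.cos(math.pi * (t + 0.5) * k / n)
                    for t, x in enumerate(signal))
        scale = math.sqrt(1.0 / n) if k == 0 else math.sqrt(2.0 / n)
        coeffs.append(scale * total)
    return coeffs

def idct2(coeffs):
    """Inverse of dct2 (frequency -> time)."""
    n = len(coeffs)
    signal = []
    for t in range(n):
        total = 0.0
        for k, c in enumerate(coeffs):
            scale = math.sqrt(1.0 / n) if k == 0 else math.sqrt(2.0 / n)
            total += scale * c * math.cos(math.pi * (t + 0.5) * k / n)
        signal.append(total)
    return signal

def low_pass(signal, keep):
    """Hypothetical smoothing step: keep only the first `keep` DCT coefficients."""
    coeffs = dct2(signal)
    truncated = [c if k < keep else 0.0 for k, c in enumerate(coeffs)]
    return idct2(truncated)
```

Because the transform is orthonormal, `idct2(dct2(x))` recovers `x` up to floating-point error, and a constant trajectory maps to a single non-zero (DC) coefficient.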

Graphs are important data representations for describing objects and their relationships, which appear in a wide diversity of real-world scenarios. As one of the critical problems in this area, graph generation considers learning the distributions of given graphs and generating more novel graphs. Owing to their wide range of applications, generative models for graphs have a rich history; however, they are traditionally hand-crafted and only capable of modeling a few statistical properties of graphs. Recent advances in deep generative models for graph generation are an important step towards improving the fidelity of generated graphs and pave the way for new kinds of applications. This article provides an extensive overview of the literature in the field of deep generative models for graph generation. Firstly, the formal definition of deep generative models for graph generation and the preliminary knowledge are provided. Secondly, taxonomies of deep generative models for both unconditional and conditional graph generation are proposed respectively; the existing works of each are compared and analyzed. After that, an overview of the evaluation metrics in this specific domain is provided. Finally, the applications that deep graph generation enables are summarized and five promising future research directions are highlighted.

Advances in artificial intelligence often stem from the development of new environments that abstract real-world situations into a form where research can be done conveniently. This paper contributes such an environment based on ideas inspired by elementary Microeconomics. Agents learn to produce resources in a spatially complex world, trade them with one another, and consume those that they prefer. We show that the emergent production, consumption, and pricing behaviors respond to environmental conditions in the directions predicted by supply and demand shifts in Microeconomics. We also demonstrate settings where the agents' emergent prices for goods vary over space, reflecting the local abundance of goods. After the price disparities emerge, some agents then discover a niche of transporting goods between regions with different prevailing prices -- a profitable strategy because they can buy goods where they are cheap and sell them where they are expensive. Finally, in a series of ablation experiments, we investigate how choices in the environmental rewards, bartering actions, agent architecture, and ability to consume tradable goods can either aid or inhibit the emergence of this economic behavior. This work is part of the environment development branch of a research program that aims to build human-like artificial general intelligence through multi-agent interactions in simulated societies. By exploring which environment features are needed for the basic phenomena of elementary microeconomics to emerge automatically from learning, we arrive at an environment that differs from those studied in prior multi-agent reinforcement learning work along several dimensions. For example, the model incorporates heterogeneous tastes and physical abilities, and agents negotiate with one another as a grounded form of communication.

Inspired by the human cognitive system, attention is a mechanism that imitates the human cognitive awareness about specific information, amplifying critical details to focus more on the essential aspects of data. Deep learning has employed attention to boost performance for many applications. Interestingly, the same attention design can suit processing different data modalities and can easily be incorporated into large networks. Furthermore, multiple complementary attention mechanisms can be incorporated in one network. Hence, attention techniques have become extremely attractive. However, the literature lacks a comprehensive survey specific to attention techniques to guide researchers in employing attention in their deep models. Note that, besides being demanding in terms of training data and computational resources, transformers only cover a single category in self-attention out of the many categories available. We fill this gap and provide an in-depth survey of 50 attention techniques, categorizing them by their most prominent features. We initiate our discussion by introducing the fundamental concepts behind the success of the attention mechanism. Next, we furnish some essentials such as the strengths and limitations of each attention category, describe their fundamental building blocks, basic formulations with primary usage, and applications specifically for computer vision. We also discuss the challenges and open questions related to the attention mechanism in general. Finally, we recommend possible future research directions for deep attention.

Knowledge graph embedding, which aims to represent entities and relations as low dimensional vectors (or matrices, tensors, etc.), has been shown to be a powerful technique for predicting missing links in knowledge graphs. Existing knowledge graph embedding models mainly focus on modeling relation patterns such as symmetry/antisymmetry, inversion, and composition. However, many existing approaches fail to model semantic hierarchies, which are common in real-world applications. To address this challenge, we propose a novel knowledge graph embedding model---namely, Hierarchy-Aware Knowledge Graph Embedding (HAKE)---which maps entities into the polar coordinate system. HAKE is inspired by the fact that concentric circles in the polar coordinate system can naturally reflect the hierarchy. Specifically, the radial coordinate aims to model entities at different levels of the hierarchy, and entities with smaller radii are expected to be at higher levels; the angular coordinate aims to distinguish entities at the same level of the hierarchy, and these entities are expected to have roughly the same radii but different angles. Experiments demonstrate that HAKE can effectively model the semantic hierarchies in knowledge graphs, and significantly outperforms existing state-of-the-art methods on benchmark datasets for the link prediction task.
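The polar-coordinate idea in this abstract can be made concrete with a small distance function. This is a hedged sketch: the abstract does not state the scoring function, so the code assumes a modulus term (radii compared after scaling by the relation) plus a phase term (angles compared through a sine), combined with a hypothetical weight `lam`. The dictionary layout and the function names are illustrative choices, not the authors' implementation.

```python
import math

def modulus_distance(h_mod, r_mod, t_mod):
    """Radial part: the relation scales the head's radius toward the tail's radius."""
    return math.sqrt(sum((h * r - t) ** 2
                         for h, r, t in zip(h_mod, r_mod, t_mod)))

def phase_distance(h_phase, r_phase, t_phase):
    """Angular part: separates entities that sit at the same hierarchy level."""
    return sum(abs(math.sin((h + r - t) / 2.0))
               for h, r, t in zip(h_phase, r_phase, t_phase))

def hake_style_distance(head, rel, tail, lam=0.5):
    """Assumed combined distance; a smaller value means a more plausible triple.

    Each argument is a dict with "mod" (radii) and "phase" (angles) lists.
    """
    d_mod = modulus_distance(head["mod"], rel["mod"], tail["mod"])
    d_phase = phase_distance(head["phase"], rel["phase"], tail["phase"])
    return d_mod + lam * d_phase
```

For example, a parent with radius 1.0 and a child with radius 2.0 are matched exactly by a relation with modulus 2.0, while two entities with equal radii but different angles are separated only by the phase term.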

Multi-relation Question Answering is a challenging task, because it requires elaborate analysis of questions and reasoning over multiple fact triples in a knowledge base. In this paper, we present a novel model called Interpretable Reasoning Network that employs an interpretable, hop-by-hop reasoning process for question answering. The model dynamically decides which part of an input question should be analyzed at each hop; predicts a relation that corresponds to the current parsed results; utilizes the predicted relation to update the question representation and the state of the reasoning process; and then drives the next-hop reasoning. Experiments show that our model yields state-of-the-art results on two datasets. More interestingly, the model can offer traceable and observable intermediate predictions for reasoning analysis and failure diagnosis.