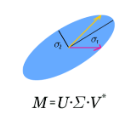

The Levin method is a classical technique for evaluating oscillatory integrals that operates by solving a certain ordinary differential equation in order to construct an antiderivative of the integrand. It was long believed that the method suffers from ``low-frequency breakdown,'' meaning that the accuracy of the computed integral deteriorates when the integrand is only slowly oscillating. Recently presented experimental evidence suggests that, when a Chebyshev spectral method is used to discretize the differential equation and the resulting linear system is solved via a truncated singular value decomposition, no such phenomenon is observed. Here, we provide a proof that this is, in fact, the case, and, remarkably, our proof applies even in the presence of saddle points. We also observe that the absence of low-frequency breakdown makes the Levin method suitable for use as the basis of an adaptive integration method. We describe extensive numerical experiments demonstrating that the resulting adaptive Levin method can efficiently and accurately evaluate a large class of oscillatory integrals, including many with saddle points.

相關內容

This paper studies adaptive least-squares finite element methods for convection-dominated diffusion-reaction problems. The least-squares methods are based on the first-order system of the primal and dual variables with various ways of imposing outflow boundary conditions. The coercivity of the homogeneous least-squares functionals are established, and the a priori error estimates of the least-squares methods are obtained in a norm that incorporates the streamline derivative. All methods have the same convergence rate provided that meshes in the layer regions are fine enough. To increase computational accuracy and reduce computational cost, adaptive least-squares methods are implemented and numerical results are presented for some test problems.

Visualization plays a vital role in making sense of complex network data. Recent studies have shown the potential of using extended reality (XR) for the immersive exploration of networks. The additional depth cues offered by XR help users perform better in certain tasks when compared to using traditional desktop setups. However, prior works on immersive network visualization rely on mostly static graph layouts to present the data to the user. This poses a problem since there is no optimal layout for all possible tasks. The choice of layout heavily depends on the type of network and the task at hand. We introduce a multi-layout approach that allows users to effectively explore hierarchical network data in immersive space. The resulting system leverages different layout techniques and interactions to efficiently use the available space in VR and provide an optimal view of the data depending on the task and the level of detail required to solve it. To evaluate our approach, we have conducted a user study comparing it against the state of the art for immersive network visualization. Participants performed tasks at varying spatial scopes. The results show that our approach outperforms the baseline in spatially focused scenarios as well as when the whole network needs to be considered.

Multi-agent reinforcement learning typically suffers from the problem of sample inefficiency, where learning suitable policies involves the use of many data samples. Learning from external demonstrators is a possible solution that mitigates this problem. However, most prior approaches in this area assume the presence of a single demonstrator. Leveraging multiple knowledge sources (i.e., advisors) with expertise in distinct aspects of the environment could substantially speed up learning in complex environments. This paper considers the problem of simultaneously learning from multiple independent advisors in multi-agent reinforcement learning. The approach leverages a two-level Q-learning architecture, and extends this framework from single-agent to multi-agent settings. We provide principled algorithms that incorporate a set of advisors by both evaluating the advisors at each state and subsequently using the advisors to guide action selection. We also provide theoretical convergence and sample complexity guarantees. Experimentally, we validate our approach in three different test-beds and show that our algorithms give better performances than baselines, can effectively integrate the combined expertise of different advisors, and learn to ignore bad advice.

Gene-disease associations are fundamental for understanding disease etiology and developing effective interventions and treatments. Identifying genes not yet associated with a disease due to a lack of studies is a challenging task in which prioritization based on prior knowledge is an important element. The computational search for new candidate disease genes may be eased by positive-unlabeled learning, the machine learning setting in which only a subset of instances are labeled as positive while the rest of the data set is unlabeled. In this work, we propose a set of effective network-based features to be used in a novel Markov diffusion-based multi-class labeling strategy for putative disease gene discovery. The performances of the new labeling algorithm and the effectiveness of the proposed features have been tested on ten different disease data sets using three machine learning algorithms. The new features have been compared against classical topological and functional/ontological features and a set of network- and biological-derived features already used in gene discovery tasks. The predictive power of the integrated methodology in searching for new disease genes has been found to be competitive against state-of-the-art algorithms.

Unsupervised domain adaptation has recently emerged as an effective paradigm for generalizing deep neural networks to new target domains. However, there is still enormous potential to be tapped to reach the fully supervised performance. In this paper, we present a novel active learning strategy to assist knowledge transfer in the target domain, dubbed active domain adaptation. We start from an observation that energy-based models exhibit free energy biases when training (source) and test (target) data come from different distributions. Inspired by this inherent mechanism, we empirically reveal that a simple yet efficient energy-based sampling strategy sheds light on selecting the most valuable target samples than existing approaches requiring particular architectures or computation of the distances. Our algorithm, Energy-based Active Domain Adaptation (EADA), queries groups of targe data that incorporate both domain characteristic and instance uncertainty into every selection round. Meanwhile, by aligning the free energy of target data compact around the source domain via a regularization term, domain gap can be implicitly diminished. Through extensive experiments, we show that EADA surpasses state-of-the-art methods on well-known challenging benchmarks with substantial improvements, making it a useful option in the open world. Code is available at //github.com/BIT-DA/EADA.

Unsupervised domain adaptation (UDA) methods for person re-identification (re-ID) aim at transferring re-ID knowledge from labeled source data to unlabeled target data. Although achieving great success, most of them only use limited data from a single-source domain for model pre-training, making the rich labeled data insufficiently exploited. To make full use of the valuable labeled data, we introduce the multi-source concept into UDA person re-ID field, where multiple source datasets are used during training. However, because of domain gaps, simply combining different datasets only brings limited improvement. In this paper, we try to address this problem from two perspectives, \ie{} domain-specific view and domain-fusion view. Two constructive modules are proposed, and they are compatible with each other. First, a rectification domain-specific batch normalization (RDSBN) module is explored to simultaneously reduce domain-specific characteristics and increase the distinctiveness of person features. Second, a graph convolutional network (GCN) based multi-domain information fusion (MDIF) module is developed, which minimizes domain distances by fusing features of different domains. The proposed method outperforms state-of-the-art UDA person re-ID methods by a large margin, and even achieves comparable performance to the supervised approaches without any post-processing techniques.

Invariant approaches have been remarkably successful in tackling the problem of domain generalization, where the objective is to perform inference on data distributions different from those used in training. In our work, we investigate whether it is possible to leverage domain information from the unseen test samples themselves. We propose a domain-adaptive approach consisting of two steps: a) we first learn a discriminative domain embedding from unsupervised training examples, and b) use this domain embedding as supplementary information to build a domain-adaptive model, that takes both the input as well as its domain into account while making predictions. For unseen domains, our method simply uses few unlabelled test examples to construct the domain embedding. This enables adaptive classification on any unseen domain. Our approach achieves state-of-the-art performance on various domain generalization benchmarks. In addition, we introduce the first real-world, large-scale domain generalization benchmark, Geo-YFCC, containing 1.1M samples over 40 training, 7 validation, and 15 test domains, orders of magnitude larger than prior work. We show that the existing approaches either do not scale to this dataset or underperform compared to the simple baseline of training a model on the union of data from all training domains. In contrast, our approach achieves a significant improvement.

In many important graph data processing applications the acquired information includes both node features and observations of the graph topology. Graph neural networks (GNNs) are designed to exploit both sources of evidence but they do not optimally trade-off their utility and integrate them in a manner that is also universal. Here, universality refers to independence on homophily or heterophily graph assumptions. We address these issues by introducing a new Generalized PageRank (GPR) GNN architecture that adaptively learns the GPR weights so as to jointly optimize node feature and topological information extraction, regardless of the extent to which the node labels are homophilic or heterophilic. Learned GPR weights automatically adjust to the node label pattern, irrelevant on the type of initialization, and thereby guarantee excellent learning performance for label patterns that are usually hard to handle. Furthermore, they allow one to avoid feature over-smoothing, a process which renders feature information nondiscriminative, without requiring the network to be shallow. Our accompanying theoretical analysis of the GPR-GNN method is facilitated by novel synthetic benchmark datasets generated by the so-called contextual stochastic block model. We also compare the performance of our GNN architecture with that of several state-of-the-art GNNs on the problem of node-classification, using well-known benchmark homophilic and heterophilic datasets. The results demonstrate that GPR-GNN offers significant performance improvement compared to existing techniques on both synthetic and benchmark data.

Behaviors of the synthetic characters in current military simulations are limited since they are generally generated by rule-based and reactive computational models with minimal intelligence. Such computational models cannot adapt to reflect the experience of the characters, resulting in brittle intelligence for even the most effective behavior models devised via costly and labor-intensive processes. Observation-based behavior model adaptation that leverages machine learning and the experience of synthetic entities in combination with appropriate prior knowledge can address the issues in the existing computational behavior models to create a better training experience in military training simulations. In this paper, we introduce a framework that aims to create autonomous synthetic characters that can perform coherent sequences of believable behavior while being aware of human trainees and their needs within a training simulation. This framework brings together three mutually complementary components. The first component is a Unity-based simulation environment - Rapid Integration and Development Environment (RIDE) - supporting One World Terrain (OWT) models and capable of running and supporting machine learning experiments. The second is Shiva, a novel multi-agent reinforcement and imitation learning framework that can interface with a variety of simulation environments, and that can additionally utilize a variety of learning algorithms. The final component is the Sigma Cognitive Architecture that will augment the behavior models with symbolic and probabilistic reasoning capabilities. We have successfully created proof-of-concept behavior models leveraging this framework on realistic terrain as an essential step towards bringing machine learning into military simulations.

Attributed graph clustering is challenging as it requires joint modelling of graph structures and node attributes. Recent progress on graph convolutional networks has proved that graph convolution is effective in combining structural and content information, and several recent methods based on it have achieved promising clustering performance on some real attributed networks. However, there is limited understanding of how graph convolution affects clustering performance and how to properly use it to optimize performance for different graphs. Existing methods essentially use graph convolution of a fixed and low order that only takes into account neighbours within a few hops of each node, which underutilizes node relations and ignores the diversity of graphs. In this paper, we propose an adaptive graph convolution method for attributed graph clustering that exploits high-order graph convolution to capture global cluster structure and adaptively selects the appropriate order for different graphs. We establish the validity of our method by theoretical analysis and extensive experiments on benchmark datasets. Empirical results show that our method compares favourably with state-of-the-art methods.