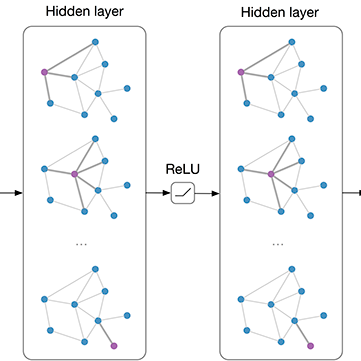

The disaggregated and hierarchical architecture of advanced RAN presents significant challenges in efficiently placing baseband functions and user plane functions in conjunction with Multi-Access Edge Computing (MEC) to accommodate diverse 5G services. Therefore, this paper proposes NetMind, a novel approach that leverages Deep Reinforcement Learning (DRL) to determine function placement strategies in RANs with diverse topologies, with the aim of minimizing power consumption. NetMind formulates the function placement problem as a maze-solving task, enabling a Markov Decision Process with standardized action space scales across different networks. Additionally, a Graph Convolutional Network (GCN) based encoding mechanism is introduced, allowing features from different networks to be aggregated into a single RL agent. This improves the RL agent's generalization capability and minimizes the negative impact of retraining on power consumption. In an example with three sub-networks, NetMind achieves comparable performance to traditional methods that require a dedicated DRL agent for each network, resulting in a 70% reduction in training costs. Furthermore, it demonstrates a substantial 32.76% improvement in power savings and a 41.67% increase in service stability compared to benchmarks from the existing literature.
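To illustrate how a GCN-based encoder can map networks of different sizes to features of one fixed size, the sketch below applies a single standard graph-convolution step followed by mean pooling. It is a minimal illustration, not NetMind's actual encoder: the abstract does not specify the layer design, so the propagation rule, the mean pooling, and the names `gcn_layer`, `encode_graph`, and `matmul` are all assumptions made here.

```python
import math

def matmul(a, b):
    """Plain dense matrix product for lists of lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]

def gcn_layer(adj, feats, weight):
    """One graph-convolution step: ReLU(D^-1/2 (A + I) D^-1/2 X W)."""
    n = len(adj)
    # Add self-loops so each node keeps its own features.
    a_hat = [[adj[i][j] + (1.0 if i == j else 0.0) for j in range(n)]
             for i in range(n)]
    # Symmetric normalization by node degree (always >= 1 due to self-loops).
    deg = [sum(row) for row in a_hat]
    norm = [[a_hat[i][j] / math.sqrt(deg[i] * deg[j]) for j in range(n)]
            for i in range(n)]
    # Aggregate neighbor features, apply the linear map, then ReLU.
    out = matmul(matmul(norm, feats), weight)
    return [[max(0.0, v) for v in row] for row in out]

def encode_graph(adj, feats, weight):
    """Mean-pool node embeddings into one fixed-size vector per network."""
    h = gcn_layer(adj, feats, weight)
    return [sum(row[j] for row in h) / len(h) for j in range(len(h[0]))]

# Hypothetical toy topologies: a 3-node chain and a 5-node ring.
weight = [[0.5, -0.2, 0.1, 0.3], [0.4, 0.6, -0.7, 0.2]]
chain = [[0, 1, 0], [1, 0, 1], [0, 1, 0]]
ring = [[1 if abs(i - j) in (1, 4) else 0 for j in range(5)] for i in range(5)]
chain_vec = encode_graph(chain, [[1.0, 0.0]] * 3, weight)
ring_vec = encode_graph(ring, [[1.0, 0.5]] * 5, weight)
```

Both `chain_vec` and `ring_vec` have four entries even though the two graphs differ in node count, which is the property that lets one agent consume features from several networks.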

Related content

Despite progress in video-language modeling, the computational challenge of interpreting long-form videos in response to task-specific linguistic queries persists, largely due to the complexity of high-dimensional video data and the misalignment between language and visual cues over space and time. To tackle this issue, we introduce a novel approach called Language-guided Spatial-Temporal Prompt Learning (LSTP). This approach features two key components: a Temporal Prompt Sampler (TPS) with optical flow prior that leverages temporal information to efficiently extract relevant video content, and a Spatial Prompt Solver (SPS) that adeptly captures the intricate spatial relationships between visual and textual elements. By harmonizing TPS and SPS with a cohesive training strategy, our framework significantly enhances computational efficiency, temporal understanding, and spatial-temporal alignment. Empirical evaluations across two challenging tasks--video question answering and temporal question grounding in videos--using a variety of video-language pretrainings (VLPs) and large language models (LLMs) demonstrate the superior performance, speed, and versatility of our proposed LSTP paradigm.

Recent embedding-based methods have achieved great successes in exploiting entity alignment from knowledge graph (KG) embeddings of multiple modalities. In this paper, we study embedding-based entity alignment (EEA) from a perspective of generative models. We show that EEA shares similarities with typical generative models and prove the effectiveness of the recently developed generative adversarial network (GAN)-based EEA methods theoretically. We then reveal that their incomplete objective limits the capacity on both entity alignment and entity synthesis (i.e., generating new entities). We mitigate this problem by introducing a generative EEA (GEEA) framework with the proposed mutual variational autoencoder (M-VAE) as the generative model. M-VAE enables entity conversion between KGs and generation of new entities from random noise vectors. We demonstrate the power of GEEA with theoretical analysis and empirical experiments on both entity alignment and entity synthesis tasks.

Despite making significant progress in multi-modal tasks, current Multi-modal Large Language Models (MLLMs) still face the challenge of hallucinations, which may lead to harmful consequences. Therefore, evaluating MLLMs' hallucinations is becoming increasingly important in model improvement and practical application deployment. Previous works are limited by high evaluation costs (e.g., relying on humans or advanced LLMs) and insufficient evaluation dimensions (e.g., types of tasks and hallucinations). In this paper, we propose an LLM-free multi-dimensional benchmark, AMBER, which can be used to evaluate both generative and discriminative tasks, including existence, attribute, and relation hallucination. Based on AMBER, we design a low-cost and efficient evaluation pipeline. Additionally, we conduct a comprehensive evaluation and detailed analysis of mainstream MLLMs including GPT-4V(ision), and also give guideline suggestions for mitigating hallucinations. The data and code of AMBER are available at //github.com/junyangwang0410/AMBER.

We investigate the replay buffer in rehearsal-based approaches for graph continual learning (GCL) methods. Existing rehearsal-based GCL methods select the most representative nodes for each class and store them in a replay buffer for later use in training subsequent tasks. However, we discovered that considering only the class representativeness of each replayed node causes the replayed nodes to concentrate around the center of each class, incurring a potential risk of overfitting to nodes residing in those regions, which aggravates catastrophic forgetting. Moreover, as the rehearsal-based approach heavily relies on a few replayed nodes to retain knowledge obtained from previous tasks, involving replayed nodes that have irrelevant neighbors in model training may have a significant detrimental impact on model performance. In this paper, we propose a GCL model named DSLR. Specifically, we devise a coverage-based diversity (CD) approach to consider both the class representativeness and the diversity within each class of the replayed nodes. Moreover, we adopt graph structure learning (GSL) to ensure that the replayed nodes are connected to truly informative neighbors. Extensive experimental results demonstrate the effectiveness and efficiency of DSLR. Our source code is available at //github.com/seungyoon-Choi/DSLR_official.

We present a novel framework, called FrameNeRF, designed to apply off-the-shelf fast high-fidelity NeRF models with fast training speed and high rendering quality for few-shot novel view synthesis tasks. The training stability of fast high-fidelity models is typically constrained to dense views, making them unsuitable for few-shot novel view synthesis tasks. To address this limitation, we utilize a regularization model as a data generator to produce dense views from sparse inputs, facilitating subsequent training of fast high-fidelity models. Since these dense views are pseudo ground truth generated by the regularization model, original sparse images are then used to fine-tune the fast high-fidelity model. This process helps the model learn realistic details and correct artifacts introduced in earlier stages. By leveraging an off-the-shelf regularization model and a fast high-fidelity model, our approach achieves state-of-the-art performance across various benchmark datasets.

State-of-the-art pre-trained image models predominantly adopt a two-stage approach: initial unsupervised pre-training on large-scale datasets followed by task-specific fine-tuning using Cross-Entropy loss (CE). However, it has been demonstrated that CE can compromise model generalization and stability. While recent works employing contrastive learning address some of these limitations by enhancing the quality of embeddings and producing better decision boundaries, they often overlook the importance of hard negative mining and rely on resource-intensive and slow training using large sample batches. To counter these issues, we introduce a novel approach named CLCE, which integrates Label-Aware Contrastive Learning with CE. Our approach not only maintains the strengths of both loss functions but also leverages hard negative mining in a synergistic way to enhance performance. Experimental results demonstrate that CLCE significantly outperforms CE in Top-1 accuracy across twelve benchmarks, achieving gains of up to 3.52% in few-shot learning scenarios and 3.41% in transfer learning settings with the BEiT-3 model. Importantly, our proposed CLCE approach effectively mitigates the dependency of contrastive learning on large batch sizes such as 4096 samples per batch, a limitation that has previously constrained the application of contrastive learning in budget-limited hardware environments.
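As a rough illustration of combining a label-aware contrastive term with CE, the sketch below mixes mean cross-entropy with a standard supervised contrastive loss. It is not the CLCE objective itself: the abstract gives no formula, so the convex weighting `lam`, the temperature `tau`, and all function names are assumptions, and the hard negative mining that CLCE adds is deliberately left out.

```python
import math

def cross_entropy(logits, label):
    """Numerically stable softmax cross-entropy for one sample."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(v - m) for v in logits))
    return log_z - logits[label]

def label_aware_contrastive(embs, labels, tau=0.5):
    """Standard supervised contrastive loss (assumes nonzero embeddings)."""
    unit = []
    for e in embs:
        norm = math.sqrt(sum(v * v for v in e))
        unit.append([v / norm for v in e])
    n, total, count = len(unit), 0.0, 0
    for i in range(n):
        positives = [j for j in range(n) if j != i and labels[j] == labels[i]]
        if not positives:
            continue  # anchors without a same-label partner are skipped
        # Temperature-scaled cosine similarity to every other sample.
        sims = {j: sum(a * b for a, b in zip(unit[i], unit[j])) / tau
                for j in range(n) if j != i}
        m = max(sims.values())
        log_denom = m + math.log(sum(math.exp(s - m) for s in sims.values()))
        total += -sum(sims[p] - log_denom for p in positives) / len(positives)
        count += 1
    return total / count if count else 0.0

def combined_loss(logits, embs, labels, lam=0.5, tau=0.5):
    """Hypothetical weighting: (1 - lam) * CE + lam * contrastive."""
    ce = sum(cross_entropy(l, y) for l, y in zip(logits, labels)) / len(labels)
    return (1 - lam) * ce + lam * label_aware_contrastive(embs, labels, tau)

# Toy batch: same labels, embeddings clustered by class vs. mixed up.
labels = [0, 0, 1, 1]
clustered = [[1.0, 0.0], [1.0, 0.1], [0.0, 1.0], [0.1, 1.0]]
mixed = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.1], [0.1, 1.0]]
loss_clustered = label_aware_contrastive(clustered, labels)
loss_mixed = label_aware_contrastive(mixed, labels)
```

In this toy batch the contrastive term is lower when same-label embeddings sit close together, which is the behavior the CE term alone does not directly reward.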

Recent advances in AI combine large language models (LLMs) with vision encoders that bring forward unprecedented technical capabilities to leverage for a wide range of healthcare applications. Focusing on the domain of radiology, vision-language models (VLMs) achieve good performance results for tasks such as generating radiology findings based on a patient's medical image, or answering visual questions (e.g., 'Where are the nodules in this chest X-ray?'). However, the clinical utility of potential applications of these capabilities is currently underexplored. We engaged in an iterative, multidisciplinary design process to envision clinically relevant VLM interactions, and co-designed four VLM use concepts: Draft Report Generation, Augmented Report Review, Visual Search and Querying, and Patient Imaging History Highlights. We studied these concepts with 13 radiologists and clinicians who assessed the VLM concepts as valuable, yet articulated many design considerations. Reflecting on our findings, we discuss implications for integrating VLM capabilities in radiology, and for healthcare AI more generally.

The increasing complexity of Industry 4.0 systems brings new challenges regarding predictive maintenance tasks such as fault detection and diagnosis. A corresponding and realistic setting includes multi-source data streams from different modalities, such as sensor measurement time series, machine images, textual maintenance reports, etc. These heterogeneous multimodal streams also differ in their acquisition frequency, may embed temporally unaligned information and can be arbitrarily long, depending on the considered system and task. Whereas multimodal fusion has been largely studied in a static setting, to the best of our knowledge, there exists no previous work considering arbitrarily long multimodal streams together with related tasks such as prediction across time. Thus, in this paper, we first formalize this paradigm of heterogeneous multimodal learning in a streaming setting as a new one. To tackle this challenge, we propose StreaMulT, a Streaming Multimodal Transformer relying on cross-modal attention and on a memory bank to process arbitrarily long input sequences at training time and run in a streaming way at inference. StreaMulT improves the state-of-the-art metrics on the CMU-MOSEI dataset for the Multimodal Sentiment Analysis task, while being able to deal with much longer inputs than other multimodal models. The conducted experiments eventually highlight the importance of the textual embedding layer, questioning recent improvements in Multimodal Sentiment Analysis benchmarks.

With the capability of modeling bidirectional contexts, denoising autoencoding based pretraining like BERT achieves better performance than pretraining approaches based on autoregressive language modeling. However, relying on corrupting the input with masks, BERT neglects dependency between the masked positions and suffers from a pretrain-finetune discrepancy. In light of these pros and cons, we propose XLNet, a generalized autoregressive pretraining method that (1) enables learning bidirectional contexts by maximizing the expected likelihood over all permutations of the factorization order and (2) overcomes the limitations of BERT thanks to its autoregressive formulation. Furthermore, XLNet integrates ideas from Transformer-XL, the state-of-the-art autoregressive model, into pretraining. Empirically, XLNet outperforms BERT on 20 tasks, often by a large margin, and achieves state-of-the-art results on 18 tasks including question answering, natural language inference, sentiment analysis, and document ranking.
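For reference, the permutation-based objective described in point (1) can be written as follows, where $\mathcal{Z}_T$ denotes the set of all permutations of the index sequence $[1, \dots, T]$, and $z_t$ and $\mathbf{z}_{<t}$ denote the $t$-th element and the first $t-1$ elements of a permutation $\mathbf{z}$. The notation is introduced here for illustration and does not appear in the abstract itself.

```latex
\[
\max_{\theta} \;
\mathbb{E}_{\mathbf{z} \sim \mathcal{Z}_T}
\left[ \sum_{t=1}^{T} \log p_{\theta}\!\left( x_{z_t} \mid \mathbf{x}_{\mathbf{z}_{<t}} \right) \right]
\]
```

Because the expectation runs over all factorization orders, each position is trained to be predicted from every possible subset of the other positions, which is how an autoregressive model can capture context on both sides without masking the input.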

High spectral dimensionality and the shortage of annotations make hyperspectral image (HSI) classification a challenging problem. Recent studies suggest that convolutional neural networks can learn discriminative spatial features, which play a paramount role in HSI interpretation. However, most of these methods ignore the distinctive spectral-spatial characteristics of hyperspectral data. In addition, a large amount of unlabeled data remains an unexploited gold mine for efficient data use. Therefore, we proposed an integration of generative adversarial networks (GANs) and probabilistic graphical models for HSI classification. Specifically, we used a spectral-spatial generator and a discriminator to identify land cover categories of hyperspectral cubes. Moreover, to take advantage of a large amount of unlabeled data, we adopted a conditional random field to refine the preliminary classification results generated by GANs. Experimental results obtained using two commonly studied datasets demonstrate that the proposed framework achieved encouraging classification accuracy using a small amount of training data.