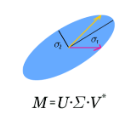

We characterize the first-order sensitivity of approximately recovering a low-rank matrix from linear measurements, a standard problem in compressed sensing. A special case covered by our analysis is approximating an incomplete matrix by a low-rank matrix. We give an algorithm for computing the associated condition number and demonstrate experimentally how the number of linear measurements affects it. In addition, we study the condition number of the rank-r matrix approximation problem. This condition number measures, in the Frobenius norm, how much an infinitesimal perturbation of an arbitrary input matrix is amplified in the movement of its best rank-r approximation. We give an explicit formula for the condition number, which shows that it depends on the relative singular value gap between the rth and (r+1)th singular values of the input matrix.
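The dependence on the singular value gap can be observed numerically. The following is a minimal sketch, not the explicit formula from the abstract: it estimates the amplification by finite differences over random perturbation directions, so it only gives a lower bound on the true condition number. The helper names, the test matrices, and the step size `eps` are all assumptions chosen for illustration.

```python
import numpy as np

def best_rank_r(A, r):
    """Best rank-r approximation in the Frobenius norm via truncated SVD."""
    U, s, Vt = np.linalg.svd(A, full_matrices=False)
    return (U[:, :r] * s[:r]) @ Vt[:r, :]

def amplification(A, r, eps=1e-7, trials=200, seed=0):
    """Largest observed ratio ||T_r(A + E) - T_r(A)||_F / ||E||_F
    over random perturbations E with ||E||_F = eps (a lower bound only)."""
    rng = np.random.default_rng(seed)
    base = best_rank_r(A, r)
    worst = 0.0
    for _ in range(trials):
        E = rng.standard_normal(A.shape)
        E *= eps / np.linalg.norm(E)
        moved = np.linalg.norm(best_rank_r(A + E, r) - base)
        worst = max(worst, moved / eps)
    return worst

def matrix_with_singular_values(s, seed=1):
    """Square matrix with prescribed singular values and random singular vectors."""
    rng = np.random.default_rng(seed)
    n = len(s)
    U, _ = np.linalg.qr(rng.standard_normal((n, n)))
    V, _ = np.linalg.qr(rng.standard_normal((n, n)))
    return (U * np.asarray(s)) @ V.T

# Same leading singular values; only the gap between the 2nd and 3rd differs.
wide_gap = matrix_with_singular_values([3.0, 2.0, 0.1, 0.05])
narrow_gap = matrix_with_singular_values([3.0, 2.0, 1.99, 0.05])

amp_wide = amplification(wide_gap, r=2)
amp_narrow = amplification(narrow_gap, r=2)
print(f"wide gap:   {amp_wide:.2f}")
print(f"narrow gap: {amp_narrow:.2f}")
```

With these inputs the narrow-gap matrix should show a much larger amplification than the wide-gap one, in line with the qualitative claim that a small relative gap makes the rank-r approximation problem ill-conditioned.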

Related content

We study the shape reconstruction of an inclusion from the faraway measurement of the associated electric field. This is an inverse problem of practical importance in biomedical imaging and is known to be notoriously ill-posed. By incorporating Drude's model of the permittivity parameter, we propose a novel reconstruction scheme that uses the plasmon resonance with a significantly enhanced resonant field. We conduct a delicate sensitivity analysis to establish a sharp relationship between the sensitivity of the reconstruction and the plasmon resonance. It is shown that when plasmon resonance occurs, the sensitivity functional blows up, which ensures a more robust and effective reconstruction. We then combine Tikhonov regularization with the Laplace approximation to solve the inverse problem. This is an organic hybridization of deterministic and stochastic methods that can quickly calculate the minimizer while capturing the uncertainty of the solution. We conduct extensive numerical experiments to illustrate the promising features of the proposed reconstruction scheme.

Differential privacy (DP) allows the quantification of privacy loss when the data of individuals is subjected to algorithmic processing such as machine learning, as well as the provision of objective privacy guarantees. However, while techniques such as individual Rényi DP (RDP) allow for granular, per-person privacy accounting, few works have investigated the impact of each input feature on the individual's privacy loss. Here we extend the view of individual RDP by introducing a new concept we call partial sensitivity, which leverages symbolic automatic differentiation to determine the influence of each input feature on the gradient norm of a function. We experimentally evaluate our approach on queries over private databases, where we obtain a feature-level contribution of private attributes to the DP guarantee of individuals. Furthermore, we explore our findings in the context of neural network training on synthetic data by investigating the partial sensitivity of input pixels on an image classification task.

The beta regression model is useful in the analysis of bounded continuous outcomes such as proportions. It is well known that for any regression model, the presence of multicollinearity leads to poor performance of the maximum likelihood estimators. Ridge-type estimators have been proposed to alleviate the adverse effects of multicollinearity. Furthermore, when some of the predictors have insignificant or weak effects on the outcomes, it is desirable to recover as much information as possible from these predictors instead of discarding them altogether. In this paper we propose ridge-type shrinkage estimators for the low- and high-dimensional beta regression model, which address the above two issues simultaneously. We compute the biases and variances of the proposed estimators in closed form and use Monte Carlo simulations to evaluate their performance. The results show that, for both low- and high-dimensional data, the performance of the proposed estimators is superior to that of ridge estimators that discard weak or insignificant predictors. We conclude the paper by applying the proposed methods to two real data sets from econometrics and medicine.

We consider the twin problems of estimating the effective rank and the Schatten norms $\|{\bf A}\|_{s}$ of a rectangular $p\times q$ matrix ${\bf A}$ from noisy observations. When $s$ is an even integer, we introduce a polynomial-time estimator of $\|{\bf A}\|_s$ that achieves the minimax rate $(pq)^{1/4}$. Interestingly, this optimal rate does not depend on the underlying rank of the matrix. When $s$ is not an even integer, the optimal rate is much slower. A simple thresholding estimator of the singular values achieves the rate $(q\wedge p)(pq)^{1/4}$, which turns out to be optimal up to a logarithmic multiplicative term. The tight minimax rate is achieved by a more involved polynomial approximation method. This allows us to build estimators for a class of effective rank indices. As a byproduct, we also characterize the minimax rate for estimating the sequence of singular values of a matrix.
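For readers unfamiliar with the quantities involved, the Schatten-$s$ norm is the $\ell_s$ norm of the vector of singular values. The following is a minimal sketch of that definition together with a naive plug-in estimate that discards small singular values of the noisy observation. It is not the minimax estimator from the abstract. The function names, the Gaussian noise model, and the threshold `tau` (set near the typical largest singular value of a pure-noise matrix) are assumptions made for illustration.

```python
import numpy as np

def schatten_norm(A, s):
    """Schatten-s norm: the l_s norm of the singular values of A."""
    sv = np.linalg.svd(A, compute_uv=False)
    return float(np.sum(sv ** s) ** (1.0 / s))

def thresholded_schatten_norm(Y, s, tau):
    """Plug-in estimate that keeps only singular values of Y above tau.
    Illustrative only; the threshold choice is an assumption."""
    sv = np.linalg.svd(Y, compute_uv=False)
    kept = sv[sv > tau]
    return float(np.sum(kept ** s) ** (1.0 / s))

# Sanity check on a matrix with singular values 3 and 4.
D = np.diag([3.0, 4.0])
print(schatten_norm(D, 1))  # nuclear norm: 3 + 4
print(schatten_norm(D, 2))  # Frobenius norm: sqrt(9 + 16)

# Hypothetical noisy observation of a rank-2 matrix.
rng = np.random.default_rng(0)
p, q, noise_std = 50, 40, 0.1
A = 5.0 * rng.standard_normal((p, 2)) @ rng.standard_normal((2, q))
Y = A + noise_std * rng.standard_normal((p, q))
tau = noise_std * (np.sqrt(p) + np.sqrt(q))  # assumed noise-level threshold
print(schatten_norm(A, 1), thresholded_schatten_norm(Y, 1, tau))
```

For $s = 2$ the Schatten norm coincides with the Frobenius norm, and for $s = 1$ with the nuclear norm, which gives two quick checks on the implementation.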

In this paper, we present a wideband subspace estimation method that characterizes the signal subspace through its orthogonal projection matrix at each frequency. Fundamentally, the method models this projection matrix as a function of frequency that can be approximated by a polynomial. It provides two improvements: a reduction in the number of parameters required to represent the signal subspace along a given frequency band and a quality improvement in wideband direction-of-arrival (DOA) estimators such as Incoherent Multiple Signal Classification (IC-MUSIC) and Modified Test of Orthogonality of Projected Subspaces (MTOPS). In rough terms, the method fits a polynomial to a set of projection matrix estimates, obtained at a set of frequencies, and then uses the polynomial as a representation of the signal subspace. The paper includes the derivation of asymptotic bounds for the bias and root-mean-square (RMS) error of the projection matrix estimate and a numerical assessment of the method and its combination with the previous two DOA estimators.

The past decade has witnessed a surge of endeavors in statistical inference for high-dimensional sparse regression, particularly via de-biasing or relaxed orthogonalization. Nevertheless, these techniques typically require a more stringent sparsity condition than is needed for estimation consistency, which seriously limits their practical applicability. To alleviate this constraint, we propose to exploit the identifiable features to residualize the design matrix before performing debiasing-based inference over the parameters of interest. This leads to a hybrid orthogonalization (HOT) technique that performs strict orthogonalization against the identifiable features but relaxed orthogonalization against the others. Under an approximately sparse model with a mixture of identifiable and unidentifiable signals, we establish the asymptotic normality of the HOT test statistic while accommodating as many identifiable signals as consistent estimation allows. The efficacy of the proposed test is also demonstrated through simulation and analysis of a stock market dataset.

Among the several paradigms of artificial intelligence (AI) or machine learning (ML), a remarkably successful paradigm is deep learning. It is hoped that fundamental research on the theory of deep learning will explain this phenomenal success. Accordingly, applied research on deep learning has spurred broad and deep developments in its theory. Inspired by such developments, we pose these fundamental questions: can we accurately approximate an arbitrary matrix-vector product using deep rectified linear unit (ReLU) feedforward neural networks (FNNs)? If so, can we bound the resulting approximation error? In light of these questions, we derive error bounds in Lebesgue and Sobolev norms that comprise our developed deep approximation theory. Guided by this theory, we have successfully trained deep ReLU FNNs whose test results support our developed theory. The developed theory is also applicable for guiding and easing the training of teacher deep ReLU FNNs in view of the emerging teacher-student AI or ML paradigms that are essential for solving several AI or ML problems in wireless communications and signal processing; network science and graph signal processing; and network neuroscience and brain physics.

We show that for the problem of testing if a matrix $A \in F^{n \times n}$ has rank at most $d$, or requires changing an $\epsilon$-fraction of entries to have rank at most $d$, there is a non-adaptive query algorithm making $\widetilde{O}(d^2/\epsilon)$ queries. Our algorithm works for any field $F$. This improves upon the previous $O(d^2/\epsilon^2)$ bound (SODA'03), and bypasses an $\Omega(d^2/\epsilon^2)$ lower bound of (KDD'14) which holds if the algorithm is required to read a submatrix. Our algorithm is the first such algorithm which does not read a submatrix, and instead reads a carefully selected non-adaptive pattern of entries in rows and columns of $A$. We complement our algorithm with a matching query complexity lower bound for non-adaptive testers over any field. We also give tight bounds of $\widetilde{\Theta}(d^2)$ queries in the sensing model for which query access comes in the form of $\langle X_i, A\rangle:=tr(X_i^\top A)$; perhaps surprisingly these bounds do not depend on $\epsilon$. We next develop a novel property testing framework for testing numerical properties of a real-valued matrix $A$ more generally, which includes the stable rank, Schatten-$p$ norms, and SVD entropy. Specifically, we propose a bounded entry model, where $A$ is required to have entries bounded by $1$ in absolute value. We give upper and lower bounds for a wide range of problems in this model, and discuss connections to the sensing model above.

Many resource allocation problems in the cloud can be described as a basic Virtual Network Embedding Problem (VNEP): finding mappings of request graphs (describing the workloads) onto a substrate graph (describing the physical infrastructure). In the offline setting, the two natural objectives are profit maximization, i.e., embedding a maximal number of request graphs subject to the resource constraints, and cost minimization, i.e., embedding all requests at minimal overall cost. The VNEP can be seen as a generalization of classic routing and call admission problems, in which requests are arbitrary graphs whose communication endpoints are not fixed. Due to its applications, the problem has been studied intensively in the networking community. However, the underlying algorithmic problem is poorly understood. This paper presents the first fixed-parameter tractable approximation algorithms for the VNEP. Our algorithms are based on randomized rounding. Due to the flexible mapping options and the arbitrary request graph topologies, we show that a novel linear program formulation is required. Only this novel formulation enables the computation of convex combinations of valid mappings, as it accounts for the structure of the request graphs. Accordingly, to capture the structure of request graphs, we introduce the graph-theoretic notion of extraction orders and extraction width and show that our algorithms have exponential runtime in the request graphs' maximal width. Hence, for request graphs of fixed extraction width, we obtain the first polynomial-time approximations. Studying the new notion of extraction orders, we show that (i) computing extraction orders of minimal width is NP-hard and (ii) computing decomposable LP solutions is in general NP-hard, even when restricting request graphs to planar ones.

This paper describes a suite of algorithms for constructing low-rank approximations of an input matrix from a random linear image of the matrix, called a sketch. These methods can preserve structural properties of the input matrix, such as positive-semidefiniteness, and they can produce approximations with a user-specified rank. The algorithms are simple, accurate, numerically stable, and provably correct. Moreover, each method is accompanied by an informative error bound that allows users to select parameters a priori to achieve a given approximation quality. These claims are supported by numerical experiments with real and synthetic data.
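The idea of reconstructing a low-rank approximation from a sketch alone can be sketched as follows. This is one common two-sided recipe (a range sketch, a co-range sketch, and a least-squares solve), given under the assumption of Gaussian test matrices. It is not necessarily the exact algorithm suite of the paper, and the function name `sketch_low_rank` and the parameters `k` and `l` are hypothetical.

```python
import numpy as np

def sketch_low_rank(A, k, l, seed=0):
    """Form a random linear sketch of A and reconstruct A ~ Q @ X from it.
    Uses Gaussian test matrices (an assumption); requires k <= l."""
    rng = np.random.default_rng(seed)
    m, n = A.shape
    Omega = rng.standard_normal((n, k))  # captures the range of A
    Psi = rng.standard_normal((l, m))    # captures the co-range of A
    Y = A @ Omega                        # range sketch, m x k
    W = Psi @ A                          # co-range sketch, l x n
    # After this point only the sketch (Y, W) and the test matrices are used.
    Q, _ = np.linalg.qr(Y)               # orthonormal basis, m x k
    X, *_ = np.linalg.lstsq(Psi @ Q, W, rcond=None)  # solve (Psi Q) X = W
    return Q, X

# Exactly rank-3 input: the reconstruction should be accurate to rounding error.
rng = np.random.default_rng(42)
A = rng.standard_normal((60, 3)) @ rng.standard_normal((3, 40))
Q, X = sketch_low_rank(A, k=5, l=11)
rel_err = np.linalg.norm(A - Q @ X) / np.linalg.norm(A)
print(f"relative error: {rel_err:.2e}")
```

Because both `Y` and `W` are linear in `A`, the sketch can be updated under linear changes to `A` without revisiting the matrix. A fixed-rank approximation can then be obtained by truncating the SVD of the small factor `X`.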