Single object tracking (SOT) research has fallen into a cycle: trackers perform well on most benchmarks but quickly fail in challenging scenarios, leading researchers to blame insufficient data and to invest more effort in constructing larger datasets with more challenging situations. However, isolated experimental environments and limited evaluation methods hinder SOT research more seriously. The former prevents existing datasets from being exploited comprehensively, while the latter neglects challenging factors in the evaluation process. In this article, we systematize the representative benchmarks and form a single object tracking metaverse (SOTVerse), a user-defined SOT task space, to break through this bottleneck. We first propose a 3E Paradigm that describes tasks by three components (i.e., environment, evaluation, and executor). Then, we summarize task characteristics, clarify the organization standards, and construct SOTVerse with 12.56 million frames. Specifically, SOTVerse automatically labels challenging factors per frame, allowing users to generate user-defined spaces efficiently via construction rules. Besides, SOTVerse provides two mechanisms with new indicators and successfully evaluates trackers under various subtasks. Consequently, SOTVerse is the first to provide a strategy for improving resource utilization in the computer vision area, making research more standardized and scientific. SOTVerse, its toolkit, evaluation server, and results are available at //metaverse.aitestunion.com.
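To make the idea of per-frame challenge labels plus construction rules concrete, here is a minimal sketch of filtering frames into a user-defined subspace. SOTVerse's actual attribute names, score scales, and rule syntax are not given in this abstract, so the factor names, the [0, 1] scores, the `(factor, lo, hi)` rule format, and `build_subspace` are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Frame:
    # Hypothetical per-frame record: sequence id, frame index, and
    # challenge-factor scores assumed to be normalized to [0, 1].
    seq: str
    idx: int
    factors: dict


def build_subspace(frames, rules):
    """Keep the frames whose factor scores satisfy every (factor, lo, hi) rule.

    A missing factor is treated as score 0.0 (an illustrative assumption).
    """
    selected = []
    for frame in frames:
        if all(lo <= frame.factors.get(name, 0.0) <= hi for name, lo, hi in rules):
            selected.append(frame)
    return selected
```

For example, the rule list `[("fast_motion", 0.5, 1.0), ("low_illumination", 0.5, 1.0)]` would keep only frames that are both fast-moving and dark. Selecting individual frames is a simplification; a tracking benchmark would more likely keep contiguous frame ranges so that trackers can run on them.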

Related content

The tuning of hyperparameters becomes increasingly important as machine learning (ML) models are extensively applied in data mining applications. Among various approaches, Bayesian optimization (BO) is a successful methodology for tuning hyperparameters automatically. While traditional methods optimize each tuning task in isolation, there has been recent interest in speeding up BO by transferring knowledge across previous tasks. In this work, we introduce an automatic method to design the BO search space with the aid of tuning history from past tasks. This simple yet effective approach can be used to endow many existing BO methods with transfer learning capabilities. In addition, it enjoys three advantages: universality, generality, and safeness. Extensive experiments show that our approach considerably boosts BO by designing a promising and compact search space instead of using the entire space, and that it outperforms state-of-the-art methods on a wide range of benchmarks, including machine learning and deep learning tuning tasks and neural architecture search.
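As a rough illustration of shrinking a search space with past tuning history, the sketch below takes the best-scoring configurations of each past task, computes a per-hyperparameter bounding box, and unions the boxes (clipped to the original space). This is a deliberately simple stand-in, not the paper's design procedure: the uniform treatment of past tasks, the `top_frac` cut-off, and both function names are assumptions.

```python
def promising_box(history, top_frac=0.2):
    """history: list of (config_dict, score) pairs, higher score = better.

    Returns {param: (lo, hi)} spanning the top-scoring configurations.
    """
    ranked = sorted(history, key=lambda item: item[1], reverse=True)
    k = max(1, int(len(ranked) * top_frac))  # keep at least one configuration
    top = [cfg for cfg, _ in ranked[:k]]
    return {p: (min(c[p] for c in top), max(c[p] for c in top)) for p in top[0]}


def compact_space(full_space, past_histories, top_frac=0.2):
    """Union of the per-task promising boxes, clipped to the full space."""
    boxes = [promising_box(h, top_frac) for h in past_histories]
    space = {}
    for p, (full_lo, full_hi) in full_space.items():
        lo = min(b[p][0] for b in boxes)
        hi = max(b[p][1] for b in boxes)
        space[p] = (max(lo, full_lo), min(hi, full_hi))
    return space
```

A BO method would then sample candidates only inside the returned ranges. A real method would also need a safeguard for a new task that does not resemble any past one (the "safeness" property mentioned above), which this sketch omits.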

Search engines and recommendation systems attempt to continually improve the quality of the experience they afford their users. Refining the ranker that produces the lists displayed in response to user requests is an important component of this process. A common practice is for service providers to make changes (e.g., new ranking features, different ranking models) and A/B test them on a fraction of their users to establish the value of each change. An alternative approach estimates the effectiveness of the proposed changes offline, using clickthrough data previously collected on the old ranker to infer what user behaviour on the lists produced by the new ranker would have been. Most offline evaluation approaches rely on the well-studied inverse propensity weighting to adjust for biases inherent in logged data. In this paper, we propose the use of parametric estimates for these propensities. Specifically, by leveraging well-known learning-to-rank methods as subroutines, we show how accurate offline evaluation can be achieved when the new rankings to be evaluated differ from the logged ones.
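The inverse propensity weighting idea can be sketched as follows, assuming a position-based click model where a click at logged rank k is observed with examination probability θ_k. Dividing each logged click by θ_k gives an unbiased relevance estimate, which is then credited with the metric weight of the document's rank in the new list. The propensities are simply passed in here (for example a hypothetical 1/rank curve); estimating them parametrically, which is this paper's contribution, is not shown.

```python
def ips_estimate(logged_clicks, new_ranking, propensity, gain):
    """Counterfactual estimate of a relevance-weighted metric for a new ranking.

    logged_clicks: list of (doc_id, logged_rank) for clicked documents (1-based).
    new_ranking:   doc_ids in the order the new ranker would show them.
    propensity:    rank -> examination probability under the logging ranker.
    gain:          rank -> metric weight in the new ranking (e.g. a DCG discount).
    """
    new_rank = {doc: r for r, doc in enumerate(new_ranking, start=1)}
    total = 0.0
    for doc, logged_rank in logged_clicks:
        if doc in new_rank:
            # Reweight the click by 1 / propensity of the position it was shown at.
            total += gain(new_rank[doc]) / propensity(logged_rank)
    return total
```

With `propensity = lambda r: 1.0 / r` and a DCG-style `gain = lambda r: 1.0 / math.log2(r + 1)`, a click logged at rank 2 counts twice as much as one logged at rank 1, compensating for the lower chance that rank 2 was examined.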

Multi-scenario learning (MSL) enables a service provider to cater for users' fine-grained demands by separating services for different user sectors, e.g., by the user's geographical region. Under each scenario, multiple task-specific targets, e.g., click-through rate and conversion rate, need to be optimized; this is known as multi-task learning (MTL). Recent solutions for MSL and MTL are mostly based on the multi-gate mixture-of-experts (MMoE) architecture. The MMoE structure is typically static and its design requires domain-specific knowledge, making it less effective in handling both MSL and MTL. In this paper, we propose a novel Automatic Expert Selection framework for Multi-scenario and Multi-task search, named AESM². AESM² integrates both MSL and MTL into a unified framework with automatic structure learning. Specifically, AESM² stacks multi-task layers over multi-scenario layers. This hierarchical design enables us to flexibly establish intrinsic connections between different scenarios while also supporting high-level feature extraction for different tasks. At each multi-scenario/multi-task layer, a novel expert selection algorithm automatically identifies scenario-/task-specific and shared experts for each input. Experiments on two real-world large-scale datasets demonstrate the effectiveness of AESM² over a battery of strong baselines. An online A/B test also shows substantial performance gains on multiple metrics. AESM² has been deployed online to serve major traffic.
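For readers unfamiliar with gated expert selection, the sketch below shows a generic per-input top-k gate over a pool of experts: a softmax over gate logits, the k highest-weighted experts kept, and their outputs mixed with renormalized weights. This is not AESM²'s selection algorithm, whose details (including how specific and shared experts are distinguished) are not given in the abstract; `k` and all function names are illustrative assumptions.

```python
import math


def softmax(xs):
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    s = sum(exps)
    return [e / s for e in exps]


def select_experts(gate_logits, k):
    """Keep the k experts with the highest gate weight and renormalize."""
    weights = softmax(gate_logits)
    top = sorted(range(len(weights)), key=lambda i: weights[i], reverse=True)[:k]
    norm = sum(weights[i] for i in top)
    return {i: weights[i] / norm for i in top}


def mixture(expert_outputs, selection):
    """Weighted sum of the selected experts' output vectors."""
    dim = len(expert_outputs[0])
    return [sum(w * expert_outputs[i][j] for i, w in selection.items()) for j in range(dim)]
```

In a multi-scenario setting, `gate_logits` would be produced per input by a scenario- or task-conditioned gate network, so different inputs can route to different expert subsets instead of sharing one static structure.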

Federated learning is an emerging learning paradigm that allows training models from samples distributed across a large network of clients while respecting privacy and communication restrictions. Despite its success, federated learning faces several challenges related to its decentralized nature. In this work, we develop a novel algorithmic procedure with theoretical speedup guarantees that simultaneously handles two of these hurdles, namely (i) data heterogeneity, i.e., data distributions can vary substantially across clients, and (ii) system heterogeneity, i.e., the computational power of the clients can differ significantly. Our method relies on ideas from representation learning theory to find a global common representation using all clients' data and to learn a user-specific set of parameters, leading to a personalized solution for each client. Furthermore, our method mitigates the effects of stragglers by adaptively selecting clients based on their computational characteristics and statistical significance, thereby achieving, for the first time, near-optimal sample complexity and provable logarithmic speedup. Experimental results support our theoretical findings, showing the superiority of our method over alternative personalized federated schemes in environments with system and data heterogeneity.
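To illustrate why choosing clients by compute speed helps with stragglers, here is a minimal sketch of a hypothetical participation schedule: training starts with the few fastest clients and the participating set doubles each stage until everyone is included. Since a synchronous round waits for its slowest participant, early stages finish much faster. The doubling rule, `n0`, and the function names are assumptions; the paper's selection also weighs statistical significance, which is not modeled here.

```python
def fastest_clients(compute_times, n):
    """Indices of the n clients with the smallest per-round compute time."""
    order = sorted(range(len(compute_times)), key=lambda i: compute_times[i])
    return order[:n]


def participation_schedule(compute_times, n0=2):
    """Hypothetical schedule: start with the n0 fastest clients and double
    the participating set each stage until every client is included."""
    n, total, stages = max(1, n0), len(compute_times), []
    while True:
        stages.append(fastest_clients(compute_times, min(n, total)))
        if n >= total:
            return stages
        n *= 2


def round_time(compute_times, clients):
    """A synchronous round waits for its slowest selected client."""
    return max(compute_times[i] for i in clients)
```

With compute times `[5.0, 1.0, 3.0, 2.0, 4.0]`, the first stage uses clients 1 and 3 and each round takes 2.0 time units instead of the 5.0 needed when the slowest client participates.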

End-to-end object detection has progressed rapidly since the emergence of DETR. DETRs use a set of sparse queries that replace the dense candidate boxes of most traditional detectors. Sparse queries, however, cannot guarantee recall as high as that of dense priors. Yet making queries dense is not trivial in current frameworks: it suffers not only from heavy computational cost but also from difficult optimization. As both sparse and dense queries are imperfect, what are the expected queries in end-to-end object detection? This paper shows that the expected queries should be Dense Distinct Queries (DDQ). Concretely, we introduce dense priors back into the framework to generate dense queries. A duplicate query removal pre-process is applied to these queries so that they are distinguishable from each other. The dense distinct queries are then iteratively processed to obtain the final sparse outputs. We show that DDQ is stronger, more robust, and converges faster. It obtains 44.5 AP on the MS COCO detection dataset with only 12 epochs. DDQ is also robust, outperforming previous methods on both object detection and instance segmentation tasks across various datasets. DDQ blends the advantages of traditional dense priors and recent end-to-end detectors. We hope it can serve as a new baseline and inspire researchers to revisit the complementarity between traditional methods and end-to-end detectors. The source code is publicly available at //github.com/jshilong/DDQ.
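The duplicate query removal step can be pictured as follows, assuming it is performed with class-agnostic non-maximum suppression (NMS) over the boxes predicted for each dense query: queries are visited in descending score order and dropped if they overlap an already-kept query too much. The IoU threshold of 0.7 and the function names are illustrative assumptions, not values taken from the paper.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def distinct_queries(boxes, scores, iou_thr=0.7):
    """Class-agnostic NMS: return indices of queries kept as distinct."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    for i in order:
        # Keep a query only if it does not heavily overlap any kept query.
        if all(iou(boxes[i], boxes[j]) <= iou_thr for j in keep):
            keep.append(i)
    return keep
```

The surviving queries stay dense enough to preserve recall while no longer containing near-identical duplicates, which is the property the abstract attributes to dense distinct queries.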

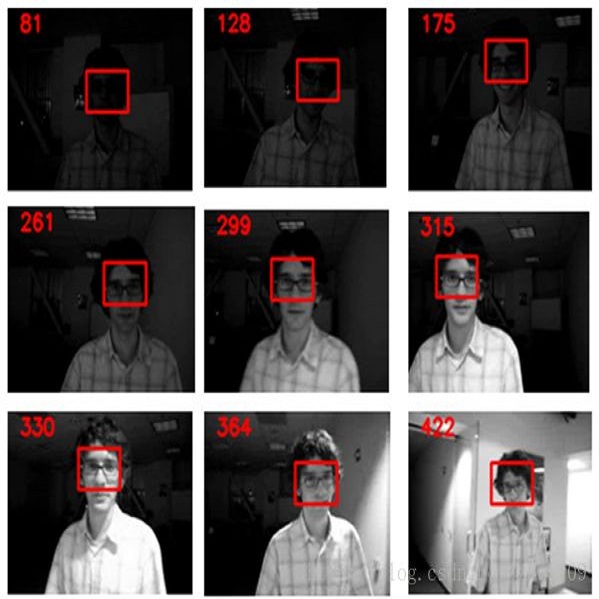

In many visual systems, tracking is based on RGB image sequences, in which some targets become nearly invisible in low-light conditions, significantly degrading tracking performance. Introducing other modalities such as depth and infrared data is an effective way to handle the imaging limitations of individual sources, but multi-modal imaging platforms usually require elaborate designs and currently cannot be applied in many real-world applications. Near-infrared (NIR) imaging has become an essential part of many surveillance cameras, which switch between RGB and NIR imaging based on the light intensity. These two modalities are heterogeneous, with very different visual properties, and thus pose big challenges for visual tracking. However, this challenging problem has not been studied in existing works. In this work, we address the cross-modal object tracking problem and contribute a new video dataset comprising 654 cross-modal image sequences with over 481K frames in total and an average video length of more than 735 frames. To promote the research and development of cross-modal object tracking, we propose a new algorithm that learns a modality-aware target representation to mitigate the appearance gap between the RGB and NIR modalities in the tracking process. It is plug-and-play and can thus be flexibly embedded into different tracking frameworks. Extensive experiments are conducted on the dataset, and we demonstrate the effectiveness of the proposed algorithm in two representative tracking frameworks against 17 state-of-the-art tracking methods. The dataset will be released free for academic use, and the download link and code will be released soon.
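A plug-and-play modality-aware module can be sketched as a wrapper that estimates whether the current frame is NIR and blends two modality-specific branches accordingly. Two loud assumptions: NIR frames from switchable cameras are treated as near-grayscale (R, G, and B nearly equal), and a hand-written heuristic stands in for the learned modality classifier a real tracker would use. The branch functions, `tol`, and all names are hypothetical.

```python
def nir_score(pixels, tol=2.0):
    """Fraction of (r, g, b) pixels whose channels differ by at most tol.

    Assumption: NIR frames are (near-)grayscale, so a score near 1.0
    suggests NIR and a score near 0.0 suggests RGB.
    """
    gray = sum(1 for r, g, b in pixels if max(r, g, b) - min(r, g, b) <= tol)
    return gray / len(pixels)


def modality_aware_feature(feature, pixels, rgb_branch, nir_branch):
    """Blend modality-specific branches by the estimated NIR probability."""
    p = nir_score(pixels)
    rgb_out, nir_out = rgb_branch(feature), nir_branch(feature)
    return [(1 - p) * a + p * b for a, b in zip(rgb_out, nir_out)]
```

Because the wrapper only takes a feature vector and returns one of the same shape, it could in principle sit between the backbone and the matching head of different trackers, which is the sense in which such a module is plug-and-play.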

Owing to effective and flexible data acquisition, unmanned aerial vehicles (UAVs) have recently become a hotspot across the fields of computer vision (CV) and remote sensing (RS). Inspired by the recent success of deep learning (DL), many advanced object detection and tracking approaches have been widely applied to various UAV-related tasks, such as environmental monitoring, precision agriculture, and traffic management. This paper provides a comprehensive survey of the research progress and prospects of DL-based UAV object detection and tracking methods. More specifically, we first outline the challenges and statistics of existing methods and then present solutions from the perspective of DL-based models in three research topics: object detection from images, object detection from videos, and object tracking from videos. Open datasets related to UAV-dominated object detection and tracking are exhaustively listed, and four benchmark datasets are employed for performance evaluation using several state-of-the-art methods. Finally, prospects and considerations for future work are discussed and summarized. We expect this survey to provide researchers from the remote sensing field with an overview of DL-based UAV object detection and tracking methods, along with some thoughts on their further development.

In contrast to batch learning, where all training data is available at once, continual learning represents a family of methods that accumulate knowledge and learn continuously from data available in sequential order. Like the human learning process, which can learn, fuse, and accumulate new knowledge arriving at different time steps, continual learning is considered to have high practical significance. Hence, continual learning has been studied in various artificial intelligence tasks. In this paper, we present a comprehensive review of recent progress in continual learning for computer vision. In particular, the works are grouped by their representative techniques, including regularization, knowledge distillation, memory, generative replay, parameter isolation, and combinations of these techniques. For each category, both its characteristics and its applications in computer vision are presented. At the end of this overview, we discuss several subareas where continuous knowledge accumulation could be helpful but continual learning has not yet been well studied.
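As one concrete example of the memory-based family, the sketch below implements a fixed-size replay buffer filled by reservoir sampling. Reservoir sampling guarantees that, after n examples have streamed past, each one is stored with probability capacity/n, so the memory stays a uniform sample of everything seen without knowing the stream length in advance. This is a generic technique chosen for illustration, not a method attributed to any particular surveyed work; the class name and `seed` parameter are our own.

```python
import random


class ReservoirBuffer:
    """Fixed-size replay memory filled by reservoir sampling."""

    def __init__(self, capacity, seed=0):
        self.capacity = capacity
        self.items = []
        self.seen = 0
        self.rng = random.Random(seed)

    def add(self, example):
        self.seen += 1
        if len(self.items) < self.capacity:
            self.items.append(example)
        else:
            # Replace a random slot with probability capacity / seen.
            j = self.rng.randrange(self.seen)
            if j < self.capacity:
                self.items[j] = example

    def sample(self, k):
        """Draw up to k stored examples to replay alongside the current batch."""
        return self.rng.sample(self.items, min(k, len(self.items)))
```

During training on a new task, each minibatch would be concatenated with `buffer.sample(k)` from earlier tasks, which counteracts forgetting at the cost of storing a small amount of old data.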

Benefiting from the rapid development of deep learning techniques, salient object detection has recently achieved remarkable progress. However, two major challenges still hinder its application on embedded devices: low-resolution output and heavy model weight. To this end, this paper presents an accurate yet compact deep network for efficient salient object detection. More specifically, given a coarse saliency prediction in the deepest layer, we first employ residual learning to learn side-output residual features for saliency refinement, which can be achieved with very few convolutional parameters while maintaining accuracy. Secondly, we propose reverse attention to guide such side-output residual learning in a top-down manner. By erasing the currently predicted salient regions from the side-output features, the network can eventually explore the missing object parts and details, resulting in high resolution and accuracy. Experiments on six benchmark datasets demonstrate that the proposed approach compares favorably against state-of-the-art methods, with advantages in simplicity, efficiency (45 FPS), and model size (81 MB).
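The erase-then-refine step can be sketched on tiny nested-list maps. Assuming the reverse attention weight is 1 − sigmoid(coarse logit), pixels already predicted as salient get weight near 0 and are effectively erased from the side-output features, so the residual concentrates on missed regions; the residual is then added back to the coarse prediction. A hypothetical 1x1 convolution (one weight per channel) stands in for the learned convolutional layers, and all names are illustrative.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def reverse_attention_refine(coarse, side_features, conv_weights):
    """One refinement step on H x W maps stored as nested lists.

    coarse:        upsampled coarse saliency logits, H x W.
    side_features: list of C side-output feature maps, each H x W.
    conv_weights:  C weights of a hypothetical 1x1 convolution giving the residual.
    """
    h, w = len(coarse), len(coarse[0])
    refined = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            # Reverse attention: near 0 where the coarse map is already salient.
            reverse = 1.0 - sigmoid(coarse[y][x])
            residual = sum(wc * fmap[y][x] * reverse
                           for wc, fmap in zip(conv_weights, side_features))
            refined[y][x] = coarse[y][x] + residual  # residual refinement
    return refined
```

Applied from the deepest side output upward, each step only has to predict a residual correction, which is why such refinement can use few parameters.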