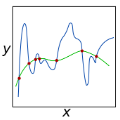

As a predictor's quality is often assessed by means of its risk, it is natural to regard risk consistency as a desirable property of learning methods, and many such methods have indeed been shown to be risk consistent. The first aim of this paper is to establish the close connection between risk consistency and $L_p$-consistency for a considerably wider class of loss functions than has been done before. The attempt to transfer this connection to shifted loss functions surprisingly reveals that this shift does not reduce the assumptions needed on the underlying probability measure to the same extent as it does for many other results. The results are applied to regularized kernel methods such as support vector machines.

相關內容

We study the consistency of surrogate risks for robust binary classification. It is common to learn robust classifiers by adversarial training, which seeks to minimize the expected $0$-$1$ loss when each example can be maliciously corrupted within a small ball. We give a simple and complete characterization of the set of surrogate loss functions that are \emph{consistent}, i.e., that can replace the $0$-$1$ loss without affecting the minimizing sequences of the original adversarial risk, for any data distribution. We also prove a quantitative version of adversarial consistency for the $\rho$-margin loss. Our results reveal that the class of adversarially consistent surrogates is substantially smaller than in the standard setting, where many common surrogates are known to be consistent.

Kernel-based regularized risk minimizers, also called support vector machines (SVMs), are known to possess many desirable properties but suffer from their super-linear computational requirements when dealing with large data sets. This problem can be tackled by using localized SVMs instead, which also offer the additional advantage of being able to apply different hyperparameters to different regions of the input space. In this paper, localized SVMs are analyzed with regards to their consistency. It is proven that they inherit $L_p$- as well as risk consistency from global SVMs under very weak conditions and even if the regions underlying the localized SVMs are allowed to change as the size of the training data set increases.

We propose two multiscale comparisons of graphs using heat diffusion, allowing to compare graphs without node correspondence or even with different sizes. These multiscale comparisons lead to the definition of Lipschitz-continuous empirical processes indexed by a real parameter. The statistical properties of empirical means of such processes are studied in the general case. Under mild assumptions, we prove a functional central limit theorem, as well as a Gaussian approximation with a rate depending only on the sample size. Once applied to our processes, these results allow to analyze data sets of pairs of graphs. We design consistent confidence bands around empirical means and consistent two-sample tests, using bootstrap methods. Their performances are evaluated by simulations on synthetic data sets.

The Byzantine consensus problem involves $n$ processes, out of which t < n could be faulty and behave arbitrarily. Three properties characterize consensus: (1) termination, requiring correct (non-faulty) processes to eventually reach a decision, (2) agreement, preventing them from deciding different values, and (3) validity, precluding ``unreasonable'' decisions. But, what is a reasonable decision? Strong validity, a classical property, stipulates that, if all correct processes propose the same value, only that value can be decided. Weak validity, another established property, stipulates that, if all processes are correct and they propose the same value, that value must be decided. The space of possible validity properties is vast. However, their impact on consensus remains unclear. This paper addresses the question of which validity properties allow Byzantine consensus to be solvable with partial synchrony, and at what cost. First, we determine necessary and sufficient conditions for a validity property to make the consensus problem solvable; we say that such validity properties are solvable. Notably, we prove that, if n <= 3t, all solvable validity properties are trivial (there exists an always-admissible decision). Furthermore, we show that, with any non-trivial (and solvable) validity property, consensus requires Omega(t^2) messages. This extends the seminal Dolev-Reischuk bound, originally proven for strong validity, to all non-trivial validity properties. Lastly, we give a general Byzantine consensus algorithm, we call Universal, for any solvable (and non-trivial) validity property. Importantly, Universal incurs O(n^2) message complexity. Thus, together with our lower bound, Universal implies a fundamental result in partial synchrony: with t \in Omega(n), the message complexity of all (non-trivial) consensus variants is Theta(n^2).

We study fair mechanisms for the (asymmetric) one-sided allocation problem with m items and n multi-unit demand agents with additive, unit-sum valuations. The symmetric case (m=n), the one-sided matching problem, has been studied extensively for the class of unit demand agents, in particular with respect to the folklore Random Priority mechanism and the Probabilistic Serial mechanism, introduced by Bogomolnaia and Moulin. Under the assumption of unit-sum valuation functions, Christodoulou et al. proved that the price of anarchy is $\Theta(\sqrt{n})$ in the one-sided matching problem for both the Random Priority and Probabilistic Serial mechanisms. Whilst both Random Priority and Probabilistic Serial are ordinal mechanisms, these approximation guarantees are the best possible even for the broader class of cardinal mechanisms. To extend these results to the general setting there are two technical obstacles. One, asymmetry ($m\neq n$) is problematic especially when the number of items is much greater than the number of items. Two, it is necessary to study multi-unit demand agents rather than simply unit demand agents. Our approach is to study a cardinal mechanism variant of Probabilistic Serial, which we call Cardinal Probabilistic Serial. We present structural theorems for this mechanism and use them to obtain bounds on the price of anarchy. Our first main result is an upper bound of $O(\sqrt{n}\cdot \log m)$ on the price of anarchy for the asymmetric one-sided allocation problem with multi-unit demand agents. This upper bound applies to Probabilistic Serial as well and there is a complementary lower bound of $\Omega(\sqrt{n})$ for any fair mechanism. Our second main result is that the price of anarchy degrades with the number of items. Specifically, a logarithmic dependence on the number of items is necessary for both mechanisms.

Random smoothing data augmentation is a unique form of regularization that can prevent overfitting by introducing noise to the input data, encouraging the model to learn more generalized features. Despite its success in various applications, there has been a lack of systematic study on the regularization ability of random smoothing. In this paper, we aim to bridge this gap by presenting a framework for random smoothing regularization that can adaptively and effectively learn a wide range of ground truth functions belonging to the classical Sobolev spaces. Specifically, we investigate two underlying function spaces: the Sobolev space of low intrinsic dimension, which includes the Sobolev space in $D$-dimensional Euclidean space or low-dimensional sub-manifolds as special cases, and the mixed smooth Sobolev space with a tensor structure. By using random smoothing regularization as novel convolution-based smoothing kernels, we can attain optimal convergence rates in these cases using a kernel gradient descent algorithm, either with early stopping or weight decay. It is noteworthy that our estimator can adapt to the structural assumptions of the underlying data and avoid the curse of dimensionality. This is achieved through various choices of injected noise distributions such as Gaussian, Laplace, or general polynomial noises, allowing for broad adaptation to the aforementioned structural assumptions of the underlying data. The convergence rate depends only on the effective dimension, which may be significantly smaller than the actual data dimension. We conduct numerical experiments on simulated data to validate our theoretical results.

The Nash Equilibrium (NE) estimation in bidding games of electricity markets is the key concern of both generation companies (GENCOs) for bidding strategy optimization and the Independent System Operator (ISO) for market surveillance. However, existing methods for NE estimation in emerging modern electricity markets (FEM) are inaccurate and inefficient because the priori knowledge of bidding strategies before any environment changes, such as load demand variations, network congestion, and modifications of market design, is not fully utilized. In this paper, a Bayes-adaptive Markov Decision Process in FEM (BAMDP-FEM) is therefore developed to model the GENCOs' bidding strategy optimization considering the priori knowledge. A novel Multi-Agent Generative Adversarial Imitation Learning algorithm (MAGAIL-FEM) is then proposed to enable GENCOs to learn simultaneously from priori knowledge and interactions with changing environments. The obtained NE is a Bayesian Nash Equilibrium (BNE) with priori knowledge transferred from the previous environment. In the case study, the superiority of this proposed algorithm in terms of convergence speed compared with conventional methods is verified. It is concluded that the optimal bidding strategies in the obtained BNE can always lead to more profits than NE due to the effective learning from the priori knowledge. Also, BNE is more accurate and consistent with situations in real-world markets.

The {\em binary deletion channel} with deletion probability $d$ ($\text{BDC}_d$) is a random channel that deletes each bit of the input message i.i.d with probability $d$. It has been studied extensively as a canonical example of a channel with synchronization errors. Perhaps the most important question regarding the BDC is determining its capacity. Mitzenmacher and Drinea (ITIT 2006) and Kirsch and Drinea (ITIT 2009) show a method by which distributions on run lengths can be converted to codes for the BDC, yielding a lower bound of $\mathcal{C}(\text{BDC}_d) > 0.1185 \cdot (1-d)$. Fertonani and Duman (ITIT 2010), Dalai (ISIT 2011) and Rahmati and Duman (ITIT 2014) use computer aided analyses based on the Blahut-Arimoto algorithm to prove an upper bound of $\mathcal{C}(\text{BDC}_d) < 0.4143\cdot(1-d)$ in the high deletion probability regime ($d > 0.65$). In this paper, we show that the Blahut-Arimoto algorithm can be implemented with a lower space complexity, allowing us to extend the upper bound analyses, and prove an upper bound of $\mathcal{C}(\text{BDC}_d) < 0.3745 \cdot(1-d)$ for all $d \geq 0.68$. Furthermore, we show that an extension of the Blahut-Arimoto algorithm can also be used to select better run length distributions for Mitzenmacher and Drinea's construction, yielding a lower bound of $\mathcal{C}(\text{BDC}_d) > 0.1221 \cdot (1 - d)$.

A variety of deep neural networks have been applied in medical image segmentation and achieve good performance. Unlike natural images, medical images of the same imaging modality are characterized by the same pattern, which indicates that same normal organs or tissues locate at similar positions in the images. Thus, in this paper we try to incorporate the prior knowledge of medical images into the structure of neural networks such that the prior knowledge can be utilized for accurate segmentation. Based on this idea, we propose a novel deep network called knowledge-based fully convolutional network (KFCN) for medical image segmentation. The segmentation function and corresponding error is analyzed. We show the existence of an asymptotically stable region for KFCN which traditional FCN doesn't possess. Experiments validate our knowledge assumption about the incorporation of prior knowledge into the convolution kernels of KFCN and show that KFCN can achieve a reasonable segmentation and a satisfactory accuracy.

We investigate the training and performance of generative adversarial networks using the Maximum Mean Discrepancy (MMD) as critic, termed MMD GANs. As our main theoretical contribution, we clarify the situation with bias in GAN loss functions raised by recent work: we show that gradient estimators used in the optimization process for both MMD GANs and Wasserstein GANs are unbiased, but learning a discriminator based on samples leads to biased gradients for the generator parameters. We also discuss the issue of kernel choice for the MMD critic, and characterize the kernel corresponding to the energy distance used for the Cramer GAN critic. Being an integral probability metric, the MMD benefits from training strategies recently developed for Wasserstein GANs. In experiments, the MMD GAN is able to employ a smaller critic network than the Wasserstein GAN, resulting in a simpler and faster-training algorithm with matching performance. We also propose an improved measure of GAN convergence, the Kernel Inception Distance, and show how to use it to dynamically adapt learning rates during GAN training.