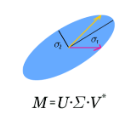

Large statically indeterminate truss and frame structures exhibit complex load-bearing behavior, and redundancy matrices are helpful for their analysis and design. Depending on the task, either the full redundancy matrix or only its diagonal entries are required. The standard computation procedure is computationally expensive. Many structures are moderately redundant, i.e., the ratio of the degree of statical indeterminacy to the total number of load-carrying modes of all elements is less than one half. This paper proposes a closed-form expression for redundancy contributions that is computationally efficient for moderately redundant systems. The expression is derived via a factorization of the redundancy matrix that is based on singular value decomposition. Several examples illustrate the behavior of the method for increasing system size and, where applicable, for increasing degree of statical indeterminacy.
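As a rough illustration of why a singular value decomposition helps, the sketch below uses the common textbook form of the redundancy matrix, $R = I - A (A^T C A)^{-1} A^T C$, with a compatibility matrix `A` and a diagonal element stiffness matrix `C`. This definition, the random stand-in data, and both function names are assumptions made for the example; the code is not the closed-form expression proposed in the paper. It only shows that the diagonal of $R$ can be read off a thin SVD of $C^{1/2} A$ without forming $R$.

```python
import numpy as np

def redundancy_matrix(A, c):
    """Assumed textbook form R = I - A (A^T C A)^{-1} A^T C with C = diag(c)."""
    C = np.diag(c)
    K = A.T @ C @ A                       # elastic stiffness matrix
    return np.eye(A.shape[0]) - A @ np.linalg.solve(K, A.T @ C)

def redundancy_diagonal_svd(A, c):
    """Diagonal of R from a thin SVD of C^{1/2} A, without forming R."""
    B = np.sqrt(c)[:, None] * A           # scale each row by sqrt(stiffness)
    U, _, _ = np.linalg.svd(B, full_matrices=False)
    # R is similar to I - U U^T via a diagonal scaling, which keeps the diagonal.
    return 1.0 - np.sum(U**2, axis=1)

# Hypothetical stand-in data: 8 load-carrying modes, 5 free degrees of freedom.
rng = np.random.default_rng(0)
m, n = 8, 5
A = rng.standard_normal((m, n))           # stand-in compatibility matrix
c = rng.uniform(1.0, 2.0, size=m)         # stand-in element stiffnesses

R = redundancy_matrix(A, c)
r_diag = redundancy_diagonal_svd(A, c)
```

With these sizes the degree of statical indeterminacy is `m - n = 3`, so the ratio 3/8 falls under the one-half threshold that the abstract calls moderately redundant, and the entries of `r_diag` sum to that degree.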

Related content

Clinically deployed segmentation models are known to fail on data outside of their training distribution. As these models perform well on most cases, it is imperative to detect out-of-distribution (OOD) images at inference to protect against automation bias. This work applies the Mahalanobis distance post hoc to the bottleneck features of a Swin UNETR model that segments the liver on T1-weighted magnetic resonance imaging. By reducing the dimensions of the bottleneck features with principal component analysis, OOD images were detected with high performance and minimal computational load.
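The detection step described above can be sketched in a few lines, assuming the bottleneck features have already been extracted and flattened into one vector per image. The function names, the number of components, and the synthetic features are illustrative assumptions, not details taken from the paper.

```python
import numpy as np

def fit_pca_mahalanobis(train_feats, n_components):
    """Fit PCA on training features and a Gaussian in the reduced space."""
    mean = train_feats.mean(axis=0)
    centered = train_feats - mean
    # Principal axes come from the SVD of the centered training features.
    _, _, Vt = np.linalg.svd(centered, full_matrices=False)
    W = Vt[:n_components].T               # projection matrix (d x k)
    Z = centered @ W                      # reduced features, zero mean
    cov_inv = np.linalg.inv(np.atleast_2d(np.cov(Z, rowvar=False)))
    return mean, W, cov_inv

def ood_score(feats, mean, W, cov_inv):
    """Mahalanobis distance to the training distribution in PCA space."""
    Z = (feats - mean) @ W
    return np.sqrt(np.einsum("ij,jk,ik->i", Z, cov_inv, Z))

# Synthetic stand-ins for flattened bottleneck features (64 dimensions).
rng = np.random.default_rng(0)
train = rng.standard_normal((500, 64))
in_dist = rng.standard_normal((100, 64))
shifted = rng.standard_normal((100, 64)) + 6.0   # simulated OOD shift

mean, W, cov_inv = fit_pca_mahalanobis(train, n_components=8)
id_scores = ood_score(in_dist, mean, W, cov_inv)
ood_scores = ood_score(shifted, mean, W, cov_inv)
```

In practice a threshold on the score would be chosen on held-out in-distribution data; images scoring above it would be flagged for review.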

We survey analytical methods and evaluation results for the performance assessment of caching strategies. Knapsack solutions are derived, which provide static caching bounds for independent requests and general bounds for dynamic caching under arbitrary request patterns. We summarize Markov- and time-to-live-based solutions, which assume specific stochastic processes for capturing web request streams and timing. We compare the performance of caching strategies that have different knowledge about the properties of data objects across a broad set of caching demands. An assessment of web caching efficiency must weigh the benefits for network-wide traffic load, energy consumption, and quality of service against the costs of updates and storage overhead.
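To make the knapsack view concrete, here is a minimal sketch that assumes independent requests with known request probabilities, integer object sizes, and hit ratio as the objective. Under those assumptions, the best static cache content is a 0/1 knapsack solution. The function name and the numbers are hypothetical and are not taken from the survey.

```python
def static_cache_bound(popularity, sizes, capacity):
    """Maximum hit ratio of a static cache, solved as a 0/1 knapsack.

    popularity[i] is the request probability of object i,
    sizes[i] its integer size, capacity the integer cache size.
    """
    best = [0.0] * (capacity + 1)         # best[c]: max hit ratio using size <= c
    for p, s in zip(popularity, sizes):
        # Iterate capacities downward so each object is cached at most once.
        for cap in range(capacity, s - 1, -1):
            best[cap] = max(best[cap], best[cap - s] + p)
    return best[capacity]

# Hypothetical catalogue of four objects.
popularity = [0.4, 0.3, 0.2, 0.1]
sizes = [3, 2, 2, 1]
bound = static_cache_bound(popularity, sizes, capacity=5)
```

When all objects have unit size, the same routine reduces to caching the most popular objects, which is the usual static bound for independent requests.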

We consider the problem of estimating the false-positive and true-positive rates (FPR/TPR) of a binary classification model when there are incorrect labels (label noise) in the validation set. Our motivating application is fraud prevention, where accurate estimates of FPR are critical to preserving the experience for good customers, and where label noise is highly asymmetric. Existing methods seek to minimize the total error in the cleaning process, that is, to avoid cleaning examples that are not noise and to ensure cleaning of examples that are. This is an important measure of accuracy but insufficient to guarantee good estimates of the true FPR or TPR for a model, and we show that using the model to directly clean its own validation data leads to underestimates even if total error is low. This indicates a need for researchers to pursue methods that not only reduce total error but also seek to de-correlate cleaning error from model scores.

We explore the information geometry and asymptotic behaviour of estimators for Kronecker-structured covariances, in both growing-$n$ and growing-$p$ scenarios, with a focus on examining the quadratic form or partial trace estimator proposed by Linton and Tang. It is shown that the partial trace estimator is asymptotically inefficient. An explanation for this inefficiency is that the partial trace estimator does not scale sub-blocks of the sample covariance matrix optimally. To correct for this, an asymptotically efficient, rescaled partial trace estimator is proposed. Motivated by this rescaling, we introduce an orthogonal parameterization for the set of Kronecker covariances. High-dimensional consistency results using the partial trace estimator are obtained that demonstrate a blessing of dimensionality. In settings where an array has at least order three, it is shown that as the array dimensions jointly increase, it is possible to consistently estimate the Kronecker covariance matrix, even when the sample size is one.

Matching problems with group-fairness constraints and diversity constraints have numerous applications, such as allocation problems, committee selection, and school choice. Moreover, online matching problems have many applications in ad allocation and other e-commerce problems such as product recommendation in digital marketing. We study two problems involving assigning *items* to *platforms*, where items belong to various *groups* depending on their attributes; the set of items is available offline and the platforms arrive online. In the first problem, we study online matchings with *proportional fairness constraints*. Here, each platform on arrival should either be assigned a set of items in which the fraction of items from each group is within specified bounds or be assigned no items; the goal is to maximize the number of items assigned to platforms. In the second problem, we study online matchings with *diversity constraints*, i.e., for each platform, absolute lower bounds are specified for each group. Each platform on arrival should either be assigned a set of items that satisfies these bounds or be assigned no items; the goal is to maximize the number of platforms that get matched. We study approximation algorithms and hardness results for these problems. The technical core of our proofs is a new connection between these problems and the problem of matchings in hypergraphs. Our experimental evaluation shows that the performance of our algorithms on real-world and synthetic datasets exceeds our theoretical guarantees.

Graph neural networks (GNNs) have been demonstrated to be a powerful algorithmic model in broad application fields for their effectiveness in learning over graphs. To scale GNN training up for large-scale and ever-growing graphs, the most promising solution is distributed training, which distributes the training workload across multiple computing nodes. However, the workflows, computational patterns, communication patterns, and optimization techniques of distributed GNN training are still only partially understood. In this paper, we provide a comprehensive survey of distributed GNN training by investigating the various optimization techniques it uses. First, distributed GNN training is classified into several categories according to workflow, and the computational patterns, communication patterns, and optimization techniques proposed by recent work are introduced for each category. Second, the software frameworks and hardware platforms of distributed GNN training are introduced for a deeper understanding. Third, distributed GNN training is compared with distributed training of deep neural networks, emphasizing the uniqueness of distributed GNN training. Finally, interesting issues and opportunities in this field are discussed.

Understanding causality helps to structure interventions to achieve specific goals and enables predictions under interventions. With the growing importance of learning causal relationships, causal discovery has moved from traditional methods that infer potential causal structures from observational data toward pattern-recognition approaches based on deep learning. The rapid accumulation of massive data has encouraged the emergence of highly scalable causal search methods. Existing surveys of causal discovery methods mainly focus on traditional methods based on constraints, scores, and FCMs; they lack a systematic organization and discussion of deep learning-based methods, and they rarely consider causal discovery from the perspective of variable paradigms. Therefore, we divide the possible causal discovery tasks into three types according to the variable paradigm and define each of them. We also define and instantiate the relevant datasets for each task together with the final causal model constructed, and then review the main existing causal discovery methods for the different tasks. Finally, we propose roadmaps from different perspectives to address the current research gaps in the field and point out future research directions.

We consider the problem of explaining the predictions of graph neural networks (GNNs), which are otherwise regarded as black boxes. Existing methods invariably focus on explaining the importance of graph nodes or edges but ignore the substructures of graphs, which are more intuitive and human-intelligible. In this work, we propose a novel method, known as SubgraphX, to explain GNNs by identifying important subgraphs. Given a trained GNN model and an input graph, SubgraphX explains its predictions by efficiently exploring different subgraphs with Monte Carlo tree search. To make the tree search more effective, we propose to use Shapley values as a measure of subgraph importance, which can also capture the interactions among different subgraphs. To expedite computations, we propose efficient approximation schemes to compute Shapley values for graph data. Our work represents the first attempt to explain GNNs by identifying subgraphs explicitly and directly. Experimental results show that SubgraphX achieves significantly improved explanations while keeping computations at a reasonable level.
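For readers unfamiliar with Shapley values, the sketch below shows a generic permutation-sampling estimator on a toy cooperative game. It is not the approximation scheme used by SubgraphX, and the player names and value function are hypothetical; in a graph setting the value function would instead query the trained GNN on a masked input.

```python
import random

def shapley_monte_carlo(players, target, value_fn, n_samples=2000, seed=0):
    """Estimate the Shapley value of `target` by sampling random orderings."""
    rng = random.Random(seed)
    order = list(players)
    total = 0.0
    for _ in range(n_samples):
        rng.shuffle(order)
        # Coalition = players that precede the target in this ordering.
        coalition = set(order[:order.index(target)])
        total += value_fn(coalition | {target}) - value_fn(coalition)
    return total / n_samples

# Toy game: the payoff is 1 only when "a" and "b" are both present,
# so the two interact and "c" contributes nothing.
def toy_value(coalition):
    return 1.0 if {"a", "b"} <= coalition else 0.0

players = ["a", "b", "c"]
phi_a = shapley_monte_carlo(players, "a", toy_value)
phi_c = shapley_monte_carlo(players, "c", toy_value)
```

The exact Shapley values for this game are 0.5 for `a` and `b` and 0 for `c`; the sampled estimate for `a` should land close to 0.5, while the estimate for `c` is exactly zero because its marginal contribution is always zero.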

Deep neural networks have revolutionized many machine learning tasks in power systems, ranging from pattern recognition to signal processing. The data in these tasks is typically represented in Euclidean domains. Nevertheless, there is an increasing number of applications in power systems where data are collected from non-Euclidean domains and represented as graph-structured data with high-dimensional features and interdependency among nodes. The complexity of graph-structured data poses significant challenges to existing deep neural networks defined in Euclidean domains. Recently, many studies on extending deep neural networks to graph-structured data in power systems have emerged. In this paper, a comprehensive overview of graph neural networks (GNNs) in power systems is presented. Specifically, several classical paradigms of GNN structures (e.g., graph convolutional networks, graph recurrent neural networks, graph attention networks, graph generative networks, spatial-temporal graph convolutional networks, and hybrid forms of GNNs) are summarized, and key applications in power systems such as fault diagnosis, power prediction, power flow calculation, and data generation are reviewed in detail. Furthermore, the main issues and some research trends concerning the applications of GNNs in power systems are discussed.

Object detection typically assumes that training and test data are drawn from an identical distribution, which, however, does not always hold in practice. Such a distribution mismatch leads to a significant performance drop. In this work, we aim to improve the cross-domain robustness of object detection. We tackle the domain shift on two levels: 1) the image-level shift, such as image style, illumination, etc., and 2) the instance-level shift, such as object appearance, size, etc. We build our approach on the recent state-of-the-art Faster R-CNN model and design two domain adaptation components, on the image level and the instance level, to reduce the domain discrepancy. The two domain adaptation components are based on H-divergence theory and are implemented by learning a domain classifier in an adversarial training manner. The domain classifiers on different levels are further reinforced with a consistency regularization to learn a domain-invariant region proposal network (RPN) in the Faster R-CNN model. We evaluate our newly proposed approach using multiple datasets including Cityscapes, KITTI, SIM10K, etc. The results demonstrate the effectiveness of our proposed approach for robust object detection in various domain shift scenarios.