Numerous studies in the literature have already shown the potential of biometrics on mobile devices for authentication purposes. However, it has been shown that the learning processes associated with biometric systems might expose sensitive personal information about the subjects. This study proposes GaitPrivacyON, a novel mobile gait biometrics verification approach that provides accurate authentication results while preserving the sensitive information of the subject. It comprises two modules: i) a convolutional Autoencoder that transforms attributes of the biometric raw data, such as the gender or the activity being performed, into a new privacy-preserving representation; and ii) a mobile gait verification system based on the combination of Convolutional Neural Networks (CNNs) and Recurrent Neural Networks (RNNs) with a Siamese architecture. The main advantage of GaitPrivacyON is that the first module (convolutional Autoencoder) is trained in an unsupervised way, without specifying the sensitive attributes of the subject to protect. The experimental results achieved using two popular databases (MotionSense and MobiAct) suggest the potential of GaitPrivacyON to significantly improve the privacy of the subject while keeping user authentication results higher than 99% Area Under the Curve (AUC). To the best of our knowledge, this is the first mobile gait verification approach that considers privacy-preserving methods trained in an unsupervised way.

Related content

Plasticity and stability are needed in class-incremental learning in order to learn from new data while preserving past knowledge. Due to catastrophic forgetting, finding a compromise between these two properties is particularly challenging when no memory buffer is available. Mainstream methods need to store two deep models since they integrate new classes using fine-tuning with knowledge distillation from the previous incremental state. We propose a method which has a similar number of parameters but distributes them differently in order to find a better balance between plasticity and stability. Following an approach already deployed by transfer-based incremental methods, we freeze the feature extractor after the initial state. Classes in the oldest incremental states are trained with this frozen extractor to ensure stability. Recent classes are predicted using partially fine-tuned models in order to introduce plasticity. Our proposed plasticity layer can be incorporated into any transfer-based method designed for memory-free incremental learning, and we apply it to two such methods. Evaluation is done with three large-scale datasets. Results show that performance gains are obtained in all tested configurations compared to existing methods.

We study the problem faced by a data analyst or platform that wishes to collect private data from privacy-aware agents. To incentivize participation, in exchange for this data, the platform provides a service to the agents in the form of a statistic computed using all agents' submitted data. The agents decide whether to join the platform (and truthfully reveal their data) or not participate by considering both the privacy costs of joining and the benefit they get from obtaining the statistic. The platform must ensure the statistic is computed differentially privately and chooses a central level of noise to add to the computation, but can also induce personalized privacy levels (or costs) by giving different weights to different agents in the computation as a function of their heterogeneous privacy preferences (which are known to the platform). We assume the platform aims to optimize the accuracy of the statistic, and must pick the privacy level of each agent to trade off between i) incentivizing more participation and ii) adding less noise to the estimate. We provide a semi-closed form characterization of the optimal choice of agent weights for the platform in two variants of our model. In both of these models, we identify a common nontrivial structure in the platform's optimal solution: an instance-specific number of agents with the least stringent privacy requirements are pooled together and given the same weight, while the weights of the remaining agents decrease as a function of the strength of their privacy requirement. We also provide algorithmic results on how to find the optimal value of the noise parameter used by the platform and of the weights given to the agents.
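
The link between agent weights and personalized privacy levels can be illustrated with a short derivation. This is only a sketch under assumptions the abstract does not state: each agent's data $x_i$ lies in $[0,1]$, the weights $w_i \ge 0$ sum to one, and the central noise $Z$ is Laplace with scale $b$ (all symbols are introduced here for illustration).

```latex
\hat{\mu} = \sum_{i} w_i x_i + Z, \qquad Z \sim \mathrm{Lap}(b).

% Changing x_i alone shifts the weighted sum by at most w_i, so for any
% measurable set S and any alternative report x_i' of agent i:
\frac{\Pr[\hat{\mu} \in S \mid x_i]}{\Pr[\hat{\mu} \in S \mid x_i']}
  \le \exp\!\left(\frac{w_i}{b}\right)
  \quad\Longrightarrow\quad
  \varepsilon_i = \frac{w_i}{b}.

% The noise contributes variance 2 b^2 to the estimate:
\operatorname{Var}(Z) = 2 b^2 .
```

Under these assumptions, a smaller weight gives an agent a stronger guarantee (smaller $\varepsilon_i$), while a larger $b$ strengthens every agent's guarantee at the price of a noisier statistic, which is the tension the abstract describes.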

A considerable amount of data of various types has been collected during the COVID-19 pandemic, the analysis and interpretation of which have been indispensable for curbing the spread of the disease. As the pandemic moves to an endemic state, the data collected during the pandemic will continue to be rich sources for further studying and understanding the impacts of the pandemic on various aspects of our society. On the other hand, naïve release and sharing of the information can be associated with serious privacy concerns. In this study, we use three common but distinct data types collected during the pandemic (case surveillance tabular data, case location data, and contact tracing networks) to illustrate the publication and sharing of granular information and individual-level pandemic data in a privacy-preserving manner. We leverage and build upon the concept of differential privacy to generate and release privacy-preserving data for each data type. We investigate the inferential utility of privacy-preserving information through simulation studies at different levels of privacy guarantees and demonstrate the approaches in real-life data. All the approaches employed in the study are straightforward to apply. Our study generates statistical evidence on the practical feasibility of sharing pandemic data with privacy guarantees and on how to balance the statistical utility of released information during this process.
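
As a rough illustration of how tabular counts could be released under differential privacy, the sketch below applies the standard Laplace mechanism to a histogram. It is not taken from the study: the function names, the category labels, and the choice of mechanism are assumptions, and it assumes each individual contributes exactly one record, so adding or removing a person changes one count by 1.

```python
import random


def laplace_noise(scale, rng):
    # The difference of two independent Exp(1) draws is Laplace(0, 1).
    return scale * (rng.expovariate(1.0) - rng.expovariate(1.0))


def private_histogram(records, categories, epsilon, rng=None):
    """Return noisy per-category counts (hypothetical helper).

    Assumes one record per individual, so the L1 sensitivity of the
    count vector is 1 and Laplace noise of scale 1/epsilon suffices.
    """
    rng = rng or random.Random()
    counts = {c: 0 for c in categories}
    for r in records:
        if r in counts:
            counts[r] += 1
    scale = 1.0 / epsilon
    return {c: n + laplace_noise(scale, rng) for c, n in counts.items()}


if __name__ == "__main__":
    # Hypothetical age-group labels for a case surveillance table.
    cases = ["0-17", "18-49", "18-49", "50+", "18-49"]
    print(private_histogram(cases, ["0-17", "18-49", "50+"], epsilon=1.0))
```

Smaller values of `epsilon` give stronger privacy but noisier counts, which is the utility trade-off the simulation studies examine.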

Federated Learning (FL) provides a promising distributed learning paradigm, since it seeks to protect users' privacy by not sharing their private training data. Recent research has demonstrated, however, that FL is susceptible to model inversion attacks, which can reconstruct users' private data by eavesdropping on shared gradients. Existing defense solutions cannot survive stronger attacks and exhibit a poor trade-off between privacy and performance. In this paper, we present a straightforward yet effective defense strategy based on obfuscating the gradients of sensitive data with concealing data. Specifically, we alter a few samples within a mini batch to mimic the sensitive data at the gradient levels. Using a gradient projection technique, our method seeks to obscure sensitive data without sacrificing FL performance. Our extensive evaluations demonstrate that, compared to other defenses, our technique offers the highest level of protection while preserving FL performance. Our source code is located in the repository.

Low Earth Orbit (LEO) satellite constellations have seen a surge in deployment over the past few years by virtue of their ability to provide broadband Internet access as well as to collect vast amounts of Earth observational data that can be utilised to develop AI on a global scale. As traditional machine learning (ML) approaches, which train a model by downloading satellite data to a ground station (GS), are not practical, Federated Learning (FL) offers a potential solution. However, existing FL approaches cannot be readily used because of excessively prolonged training time and unreliable satellite-GS communication channels. In this paper, we propose FedHAP by introducing high-altitude platforms (HAPs) as distributed parameter servers (PSs) into FL for Satcom (or more concretely LEO constellations), to achieve fast and efficient model training. FedHAP consists of three components: 1) a layered communication topology, 2) a model propagation algorithm, and 3) a model aggregation algorithm. Our extensive simulations demonstrate that FedHAP significantly accelerates FL model convergence as compared to state-of-the-art baselines, cutting the training time from several days down to a few hours yet achieving higher accuracy.

Spatio-temporal representation learning is critical for video self-supervised representation. Recent approaches mainly use contrastive learning and pretext tasks. However, these approaches learn representation by discriminating sampled instances via feature similarity in the latent space while ignoring the intermediate state of the learned representations, which limits the overall performance. In this work, taking into account the degree of similarity of sampled instances as the intermediate state, we propose a novel pretext task - spatio-temporal overlap rate (STOR) prediction. It stems from the observation that humans are capable of discriminating the overlap rates of videos in space and time. This task encourages the model to discriminate the STOR of two generated samples to learn the representations. Moreover, we employ a joint optimization combining pretext tasks with contrastive learning to further enhance the spatio-temporal representation learning. We also study the mutual influence of each component in the proposed scheme. Extensive experiments demonstrate that our proposed STOR task can favor both contrastive learning and pretext tasks. The joint optimization scheme can significantly improve the spatio-temporal representation in video understanding. The code is available at //github.com/Katou2/CSTP.
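
To make the idea of an overlap rate between two sampled clips concrete, here is a minimal sketch. The abstract does not say how the rate is computed, so this assumes clips are axis-aligned space-time boxes and uses intersection over union; the function names and the `(t0, t1, y0, y1, x0, x1)` layout are hypothetical.

```python
def interval_overlap(a0, a1, b0, b1):
    # Length of the overlap between intervals [a0, a1] and [b0, b1].
    return max(0.0, min(a1, b1) - max(a0, b0))


def overlap_rate(clip_a, clip_b):
    """Intersection-over-union of two space-time boxes.

    Each clip is (t0, t1, y0, y1, x0, x1): a frame range plus a
    spatial crop. This IoU definition is an assumption made for
    illustration, not the paper's stated formula.
    """
    inter = 1.0
    vol_a = 1.0
    vol_b = 1.0
    for axis in range(3):
        a0, a1 = clip_a[2 * axis], clip_a[2 * axis + 1]
        b0, b1 = clip_b[2 * axis], clip_b[2 * axis + 1]
        inter *= interval_overlap(a0, a1, b0, b1)
        vol_a *= a1 - a0
        vol_b *= b1 - b0
    union = vol_a + vol_b - inter
    return inter / union if union > 0 else 0.0


if __name__ == "__main__":
    # Same 112x112 crop, 16-frame clips shifted by 8 frames.
    print(overlap_rate((0, 16, 0, 112, 0, 112), (8, 24, 0, 112, 0, 112)))
```

A value computed this way could serve as the regression or classification target for a pair of sampled clips, with 1 meaning identical samples and 0 meaning no shared content.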

Convolutional neural networks (CNNs) are the dominant deep neural network (DNN) architecture for computer vision. Recently, Transformer and multi-layer perceptron (MLP)-based models, such as Vision Transformer and MLP-Mixer, started to lead new trends as they showed promising results in the ImageNet classification task. In this paper, we conduct empirical studies on these DNN structures and try to understand their respective pros and cons. To ensure a fair comparison, we first develop a unified framework called SPACH which adopts separate modules for spatial and channel processing. Our experiments under the SPACH framework reveal that all structures can achieve competitive performance at a moderate scale. However, they demonstrate distinctive behaviors when the network size scales up. Based on our findings, we propose two hybrid models using convolution and Transformer modules. The resulting Hybrid-MS-S+ model achieves 83.9% top-1 accuracy with 63M parameters and 12.3G FLOPs. It is already on par with the SOTA models with sophisticated designs. The code and models will be made publicly available.

This paper focuses on the expected difference in a borrower's repayment when there is a change in the lender's credit decisions. Classical estimators overlook the confounding effects and hence the estimation error can be substantial. As such, we propose another approach to construct the estimators such that the error can be greatly reduced. The proposed estimators are shown to be unbiased, consistent, and robust through a combination of theoretical analysis and numerical testing. Moreover, we compare the power of estimating the causal quantities between the classical estimators and the proposed estimators. The comparison is tested across a wide range of models, including linear regression models, tree-based models, and neural network-based models, under different simulated datasets that exhibit different levels of causality, different degrees of nonlinearity, and different distributional properties. Most importantly, we apply our approaches to a large observational dataset provided by a global technology firm that operates in both the e-commerce and the lending business. We find that the relative reduction of estimation error is strikingly substantial if the causal effects are accounted for correctly.

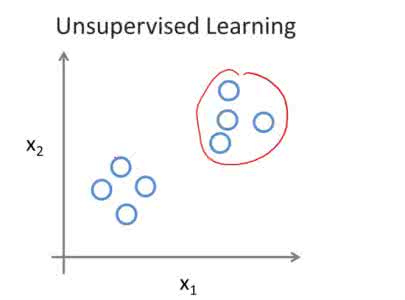

Transfer learning aims at improving the performance of target learners on target domains by transferring the knowledge contained in different but related source domains. In this way, the dependence on a large amount of target domain data can be reduced for constructing target learners. Due to the wide application prospects, transfer learning has become a popular and promising area in machine learning. Although there are already some valuable and impressive surveys on transfer learning, these surveys introduce approaches in a relatively isolated way and lack the recent advances in transfer learning. With the rapid expansion of the transfer learning area, it is both necessary and challenging to comprehensively review the relevant studies. This survey attempts to connect and systematize the existing transfer learning research, as well as to summarize and interpret the mechanisms and the strategies in a comprehensive way, which may help readers have a better understanding of the current research status and ideas. Different from previous surveys, this survey paper reviews over forty representative transfer learning approaches from the perspectives of data and model. The applications of transfer learning are also briefly introduced. In order to show the performance of different transfer learning models, twenty representative transfer learning models are used for experiments. The models are evaluated on three different datasets, i.e., Amazon Reviews, Reuters-21578, and Office-31. The experimental results demonstrate the importance of selecting appropriate transfer learning models for different applications in practice.

Image segmentation is still an open problem, especially when the intensities of the objects of interest overlap due to the presence of intensity inhomogeneity (also known as bias field). To segment images with intensity inhomogeneities, a bias correction embedded level set model is proposed where Inhomogeneities are Estimated by Orthogonal Primary Functions (IEOPF). In the proposed model, the smoothly varying bias is estimated by a linear combination of a given set of orthogonal primary functions. An inhomogeneous intensity clustering energy is then defined and membership functions of the clusters described by the level set function are introduced to rewrite the energy as a data term of the proposed model. Similar to popular level set methods, a regularization term and an arc length term are also included to regularize and smooth the level set function, respectively. The proposed model is then extended to multichannel and multiphase patterns to segment colour images and images with multiple objects, respectively. It has been extensively tested on both synthetic and real images that are widely used in the literature and on the public BrainWeb and IBSR datasets. Experimental results and comparison with state-of-the-art methods demonstrate the advantages of the proposed model in terms of bias correction and segmentation accuracy.
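
The bias estimate described above can be written compactly. The notation below is a sketch only: the symbols $I$, $J$, $b$, $n$, $w_k$, $g_k$, and $M$ are introduced here for illustration, and the multiplicative image model is the one commonly assumed in bias-correction work rather than something the abstract states.

```latex
% Observed image as true image times a smooth bias field, plus noise:
I(x) = b(x)\, J(x) + n(x), \qquad x \in \Omega .

% Bias field as a linear combination of M orthogonal primary functions:
b(x) = \sum_{k=1}^{M} w_k\, g_k(x) = \mathbf{w}^{\top} G(x),
\qquad
\int_{\Omega} g_j(x)\, g_k(x)\, dx = 0 \quad (j \neq k).
```

With a fixed set of smooth functions $g_k$, estimating the bias reduces to estimating the coefficient vector $\mathbf{w}$, and the smoothness of $b$ follows from the smoothness of the chosen functions.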