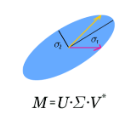

Heterogeneous functional data are commonly seen in time series and longitudinal data analysis. To capture the statistical structures of such data, we propose Functional Singular Value Decomposition (FSVD), a unified framework with structure-adaptive interpretability for the analysis of heterogeneous functional data. We establish the mathematical foundation of FSVD by proving its existence and deriving its fundamental properties using operator theory. We then develop an implementation approach for noisy and irregularly observed functional data based on a novel joint kernel ridge regression scheme and provide theoretical guarantees for its convergence and estimation accuracy. The framework of FSVD also introduces the concepts of intrinsic basis functions and intrinsic basis vectors, which represent two fundamental statistical structures for random functions and connect FSVD to various tasks including functional principal component analysis, factor models, functional clustering, and functional completion. We compare the performance of FSVD with existing methods in several tasks through extensive simulation studies. To demonstrate the value of FSVD in real-world datasets, we apply it to extract temporal patterns from a COVID-19 case count dataset and perform data completion on an electronic health record dataset.
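As a rough point of reference, the sketch below computes the leading singular triplet of an ordinary data matrix by power iteration, assuming every curve is observed without noise on one shared time grid. It does not implement FSVD or its joint kernel ridge regression; it only illustrates the classical matrix SVD that FSVD is named after, in which the left singular vector (one weight per curve) loosely plays the role of an intrinsic basis vector and the right singular vector that of a discretised intrinsic basis function. The function name `leading_singular_triplet` is hypothetical.

```python
import math


def leading_singular_triplet(Y, iters=200):
    """Power iteration on Y^T Y to get the top singular triplet (sigma, u, v).

    Y is an n x m list of lists: row i holds curve i sampled on a shared grid
    of m time points (an assumption; FSVD itself targets noisy, irregular data).
    Y must not be the zero matrix.
    """
    n, m = len(Y), len(Y[0])
    v = [1.0 / math.sqrt(m)] * m  # initial guess for the right singular vector
    for _ in range(iters):
        # u = Y v, then w = Y^T u; normalising w gives the next estimate of v
        u = [sum(Y[i][j] * v[j] for j in range(m)) for i in range(n)]
        w = [sum(Y[i][j] * u[i] for i in range(n)) for j in range(m)]
        norm_w = math.sqrt(sum(x * x for x in w))
        v = [x / norm_w for x in w]
    # Recover the singular value and the unit left singular vector from Y v
    u = [sum(Y[i][j] * v[j] for j in range(m)) for i in range(n)]
    sigma = math.sqrt(sum(x * x for x in u))
    u = [x / sigma for x in u]
    return sigma, u, v
```

For a rank-one matrix built as the outer product of per-curve weights `[1, 2, 2]` and a two-point "function" `[3, 4]`, the routine returns `sigma = 15` with `u = [1/3, 2/3, 2/3]` and `v = [0.6, 0.8]`, i.e. the normalised weights and the normalised function.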

Related content

Machine unlearning in neural information retrieval (IR) systems requires removing specific data whilst maintaining model performance. Applying existing machine unlearning methods to IR may compromise retrieval effectiveness or inadvertently expose unlearning actions, because particular items disappear from the retrieved results presented to users. We formalise corrective unranking, which extends machine unlearning in the (neural) IR context by integrating substitute documents to preserve ranking integrity, and propose a novel teacher-student framework, Corrective unRanking Distillation (CuRD), for this task. CuRD (1) facilitates forgetting by adjusting the (trained) neural IR model so that its output relevance scores for to-be-forgotten samples mimic those of low-ranking, non-retrievable samples; (2) enables correction by fine-tuning the relevance scores of the substitute samples to closely match those of the corresponding to-be-forgotten samples; and (3) seeks to preserve performance on samples that are not targeted for forgetting. We evaluate CuRD on four neural IR models (BERTcat, BERTdot, ColBERT, PARADE) using the MS MARCO and TREC CAR datasets. Experiments with forget set sizes ranging from 1% to 20% of the training dataset demonstrate that CuRD outperforms seven state-of-the-art baselines in terms of forgetting and correction while maintaining model retention and generalisation capabilities.
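The three CuRD components can be pictured as a weighted sum of squared-error terms over relevance scores. The sketch below is a hypothetical objective written under that assumption: the score dictionaries, the scalar `low_score` standing in for the scores of low-ranking, non-retrievable samples, and the weights are illustrative choices, not the paper's actual losses.

```python
def mse(xs, ys):
    """Mean squared error between two equal-length score lists (0 if empty)."""
    if not xs:
        return 0.0
    return sum((x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def curd_style_loss(student, teacher, forget, substitutes, retain, low_score,
                    w_forget=1.0, w_correct=1.0, w_retain=1.0):
    """Hypothetical three-term distillation objective.

    student, teacher: dicts mapping (query, doc) pairs to relevance scores.
    forget: pairs to forget; substitutes[k] replaces forget[k] in the ranking.
    retain: pairs not targeted for forgetting.
    low_score: assumed target score of a low-ranking, non-retrievable sample.
    """
    # (1) forgetting: push the student's scores for forgotten pairs down to low_score
    l_forget = mse([student[p] for p in forget], [low_score] * len(forget))
    # (2) correction: each substitute inherits the teacher's score of its forgotten pair
    l_correct = mse([student[s] for s in substitutes], [teacher[p] for p in forget])
    # (3) retention: keep matching the teacher on all other pairs
    l_retain = mse([student[p] for p in retain], [teacher[p] for p in retain])
    return w_forget * l_forget + w_correct * l_correct + w_retain * l_retain
```

An unmodified student (equal to the teacher) is penalised for still ranking the forgotten document highly and for leaving its substitute at its old score, while a student that has swapped those scores incurs zero loss.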

Equivariant deep learning architectures exploit symmetries in learning problems to improve the sample efficiency of neural-network-based models and their ability to generalise. However, when modelling real-world data, learning problems are often only approximately equivariant rather than exactly so. For example, when estimating the global temperature field from weather station observations, local topographical features like mountains break translation equivariance. In these scenarios, it is desirable to construct architectures that can flexibly depart from exact equivariance in a data-driven way. Current approaches to achieving this usually cannot be applied out of the box to an arbitrary architecture and symmetry group. In this paper, we develop a general approach to achieving this using existing equivariant architectures. Our approach is agnostic to both the choice of symmetry group and model architecture, making it widely applicable. We consider the use of approximately equivariant architectures in neural processes (NPs), a popular family of meta-learning models. We demonstrate the effectiveness of our approach on a number of synthetic and real-world regression experiments, showing that approximately equivariant NP models can outperform both their non-equivariant and strictly equivariant counterparts.
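To make "departing from exact equivariance" concrete, the sketch below measures the translation-equivariance error of a circular 1-D convolution with and without an added position-dependent term. This is a generic illustration, not the construction proposed in the paper: `pos_bias` is a hypothetical per-location offset (think of a topographic feature) and `strength` controls how far the layer departs from exact equivariance.

```python
def circular_conv(x, kernel):
    """Translation-equivariant map on a periodic 1-D grid."""
    n, k = len(x), len(kernel)
    return [sum(kernel[j] * x[(i + j) % n] for j in range(k)) for i in range(n)]


def shift(x, s):
    """Cyclically shift the signal right by s positions."""
    n = len(x)
    return [x[(i - s) % n] for i in range(n)]


def approx_equivariant_layer(x, kernel, pos_bias, strength):
    """Equivariant convolution plus a position-dependent term scaled by `strength`.

    strength = 0 recovers exact translation equivariance; pos_bias is a
    hypothetical learned per-location offset that breaks the symmetry.
    """
    y = circular_conv(x, kernel)
    return [yi + strength * bi for yi, bi in zip(y, pos_bias)]


def equivariance_error(layer, x, s):
    """max |layer(shift(x)) - shift(layer(x))| for a cyclic shift of s positions."""
    a = layer(shift(x, s))
    b = shift(layer(x), s)
    return max(abs(p - q) for p, q in zip(a, b))
```

With `strength = 0` the error is exactly zero; with a non-zero strength it grows with the variation of `pos_bias` across shifted positions, so a data-driven model could learn how much symmetry breaking the data supports.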

Depth measures quantify central tendency in the analysis of statistical and geometric data. Selecting a depth measure that is simple and efficiently computable is often important, e.g., when calculating depth for multiple query points or when applied to large sets of data. In this work, we introduce \emph{Hyperplane Distance Depth (HDD)}, which measures the centrality of a query point $q$ relative to a given set $P$ of $n$ points in $\mathbb{R}^d$, defined as the sum of the distances from $q$ to all $\binom{n}{d}$ hyperplanes determined by points in $P$. We present algorithms for calculating the HDD of an arbitrary query point $q$ relative to $P$ in $O(d \log n)$ time after preprocessing $P$, and for finding a median point of $P$ in $O(d n^{d^2} \log n)$ time. We study various properties of hyperplane distance depth and show that it is convex, symmetric, and vanishing at infinity.
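For the planar case ($d = 2$) the definition can be evaluated directly by summing point-to-line distances over all $\binom{n}{2}$ pairs. The brute-force sketch below assumes the points of $P$ are distinct and costs $O(n^2)$ per query; it does not reproduce the paper's $O(d \log n)$ query algorithm or any normalisation the paper may apply to the raw sum. The function name is hypothetical.

```python
import math
from itertools import combinations


def hdd_sum_2d(q, points):
    """Sum of distances from q to every line through two distinct points of P (d = 2)."""
    total = 0.0
    for (x1, y1), (x2, y2) in combinations(points, 2):
        # Line through the two points written as a*x + b*y + c = 0,
        # where (a, b) is a normal vector to the segment direction.
        a, b = y2 - y1, x1 - x2
        c = -(a * x1 + b * y1)
        total += abs(a * q[0] + b * q[1] + c) / math.hypot(a, b)
    return total
```

For the corners of the unit square, the centre $(0.5, 0.5)$ lies on both diagonals and at distance $0.5$ from each of the four sides, giving a sum of $2.0$; a far-away query such as $(2, 2)$ yields a much larger sum.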

Disentangled representation learning in speech processing has lagged behind other domains, largely due to the lack of datasets with annotated generative factors for robust evaluation. To address this, we propose SynSpeech, a novel large-scale synthetic speech dataset specifically designed to enable research on disentangled speech representations. SynSpeech includes controlled variations in speaker identity, spoken text, and speaking style, with three dataset versions to support experimentation at different levels of complexity. In this study, we present a comprehensive framework to evaluate disentangled representation learning techniques, applying both linear probing and established supervised disentanglement metrics to assess the modularity, compactness, and explicitness of the representations learned by a state-of-the-art model. Using the RAVE model as a test case, we find that SynSpeech facilitates benchmarking across a range of factors: the model achieves promising disentanglement of simpler features such as gender and speaking style, while isolating complex attributes such as speaker identity remains challenging. This benchmark dataset and evaluation framework fill a critical gap, supporting the development of more robust and interpretable speech representation learning methods.

Graph learning architectures based on the k-dimensional Weisfeiler-Leman (k-WL) hierarchy offer a theoretically well-understood expressive power. However, such architectures often fail to deliver solid predictive performance on real-world tasks, limiting their practical impact. In contrast, global attention-based models such as graph transformers demonstrate strong performance in practice, but comparing their expressive power with the k-WL hierarchy remains challenging, particularly since these architectures rely on positional or structural encodings for their expressivity and predictive performance. To address this, we show that the recently proposed Edge Transformer, a global attention model operating on node pairs instead of nodes, has at least 3-WL expressive power. Empirically, we demonstrate that the Edge Transformer surpasses other theoretically aligned architectures in predictive performance while not relying on positional or structural encodings. Our code is available at https://github.com/luis-mueller/towards-principled-gts
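The pair-based attention of the Edge Transformer updates the representation of a pair $(i, j)$ by attending over intermediate nodes $l$ and combining the pairs $(i, l)$ and $(l, j)$. The sketch below shows a single head of this "triangular" attention with identity query, key, and value projections, no output projection, and no residual connections or normalisation; these simplifications are assumptions for readability, and the function name is hypothetical.

```python
import math


def triangular_attention(X):
    """One head of pair-to-pair attention with identity projections.

    X[i][j] is a feature vector (list of floats) for the node pair (i, j).
    The output for (i, j) aggregates over every intermediate node l,
    combining the features of pairs (i, l) and (l, j).
    """
    n = len(X)
    d = len(X[0][0])
    Y = [[None] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            # One attention logit per intermediate node l: <x_il, x_lj> / sqrt(d)
            logits = [sum(a * b for a, b in zip(X[i][l], X[l][j])) / math.sqrt(d)
                      for l in range(n)]
            m = max(logits)  # subtract the max for a numerically stable softmax
            weights = [math.exp(s - m) for s in logits]
            z = sum(weights)
            # Value for l: elementwise product of the two pair features
            Y[i][j] = [sum(weights[l] / z * X[i][l][k] * X[l][j][k] for l in range(n))
                       for k in range(d)]
    return Y
```

Because every pair attends over all $n$ intermediate nodes, one layer costs $O(n^3 d)$, which is the price paid for reasoning over node triples rather than single nodes.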

Accurate approximation of a real-valued function depends on two aspects of the available data: the density of inputs within the domain of interest and the variation of the outputs over that domain. There are few methods for assessing whether the density of inputs is \textit{sufficient} to identify the relevant variations in outputs -- i.e., the ``geometric scale'' of the function -- despite the fact that sampling density is closely tied to the success or failure of an approximation method. In this paper, we introduce a general-purpose computational approach to detecting the geometric scale of real-valued functions over a fixed domain using a deterministic interpolation technique from computational geometry. The algorithm is intended to work on scalar data in moderate dimensions (2-10). Our algorithm is based on the observation that a sequence of piecewise linear interpolants will converge to a continuous function at a quadratic rate (in $L^2$ norm) if and only if the data are sampled densely enough to distinguish the feature from noise (assuming sufficiently regular sampling). We present numerical experiments demonstrating how our method can identify feature scale, estimate uncertainty in feature scale, and assess the sampling density for fixed (i.e., static) datasets of input-output pairs. We include analytical results in support of our numerical findings and have released lightweight code that can be adapted for use in a variety of data science settings.
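The convergence criterion can be tried out in one dimension, where a piecewise linear interpolant is simply linear interpolation between sorted knots. The sketch below, a simplified stand-in for the paper's higher-dimensional interpolation technique, builds interpolants on uniform grids whose spacing halves at each step, estimates the $L^2$ error on $[0, 1]$ with a midpoint rule, and reports the observed rate $\log_2(e_k / e_{k+1})$. The helper names and grid sizes are illustrative assumptions.

```python
import math


def pl_interpolate(xs, ys, x):
    """Evaluate the piecewise linear interpolant through sorted knots (xs, ys)."""
    for k in range(len(xs) - 1):
        if xs[k] <= x <= xs[k + 1]:
            t = (x - xs[k]) / (xs[k + 1] - xs[k])
            return (1 - t) * ys[k] + t * ys[k + 1]
    raise ValueError("x outside the interpolation range")


def l2_error(f, n_knots, n_eval=2000):
    """Approximate L2 error on [0, 1] of the interpolant through n_knots uniform samples."""
    xs = [k / (n_knots - 1) for k in range(n_knots)]
    ys = [f(x) for x in xs]
    h = 1.0 / n_eval
    # Midpoint rule for the integral of the squared interpolation error
    sq = sum((f((i + 0.5) * h) - pl_interpolate(xs, ys, (i + 0.5) * h)) ** 2
             for i in range(n_eval))
    return math.sqrt(sq * h)


def observed_rates(f, knot_counts):
    """log2 ratio of successive errors; near 2 for quadratic convergence when spacing halves."""
    errs = [l2_error(f, n) for n in knot_counts]
    return [math.log2(e0 / e1) for e0, e1 in zip(errs, errs[1:])]
```

For a smooth function such as $\sin(2\pi x)$ on grids of 9, 17, 33, and 65 knots, the observed rates sit close to 2, consistent with quadratic convergence; when the spacing is too coarse for a function's oscillations, the rates need not approach 2, which is the signal the method exploits.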

We introduce a family of boundary conditions and point constraints for conformal immersions that increase the controllability of surfaces defined as minimizers of conformal variational problems. Our free boundary conditions fix the metric on the boundary, up to a global scale, and admit a discretization compatible with discrete conformal equivalence. We also introduce constraints on the conformal scale factor, enforcing rigidity of the geometry in regions of interest, and describe how, in the presence of point constraints, the conformal class encodes knot points of the spline that can be directly manipulated. To control the tangent planes, we introduce flux constraints balancing the internal material stresses. The collection of these point constraints provides intuitive controls for exploring a subspace of conformal immersions interpolating a fixed set of points in space. We demonstrate the applicability of our framework to geometric modeling, mathematical visualization, and form finding.

Disentangled Representation Learning (DRL) aims to learn a model capable of identifying and disentangling the underlying factors hidden in observable data in representation form. Separating the underlying factors of variation into variables with semantic meaning benefits the learning of explainable representations of data, imitating the meaningful understanding process of humans when they observe an object or relation. As a general learning strategy, DRL has demonstrated its power in improving model explainability, controllability, robustness, and generalization capacity in a wide range of scenarios such as computer vision, natural language processing, and data mining. In this article, we comprehensively review DRL from various aspects including motivations, definitions, methodologies, evaluations, applications, and model designs. We discuss works on DRL based on two well-recognized definitions, i.e., the Intuitive Definition and the Group Theory Definition. We further categorize the methodologies for DRL into four groups, i.e., Traditional Statistical Approaches, Variational Auto-encoder Based Approaches, Generative Adversarial Networks Based Approaches, and Hierarchical Approaches, together with a category of Other Approaches. We also analyze principles for designing DRL models that may benefit different tasks in practical applications. Finally, we point out challenges in DRL as well as potential research directions deserving future investigation. We believe this work may provide insights for promoting DRL research in the community.

We investigate a lattice-structured LSTM model for Chinese NER, which encodes a sequence of input characters as well as all potential words that match a lexicon. Compared with character-based methods, our model explicitly leverages word and word sequence information. Compared with word-based methods, lattice LSTM does not suffer from segmentation errors. Gated recurrent cells allow our model to choose the most relevant characters and words from a sentence for better NER results. Experiments on various datasets show that lattice LSTM outperforms both word-based and character-based LSTM baselines, achieving the best results.
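The lattice input starts from all lexicon words that match a substring of the character sequence. The sketch below enumerates such matches by brute force; the toy lexicon, the `max_word_len` cap, and the function name are illustrative assumptions, and the gated word and character cells that consume these matches are not shown.

```python
def lexicon_matches(chars, lexicon, max_word_len=4):
    """Return (start, end, word) for every lexicon word matching a substring.

    Spans use inclusive character indices. Single characters are skipped
    because the character sequence itself already covers them.
    """
    matches = []
    n = len(chars)
    for start in range(n):
        # Candidate words of length 2 .. max_word_len beginning at `start`
        for end in range(start + 1, min(n, start + max_word_len)):
            word = "".join(chars[start:end + 1])
            if word in lexicon:
                matches.append((start, end, word))
    return matches
```

For the characters of 南京市长江大桥 and a toy lexicon {南京, 南京市, 市长, 长江, 长江大桥, 大桥}, it returns the spans (0, 1) 南京, (0, 2) 南京市, (2, 3) 市长, (3, 4) 长江, (3, 6) 长江大桥, and (5, 6) 大桥: overlapping, mutually incompatible segmentations that the lattice keeps side by side instead of committing to one.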

The dominant sequence transduction models are based on complex recurrent or convolutional neural networks in an encoder-decoder configuration. The best performing models also connect the encoder and decoder through an attention mechanism. We propose a new, simple network architecture, the Transformer, based solely on attention mechanisms, dispensing with recurrence and convolutions entirely. Experiments on two machine translation tasks show these models to be superior in quality while being more parallelizable and requiring significantly less time to train. Our model achieves 28.4 BLEU on the WMT 2014 English-to-German translation task, improving over the existing best results, including ensembles, by over 2 BLEU. On the WMT 2014 English-to-French translation task, our model establishes a new single-model state-of-the-art BLEU score of 41.8 after training for 3.5 days on eight GPUs, a small fraction of the training costs of the best models from the literature. We show that the Transformer generalizes well to other tasks by applying it successfully to English constituency parsing both with large and limited training data.
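The core operation of the Transformer is scaled dot-product attention, $\mathrm{softmax}(QK^\top / \sqrt{d_k})\,V$. The sketch below implements a single head in plain Python on lists of row vectors, without the learned projections, masking, or multi-head concatenation of the full model; the function names are illustrative.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_product_attention(Q, K, V):
    """softmax(Q K^T / sqrt(d_k)) V, with Q, K, V given as lists of row vectors."""
    d_k = len(K[0])
    out = []
    for q in Q:
        # One score per key, scaled by sqrt(d_k) to keep logits in a moderate range
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d_k) for k in K]
        w = softmax(scores)
        # Output row = attention-weighted average of the value rows
        out.append([sum(w[i] * V[i][c] for i in range(len(V)))
                    for c in range(len(V[0]))])
    return out
```

A query orthogonal to all keys spreads its weight uniformly and returns the mean of the value rows, while a query strongly aligned with one key returns approximately that key's value row. Since every query row is processed independently, the loop over `Q` is exactly what the full model evaluates in parallel as a matrix product.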