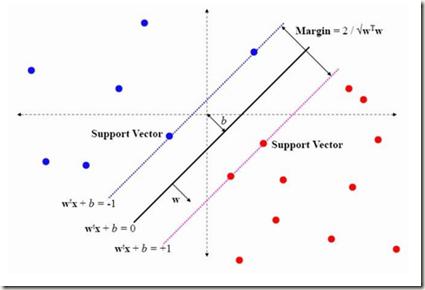

Interest in bilevel optimization has grown in recent years, partially due to its applications to tackle challenging machine-learning problems. Several exciting recent works have been centered around developing efficient gradient-based algorithms that can solve bilevel optimization problems with provable guarantees. However, the existing literature mainly focuses on bilevel problems either without constraints, or featuring only simple constraints that do not couple variables across the upper and lower levels, excluding a range of complex applications. Our paper studies this challenging but less explored scenario and develops a (fully) first-order algorithm, which we term BLOCC, to tackle BiLevel Optimization problems with Coupled Constraints. We establish rigorous convergence theory for the proposed algorithm and demonstrate its effectiveness on two well-known real-world applications - hyperparameter selection in support vector machine (SVM) and infrastructure planning in transportation networks using the real data from the city of Seville.

相關內容

Perfect synchronization in distributed machine learning problems is inefficient and even impossible due to the existence of latency, package losses and stragglers. We propose a Robust Fully-Asynchronous Stochastic Gradient Tracking method (R-FAST), where each device performs local computation and communication at its own pace without any form of synchronization. Different from existing asynchronous distributed algorithms, R-FAST can eliminate the impact of data heterogeneity across devices and allow for packet losses by employing a robust gradient tracking strategy that relies on properly designed auxiliary variables for tracking and buffering the overall gradient vector. More importantly, the proposed method utilizes two spanning-tree graphs for communication so long as both share at least one common root, enabling flexible designs in communication architectures. We show that R-FAST converges in expectation to a neighborhood of the optimum with a geometric rate for smooth and strongly convex objectives; and to a stationary point with a sublinear rate for general non-convex settings. Extensive experiments demonstrate that R-FAST runs 1.5-2 times faster than synchronous benchmark algorithms, such as Ring-AllReduce and D-PSGD, while still achieving comparable accuracy, and outperforms existing asynchronous SOTA algorithms, such as AD-PSGD and OSGP, especially in the presence of stragglers.

As quantum machine learning continues to develop at a rapid pace, the importance of ensuring the robustness and efficiency of quantum algorithms cannot be overstated. Our research presents an analysis of quantum randomized smoothing, how data encoding and perturbation modeling approaches can be matched to achieve meaningful robustness certificates. By utilizing an innovative approach integrating Grover's algorithm, a quadratic sampling advantage over classical randomized smoothing is achieved. This strategy necessitates a basis state encoding, thus restricting the space of meaningful perturbations. We show how constrained $k$-distant Hamming weight perturbations are a suitable noise distribution here, and elucidate how they can be constructed on a quantum computer. The efficacy of the proposed framework is demonstrated on a time series classification task employing a Bag-of-Words pre-processing solution. The advantage of quadratic sample reduction is recovered especially in the regime with large number of samples. This may allow quantum computers to efficiently scale randomized smoothing to more complex tasks beyond the reach of classical methods.

Knowledge distillation optimises a smaller student model to behave similarly to a larger teacher model, retaining some of the performance benefits. While this method can improve results on in-distribution examples, it does not necessarily generalise to out-of-distribution (OOD) settings. We investigate two complementary methods for improving the robustness of the resulting student models on OOD domains. The first approach augments the distillation with generated unlabelled examples that match the target distribution. The second method upsamples data points among the training set that are similar to the target distribution. When applied on the task of natural language inference (NLI), our experiments on MNLI show that distillation with these modifications outperforms previous robustness solutions. We also find that these methods improve performance on OOD domains even beyond the target domain.

The aim in many sciences is to understand the mechanisms that underlie the observed distribution of variables, starting from a set of initial hypotheses. Causal discovery allows us to infer mechanisms as sets of cause and effect relationships in a generalized way -- without necessarily tailoring to a specific domain. Causal discovery algorithms search over a structured hypothesis space, defined by the set of directed acyclic graphs, to find the graph that best explains the data. For high-dimensional problems, however, this search becomes intractable and scalable algorithms for causal discovery are needed to bridge the gap. In this paper, we define a novel causal graph partition that allows for divide-and-conquer causal discovery with theoretical guarantees. We leverage the idea of a superstructure -- a set of learned or existing candidate hypotheses -- to partition the search space. We prove under certain assumptions that learning with a causal graph partition always yields the Markov Equivalence Class of the true causal graph. We show our algorithm achieves comparable accuracy and a faster time to solution for biologically-tuned synthetic networks and networks up to ${10^4}$ variables. This makes our method applicable to gene regulatory network inference and other domains with high-dimensional structured hypothesis spaces.

The pursuit of long-term autonomy mandates that machine learning models must continuously adapt to their changing environments and learn to solve new tasks. Continual learning seeks to overcome the challenge of catastrophic forgetting, where learning to solve new tasks causes a model to forget previously learnt information. Prior-based continual learning methods are appealing as they are computationally efficient and do not require auxiliary models or data storage. However, prior-based approaches typically fail on important benchmarks and are thus limited in their potential applications compared to their memory-based counterparts. We introduce Bayesian adaptive moment regularization (BAdam), a novel prior-based method that better constrains parameter growth, reducing catastrophic forgetting. Our method boasts a range of desirable properties such as being lightweight and task label-free, converging quickly, and offering calibrated uncertainty that is important for safe real-world deployment. Results show that BAdam achieves state-of-the-art performance for prior-based methods on challenging single-headed class-incremental experiments such as Split MNIST and Split FashionMNIST, and does so without relying on task labels or discrete task boundaries.

Quantum computing holds immense potential for solving classically intractable problems by leveraging the unique properties of quantum mechanics. The scalability of quantum architectures remains a significant challenge. Multi-core quantum architectures are proposed to solve the scalability problem, arising a new set of challenges in hardware, communications and compilation, among others. One of these challenges is to adapt a quantum algorithm to fit within the different cores of the quantum computer. This paper presents a novel approach for circuit partitioning using Deep Reinforcement Learning, contributing to the advancement of both quantum computing and graph partitioning. This work is the first step in integrating Deep Reinforcement Learning techniques into Quantum Circuit Mapping, opening the door to a new paradigm of solutions to such problems.

Virtual scenario-based testing methods to validate autonomous driving systems are predominantly centred around collision avoidance, and lack a comprehensive approach to evaluate optimal driving behaviour holistically. Furthermore, current validation approaches do not align with authorisation and monitoring requirements put forth by regulatory bodies. We address these validation gaps by outlining a universal evaluation framework that: incorporates the notion of careful and competent driving, unifies behavioural competencies and evaluation criteria, and is amenable at a scenario-specific and aggregate behaviour level. This framework can be leveraged to evaluate optimal driving in scenario-based testing, and for post-deployment monitoring to ensure continual compliance with regulation and safety standards.

Cross-attention has become a fundamental module nowadays in many important artificial intelligence applications, e.g., retrieval-augmented generation (RAG), system prompt, guided stable diffusion, and many so on. Ensuring cross-attention privacy is crucial and urgently needed because its key and value matrices may contain sensitive information about companies and their users, many of which profit solely from their system prompts or RAG data. In this work, we design a novel differential privacy (DP) data structure to address the privacy security of cross-attention with a theoretical guarantee. In detail, let $n$ be the input token length of system prompt/RAG data, $d$ be the feature dimension, $0 < \alpha \le 1$ be the relative error parameter, $R$ be the maximum value of the query and key matrices, $R_w$ be the maximum value of the value matrix, and $r,s,\epsilon_s$ be parameters of polynomial kernel methods. Then, our data structure requires $\widetilde{O}(ndr^2)$ memory consumption with $\widetilde{O}(nr^2)$ initialization time complexity and $\widetilde{O}(\alpha^{-1} r^2)$ query time complexity for a single token query. In addition, our data structure can guarantee that the user query is $(\epsilon, \delta)$-DP with $\widetilde{O}(n^{-1} \epsilon^{-1} \alpha^{-1/2} R^{2s} R_w r^2)$ additive error and $n^{-1} (\alpha + \epsilon_s)$ relative error between our output and the true answer. Furthermore, our result is robust to adaptive queries in which users can intentionally attack the cross-attention system. To our knowledge, this is the first work to provide DP for cross-attention. We believe it can inspire more privacy algorithm design in large generative models (LGMs).

In the application of deep learning for malware classification, it is crucial to account for the prevalence of malware evolution, which can cause trained classifiers to fail on drifted malware. Existing solutions to combat concept drift use active learning: they select new samples for analysts to label, and then retrain the classifier with the new labels. Our key finding is, the current retraining techniques do not achieve optimal results. These models overlook that updating the model with scarce drifted samples requires learning features that remain consistent across pre-drift and post-drift data. Furthermore, the model should be capable of disregarding specific features that, while beneficial for classification of pre-drift data, are absent in post-drift data, thereby preventing prediction degradation. In this paper, we propose a method that learns retained information in malware control flow graphs post-drift by leveraging graph neural network with adversarial domain adaptation. Our approach considers drift-invariant features within assembly instructions and flow of code execution. We further propose building blocks for more robust evaluation of drift adaptation techniques that computes statistically distant malware clusters. Our approach is compared with the previously published training methods in active learning systems, and the other domain adaptation technique. Our approach demonstrates a significant enhancement in predicting unseen malware family in a binary classification task and predicting drifted malware families in a multi-class setting. In addition, we assess alternative malware representations. The best results are obtained when our adaptation method is applied to our graph representations.

Face recognition technology has advanced significantly in recent years due largely to the availability of large and increasingly complex training datasets for use in deep learning models. These datasets, however, typically comprise images scraped from news sites or social media platforms and, therefore, have limited utility in more advanced security, forensics, and military applications. These applications require lower resolution, longer ranges, and elevated viewpoints. To meet these critical needs, we collected and curated the first and second subsets of a large multi-modal biometric dataset designed for use in the research and development (R&D) of biometric recognition technologies under extremely challenging conditions. Thus far, the dataset includes more than 350,000 still images and over 1,300 hours of video footage of approximately 1,000 subjects. To collect this data, we used Nikon DSLR cameras, a variety of commercial surveillance cameras, specialized long-rage R&D cameras, and Group 1 and Group 2 UAV platforms. The goal is to support the development of algorithms capable of accurately recognizing people at ranges up to 1,000 m and from high angles of elevation. These advances will include improvements to the state of the art in face recognition and will support new research in the area of whole-body recognition using methods based on gait and anthropometry. This paper describes methods used to collect and curate the dataset, and the dataset's characteristics at the current stage.