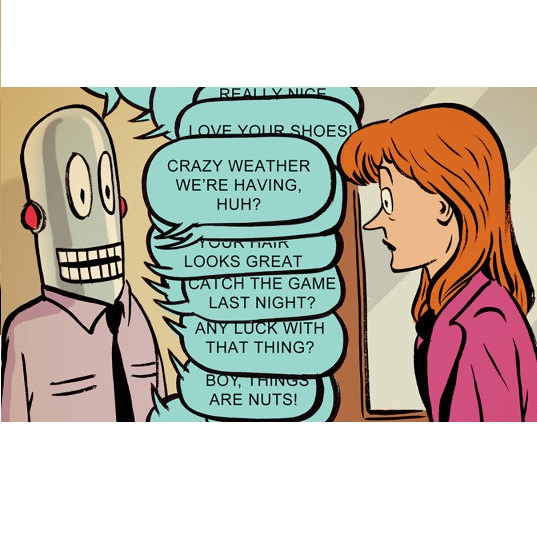

Tabular data is the most common format to publish and exchange structured data online. A clear example is the growing number of open data portals published by all types of public administrations. However, exploitation of these data sources is currently limited to technical people able to programmatically manipulate and digest such data. As an alternative, we propose the use of chatbots to offer a conversational interface to facilitate the exploration of tabular data sources. With our approach, any regular citizen can benefit and leverage them. Moreover, our chatbots are not manually created: instead, they are automatically generated from the data source itself thanks to the instantiation of a configurable collection of conversation patterns.

相關內容

Semantic image synthesis (SIS) refers to the problem of generating realistic imagery given a semantic segmentation mask that defines the spatial layout of object classes. Most of the approaches in the literature, other than the quality of the generated images, put effort in finding solutions to increase the generation diversity in terms of style i.e. texture. However, they all neglect a different feature, which is the possibility of manipulating the layout provided by the mask. Currently, the only way to do so is manually by means of graphical users interfaces. In this paper, we describe a network architecture to address the problem of automatically manipulating or generating the shape of object classes in semantic segmentation masks, with specific focus on human faces. Our proposed model allows embedding the mask class-wise into a latent space where each class embedding can be independently edited. Then, a bi-directional LSTM block and a convolutional decoder output a new, locally manipulated mask. We report quantitative and qualitative results on the CelebMask-HQ dataset, which show our model can both faithfully reconstruct and modify a segmentation mask at the class level. Also, we show our model can be put before a SIS generator, opening the way to a fully automatic generation control of both shape and texture. Code available at //github.com/TFonta/Semantic-VAE.

The heterogeneous, geographically distributed infrastructure of fog computing poses challenges in data replication, data distribution, and data mobility for fog applications. Fog computing is still missing the necessary abstractions to manage application data, and fog application developers need to re-implement data management for every new piece of software. Proposed solutions are limited to certain application domains, such as the IoT, are not flexible in regard to network topology, or do not provide the means for applications to control the movement of their data. In this paper, we present FReD, a data replication middleware for the fog. FReD serves as a building block for configurable fog data distribution and enables low-latency, high-bandwidth, and privacy-sensitive applications. FReD is a common data access interface across heterogeneous infrastructure and network topologies, provides transparent and controllable data distribution, and can be integrated with applications from different domains. To evaluate our approach, we present a prototype implementation of FReD and show the benefits of developing with FReD using three case studies of fog computing applications.

Making data and metadata FAIR (Findable, Accessible, Interoperable, Reusable) has become an important objective in research and industry, and knowledge graphs and ontologies have been cornerstones in many going-FAIR strategies. In this process, however, human-actionability of data and metadata has been lost sight of. Here, in the first part, I discuss two issues exemplifying the lack of human-actionability in knowledge graphs and I suggest adding the Principle of human Explorability to extend FAIR to the FAIREr Guiding Principles. Moreover, in its interoperability framework and as part of its GoingFAIR strategy, the European Open Science Cloud initiative distinguishes between technical, semantic, organizational, and legal interoperability and I argue to add cognitive interoperability. In the second part, I provide a short introduction to semantic units and discuss how they increase the human explorability and cognitive interoperability of knowledge graphs. Semantic units structure a knowledge graph into identifiable and semantically meaningful subgraphs, each represented with its own resource that instantiates a corresponding semantic unit class. Three categories of semantic units can be distinguished: Statement units model individual propositions, compound units are semantically meaningful collections of semantic units, and question units model questions that translate into queries. I conclude with discussing how semantic units provide a framework for the development of innovative user interfaces that support exploring and accessing information in the graph by reducing its complexity to what currently interests the user, thereby significantly increasing the cognitive interoperability and thus human-actionability of knowledge graphs.

Cross-Modal learning tasks have picked up pace in recent times. With plethora of applications in diverse areas, generation of novel content using multiple modalities of data has remained a challenging problem. To address the same, various generative modelling techniques have been proposed for specific tasks. Novel and creative image generation is one important aspect for industrial application which could help as an arm for novel content generation. Techniques proposed previously used Generative Adversarial Network(GAN), autoregressive models and Variational Autoencoders (VAE) for accomplishing similar tasks. These approaches are limited in their capability to produce images guided by either text instructions or rough sketch images decreasing the overall performance of image generator. We used state of the art diffusion models to generate creative art by primarily leveraging text with additional support of rough sketches. Diffusion starts with a pattern of random dots and slowly converts that pattern into a design image using the guiding information fed into the model. Diffusion models have recently outperformed other generative models in image generation tasks using cross modal data as guiding information. The initial experiments for this task of novel image generation demonstrated promising qualitative results.

We expect the generalization error to improve with more samples from a similar task, and to deteriorate with more samples from an out-of-distribution (OOD) task. In this work, we show a counter-intuitive phenomenon: the generalization error of a task can be a non-monotonic function of the number of OOD samples. As the number of OOD samples increases, the generalization error on the target task improves before deteriorating beyond a threshold. In other words, there is value in training on small amounts of OOD data. We use Fisher's Linear Discriminant on synthetic datasets and deep networks on computer vision benchmarks such as MNIST, CIFAR-10, CINIC-10, PACS and DomainNet to demonstrate and analyze this phenomenon. In the idealistic setting where we know which samples are OOD, we show that these non-monotonic trends can be exploited using an appropriately weighted objective of the target and OOD empirical risk. While its practical utility is limited, this does suggest that if we can detect OOD samples, then there may be ways to benefit from them. When we do not know which samples are OOD, we show how a number of go-to strategies such as data-augmentation, hyper-parameter optimization, and pre-training are not enough to ensure that the target generalization error does not deteriorate with the number of OOD samples in the dataset.

Recommendation systems are ubiquitous yet often difficult for users to control and adjust when recommendation quality is poor. This has motivated the development of conversational recommendation systems (CRSs), with control over recommendations provided through natural language feedback. However, building conversational recommendation systems requires conversational training data involving user utterances paired with items that cover a diverse range of preferences. Such data has proved challenging to collect scalably using conventional methods like crowdsourcing. We address it in the context of item-set recommendation, noting the increasing attention to this task motivated by use cases like music, news and recipe recommendation. We present a new technique, TalkTheWalk, that synthesizes realistic high-quality conversational data by leveraging domain expertise encoded in widely available curated item collections, showing how these can be transformed into corresponding item set curation conversations. Specifically, TalkTheWalk generates a sequence of hypothetical yet plausible item sets returned by a system, then uses a language model to produce corresponding user utterances. Applying TalkTheWalk to music recommendation, we generate over one million diverse playlist curation conversations. A human evaluation shows that the conversations contain consistent utterances with relevant item sets, nearly matching the quality of small human-collected conversational data for this task. At the same time, when the synthetic corpus is used to train a CRS, it improves Hits@100 by 10.5 points on a benchmark dataset over standard baselines and is preferred over the top-performing baseline in an online evaluation.

Large amounts of tabular data remain underutilized due to privacy, data quality, and data sharing limitations. While training a generative model producing synthetic data resembling the original distribution addresses some of these issues, most applications require additional constraints from the generated data. Existing synthetic data approaches are limited as they typically only handle specific constraints, e.g., differential privacy (DP) or increased fairness, and lack an accessible interface for declaring general specifications. In this work, we introduce ProgSyn, the first programmable synthetic tabular data generation algorithm that allows for comprehensive customization over the generated data. To ensure high data quality while adhering to custom specifications, ProgSyn pre-trains a generative model on the original dataset and fine-tunes it on a differentiable loss automatically derived from the provided specifications. These can be programmatically declared using statistical and logical expressions, supporting a wide range of requirements (e.g., DP or fairness, among others). We conduct an extensive experimental evaluation of ProgSyn on a number of constraints, achieving a new state-of-the-art on some, while remaining general. For instance, at the same fairness level we achieve 2.3% higher downstream accuracy than the state-of-the-art in fair synthetic data generation on the Adult dataset. Overall, ProgSyn provides a versatile and accessible framework for generating constrained synthetic tabular data, allowing for specifications that generalize beyond the capabilities of prior work.

AI is undergoing a paradigm shift with the rise of models (e.g., BERT, DALL-E, GPT-3) that are trained on broad data at scale and are adaptable to a wide range of downstream tasks. We call these models foundation models to underscore their critically central yet incomplete character. This report provides a thorough account of the opportunities and risks of foundation models, ranging from their capabilities (e.g., language, vision, robotics, reasoning, human interaction) and technical principles(e.g., model architectures, training procedures, data, systems, security, evaluation, theory) to their applications (e.g., law, healthcare, education) and societal impact (e.g., inequity, misuse, economic and environmental impact, legal and ethical considerations). Though foundation models are based on standard deep learning and transfer learning, their scale results in new emergent capabilities,and their effectiveness across so many tasks incentivizes homogenization. Homogenization provides powerful leverage but demands caution, as the defects of the foundation model are inherited by all the adapted models downstream. Despite the impending widespread deployment of foundation models, we currently lack a clear understanding of how they work, when they fail, and what they are even capable of due to their emergent properties. To tackle these questions, we believe much of the critical research on foundation models will require deep interdisciplinary collaboration commensurate with their fundamentally sociotechnical nature.

Generative models are now capable of producing highly realistic images that look nearly indistinguishable from the data on which they are trained. This raises the question: if we have good enough generative models, do we still need datasets? We investigate this question in the setting of learning general-purpose visual representations from a black-box generative model rather than directly from data. Given an off-the-shelf image generator without any access to its training data, we train representations from the samples output by this generator. We compare several representation learning methods that can be applied to this setting, using the latent space of the generator to generate multiple "views" of the same semantic content. We show that for contrastive methods, this multiview data can naturally be used to identify positive pairs (nearby in latent space) and negative pairs (far apart in latent space). We find that the resulting representations rival those learned directly from real data, but that good performance requires care in the sampling strategy applied and the training method. Generative models can be viewed as a compressed and organized copy of a dataset, and we envision a future where more and more "model zoos" proliferate while datasets become increasingly unwieldy, missing, or private. This paper suggests several techniques for dealing with visual representation learning in such a future. Code is released on our project page: //ali-design.github.io/GenRep/