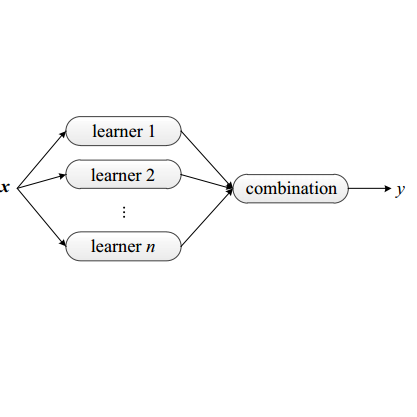

We investigate the use of sequence analysis for behavior modeling, emphasizing that sequential context often outweighs the value of aggregate features in understanding human behavior. We discuss framing common problems in fields like healthcare, finance, and e-commerce as sequence modeling tasks, and address challenges related to constructing coherent sequences from fragmented data and disentangling complex behavior patterns. We present a framework for sequence modeling using Ensembles of Hidden Markov Models, which are lightweight, interpretable, and efficient. Our ensemble-based scoring method enables robust comparison across sequences of different lengths and enhances performance in scenarios with imbalanced or scarce data. The framework scales in real-world scenarios, is compatible with downstream feature-based modeling, and is applicable in both supervised and unsupervised learning settings. We demonstrate the effectiveness of our method with results on a longitudinal human behavior dataset.
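The length-robust ensemble scoring described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation. It assumes discrete-emission HMMs and scores a sequence by its scaled forward-algorithm log-likelihood divided by the sequence length. It then averages that per-symbol score over the ensemble members. `DiscreteHMM` and `ensemble_score` are hypothetical names.

```python
import math
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class DiscreteHMM:
    """Discrete-emission HMM: start probs pi[i], transitions A[i][j], emissions B[i][k]."""
    pi: List[float]
    A: List[List[float]]
    B: List[List[float]]

    def log_likelihood(self, obs: Sequence[int]) -> float:
        """Scaled forward algorithm: returns log P(obs | model)."""
        n = len(self.pi)
        alpha = [self.pi[i] * self.B[i][obs[0]] for i in range(n)]
        log_lik = 0.0
        for t in range(len(obs)):
            if t > 0:
                # Propagate one step through the transitions, then emit obs[t].
                alpha = [
                    sum(alpha[i] * self.A[i][j] for i in range(n)) * self.B[j][obs[t]]
                    for j in range(n)
                ]
            scale = sum(alpha)
            log_lik += math.log(scale)
            alpha = [a / scale for a in alpha]  # rescale to avoid numerical underflow
        return log_lik


def ensemble_score(models: List[DiscreteHMM], obs: Sequence[int]) -> float:
    """Average per-symbol log-likelihood across ensemble members.

    Assumption: dividing by len(obs) is the length normalization; the paper's
    exact scoring rule may differ.
    """
    per_symbol = [m.log_likelihood(obs) / len(obs) for m in models]
    return sum(per_symbol) / len(per_symbol)
```

For a one-state model emitting symbol 0 with probability 0.9, `[0, 0]` and `[0, 0, 0, 0]` receive the same score, `log(0.9)`. A raw log-likelihood would instead penalize the longer sequence simply for being longer.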

Related content

Recent research on learned indexes has introduced a new perspective on indexes as models that map keys to their respective storage locations. These learned indexes approximate the cumulative distribution function of the key set, and a single model may have limited accuracy. To overcome this limitation, a typical method is to use multiple models arranged hierarchically, where query performance depends on two aspects: (i) traversal time to find the correct model and (ii) search time to find the key within the selected model. With such a method, key-space regions that are difficult to model may be placed at deeper levels of the hierarchy. To address this issue, we propose an alternative method that modifies the key space rather than the index structure or its models. This is achieved by making the key set more learnable (i.e., smoothing its distribution) through the insertion of virtual points. Furthermore, we develop an algorithm named CSV that integrates our virtual point insertion method into existing learned indexes, reducing both their traversal and search time. We implement CSV on state-of-the-art learned indexes and evaluate them on real-world datasets. Extensive experimental results show significant query performance improvement for the keys in deeper levels of the index structures at a low storage cost.
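A toy example can show why virtual points help. It uses a single linear model of rank versus key. The worst-case prediction error bounds the last-mile search window. Filling a large gap with virtual keys, which occupy rank slots, makes the key-to-rank mapping closer to linear. This is a hypothetical simplification, not the CSV algorithm. The evenly spaced gap filling in `insert_virtual_points` and all function names are assumptions for illustration.

```python
from typing import List, Tuple


def fit_linear(keys: List[float]) -> Tuple[float, float]:
    """Least-squares fit of rank ~ slope * key + intercept (a one-model learned index)."""
    n = len(keys)
    mean_k = sum(keys) / n
    mean_r = (n - 1) / 2
    cov = sum((k - mean_k) * (r - mean_r) for r, k in enumerate(keys))
    var = sum((k - mean_k) ** 2 for k in keys)
    slope = cov / var
    return slope, mean_r - slope * mean_k


def max_error(all_keys: List[float], real_keys: List[float]) -> float:
    """Worst |predicted rank - true rank| over the real keys; bounds the search window."""
    slope, intercept = fit_linear(all_keys)
    rank = {k: i for i, k in enumerate(all_keys)}
    return max(abs(slope * k + intercept - rank[k]) for k in real_keys)


def insert_virtual_points(keys: List[float]) -> List[float]:
    """Hypothetical smoothing: fill the largest gap with virtual keys at the median spacing."""
    gaps = sorted(b - a for a, b in zip(keys, keys[1:]))
    step = gaps[len(gaps) // 2]
    i = max(range(len(keys) - 1), key=lambda j: keys[j + 1] - keys[j])
    virtual, v = [], keys[i] + step
    while v < keys[i + 1]:
        virtual.append(v)
        v += step
    return sorted(keys + virtual)


keys = [1, 2, 3, 4, 100, 101, 102, 103]
print(max_error(keys, keys))                          # error of the raw key set
print(max_error(insert_virtual_points(keys), keys))   # error after smoothing
```

Here the real keys fall back on a straight line once the gap is filled, so the error drops to zero. The cost is 95 extra slots, which is the storage trade-off the abstract refers to.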

Machine learning algorithms often struggle to eliminate inherent data biases, particularly those arising from unreliable labels, which poses a significant challenge to ensuring fairness. Existing fairness techniques that address label bias typically modify models and intervene in the training process, which makes them inflexible for large-scale datasets. To address this limitation, we introduce a data selection method that mitigates label bias efficiently and flexibly and is tailored to practical needs. Our approach uses a zero-shot predictor as a proxy model that simulates training on a clean holdout set. This strategy, supported by peer predictions, ensures the fairness of the proxy model and eliminates the need for an additional holdout set, a common requirement of previous methods. Without altering the classifier's architecture, our modality-agnostic method selects appropriate training data. In experimental evaluations, it proves efficient and effective at handling label bias and improving fairness across diverse datasets.

The sensitivity of machine learning algorithms to outliers, particularly in high-dimensional spaces, necessitates the development of robust methods. Within the framework of the $\epsilon$-contamination model, where the adversary can inspect and replace up to an $\epsilon$ fraction of the samples, a fundamental open question is determining the optimal rates for robust stochastic convex optimization (robust SCO) given samples under $\epsilon$-contamination. We develop novel algorithms that achieve minimax-optimal excess risk (up to logarithmic factors) under the $\epsilon$-contamination model. Our approach goes beyond existing algorithms, which are not only suboptimal but also constrained by stringent requirements, including Lipschitzness and smoothness conditions on sample functions. Our algorithms achieve optimal rates while removing these restrictive assumptions and, notably, remain effective for nonsmooth but Lipschitz population risks.
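The abstract does not spell out its algorithm, so the sketch below swaps in a standard robust-aggregation baseline to illustrate the setting. It runs gradient descent with a coordinate-wise trimmed mean of per-sample gradients. This is not the minimax-optimal method described above. The loss `0.5 * ||w - x||^2`, the trim fraction, and all names are assumptions.

```python
from typing import List

Vector = List[float]


def trimmed_mean(values: List[float], eps: float) -> float:
    """Mean after discarding the eps fraction of smallest and of largest values."""
    v = sorted(values)
    k = int(eps * len(v))
    kept = v[k:len(v) - k]
    return sum(kept) / len(kept)


def robust_gradient(grads: List[Vector], eps: float) -> Vector:
    """Coordinate-wise trimmed mean of per-sample gradients (a swapped-in baseline)."""
    dim = len(grads[0])
    return [trimmed_mean([g[d] for g in grads], eps) for d in range(dim)]


def robust_gd(samples: List[Vector], eps: float, lr: float = 0.5, steps: int = 100) -> Vector:
    """Gradient descent on the illustrative loss 0.5 * ||w - x||^2 with robust aggregation."""
    w = [0.0] * len(samples[0])
    for _ in range(steps):
        # Per-sample gradient of 0.5 * ||w - x||^2 with respect to w is (w - x).
        grads = [[wi - xi for wi, xi in zip(w, x)] for x in samples]
        g = robust_gradient(grads, eps)
        w = [wi - lr * gi for wi, gi in zip(w, g)]
    return w
```

With 95 inliers near the origin and 5 adversarial points at `(1000, -1000)`, setting `eps=0.1` keeps the estimate near the inliers. Setting `eps=0.0` recovers the plain mean, which is dragged roughly 50 units away.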

In structured additive distributional regression, the conditional distribution of the response variable given the covariate information and the vector of model parameters is modelled using a P-parametric probability density function. Each parameter is modelled through a linear predictor and a bijective response function that maps the domain of the predictor into the domain of the parameter. We present a method to perform inference in structured additive distributional regression using stochastic variational inference. We propose two strategies for constructing a multivariate Gaussian variational distribution to estimate the posterior distribution of the regression coefficients. The first strategy leverages covariate information and hyperparameters to learn both the location vector and the precision matrix. The second strategy addresses the computational complexity of the first by initially assuming independence among all smooth terms and then introducing correlations through an additional set of variational parameters. Furthermore, we present two approaches for estimating the smoothing parameters. The first treats them as free parameters and provides point estimates, while the second accounts for uncertainty by applying a variational approximation to their posterior distribution. Our model was benchmarked against state-of-the-art competitors in logistic and gamma regression simulation studies. Finally, we validated our approach by comparing its posterior estimates to those obtained using Markov chain Monte Carlo on a dataset of patents from the biotechnology/pharmaceutics and semiconductor/computer sectors.
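A gamma distributional regression with P = 2 parameters shows the two ingredients concretely. Shape and rate each get their own linear predictor, and both are mapped to (0, ∞) by the exp response function. The model is fit by a Monte Carlo ELBO using the reparameterization trick. This is a minimal sketch, not the paper's method. It uses a single covariate, no smooth terms, a fixed N(0, 10²) prior, and a mean-field (diagonal) Gaussian instead of the learned precision matrix described above. All names are hypothetical.

```python
import math
import random
from typing import List, Sequence


def gamma_logpdf(y: float, shape: float, rate: float) -> float:
    """Log-density of Gamma(shape, rate) at y > 0."""
    return shape * math.log(rate) + (shape - 1) * math.log(y) - rate * y - math.lgamma(shape)


def log_likelihood(beta: Sequence[float], X: List[List[float]], y: List[float]) -> float:
    """beta = [shape_intercept, shape_slope, rate_intercept, rate_slope] (assumed layout)."""
    total = 0.0
    for x, yi in zip(X, y):
        shape = math.exp(beta[0] + beta[1] * x[0])  # exp response function for the shape
        rate = math.exp(beta[2] + beta[3] * x[0])   # exp response function for the rate
        total += gamma_logpdf(yi, shape, rate)
    return total


def elbo(mu: List[float], log_sigma: List[float], X, y,
         prior_sd: float = 10.0, n_samples: int = 32, seed: int = 0) -> float:
    """Monte Carlo ELBO for a diagonal Gaussian q(beta) = N(mu, diag(sigma^2))."""
    rng = random.Random(seed)
    sigma = [math.exp(s) for s in log_sigma]
    expected = 0.0
    for _ in range(n_samples):
        # Reparameterization: beta = mu + sigma * eps, eps ~ N(0, I).
        beta = [m + s * rng.gauss(0.0, 1.0) for m, s in zip(mu, sigma)]
        log_prior = sum(
            -0.5 * (b / prior_sd) ** 2 - math.log(prior_sd) - 0.5 * math.log(2 * math.pi)
            for b in beta
        )
        expected += log_likelihood(beta, X, y) + log_prior
    # Closed-form entropy of a diagonal Gaussian.
    entropy = 0.5 * len(mu) * (1.0 + math.log(2 * math.pi)) + sum(log_sigma)
    return expected / n_samples + entropy
```

In a full SVI loop, `mu` and `log_sigma` would be updated by stochastic gradients of this estimate. The sketch only evaluates it.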

A key strategy in societal adaptation to climate change is using alert systems to prompt preventative action and reduce the adverse health impacts of extreme heat events. This paper implements and evaluates reinforcement learning (RL) as a tool to optimize the effectiveness of such systems. Our contributions are threefold. First, we introduce a new publicly available RL environment for evaluating how effectively heat alert policies reduce heat-related hospitalizations. The reward model is trained on a comprehensive dataset of historical weather, Medicare health records, and socioeconomic/geographic features. We use scalable Bayesian techniques tailored to the low-signal effects and spatial heterogeneity present in the data. The transition model uses real historical weather patterns enriched by a data augmentation mechanism based on climate region similarity. Second, we use this environment to evaluate standard RL algorithms in the context of heat alert issuance. Our analysis shows that policy constraints are needed to improve RL's initially poor performance. Third, a post-hoc contrastive analysis provides insight into scenarios where our modified heat alert RL policies yield significant gains or losses relative to the current National Weather Service alert policy in the United States.
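One simple way to impose a policy constraint is action masking. An alert is permitted only while an alert budget remains and the heat index is at or above a threshold, and the mask overrides whatever the base policy wants. This is a hypothetical toy, not the paper's environment. The budget, threshold, heat values, and the `eager` stand-in policy are all made up, and no reward model is included.

```python
from typing import Callable, List

# Hypothetical stand-in for a learned policy: (day, heat_index, alerts_left) -> wants to alert?
BasePolicy = Callable[[int, float, int], bool]


def alert_allowed(heat: float, alerts_left: int, threshold: float) -> bool:
    """Policy constraint (action mask): alert only if budget remains and heat >= threshold."""
    return alerts_left > 0 and heat >= threshold


def run_season(heat_index: List[float], budget: int, threshold: float,
               policy: BasePolicy) -> List[int]:
    """Roll out one season; the mask overrides the base policy whenever an alert is disallowed."""
    alerts_left = budget
    actions = []
    for day, heat in enumerate(heat_index):
        act = 1 if alert_allowed(heat, alerts_left, threshold) and policy(day, heat, alerts_left) else 0
        alerts_left -= act
        actions.append(act)
    return actions


def eager(day: int, heat: float, alerts_left: int) -> bool:
    """A naive policy that always wants to alert, so it spends its budget as early as possible."""
    return True


heat = [80.0, 92.0, 85.0, 95.0, 97.0, 91.0, 99.0, 88.0]
print(run_season(heat, 3, float("-inf"), eager))  # unconstrained: budget spent on days 0-2
print(run_season(heat, 3, 90.0, eager))            # constrained: alerts only on hot days
```

The mask only removes clearly poor actions. Even when constrained, the naive policy still spends its budget before the hottest day. Deciding which eligible days to use is what the learned policy must handle.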

Diffusion models have recently gained popularity for policy learning in robotics due to their ability to capture high-dimensional and multimodal distributions. However, diffusion policies are inherently stochastic and typically trained offline, limiting their ability to handle unseen and dynamic conditions where novel constraints not represented in the training data must be satisfied. To overcome this limitation, we propose diffusion predictive control with constraints (DPCC), an algorithm for diffusion-based control with explicit state and action constraints that can deviate from those in the training data. DPCC uses constraint tightening and incorporates model-based projections into the denoising process of a trained trajectory diffusion model. This allows us to generate constraint-satisfying, dynamically feasible, and goal-reaching trajectories for predictive control. We show through simulations of a robot manipulator that DPCC outperforms existing methods in satisfying novel test-time constraints while maintaining performance on the learned control task.
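The core idea of projecting inside the denoising loop, with tightened constraints, can be sketched as follows. After every denoising step, the trajectory is projected onto a box whose bounds are shrunk by a safety margin. This is a simplified illustration, not DPCC itself. `toy_denoiser` is a hypothetical stand-in for a trained diffusion model that pulls actions toward a value violating the new constraint. The sketch also uses only action box constraints and no dynamics model.

```python
import random
from typing import List

Traj = List[List[float]]  # horizon x action_dim


def project_box(traj: Traj, lo: float, hi: float, margin: float) -> Traj:
    """Euclidean projection onto the tightened box [lo + margin, hi - margin]."""
    return [[min(max(a, lo + margin), hi - margin) for a in step] for step in traj]


def toy_denoiser(traj: Traj, t: int) -> Traj:
    """Hypothetical denoising step (t unused here): pulls every action toward 2.0."""
    target = 2.0
    return [[a + 0.3 * (target - a) for a in step] for step in traj]


def sample_with_constraints(horizon: int, dim: int, n_steps: int,
                            lo: float, hi: float, margin: float, seed: int = 0) -> Traj:
    rng = random.Random(seed)
    traj = [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(horizon)]
    for t in reversed(range(n_steps)):
        traj = toy_denoiser(traj, t)
        if t > 0:
            # Re-inject a little noise, as in ancestral diffusion sampling.
            traj = [[a + 0.05 * rng.gauss(0.0, 1.0) for a in step] for step in traj]
        # Project after every denoising step, not just once at the end.
        traj = project_box(traj, lo, hi, margin)
    return traj


plan = sample_with_constraints(horizon=8, dim=2, n_steps=20, lo=-1.0, hi=1.0, margin=0.1)
```

Projecting at every step lets later denoising steps adapt to the constraint instead of being clipped once after sampling. The margin plays the role of constraint tightening.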

The adaptive processing of structured data is a long-standing research topic in machine learning that investigates how to automatically learn a mapping from structured inputs to outputs of various kinds. Recently, there has been increasing interest in the adaptive processing of graphs, which has led to the development of different neural network-based methodologies. In this thesis, we take a different route and develop a Bayesian Deep Learning framework for graph learning. The dissertation begins with a review of the principles on which most methods in the field are built, followed by a study of reproducibility issues in graph classification. We then bridge the basic ideas of deep learning for graphs with the Bayesian world by building our deep architectures incrementally. This framework allows us to consider graphs with discrete and continuous edge features, producing unsupervised embeddings rich enough to reach the state of the art on several classification tasks. Our approach also admits a Bayesian nonparametric extension that automates the choice of almost all of the model's hyperparameters. Two real-world applications demonstrate the efficacy of deep learning for graphs. The first concerns the prediction of information-theoretic quantities for molecular simulations with supervised neural models. The second exploits our Bayesian models to solve a malware-classification task while remaining robust to intra-procedural code obfuscation techniques. We conclude the dissertation with an attempt to blend the best of the neural and Bayesian worlds. The resulting hybrid model can predict multimodal distributions conditioned on input graphs, and can therefore model stochasticity and uncertainty better than most existing approaches. Overall, we aim to provide a Bayesian perspective on the multifaceted research field of deep learning for graphs.

It is important to detect anomalous inputs when deploying machine learning systems. The use of larger and more complex inputs in deep learning magnifies the difficulty of distinguishing between anomalous and in-distribution examples. At the same time, diverse image and text data are available in enormous quantities. We propose leveraging these data to improve deep anomaly detection by training anomaly detectors against an auxiliary dataset of outliers, an approach we call Outlier Exposure (OE). This enables anomaly detectors to generalize and detect unseen anomalies. In extensive experiments on natural language processing and small- and large-scale vision tasks, we find that Outlier Exposure significantly improves detection performance. We also observe that cutting-edge generative models trained on CIFAR-10 may assign higher likelihoods to SVHN images than to CIFAR-10 images; we use OE to mitigate this issue. We also analyze the flexibility and robustness of Outlier Exposure, and identify characteristics of the auxiliary dataset that improve performance.
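For a classifier, Outlier Exposure is commonly written as the usual cross-entropy on in-distribution data plus a weighted term. That term is the cross-entropy from the model's softmax to the uniform distribution on auxiliary outliers. The sketch below computes this objective from raw logits. The weight `lam=0.5` is an illustrative default, and the helper names are hypothetical.

```python
import math
from typing import List


def log_softmax(logits: List[float]) -> List[float]:
    m = max(logits)  # subtract the max for numerical stability
    lse = m + math.log(sum(math.exp(z - m) for z in logits))
    return [z - lse for z in logits]


def cross_entropy(logits: List[float], label: int) -> float:
    return -log_softmax(logits)[label]


def uniform_cross_entropy(logits: List[float]) -> float:
    """Cross-entropy from the softmax to the uniform distribution over K classes."""
    lp = log_softmax(logits)
    return -sum(lp) / len(lp)


def outlier_exposure_loss(in_logits: List[List[float]], in_labels: List[int],
                          out_logits: List[List[float]], lam: float = 0.5) -> float:
    """Mean in-distribution CE plus lam times mean uniform-CE on auxiliary outliers."""
    in_term = sum(cross_entropy(z, y) for z, y in zip(in_logits, in_labels)) / len(in_logits)
    out_term = sum(uniform_cross_entropy(z) for z in out_logits) / len(out_logits)
    return in_term + lam * out_term
```

The outlier term reaches its minimum, `log K`, when the model is maximally uncertain on an outlier. Confident predictions on outliers are therefore penalized.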

Recently, graph neural networks (GNNs) have revolutionized the field of graph representation learning through effectively learned node embeddings, and have achieved state-of-the-art results in tasks such as node classification and link prediction. However, current GNN methods are inherently flat and do not learn hierarchical representations of graphs, a limitation that is especially problematic for the task of graph classification, where the goal is to predict the label associated with an entire graph. Here we propose DiffPool, a differentiable graph pooling module that can generate hierarchical representations of graphs and can be combined with various graph neural network architectures in an end-to-end fashion. DiffPool learns a differentiable soft cluster assignment for nodes at each layer of a deep GNN, mapping nodes to a set of clusters, which then form the coarsened input for the next GNN layer. Our experimental results show that combining existing GNN methods with DiffPool yields an average improvement of 5-10% accuracy on graph classification benchmarks, compared to all existing pooling approaches, achieving a new state of the art on four out of five benchmark datasets.
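One coarsening step of soft cluster assignment can be written with plain matrix products. In DiffPool's formulation, embeddings are computed as Z = GNN_embed(A, X) and assignments as S = softmax(GNN_pool(A, X)). The pooled graph is then X' = SᵀZ and A' = SᵀAS. The sketch below uses a single propagation A·X·W as a stand-in for each GNN, plus hand-picked weights. Both are assumptions for illustration; a real model would learn the weights end to end.

```python
import math
from typing import List, Tuple

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def transpose(a: Matrix) -> Matrix:
    return [list(row) for row in zip(*a)]


def row_softmax(a: Matrix) -> Matrix:
    out = []
    for row in a:
        m = max(row)
        e = [math.exp(v - m) for v in row]
        out.append([v / sum(e) for v in e])
    return out


def relu(a: Matrix) -> Matrix:
    return [[max(0.0, v) for v in row] for row in a]


def diffpool(A: Matrix, X: Matrix, W_embed: Matrix, W_pool: Matrix) -> Tuple[Matrix, Matrix, Matrix]:
    """One DiffPool step with single-layer propagation A @ X @ W standing in for each GNN."""
    AX = matmul(A, X)
    Z = relu(matmul(AX, W_embed))        # node embeddings, n x d
    S = row_softmax(matmul(AX, W_pool))  # soft cluster assignments, n x c
    St = transpose(S)
    X_next = matmul(St, Z)               # pooled cluster features, c x d
    A_next = matmul(matmul(St, A), S)    # pooled cluster adjacency, c x c
    return X_next, A_next, S


# 4-node path graph with self-loops, pooled into 2 clusters.
A = [[1, 1, 0, 0], [1, 1, 1, 0], [0, 1, 1, 1], [0, 0, 1, 1]]
X = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0], [0.0, 1.0]]
X_next, A_next, S = diffpool(A, X, [[1.0, 0.0, 0.5], [0.0, 1.0, 0.5]], [[2.0, -2.0], [-2.0, 2.0]])
```

Every row of S sums to 1, so each node distributes its full mass over the clusters. As a result, the total edge weight of A' equals that of A.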

We propose a new method for the event extraction (EE) task based on an imitation learning framework, specifically inverse reinforcement learning (IRL) via a generative adversarial network (GAN). The GAN estimates appropriate rewards from the difference between the actions taken by the expert (or ground truth) and those taken by the agent across complicated states in the environment. The EE task benefits from these dynamic rewards because instances and labels vary in difficulty, so the gains are expected to vary as well (e.g., an ambiguous but correctly detected trigger or argument should receive a high gain). Traditional RL models, by contrast, usually neglect such differences and pay equal attention to all instances. Moreover, our experiments demonstrate that the proposed framework outperforms state-of-the-art methods without explicit feature engineering.
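The adversarially estimated reward can be illustrated with a GAIL-style setup. A discriminator is trained to separate expert (ground-truth) state-action features from agent features, and the agent is rewarded with log D. This is an assumed formulation for illustration, not necessarily the paper's exact reward. The logistic-regression discriminator, the two-dimensional features, and all names are hypothetical.

```python
import math
from typing import List, Tuple

Vector = List[float]


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def discriminator_prob(w: Vector, b: float, x: Vector) -> float:
    """D(x): probability that the state-action features x come from the expert."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)


def train_discriminator(expert: List[Vector], agent: List[Vector],
                        lr: float = 0.5, epochs: int = 200) -> Tuple[Vector, float]:
    """Logistic regression by SGD: label 1 for expert pairs, 0 for agent pairs."""
    w, b = [0.0] * len(expert[0]), 0.0
    data = [(x, 1.0) for x in expert] + [(x, 0.0) for x in agent]
    for _ in range(epochs):
        for x, y in data:
            p = discriminator_prob(w, b, x)
            # Gradient ascent on the log-likelihood: (y - p) * x.
            w = [wi + lr * (y - p) * xi for wi, xi in zip(w, x)]
            b += lr * (y - p)
    return w, b


def reward(w: Vector, b: float, x: Vector, eps: float = 1e-8) -> float:
    """GAIL-style reward log D(x): higher when an action looks more expert-like."""
    return math.log(discriminator_prob(w, b, x) + eps)
```

Because the discriminator is retrained as the agent improves, the reward for a given action changes over time. This is what distinguishes such dynamic rewards from a fixed per-instance reward.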