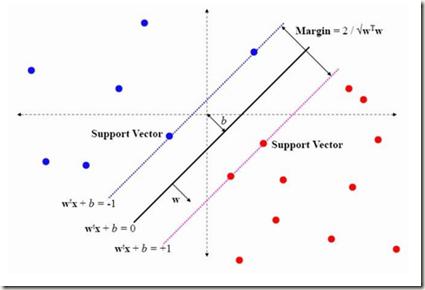

This paper presents approaches to compute sparse solutions of Generalized Singular Value Problem (GSVP). The GSVP is regularized by $\ell_1$-norm and $\ell_q$-penalty for $0<q<1$, resulting in the $\ell_1$-GSVP and $\ell_q$-GSVP formulations. The solutions of these problems are determined by applying the proximal gradient descent algorithm with a fixed step size. The inherent sparsity levels within the computed solutions are exploited for feature selection, and subsequently, binary classification with non-parallel Support Vector Machines (SVM). For our feature selection task, SVM is integrated into the $\ell_1$-GSVP and $\ell_q$-GSVP frameworks to derive the $\ell_1$-GSVPSVM and $\ell_q$-GSVPSVM variants. Machine learning applications to cancer detection are considered. We remarkably report near-to-perfect balanced accuracy across breast and ovarian cancer datasets using a few selected features.

相關內容

Diffusion Policy is a powerful technique tool for learning end-to-end visuomotor robot control. It is expected that Diffusion Policy possesses scalability, a key attribute for deep neural networks, typically suggesting that increasing model size would lead to enhanced performance. However, our observations indicate that Diffusion Policy in transformer architecture (\DP) struggles to scale effectively; even minor additions of layers can deteriorate training outcomes. To address this issue, we introduce Scalable Diffusion Transformer Policy for visuomotor learning. Our proposed method, namely \textbf{\methodname}, introduces two modules that improve the training dynamic of Diffusion Policy and allow the network to better handle multimodal action distribution. First, we identify that \DP~suffers from large gradient issues, making the optimization of Diffusion Policy unstable. To resolve this issue, we factorize the feature embedding of observation into multiple affine layers, and integrate it into the transformer blocks. Additionally, our utilize non-causal attention which allows the policy network to \enquote{see} future actions during prediction, helping to reduce compounding errors. We demonstrate that our proposed method successfully scales the Diffusion Policy from 10 million to 1 billion parameters. This new model, named \methodname, can effectively scale up the model size with improved performance and generalization. We benchmark \methodname~across 50 different tasks from MetaWorld and find that our largest \methodname~outperforms \DP~with an average improvement of 21.6\%. Across 7 real-world robot tasks, our ScaleDP demonstrates an average improvement of 36.25\% over DP-T on four single-arm tasks and 75\% on three bimanual tasks. We believe our work paves the way for scaling up models for visuomotor learning. The project page is available at scaling-diffusion-policy.github.io.

With the rise of Speech Large Language Models (Speech LLMs), there has been growing interest in discrete speech tokens for their ability to integrate with text-based tokens seamlessly. Compared to most studies that focus on continuous speech features, although discrete-token based LLMs have shown promising results on certain tasks, the performance gap between these two paradigms is rarely explored. In this paper, we present a fair and thorough comparison between discrete and continuous features across a variety of semantic-related tasks using a light-weight LLM (Qwen1.5-0.5B). Our findings reveal that continuous features generally outperform discrete tokens, particularly in tasks requiring fine-grained semantic understanding. Moreover, this study goes beyond surface-level comparison by identifying key factors behind the under-performance of discrete tokens, such as limited token granularity and inefficient information retention. To enhance the performance of discrete tokens, we explore potential aspects based on our analysis. We hope our results can offer new insights into the opportunities for advancing discrete speech tokens in Speech LLMs.

Quantum Machine Learning (QML) offers tremendous potential but is currently limited by the availability of qubits. We introduce an innovative approach that utilizes pre-trained neural networks to enhance Variational Quantum Circuits (VQC). This technique effectively separates approximation error from qubit count and removes the need for restrictive conditions, making QML more viable for real-world applications. Our method significantly improves parameter optimization for VQC while delivering notable gains in representation and generalization capabilities, as evidenced by rigorous theoretical analysis and extensive empirical testing on quantum dot classification tasks. Moreover, our results extend to applications such as human genome analysis, demonstrating the broad applicability of our approach. By addressing the constraints of current quantum hardware, our work paves the way for a new era of advanced QML applications, unlocking the full potential of quantum computing in fields such as machine learning, materials science, medicine, mimetics, and various interdisciplinary areas.

Relative pose estimation using point correspondences (PC) is a widely used technique. A minimal configuration of six PCs is required for two views of generalized cameras. In this paper, we present several minimal solvers that use six PCs to compute the 6DOF relative pose of multi-camera systems, including a minimal solver for the generalized camera and two minimal solvers for the practical configuration of two-camera rigs. The equation construction is based on the decoupling of rotation and translation. Rotation is represented by Cayley or quaternion parametrization, and translation can be eliminated by using the hidden variable technique. Ray bundle constraints are found and proven when a subset of PCs relate the same cameras across two views. This is the key to reducing the number of solutions and generating numerically stable solvers. Moreover, all configurations of six-point problems for multi-camera systems are enumerated. Extensive experiments demonstrate the superior accuracy and efficiency of our solvers compared to state-of-the-art six-point methods. The code is available at //github.com/jizhaox/relpose-6pt

This paper presents innovative approaches to optimization problems, focusing on both Single-Objective Multi-Modal Optimization (SOMMOP) and Multi-Objective Optimization (MOO). In SOMMOP, we integrate chaotic evolution with niching techniques, as well as Persistence-Based Clustering combined with Gaussian mutation. The proposed algorithms, Chaotic Evolution with Deterministic Crowding (CEDC) and Chaotic Evolution with Clustering Algorithm (CECA), utilize chaotic dynamics to enhance population diversity and improve search efficiency. For MOO, we extend these methods into a comprehensive framework that incorporates Uncertainty-Based Selection, Adaptive Parameter Tuning, and introduces a radius \( R \) concept in deterministic crowding, which enables clearer and more precise separation of populations at peak points. Experimental results demonstrate that the proposed algorithms outperform traditional methods, achieving superior optimization accuracy and robustness across a variety of benchmark functions.

Link prediction is crucial for understanding complex networks but traditional Graph Neural Networks (GNNs) often rely on random negative sampling, leading to suboptimal performance. This paper introduces Fuzzy Graph Attention Networks (FGAT), a novel approach integrating fuzzy rough sets for dynamic negative sampling and enhanced node feature aggregation. Fuzzy Negative Sampling (FNS) systematically selects high-quality negative edges based on fuzzy similarities, improving training efficiency. FGAT layer incorporates fuzzy rough set principles, enabling robust and discriminative node representations. Experiments on two research collaboration networks demonstrate FGAT's superior link prediction accuracy, outperforming state-of-the-art baselines by leveraging the power of fuzzy rough sets for effective negative sampling and node feature learning.

This paper studies a beam tracking problem in which an access point (AP), in collaboration with a reconfigurable intelligent surface (RIS), dynamically adjusts its downlink beamformers and the reflection pattern at the RIS in order to maintain reliable communications with multiple mobile user equipments (UEs). Specifically, the mobile UEs send uplink pilots to the AP periodically during the channel sensing intervals, the AP then adaptively configures the beamformers and the RIS reflection coefficients for subsequent data transmission based on the received pilots. This is an active sensing problem, because channel sensing involves configuring the RIS coefficients during the pilot stage and the optimal sensing strategy should exploit the trajectory of channel state information (CSI) from previously received pilots. Analytical solution to such an active sensing problem is very challenging. In this paper, we propose a deep learning framework utilizing a recurrent neural network (RNN) to automatically summarize the time-varying CSI obtained from the periodically received pilots into state vectors. These state vectors are then mapped to the AP beamformers and RIS reflection coefficients for subsequent downlink data transmissions, as well as the RIS reflection coefficients for the next round of uplink channel sensing. The mappings from the state vectors to the downlink beamformers and the RIS reflection coefficients for both channel sensing and downlink data transmission are performed using graph neural networks (GNNs) to account for the interference among the UEs. Simulations demonstrate significant and interpretable performance improvement of the proposed approach over the existing data-driven methods with nonadaptive channel sensing schemes.

Deployment of Internet of Things (IoT) devices and Data Fusion techniques have gained popularity in public and government domains. This usually requires capturing and consolidating data from multiple sources. As datasets do not necessarily originate from identical sensors, fused data typically results in a complex data problem. Because military is investigating how heterogeneous IoT devices can aid processes and tasks, we investigate a multi-sensor approach. Moreover, we propose a signal to image encoding approach to transform information (signal) to integrate (fuse) data from IoT wearable devices to an image which is invertible and easier to visualize supporting decision making. Furthermore, we investigate the challenge of enabling an intelligent identification and detection operation and demonstrate the feasibility of the proposed Deep Learning and Anomaly Detection models that can support future application that utilizes hand gesture data from wearable devices.

In this paper, we propose a novel Feature Decomposition and Reconstruction Learning (FDRL) method for effective facial expression recognition. We view the expression information as the combination of the shared information (expression similarities) across different expressions and the unique information (expression-specific variations) for each expression. More specifically, FDRL mainly consists of two crucial networks: a Feature Decomposition Network (FDN) and a Feature Reconstruction Network (FRN). In particular, FDN first decomposes the basic features extracted from a backbone network into a set of facial action-aware latent features to model expression similarities. Then, FRN captures the intra-feature and inter-feature relationships for latent features to characterize expression-specific variations, and reconstructs the expression feature. To this end, two modules including an intra-feature relation modeling module and an inter-feature relation modeling module are developed in FRN. Experimental results on both the in-the-lab databases (including CK+, MMI, and Oulu-CASIA) and the in-the-wild databases (including RAF-DB and SFEW) show that the proposed FDRL method consistently achieves higher recognition accuracy than several state-of-the-art methods. This clearly highlights the benefit of feature decomposition and reconstruction for classifying expressions.

Visual Question Answering (VQA) models have struggled with counting objects in natural images so far. We identify a fundamental problem due to soft attention in these models as a cause. To circumvent this problem, we propose a neural network component that allows robust counting from object proposals. Experiments on a toy task show the effectiveness of this component and we obtain state-of-the-art accuracy on the number category of the VQA v2 dataset without negatively affecting other categories, even outperforming ensemble models with our single model. On a difficult balanced pair metric, the component gives a substantial improvement in counting over a strong baseline by 6.6%.