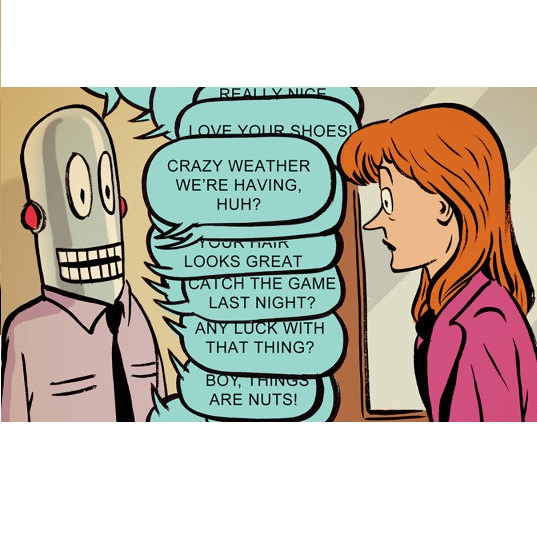

One of the most common tasks for a genealogist is gathering a person's initial family history, normally via in-person interviews or a platform such as ancestry.com, as this provides a strong foundation upon which the genealogist can build. However, these interviews are often hindered by both geographical constraints and the technical proficiency of the interviewee, who is most often an elderly person with a lower-than-average level of technical proficiency. With this in mind, this study presents what we believe, based on prior research, to be the first chatbot geared entirely towards gathering family histories, and explores the viability of such a chatbot by comparing its performance and usability with those of the aforementioned alternatives. We show that, although the average time taken to conduct an interview with the chatbot may be longer than with ancestry.com or an in-person interview, the number of mistakes made and the level of user confusion regarding the UI and the required process are lower than with the other two methods. Note that the user-confusion metric is not applicable to the in-person interview sessions because they have no UI. With refinement, we believe this use of a chatbot could be a valuable tool for genealogists, especially when dealing with interviewees based in other countries, where it is not possible to conduct an in-person interview.

Related content

Deep neural networks (DNNs) often accept high-dimensional media data (e.g., photos, text, and audio) and understand their perceptual content (e.g., a cat). To test DNNs, diverse inputs are needed to trigger mis-predictions. Some preliminary works use byte-level mutations or domain-specific filters (e.g., foggy), whose enabled mutations may be limited and likely error-prone. SOTA works employ deep generative models to generate (infinite) inputs. Also, to keep the mutated inputs perceptually valid (e.g., a cat remains a "cat" after mutation), existing efforts rely on imprecise and less generalizable heuristics. This study revisits two key objectives in media input mutation - perception diversity (DIV) and validity (VAL) - in a rigorous manner based on manifold, a well-developed theory capturing perceptions of high-dimensional media data in a low-dimensional space. We show important results that DIV and VAL inextricably bound each other, and prove that SOTA generative model-based methods fundamentally fail to mutate real-world media data (sacrificing either DIV or VAL). In contrast, we discuss the feasibility of mutating real-world media data with provably high DIV and VAL based on manifold. We concretize the technical solution of mutating media data of various formats (images, audio, text) in a unified manner based on manifold. Specifically, when media data are projected into a low-dimensional manifold, the data can be mutated by walking on the manifold with certain directions and step sizes. When contrasted with the input data, the mutated data exhibit encouraging DIV in the perceptual traits (e.g., lying vs. standing dog) while retaining reasonably high VAL (i.e., a dog remains a dog). We implement our techniques in DEEPWALK for testing DNNs. DEEPWALK outperforms prior methods in testing comprehensiveness and can find more error-triggering inputs with higher quality.

With the ongoing efforts to empower people with mobility impairments and the increase in technological acceptance by the general public, assistive technologies, such as collaborative robotic arms, are gaining popularity. Yet, their widespread success is limited by usability issues, specifically the disparity between user input and software control along the autonomy continuum. To address this, shared control concepts provide opportunities to combine the targeted increase of user autonomy with a certain level of computer assistance. This paper presents the free and open-source AdaptiX XR framework for developing and evaluating shared control applications in a high-resolution simulation environment. The initial framework consists of a simulated robotic arm with an example scenario in Virtual Reality (VR), multiple standard control interfaces, and a specialized recording/replay system. AdaptiX can easily be extended for specific research needs, allowing Human-Robot Interaction (HRI) researchers to rapidly design and test novel interaction methods, intervention strategies, and multi-modal feedback techniques, without requiring an actual physical robotic arm during the early phases of ideation, prototyping, and evaluation. Also, a Robot Operating System (ROS) integration enables the controlling of a real robotic arm in a PhysicalTwin approach without any simulation-reality gap. Here, we review the capabilities and limitations of AdaptiX in detail and present three bodies of research based on the framework. AdaptiX can be accessed at //adaptix.robot-research.de.

Numerous studies have demonstrated the ability of neural language models to learn various linguistic properties without direct supervision. This work takes an initial step towards exploring the less researched topic of how neural models discover linguistic properties of words, such as gender, as well as the rules governing their usage. We propose to use an artificial corpus generated by a PCFG based on French to precisely control the gender distribution in the training data and determine under which conditions a model correctly captures gender information or, on the contrary, appears gender-biased.

This paper focuses on improving the mathematical interpretability of convolutional neural networks (CNNs) in the context of image classification. Specifically, we tackle the instability issue arising in their first layer, which tends to learn parameters that closely resemble oriented band-pass filters when trained on datasets like ImageNet. Subsampled convolutions with such Gabor-like filters are prone to aliasing, causing sensitivity to small input shifts. In this context, we establish conditions under which the max pooling operator approximates a complex modulus, which is nearly shift invariant. We then derive a measure of shift invariance for subsampled convolutions followed by max pooling. In particular, we highlight the crucial role played by the filter's frequency and orientation in achieving stability. We experimentally validate our theory by considering a deterministic feature extractor based on the dual-tree complex wavelet packet transform, a particular case of discrete Gabor-like decomposition.
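As a toy illustration of why a max over shifted real-valued responses can approximate a complex modulus, the sketch below assumes a simplified model that is not taken from the paper: the complex Gabor-like response has constant modulus inside the pooling window and its phase rotates by a fixed amount `omega` per one-sample shift. The function name `max_pooled_real_part` and all parameter values are hypothetical.

```python
import cmath
import math


def max_pooled_real_part(z: complex, omega: float, window: int) -> float:
    """Max over `window` consecutive shifts of Re(z * exp(i * omega * k)).

    Assumption (toy model): shifting the input by k samples only rotates
    the phase of the complex response z by omega * k.
    """
    if window < 1:
        raise ValueError("window must be at least 1")
    return max((z * cmath.exp(1j * omega * k)).real for k in range(window))


# Hypothetical response with modulus 2.0 and an arbitrary phase.
z = cmath.rect(2.0, 0.7)

# Eight shifts at pi/4 per shift sample the whole phase circle, so the
# max of the real parts lies within a factor cos(pi/8) of the modulus.
pooled_wide = max_pooled_real_part(z, omega=math.pi / 4, window=8)

# A window of one shift is just the real part, which depends on the phase.
pooled_narrow = max_pooled_real_part(z, omega=math.pi / 4, window=1)

print(abs(z), pooled_wide, pooled_narrow)
```

In this toy model the quality of the approximation depends on how densely the window samples the phase circle, which is one way to see why the filter's frequency matters for stability.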

Graph neural networks (GNNs) are widely used for modeling complex interactions between entities represented as vertices of a graph. Despite recent efforts to theoretically analyze the expressive power of GNNs, a formal characterization of their ability to model interactions is lacking. The current paper aims to address this gap. Formalizing strength of interactions through an established measure known as separation rank, we quantify the ability of certain GNNs to model interaction between a given subset of vertices and its complement, i.e. between the sides of a given partition of input vertices. Our results reveal that the ability to model interaction is primarily determined by the partition's walk index -- a graph-theoretical characteristic defined by the number of walks originating from the boundary of the partition. Experiments with common GNN architectures corroborate this finding. As a practical application of our theory, we design an edge sparsification algorithm named Walk Index Sparsification (WIS), which preserves the ability of a GNN to model interactions when input edges are removed. WIS is simple, computationally efficient, and in our experiments has markedly outperformed alternative methods in terms of induced prediction accuracy. More broadly, it showcases the potential of improving GNNs by theoretically analyzing the interactions they can model.
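To make the notion of counting walks that originate from a partition's boundary more concrete, here is a minimal sketch. It rests on assumptions not stated in the abstract: the boundary is taken to be every vertex with at least one edge crossing the partition, the walk length is a free parameter, and the graph is undirected with no self-loops added. The helper names `boundary_vertices` and `count_boundary_walks` are hypothetical and are not the authors' implementation.

```python
from typing import Dict, List, Set


def boundary_vertices(adjacency: Dict[int, List[int]], subset: Set[int]) -> Set[int]:
    """Vertices with at least one neighbor on the other side of the partition."""
    return {
        v
        for v, neighbors in adjacency.items()
        if any((u in subset) != (v in subset) for u in neighbors)
    }


def count_boundary_walks(
    adjacency: Dict[int, List[int]], subset: Set[int], length: int
) -> int:
    """Count walks with `length` edges that start at a boundary vertex."""
    if length < 0:
        raise ValueError("length must be non-negative")
    # counts[v] = number of walks of the current length that end at v.
    counts = {v: 0 for v in adjacency}
    for v in boundary_vertices(adjacency, subset):
        counts[v] = 1
    for _ in range(length):
        extended = {v: 0 for v in adjacency}
        for v, walks_ending_here in counts.items():
            for u in adjacency[v]:
                extended[u] += walks_ending_here
        counts = extended
    return sum(counts.values())


# Path graph 0 - 1 - 2 - 3, partitioned into {0, 1} and {2, 3}.
path = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2]}
print(boundary_vertices(path, {0, 1}))        # {1, 2}
print(count_boundary_walks(path, {0, 1}, 2))  # 6
```

Under these assumptions, a partition whose boundary vertices are well connected yields a larger count than one whose boundary sits on a sparse part of the graph.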

Deception, which includes leading cyber-attackers astray with false information, has been shown to be an effective method of thwarting cyber-attacks. Earlier studies on deception in cybersecurity have focused primarily on variables such as network size and the percentage of honeypots used in games, and there has been little investigation of how the cost of probing actions affects adversarial decision-making. Understanding human decision-making when presented with choices of various costs is essential in many areas, including cybersecurity. In this paper, we use a deception game (DG) to examine the effect of different probing costs on adversarial decisions. To achieve this, we used an IBLT model and a delayed feedback mechanism to mimic knowledge of human actions. Our results were drawn from an even split of deception and no-deception conditions to compare the influence of each. Probing became slightly less frequent as its cost increased, while the proportion of attacks stayed roughly the same, although a constant cost led to a slight decrease in attacks. Overall, our results indicate that the different probing costs had no impact on the proportion of attacks but a slight, noticeable impact on the proportion of probing.

On any night in Canada, at least 35,000 individuals experience homelessness. These individuals use emergency shelters to transition out of homelessness and into permanent housing. We designed and deployed a technology to support front-line staff at the largest emergency housing shelter in Calgary, Canada. Over a period of five months in 2022, we worked closely with front-line staff to co-design an interface for supporting a holistic understanding of client context and facilitating decision-making. The tool is currently in use and our collaboration is ongoing. In this paper, we reflect on preliminary findings regarding the second iteration of the tool. We find that supporting shelter staff in understanding the human behind the data was a critical component of design. This work contributes to the literature on how data tools may be integrated into homeless shelters in a way that aligns with shelters' values.

Stories are as old as human history and are a powerful means of communicating information in an engaging way, especially in combination with visualizations. The InTaVia project is built on this intersection and has developed a platform that supports the workflow of cultural heritage experts in creating compelling visualization-based stories: from the search for relevant cultural objects and actors in a cultural knowledge graph, to the curation and visual analysis of the selected information, to the creation of stories based on these data and visualizations, which can be shared with the interested public.

Humans and animals have the ability to continually acquire, fine-tune, and transfer knowledge and skills throughout their lifespan. This ability, referred to as lifelong learning, is mediated by a rich set of neurocognitive mechanisms that together contribute to the development and specialization of our sensorimotor skills as well as to long-term memory consolidation and retrieval. Consequently, lifelong learning capabilities are crucial for autonomous agents interacting in the real world and processing continuous streams of information. However, lifelong learning remains a long-standing challenge for machine learning and neural network models since the continual acquisition of incrementally available information from non-stationary data distributions generally leads to catastrophic forgetting or interference. This limitation represents a major drawback for state-of-the-art deep neural network models that typically learn representations from stationary batches of training data, thus without accounting for situations in which information becomes incrementally available over time. In this review, we critically summarize the main challenges linked to lifelong learning for artificial learning systems and compare existing neural network approaches that alleviate, to different extents, catastrophic forgetting. We discuss well-established and emerging research motivated by lifelong learning factors in biological systems such as structural plasticity, memory replay, curriculum and transfer learning, intrinsic motivation, and multisensory integration.

While it is nearly effortless for humans to quickly assess the perceptual similarity between two images, the underlying processes are thought to be quite complex. Despite this, the most widely used perceptual metrics today, such as PSNR and SSIM, are simple, shallow functions, and fail to account for many nuances of human perception. Recently, the deep learning community has found that features of the VGG network trained on the ImageNet classification task have been remarkably useful as a training loss for image synthesis. But how perceptual are these so-called "perceptual losses"? What elements are critical for their success? To answer these questions, we introduce a new Full Reference Image Quality Assessment (FR-IQA) dataset of perceptual human judgments, orders of magnitude larger than previous datasets. We systematically evaluate deep features across different architectures and tasks and compare them with classic metrics. We find that deep features outperform all previous metrics by huge margins. More surprisingly, this result is not restricted to ImageNet-trained VGG features, but holds across different deep architectures and levels of supervision (supervised, self-supervised, or even unsupervised). Our results suggest that perceptual similarity is an emergent property shared across deep visual representations.
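To show how shallow a classic metric such as PSNR is, the sketch below implements the standard definition, 10 · log10(MAX² / MSE), for grayscale images stored as nested lists. The function name `psnr` and the default peak value of 255 (8-bit pixels) are assumptions made for this illustration; the abstract does not specify an implementation.

```python
import math
from typing import Sequence


def psnr(
    reference: Sequence[Sequence[float]],
    distorted: Sequence[Sequence[float]],
    max_value: float = 255.0,
) -> float:
    """Peak signal-to-noise ratio in decibels between two same-sized images."""
    if len(reference) != len(distorted) or any(
        len(row_r) != len(row_d) for row_r, row_d in zip(reference, distorted)
    ):
        raise ValueError("images must have the same shape")
    squared_errors = [
        (r - d) ** 2
        for row_r, row_d in zip(reference, distorted)
        for r, d in zip(row_r, row_d)
    ]
    if not squared_errors:
        raise ValueError("images must not be empty")
    mse = sum(squared_errors) / len(squared_errors)
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_value ** 2 / mse)


# Two-pixel example: one pixel matches, the other is off by the full range.
print(psnr([[0, 255]], [[0, 0]]))
```

The score depends only on per-pixel squared differences, so two distortions with the same mean squared error receive the same PSNR regardless of how different they look to a human observer.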