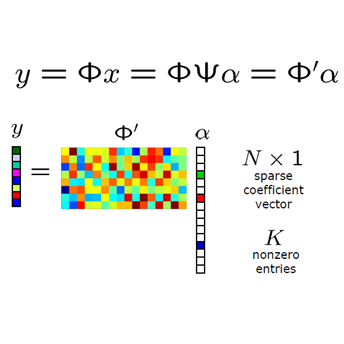

In this work, we present some new results for compressed sensing and phase retrieval. For compressed sensing, it is shown that if the unknown $n$-dimensional vector can be expressed as a linear combination of $s$ unknown Vandermonde vectors (with Fourier vectors as a special case) and the measurement matrix is a Vandermonde matrix, exact recovery of the vector with $2s$ measurements and $O(\mathrm{poly}(s))$ complexity is possible when $n \geq 2s$. From these results, a measurement matrix is constructed from which it is possible to recover $s$-sparse $n$-dimensional vectors for $n \geq 2s$ with as few as $2s$ measurements and with a recovery algorithm of $O(\mathrm{poly}(s))$ complexity. In the second part of the work, these results are extended to the challenging problem of phase retrieval. The most significant discovery in this direction is that if the unknown $n$-dimensional vector is composed of $s$ frequencies with at least one being non-harmonic, $n \geq 4s - 1$ and we take at least $8s-3$ Fourier measurements, there are, remarkably, only two possible vectors producing the observed measurement values and they are easily obtainable from each other. The two vectors can be found by an algorithm with only $O(\mathrm{poly}(s))$ complexity. An immediate application of the new result is construction of a measurement matrix from which it is possible to recover all $s$-sparse $n$-dimensional signals (up to a global phase) from $O(s)$ magnitude-only measurements and $O(\mathrm{poly}(s))$ recovery complexity when $n \geq 4s - 1$.
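The claim that $2s$ measurements suffice can be illustrated with the classical Prony method, which recovers $s$ unknown nodes and their coefficients from $2s$ consecutive samples of a sum of $s$ geometric sequences. The sketch below is not necessarily the authors' algorithm. It assumes the samples are the first $2s$ entries of the vector itself, not general Vandermonde measurements, and that there is no noise. The function name `prony` and the test signal are hypothetical.

```python
import numpy as np

def prony(samples, s):
    """Recover s nodes z_k and coefficients c_k from 2s samples
    y[j] = sum_k c_k * z_k**j, j = 0..2s-1 (noise-free sketch)."""
    y = np.asarray(samples, dtype=complex)
    # Linear prediction: sum_j a[j] * y[i + j] = -y[i + s] for i = 0..s-1.
    hankel = np.array([y[i:i + s] for i in range(s)])
    a = np.linalg.solve(hankel, -y[s:2 * s])
    # The nodes are the roots of z^s + a[s-1] z^(s-1) + ... + a[0].
    nodes = np.roots(np.concatenate(([1.0], a[::-1])))
    # Coefficients follow from a Vandermonde least-squares fit.
    vand = np.vander(nodes, N=2 * s, increasing=True).T  # vand[j, k] = nodes[k]**j
    coeffs, *_ = np.linalg.lstsq(vand, y[:2 * s], rcond=None)
    return nodes, coeffs

# Hypothetical example: n = 16, s = 2, one non-harmonic frequency.
n, s = 16, 2
true_nodes = np.exp(2j * np.pi * np.array([0.10, 0.37]))
true_coeffs = np.array([1.5, -0.7 + 0.2j])
x = np.vander(true_nodes, N=n, increasing=True).T @ true_coeffs

nodes, coeffs = prony(x[:2 * s], s)
x_hat = np.vander(nodes, N=n, increasing=True).T @ coeffs
print("max reconstruction error:", np.max(np.abs(x_hat - x)))
```

The cost is dominated by an $s \times s$ linear solve and a degree-$s$ root-finding step, which is consistent with the $O(\mathrm{poly}(s))$ complexity stated above and does not depend on $n$.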

Related content

We investigate the emergence of periodic behavior in opinion dynamics and its underlying geometry. For this, we use a bounded-confidence model with contrarian agents in a convolution social network. This means that agents adapt their opinions by interacting with their neighbors in a time-varying social network. Being contrarian, the agents are kept from reaching consensus. This is the key feature that allows the emergence of cyclical trends. We show that the systems either converge to nonconsensual equilibrium or are attracted to periodic or quasi-periodic orbits. We bound the dimension of the attractors and the period of cyclical trends. We exhibit instances where each orbit is dense and uniformly distributed within its attractor. We also investigate the case of randomly changing social networks.

We present a new adaptive algorithm for learning discrete distributions under distribution drift. In this setting, we observe a sequence of independent samples from a discrete distribution that is changing over time, and the goal is to estimate the current distribution. Since we have access to only a single sample for each time step, a good estimation requires a careful choice of the number of past samples to use. To use more samples, we must resort to samples further in the past, and we incur a drift error due to the bias introduced by the change in distribution. On the other hand, if we use a small number of past samples, we incur a large statistical error as the estimation has a high variance. We present a novel adaptive algorithm that can solve this trade-off without any prior knowledge of the drift. Unlike previous adaptive results, our algorithm characterizes the statistical error using data-dependent bounds. This technicality enables us to overcome the limitations of the previous work that require a fixed finite support whose size is known in advance and that cannot change over time. Additionally, we can obtain tighter bounds depending on the complexity of the drifting distribution, and also consider distributions with infinite support.
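The trade-off described above can be made concrete with a fixed-window empirical estimator. This is a minimal sketch, not the adaptive algorithm of the abstract: the window size `r` is chosen by hand here, whereas the paper's contribution is choosing it from data. The function name `window_estimate` and the toy sample sequence are hypothetical.

```python
from collections import Counter

def window_estimate(samples, r):
    """Empirical distribution of the r most recent samples.

    Small r: low drift error but high variance.
    Large r: low variance but bias from older, drifted samples.
    """
    if r < 1:
        raise ValueError("window size r must be at least 1")
    recent = samples[-r:]
    counts = Counter(recent)
    return {symbol: count / len(recent) for symbol, count in counts.items()}

# Toy sequence in which the distribution drifts from 'a' towards 'b'.
samples = list("aabbbb")
short_window = window_estimate(samples, 2)  # uses only recent samples
long_window = window_estimate(samples, 6)   # also uses the older 'a' samples
print(short_window, long_window)
```

Because the estimate is stored as a dictionary keyed by observed symbols, the support does not need to be fixed or known in advance, which mirrors the setting the abstract targets.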

We study the algorithmic complexity of computing persistent homology of a randomly generated filtration. Specifically, we prove upper bounds for the average fill-in (number of non-zero entries) of the boundary matrix on \v{C}ech, Vietoris--Rips and Erd\H{o}s--R\'enyi filtrations after matrix reduction. Our bounds show that the reduced matrix is expected to be significantly sparser than what the general worst-case predicts. Our method is based on previous results on the expected Betti numbers of the corresponding complexes. We establish a link between these results and the fill-in of the boundary matrix. In the $1$-dimensional case, our bound for \v{C}ech and Vietoris--Rips complexes is asymptotically tight up to a logarithmic factor. We also provide an Erd\H{o}s--R\'enyi filtration realising the worst-case.
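For readers unfamiliar with the quantity being bounded, the following sketch runs the standard column reduction over $\mathbb{Z}/2$ and counts the fill-in of the reduced boundary matrix. It assumes the textbook left-to-right reduction and a sparse column representation as sets of row indices. The abstract does not specify which reduction variant is analysed, and the names `reduce_boundary_matrix` and `fill_in` are hypothetical.

```python
def reduce_boundary_matrix(columns):
    """Standard persistence reduction over Z/2.

    columns[j] is the set of row indices of the non-zero entries of
    column j, with simplices ordered by the filtration.
    """
    reduced = [set(col) for col in columns]
    pivot_owner = {}  # lowest non-zero row index -> column that owns it
    for j, col in enumerate(reduced):
        # Add earlier columns until the pivot is new or the column is empty.
        while col and max(col) in pivot_owner:
            col ^= reduced[pivot_owner[max(col)]]  # addition mod 2
        if col:
            pivot_owner[max(col)] = j
    return reduced

def fill_in(columns):
    """Number of non-zero entries in the matrix."""
    return sum(len(col) for col in columns)

# Filtered triangle: vertices 0, 1, 2; edges 3 = {0,1}, 4 = {1,2}, 5 = {0,2};
# and the 2-simplex 6 with boundary {3, 4, 5}.
boundary = [set(), set(), set(), {0, 1}, {1, 2}, {0, 2}, {3, 4, 5}]
reduced = reduce_boundary_matrix(boundary)
print(fill_in(boundary), fill_in(reduced))
```

In this small example the reduction empties the column of the edge that closes the cycle, so the reduced matrix has fewer non-zero entries than the input. In general the reduction can also create new entries, which is why bounds on the expected fill-in are of interest.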

In this work, we investigate the effect of momentum on the optimisation trajectory of gradient descent. We leverage a continuous-time approach in the analysis of momentum gradient descent with step size $\gamma$ and momentum parameter $\beta$ that allows us to identify an intrinsic quantity $\lambda = \frac{ \gamma }{ (1 - \beta)^2 }$ which uniquely defines the optimisation path and provides a simple acceleration rule. When training a $2$-layer diagonal linear network in an overparametrised regression setting, we characterise the recovered solution through an implicit regularisation problem. We then prove that small values of $\lambda$ help to recover sparse solutions. Finally, we give similar but weaker results for stochastic momentum gradient descent. We provide numerical experiments which support our claims.
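As a rough illustration of the intrinsic quantity $\lambda = \frac{\gamma}{(1-\beta)^2}$, the sketch below runs momentum gradient descent for two $(\gamma, \beta)$ pairs that share the same $\lambda$. It assumes the standard heavy-ball update $w_{k+1} = w_k - \gamma \nabla f(w_k) + \beta (w_k - w_{k-1})$, which the abstract does not spell out, and uses a hypothetical quadratic objective in place of the diagonal linear network studied in the paper.

```python
import numpy as np

def intrinsic_lambda(gamma, beta):
    """lambda = gamma / (1 - beta)^2, as defined in the abstract."""
    return gamma / (1.0 - beta) ** 2

def heavy_ball(grad, w0, gamma, beta, steps):
    """Heavy-ball momentum gradient descent with zero initial velocity."""
    w_prev = np.array(w0, dtype=float)
    w = w_prev.copy()
    path = [w.copy()]
    for _ in range(steps):
        w_next = w - gamma * grad(w) + beta * (w - w_prev)
        w_prev, w = w, w_next
        path.append(w.copy())
    return np.array(path)

# Hypothetical objective f(w) = 0.5 * (w_0^2 + 4 * w_1^2).
curvature = np.array([1.0, 4.0])
grad = lambda w: curvature * w

# Two settings with the same lambda = 0.01 but different effective
# step sizes gamma / (1 - beta).
lam_a = intrinsic_lambda(0.01, 0.0)
lam_b = intrinsic_lambda(0.0025, 0.5)
path_a = heavy_ball(grad, [1.0, 1.0], gamma=0.01, beta=0.0, steps=2000)
path_b = heavy_ball(grad, [1.0, 1.0], gamma=0.0025, beta=0.5, steps=4000)
print(lam_a, lam_b, path_a[-1], path_b[-1])
```

Plotting `path_a` and `path_b` in the $(w_0, w_1)$ plane is one way to check how closely the two discrete trajectories follow the same curve. The second run uses half the effective step size $\gamma / (1 - \beta)$, so it is given twice as many iterations.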

Building upon the exact methods presented in our earlier work [J. Complexity, 2022], we introduce a heuristic approach for the star discrepancy subset selection problem. The heuristic gradually improves the current-best subset by replacing one of its elements at a time. While we prove that the heuristic does not necessarily return an optimal solution, we obtain very promising results for all tested dimensions. For example, for moderate point set sizes $30 \leq n \leq 240$ in dimension 6, we obtain point sets with $L_{\infty}$ star discrepancy up to 35% better than that of the first $n$ points of the Sobol' sequence. Our heuristic works in all dimensions, the main limitation being the precision of the discrepancy calculation algorithms. We also provide a comparison with a recent energy functional introduced by Steinerberger [J. Complexity, 2019], showing that our heuristic performs better on all tested instances.

In this work, we introduce an efficient generation procedure to produce synthetic multi-modal datasets of fluid simulations. The procedure can reproduce the dynamics of fluid flows and allows for exploring and learning various properties of their complex behavior, from distinct perspectives and modalities. We employ our framework to generate a set of thoughtfully designed training datasets, which attempt to span specific fluid simulation scenarios in a meaningful way. The properties of our contributions are demonstrated by evaluating recently published algorithms for neural fluid simulation and fluid inverse rendering tasks using our benchmark datasets. Our contribution aims to fulfill the community's need for standardized training data, fostering more reproducible and robust research.

In this article, we study the relationship between notions of depth for sequences, namely, Bennett's notions of strong and weak depth, and deep $\Pi^0_1$ classes, introduced by the authors and motivated by previous work of Levin. For the first main result of the study, we show that every member of a $\Pi^0_1$ class is order-deep, a property that implies strong depth. From this result, we obtain new examples of strongly deep sequences based on properties studied in computability theory and algorithmic randomness. We further show that not every strongly deep sequence is a member of a deep $\Pi^0_1$ class. For the second main result, we show that the collection of strongly deep sequences is negligible, which is equivalent to the statement that the probability of computing a strongly deep sequence with some random oracle is 0, a property also shared by every deep $\Pi^0_1$ class. Finally, we show that variants of strong depth, given in terms of a priori complexity and monotone complexity, are equivalent to weak depth.

It has been shown that deep neural networks are prone to overfitting on biased training data. To address this issue, meta-learning employs a meta model to correct the training bias. Despite its promising performance, very slow training is currently the bottleneck of meta-learning approaches. In this paper, we introduce a novel Faster Meta Update Strategy (FaMUS) to replace the most expensive step in the meta gradient computation with a faster layer-wise approximation. We empirically find that FaMUS yields not only a reasonably accurate but also a low-variance approximation of the meta gradient. We conduct extensive experiments to verify the proposed method on two tasks. We show that our method saves two-thirds of the training time while maintaining comparable, or even achieving better, generalization performance. In particular, our method achieves state-of-the-art performance on both synthetic and realistic noisy labels, and obtains promising performance on long-tailed recognition on standard benchmarks.

We present a new method to learn video representations from large-scale unlabeled video data. Ideally, this representation will be generic and transferable, directly usable for new tasks such as action recognition and zero or few-shot learning. We formulate unsupervised representation learning as a multi-modal, multi-task learning problem, where the representations are shared across different modalities via distillation. Further, we introduce the concept of loss function evolution by using an evolutionary search algorithm to automatically find an optimal combination of loss functions capturing many (self-supervised) tasks and modalities. Third, we propose an unsupervised representation evaluation metric using distribution matching to a large unlabeled dataset as a prior constraint, based on Zipf's law. This unsupervised constraint, which is not guided by any labeling, produces similar results to weakly-supervised, task-specific ones. The proposed unsupervised representation learning results in a single RGB network and outperforms previous methods. Notably, it is also more effective than several label-based methods (e.g., ImageNet), with the exception of large, fully labeled video datasets.

In this paper, we present an accurate and scalable approach to the face clustering task. We aim at grouping a set of faces by their potential identities. We formulate this task as a link prediction problem: a link exists between two faces if they are of the same identity. The key idea is that the local context in the feature space around an instance (face) contains rich information about the linkage relationship between this instance and its neighbors. By constructing sub-graphs around each instance as input data, which depict the local context, we utilize a graph convolution network (GCN) to perform reasoning and infer the likelihood of linkage between pairs in the sub-graphs. Experiments show that our method is more robust to the complex distribution of faces than conventional methods, yielding favorably comparable results to state-of-the-art methods on standard face clustering benchmarks, and is scalable to large datasets. Furthermore, we show that the proposed method does not need the number of clusters as a prior, is aware of noise and outliers, and can be extended to a multi-view version for higher clustering accuracy.