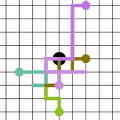

Given a graph $G$, a query node $q$, and an integer $k$, community search (CS) seeks a cohesive subgraph (measured by community models such as $k$-core or $k$-truss) from $G$ that contains $q$. It is difficult for ordinary users with less knowledge of graphs' complexity to set an appropriate $k$. Even if we define quite a large $k$, the community size returned by CS is often too large for users to gain much insight about it. Compared against the entire community, key-members in the community appear more valuable than others. To contend with this, we focus on Community Key-members Search problem (CKS). We turn our perspective to the key-members in the community containing $q$ instead of the entire community. To solve CKS problem, we first propose an exact algorithm based on truss decomposition as a baseline. Then, we present four random walk-based optimized algorithms to achieve a trade-off between effectiveness and efficiency, by carefully considering three important cohesiveness features in the design of transition matrix. As a result, we return key-members according to the stationary distribution when random walk converges. We theoretically analyze the rationality of designing the cohesiveness-aware transition matrix for random walk, through Bayesian theory based on Gaussian Mixture Model with Box-Cox Transformation and Copula Function Fitting. Moreover, we propose a lightweight refinement method following an ``expand-replace" manner to further optimize the result with little overhead, and we extend our method for CKS with multiple query nodes. Comprehensive experimental studies on various real-world datasets demonstrate our method's superiority.

相關內容

NSFW (Not Safe for Work) content, in the context of a dialogue, can have severe side effects on users in open-domain dialogue systems. However, research on detecting NSFW language, especially sexually explicit content, within a dialogue context has significantly lagged behind. To address this issue, we introduce CensorChat, a dialogue monitoring dataset aimed at NSFW dialogue detection. Leveraging knowledge distillation techniques involving GPT-4 and ChatGPT, this dataset offers a cost-effective means of constructing NSFW content detectors. The process entails collecting real-life human-machine interaction data and breaking it down into single utterances and single-turn dialogues, with the chatbot delivering the final utterance. ChatGPT is employed to annotate unlabeled data, serving as a training set. Rationale validation and test sets are constructed using ChatGPT and GPT-4 as annotators, with a self-criticism strategy for resolving discrepancies in labeling. A BERT model is fine-tuned as a text classifier on pseudo-labeled data, and its performance is assessed. The study emphasizes the importance of AI systems prioritizing user safety and well-being in digital conversations while respecting freedom of expression. The proposed approach not only advances NSFW content detection but also aligns with evolving user protection needs in AI-driven dialogues.

We consider extensions of the Shannon relative entropy, referred to as $f$-divergences.Three classical related computational problems are typically associated with these divergences: (a) estimation from moments, (b) computing normalizing integrals, and (c) variational inference in probabilistic models. These problems are related to one another through convex duality, and for all them, there are many applications throughout data science, and we aim for computationally tractable approximation algorithms that preserve properties of the original problem such as potential convexity or monotonicity. In order to achieve this, we derive a sequence of convex relaxations for computing these divergences from non-centered covariance matrices associated with a given feature vector: starting from the typically non-tractable optimal lower-bound, we consider an additional relaxation based on ``sums-of-squares'', which is is now computable in polynomial time as a semidefinite program. We also provide computationally more efficient relaxations based on spectral information divergences from quantum information theory. For all of the tasks above, beyond proposing new relaxations, we derive tractable convex optimization algorithms, and we present illustrations on multivariate trigonometric polynomials and functions on the Boolean hypercube.

We consider a category-level perception problem, where one is given 2D or 3D sensor data picturing an object of a given category (e.g., a car), and has to reconstruct the 3D pose and shape of the object despite intra-class variability (i.e., different car models have different shapes). We consider an active shape model, where -- for an object category -- we are given a library of potential CAD models describing objects in that category, and we adopt a standard formulation where pose and shape are estimated from 2D or 3D keypoints via non-convex optimization. Our first contribution is to develop PACE3D* and PACE2D*, the first certifiably optimal solvers for pose and shape estimation using 3D and 2D keypoints, respectively. Both solvers rely on the design of tight (i.e., exact) semidefinite relaxations. Our second contribution is to develop outlier-robust versions of both solvers, named PACE3D# and PACE2D#. Towards this goal, we propose ROBIN, a general graph-theoretic framework to prune outliers, which uses compatibility hypergraphs to model measurements' compatibility. We show that in category-level perception problems these hypergraphs can be built from the winding orders of the keypoints (in 2D) or their convex hulls (in 3D), and many outliers can be filtered out via maximum hyperclique computation. The last contribution is an extensive experimental evaluation. Besides providing an ablation study on simulated datasets and on the PASCAL3D+ dataset, we combine our solver with a deep keypoint detector, and show that PACE3D# improves over the state of the art in vehicle pose estimation in the ApolloScape datasets, and its runtime is compatible with practical applications. We release our code at //github.com/MIT-SPARK/PACE.

We consider a category-level perception problem, where one is given 3D sensor data picturing an object of a given category (e.g. a car), and has to reconstruct the pose and shape of the object despite intra-class variability (i.e. different car models have different shapes). We consider an active shape model, where -- for an object category -- we are given a library of potential CAD models describing objects in that category, and we adopt a standard formulation where pose and shape estimation are formulated as a non-convex optimization. Our first contribution is to provide the first certifiably optimal solver for pose and shape estimation. In particular, we show that rotation estimation can be decoupled from the estimation of the object translation and shape, and we demonstrate that (i) the optimal object rotation can be computed via a tight (small-size) semidefinite relaxation, and (ii) the translation and shape parameters can be computed in closed-form given the rotation. Our second contribution is to add an outlier rejection layer to our solver, hence making it robust to a large number of misdetections. Towards this goal, we wrap our optimal solver in a robust estimation scheme based on graduated non-convexity. To further enhance robustness to outliers, we also develop the first graph-theoretic formulation to prune outliers in category-level perception, which removes outliers via convex hull and maximum clique computations; the resulting approach is robust to 70%-90% outliers. Our third contribution is an extensive experimental evaluation. Besides providing an ablation study on a simulated dataset and on the PASCAL3D+ dataset, we combine our solver with a deep-learned keypoint detector, and show that the resulting approach improves over the state of the art in vehicle pose estimation in the ApolloScape datasets.

We study the problem of Out-of-Distribution (OOD) detection, that is, detecting whether a learning algorithm's output can be trusted at inference time. While a number of tests for OOD detection have been proposed in prior work, a formal framework for studying this problem is lacking. We propose a definition for the notion of OOD that includes both the input distribution and the learning algorithm, which provides insights for the construction of powerful tests for OOD detection. We propose a multiple hypothesis testing inspired procedure to systematically combine any number of different statistics from the learning algorithm using conformal p-values. We further provide strong guarantees on the probability of incorrectly classifying an in-distribution sample as OOD. In our experiments, we find that threshold-based tests proposed in prior work perform well in specific settings, but not uniformly well across different types of OOD instances. In contrast, our proposed method that combines multiple statistics performs uniformly well across different datasets and neural networks.

We propose a new algorithm for variance reduction when estimating $f(X_T)$ where $X$ is the solution to some stochastic differential equation and $f$ is a test function. The new estimator is $(f(X^1_T) + f(X^2_T))/2$, where $X^1$ and $X^2$ have same marginal law as $X$ but are pathwise correlated so that to reduce the variance. The optimal correlation function $\rho$ is approximated by a deep neural network and is calibrated along the trajectories of $(X^1, X^2)$ by policy gradient and reinforcement learning techniques. Finding an optimal coupling given marginal laws has links with maximum optimal transport.

Many multi-object tracking (MOT) methods follow the framework of "tracking by detection", which associates the target objects-of-interest based on the detection results. However, due to the separate models for detection and association, the tracking results are not optimal.Moreover, the speed is limited by some cumbersome association methods to achieve high tracking performance. In this work, we propose an end-to-end MOT method, with a Gaussian filter-inspired dynamic search region refinement module to dynamically filter and refine the search region by considering both the template information from the past frames and the detection results from the current frame with little computational burden, and a lightweight attention-based tracking head to achieve the effective fine-grained instance association. Extensive experiments and ablation study on MOT17 and MOT20 datasets demonstrate that our method can achieve the state-of-the-art performance with reasonable speed.

We propose a novel protocol for computing a circuit which implements the multi-party private set intersection functionality (PSI). Circuit-based approach has advantages over using custom protocols to achieve this task, since many applications of PSI do not require the computation of the intersection itself, but rather specific functional computations over the items in the intersection. Our protocol represents the pioneering circuit-based multi-party PSI protocol, which builds upon and optimizes the two-party SCS \cite{huang2012private} protocol. By using secure computation between two parties, our protocol sidesteps the complexities associated with multi-party interactions and demonstrates good scalability. In order to mitigate the high overhead associated with circuit-based constructions, we have further enhanced our protocol by utilizing simple hashing scheme and permutation-based hash functions. These tricks have enabled us to minimize circuit size by employing bucketing techniques while simultaneously attaining noteworthy reductions in both computation and communication expenses.

Most object recognition approaches predominantly focus on learning discriminative visual patterns while overlooking the holistic object structure. Though important, structure modeling usually requires significant manual annotations and therefore is labor-intensive. In this paper, we propose to "look into object" (explicitly yet intrinsically model the object structure) through incorporating self-supervisions into the traditional framework. We show the recognition backbone can be substantially enhanced for more robust representation learning, without any cost of extra annotation and inference speed. Specifically, we first propose an object-extent learning module for localizing the object according to the visual patterns shared among the instances in the same category. We then design a spatial context learning module for modeling the internal structures of the object, through predicting the relative positions within the extent. These two modules can be easily plugged into any backbone networks during training and detached at inference time. Extensive experiments show that our look-into-object approach (LIO) achieves large performance gain on a number of benchmarks, including generic object recognition (ImageNet) and fine-grained object recognition tasks (CUB, Cars, Aircraft). We also show that this learning paradigm is highly generalizable to other tasks such as object detection and segmentation (MS COCO). Project page: //github.com/JDAI-CV/LIO.

Multi-paragraph reasoning is indispensable for open-domain question answering (OpenQA), which receives less attention in the current OpenQA systems. In this work, we propose a knowledge-enhanced graph neural network (KGNN), which performs reasoning over multiple paragraphs with entities. To explicitly capture the entities' relatedness, KGNN utilizes relational facts in knowledge graph to build the entity graph. The experimental results show that KGNN outperforms in both distractor and full wiki settings than baselines methods on HotpotQA dataset. And our further analysis illustrates KGNN is effective and robust with more retrieved paragraphs.