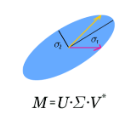

In this paper a two-sided, parallel Kogbetliantz-type algorithm for the hyperbolic singular value decomposition (HSVD) of real and complex square matrices is developed, under the single assumption that the input matrix, of order $n$, admits such a decomposition into the product of a unitary, a non-negative diagonal, and a $J$-unitary matrix, where $J$ is a given diagonal matrix of positive and negative signs. When $J=\pm I$, the proposed algorithm computes the ordinary SVD. The paper's most important contribution (a derivation of formulas for the HSVD of $2\times 2$ matrices) is presented first, followed by the details of their implementation in floating-point arithmetic. Next, the effects of the hyperbolic transformations on the columns of the iteration matrix are discussed. These effects then guide a redesign of the dynamic pivot ordering, already a well-established pivot strategy for the ordinary Kogbetliantz algorithm, for the general $n\times n$ HSVD. A heuristic but sound convergence criterion is then proposed, which contributes to the high accuracy demonstrated in the numerical testing results. The $J$-Kogbetliantz algorithm as presented here is intrinsically slow, but is nevertheless usable for matrices of small orders.
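To make the notion of a $J$-unitary factor concrete, the following minimal sketch checks the defining identity $V^T J V = J$ for a real $2\times 2$ hyperbolic rotation with $J=\operatorname{diag}(1,-1)$. The function name `hyperbolic_rotation` and the angle value are illustrative assumptions, not taken from the paper, and the sketch does not reproduce the paper's $2\times 2$ HSVD formulas.

```python
import numpy as np

def hyperbolic_rotation(t):
    """Real 2x2 hyperbolic rotation (hypothetical helper, for illustration only)."""
    c, s = np.cosh(t), np.sinh(t)
    return np.array([[c, s],
                     [s, c]])

# Signature matrix with one positive and one negative sign.
J = np.diag([1.0, -1.0])

V = hyperbolic_rotation(0.7)

# J-unitarity (real case): V^T J V = J, because cosh^2(t) - sinh^2(t) = 1.
residual = np.linalg.norm(V.T @ J @ V - J)

# Unlike an ordinary rotation, V is not orthogonal, so it can change column norms.
orthogonality_defect = np.linalg.norm(V.T @ V - np.eye(2))
```

Here `residual` is zero up to roundoff while `orthogonality_defect` is not, which is one way to see why hyperbolic transformations can affect the column norms of the iteration matrix.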

Related content

This paper presents an efficient reversible algorithm for linear regression, both with and without ridge regression. Our reversible algorithm matches the asymptotic time and space complexity of standard irreversible algorithms for this problem. This result requires extending the analysis of efficient reversible matrix multiplication to rectangular matrices and to matrix inversion.

The Sinc-Nystr\"{o}m method is a high-order numerical method based on Sinc basis functions for discretizing evolutionary differential equations in time. In this method, however, all the time steps have to be solved in one shot (i.e., all-at-once), which results in a large-scale nonsymmetric dense system that is expensive to handle. In this paper, we propose and analyze a preconditioner for such dense systems arising from both parabolic and hyperbolic PDEs. The proposed preconditioner is a low-rank perturbation of the original matrix and has two advantages. First, we show that the eigenvalues of the preconditioned system are highly clustered, with uniform bounds that are independent of the mesh parameters. Second, the preconditioner can be applied in parallel for all the Sinc time points via a block diagonalization procedure. This parallel potential is due to the fact that the eigenvector matrix of the diagonalization is well conditioned. In particular, we show that the condition number of the eigenvector matrix grows only mildly as the number of Sinc time points increases, and thus the roundoff error arising from the diagonalization procedure is controllable. The effectiveness of our proposed parallel-in-time (PinT) preconditioners is verified by the observed mesh-independent convergence rates of the preconditioned GMRES in the reported numerical examples.

The asymptotic behaviour of Linear Spectral Statistics (LSS) of the smoothed periodogram estimator of the spectral coherency matrix of a complex Gaussian high-dimensional time series $(\y_n)_{n \in \mathbb{Z}}$ with independent components is studied under the asymptotic regime where the sample size $N$ converges towards $+\infty$ while the dimension $M$ of $\y$ and the smoothing span of the estimator grow to infinity at the same rate in such a way that $\frac{M}{N} \rightarrow 0$. It is established that, at each frequency, the estimated spectral coherency matrix is close to the sample covariance matrix of an independent identically $\mathcal{N}_{\mathbb{C}}(0,\I_M)$ distributed sequence, and that its empirical eigenvalue distribution converges towards the Marcenko-Pastur distribution. This allows us to conclude that each LSS has a deterministic behaviour that can be evaluated explicitly. Using concentration inequalities, it is shown that the supremum over the frequencies of the deviation of each LSS from its deterministic approximation is of the order of $\frac{1}{M} + \frac{\sqrt{M}}{N}+ (\frac{M}{N})^{3}$. Numerical simulations support our results.

We introduce a new real-valued invariant called the natural slope of a hyperbolic knot in the 3-sphere, which is defined in terms of its cusp geometry. We show that twice the knot signature and the natural slope differ by at most a constant times the hyperbolic volume divided by the cube of the injectivity radius. This inequality was discovered using machine learning to detect relationships between various knot invariants. It has applications to Dehn surgery and to 4-ball genus. We also show a refined version of the inequality where the upper bound is a linear function of the volume, and the slope is corrected by terms corresponding to short geodesics that link the knot an odd number of times.

We propose a block version of the randomized Gram-Schmidt process for computing a QR factorization of a matrix. Our algorithm inherits the major properties of its single-vector analogue from [Balabanov and Grigori, 2020], such as higher efficiency than the classical Gram-Schmidt algorithm and the stability of the modified Gram-Schmidt algorithm, which can be refined even further by using multi-precision arithmetic. As in [Balabanov and Grigori, 2020], our algorithm has the advantage of performing the standard high-dimensional operations that define the overall computational cost with a unit roundoff independent of the dominant dimension of the matrix. This unique feature makes the methodology especially useful for large-scale problems computed on low-precision arithmetic architectures. Block algorithms are advantageous in terms of performance, as they are mainly based on cache-friendly matrix-wise operations and can reduce communication cost in high-performance computing. The block Gram-Schmidt orthogonalization is the key element in the block Arnoldi procedure for the construction of a Krylov basis, which in turn is used in GMRES and Rayleigh-Ritz methods for the solution of linear systems and clustered eigenvalue problems. In this article, we develop randomized versions of these methods, based on the proposed randomized Gram-Schmidt algorithm, and validate them on nontrivial numerical examples.
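As a rough illustration of the single-vector idea that the block algorithm builds on, the sketch below orthogonalizes columns with respect to a sketched inner product: projection coefficients come from a small least-squares problem on the sketches rather than from high-dimensional inner products. It is a simplified, non-block sketch under stated assumptions (a dense Gaussian sketching matrix, a hypothetical sketch dimension `k`, ordinary double precision throughout) and not the authors' implementation.

```python
import numpy as np

def randomized_gram_schmidt(W, k, seed=0):
    """Simplified single-vector randomized Gram-Schmidt (illustrative sketch).

    Returns Q, R, Theta with W = Q R, where the sketch Theta @ Q has
    orthonormal columns. Q itself is only approximately orthonormal.
    """
    rng = np.random.default_rng(seed)
    n, m = W.shape
    # Assumption: a scaled Gaussian matrix serves as the random sketch.
    Theta = rng.standard_normal((k, n)) / np.sqrt(k)
    Q = np.zeros((n, m))
    S = np.zeros((k, m))   # S holds Theta @ Q, column by column
    R = np.zeros((m, m))
    for i in range(m):
        w = W[:, i]
        q = w.copy()
        if i > 0:
            p = Theta @ w
            # Small least-squares problem in the sketched (k-dimensional) space.
            r, *_ = np.linalg.lstsq(S[:, :i], p, rcond=None)
            R[:i, i] = r
            q = w - Q[:, :i] @ r
        s = Theta @ q
        R[i, i] = np.linalg.norm(s)   # normalize using the sketched norm
        Q[:, i] = q / R[i, i]
        S[:, i] = s / R[i, i]
    return Q, R, Theta

# Small usage example on a random tall matrix.
W = np.random.default_rng(1).standard_normal((200, 10))
Q, R, Theta = randomized_gram_schmidt(W, k=40)
S = Theta @ Q
```

The factorization `W = Q @ R` holds by construction, and `S.T @ S` is the identity up to roundoff; how close `Q.T @ Q` is to the identity depends on the quality of the sketch.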

With the goal of improving spectral efficiency, complex rotation-based precoding and power allocation schemes are developed for two multiple-input multiple-output (MIMO) communication systems, namely, simultaneous wireless information and power transfer (SWIPT) and physical layer multicasting. While the state-of-the-art solutions for these problems use very different approaches, the proposed approach treats them similarly using a general tool and works efficiently for any number of antennas at each node. Through modeling the precoder using complex rotation matrices, objective functions (transmission rates) of the above systems can be formulated and solved in a similar structure. Hence, this approach simplifies signaling design for MIMO systems and can reduce the hardware complexity by having one set of parameters to optimize. Extensive numerical results show that the proposed approach outperforms state-of-the-art solutions for both problems. It increases transmission rates for multicasting and achieves higher rate-energy regions in the SWIPT case. In both cases, the improvement is significant (20%-30%) in practically important settings where the users have one or two antennas. Furthermore, the new precoders are less time-consuming than the existing solutions.

We present a new data structure to approximate accurately and efficiently a polynomial $f$ of degree $d$ given as a list of coefficients. Its properties allow us to improve the state-of-the-art bounds on the bit complexity for the problems of root isolation and approximate multipoint evaluation. This data structure also leads to a new geometric criterion to detect ill-conditioned polynomials, implying notably that the standard condition number of the zeros of a polynomial is at least exponential in the number of roots of modulus less than $1/2$ or greater than $2$. Given a polynomial $f$ of degree $d$ with $\|f\|_1 \leq 2^\tau$ for $\tau \geq 1$, isolating all its complex roots or evaluating it at $d$ points can be done with a quasi-linear number of arithmetic operations. However, considering the bit complexity, the state-of-the-art algorithms require at least $d^{3/2}$ bit operations even for well-conditioned polynomials and when the accuracy required is low. Given a positive integer $m$, we can compute our new data structure and evaluate $f$ at $d$ points in the unit disk with an absolute error less than $2^{-m}$ in $\widetilde O(d(\tau+m))$ bit operations, where $\widetilde O(\cdot)$ means that we omit logarithmic factors. We also show that if $\kappa$ is the absolute condition number of the zeros of $f$, then we can isolate all the roots of $f$ in $\widetilde O(d(\tau + \log \kappa))$ bit operations. Moreover, our algorithms are simple to implement. For approximating the complex roots of a polynomial, we implemented a small prototype in \verb|Python/NumPy| that is an order of magnitude faster than the state-of-the-art solver \verb/MPSolve/ for high degree polynomials with random coefficients.

This paper develops simple feed-forward neural networks that achieve the universal approximation property for all continuous functions with a fixed finite number of neurons. These neural networks are simple because they are designed with a simple and computable continuous activation function $\sigma$ leveraging a triangular-wave function and a softsign function. We prove that $\sigma$-activated networks with width $36d(2d+1)$ and depth $11$ can approximate any continuous function on a $d$-dimensional hypercube within an arbitrarily small error. Hence, for supervised learning and its related regression problems, the hypothesis space generated by these networks with a size not smaller than $36d(2d+1)\times 11$ is dense in the space of continuous functions. Furthermore, classification functions arising from image and signal classification are in the hypothesis space generated by $\sigma$-activated networks with width $36d(2d+1)$ and depth $12$, when there exist pairwise disjoint closed bounded subsets of $\mathbb{R}^d$ such that the samples of the same class are located in the same subset.
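The abstract does not spell out $\sigma$, so the following is only one plausible way to combine the two named ingredients: a period-2 triangular wave on the non-negative half-line and a softsign on the negative half-line. The piecewise split at zero and the function names are assumptions made for illustration; the paper's exact definition may differ.

```python
import numpy as np

def triangular_wave(x):
    """Period-2 triangular wave with values in [0, 1]."""
    return np.abs(x - 2.0 * np.floor((x + 1.0) / 2.0))

def softsign(x):
    """Softsign: x / (1 + |x|), with values in (-1, 1)."""
    return x / (1.0 + np.abs(x))

def sigma(x):
    """Assumed combination: triangular wave for x >= 0, softsign for x < 0."""
    x = np.asarray(x, dtype=float)
    return np.where(x >= 0.0, triangular_wave(x), softsign(x))

# Both pieces vanish at 0, so this combination is continuous there.
samples = sigma([0.0, 0.5, 1.0, 1.5, 2.0])
negative_sample = sigma(-1.0)
```

With this choice the activation is continuous, periodic on the positive side, and monotone and bounded on the negative side.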

Graph neural networks (GNNs) have shown superior performance in dealing with graphs and have attracted considerable research attention recently. However, most existing GNN models are primarily designed for graphs in Euclidean spaces. Recent research has shown that graph data exhibit non-Euclidean latent anatomy. Unfortunately, GNNs in non-Euclidean settings have rarely been studied so far. To bridge this gap, in this paper we make a first attempt to study GNNs with an attention mechanism in hyperbolic spaces. Research on hyperbolic GNNs has some unique challenges: since hyperbolic spaces are not vector spaces, vector operations (e.g., vector addition, subtraction, and scalar multiplication) cannot be carried out. To tackle this problem, we employ gyrovector spaces, which provide an elegant algebraic formalism for hyperbolic geometry, to transform the features in a graph; we then propose a hyperbolic-proximity-based attention mechanism to aggregate the features. Moreover, as mathematical operations in hyperbolic spaces can be more complicated than those in Euclidean spaces, we further devise a novel acceleration strategy using logarithmic and exponential mappings to improve the efficiency of our proposed model. Comprehensive experimental results on four real-world datasets demonstrate the performance of our proposed hyperbolic graph attention network model in comparison with other state-of-the-art baseline methods.
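For readers unfamiliar with the logarithmic and exponential mappings mentioned above, here is a minimal sketch of the two maps at the origin of the Poincaré ball with curvature $-1$. The choice of the Poincaré ball model, the restriction to the origin, and the function names are assumptions for illustration; the abstract does not say which model or base point the authors use.

```python
import numpy as np

def exp_map_origin(v, eps=1e-15):
    """Map a tangent vector at the origin into the open unit ball."""
    norm = np.linalg.norm(v)
    if norm < eps:
        return np.zeros_like(v)
    return np.tanh(norm) * v / norm

def log_map_origin(y, eps=1e-15):
    """Map a point of the open unit ball back to the tangent space at the origin."""
    norm = np.linalg.norm(y)
    if norm < eps:
        return np.zeros_like(y)
    return np.arctanh(norm) * y / norm

# Round trip: a Euclidean feature goes into the ball and back.
v = np.array([0.3, -0.4, 0.5])
y = exp_map_origin(v)
v_back = log_map_origin(y)
```

The point `y` always lies strictly inside the unit ball, and the two maps are inverse to each other, which lets Euclidean operations be carried out in the tangent space before mapping the result back.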

UMAP (Uniform Manifold Approximation and Projection) is a novel manifold learning technique for dimension reduction. UMAP is constructed from a theoretical framework based in Riemannian geometry and algebraic topology. The result is a practical scalable algorithm that applies to real world data. The UMAP algorithm is competitive with t-SNE for visualization quality, and arguably preserves more of the global structure with superior run time performance. Furthermore, UMAP has no computational restrictions on embedding dimension, making it viable as a general purpose dimension reduction technique for machine learning.