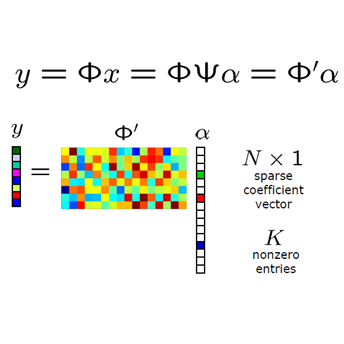

Solving inverse problems is a fundamental component of science, engineering and mathematics. With the advent of deep learning, deep neural networks have significant potential to outperform existing state-of-the-art, model-based methods for solving inverse problems. However, it is known that current data-driven approaches face several key issues, notably hallucinations, instabilities and unpredictable generalization, with potential impact in critical tasks such as medical imaging. This raises the key question of whether or not one can construct deep neural networks for inverse problems with explicit stability and accuracy guarantees. In this work, we present a novel construction of accurate, stable and efficient neural networks for inverse problems with general analysis-sparse models, termed NESTANets. To construct the network, we first unroll NESTA, an accelerated first-order method for convex optimization. The slow convergence of this method leads to deep networks with low efficiency. Therefore, to obtain shallow, and consequently more efficient, networks we combine NESTA with a novel restart scheme. We then use compressed sensing techniques to demonstrate accuracy and stability. We showcase this approach in the case of Fourier imaging, and verify its stability and performance via a series of numerical experiments. The key impact of this work is demonstrating the construction of efficient neural networks based on unrolling with guaranteed stability and accuracy.
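To make the role of restarts concrete, here is a minimal sketch of an "unrolled" accelerated gradient method with a fixed-period restart. It is not NESTA and not the restart scheme of the paper: the solver is a generic FISTA-style accelerated gradient method, and the toy quadratic objective, the names `accelerated_gradient` and `restarted_unrolled_solver`, and all parameter values are assumptions chosen for illustration. Each iteration plays the role of one layer, so the depth is `inner_iters * n_restarts`.

```python
import math


def accelerated_gradient(grad, x0, step, n_iters):
    """Run n_iters steps of FISTA-style accelerated gradient descent."""
    x_prev = list(x0)
    y = list(x0)
    t = 1.0
    for _ in range(n_iters):
        g = grad(y)
        # Gradient step taken from the extrapolated point y.
        x = [yi - step * gi for yi, gi in zip(y, g)]
        # Standard momentum-weight update.
        t_next = (1.0 + math.sqrt(1.0 + 4.0 * t * t)) / 2.0
        beta = (t - 1.0) / t_next
        y = [xi + beta * (xi - xpi) for xi, xpi in zip(x, x_prev)]
        x_prev, t = x, t_next
    return x_prev


def restarted_unrolled_solver(grad, x0, step, inner_iters, n_restarts):
    """Chain n_restarts blocks; each block restarts the momentum from scratch."""
    x = list(x0)
    for _ in range(n_restarts):
        x = accelerated_gradient(grad, x, step, inner_iters)
    return x


# Hypothetical toy problem: f(x) = 0.5 * sum(a_i * x_i^2), minimized at 0.
CURVATURES = [1.0, 100.0]


def objective(x):
    return 0.5 * sum(a * xi * xi for a, xi in zip(CURVATURES, x))


def gradient(x):
    return [a * xi for a, xi in zip(CURVATURES, x)]


x_start = [1.0, 1.0]
x_final = restarted_unrolled_solver(
    gradient, x_start, step=1.0 / max(CURVATURES), inner_iters=30, n_restarts=10
)
print(objective(x_start), objective(x_final))
```

For a strongly convex objective, each restarted block shrinks the objective gap by a constant factor, so the total depth grows only logarithmically with the target accuracy. That is the general reason restarts can yield shallower unrolled networks.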

Related content

We consider the constrained Linear Inverse Problem (LIP), where a certain atomic norm (such as the $\ell_1$ norm or the nuclear norm) is minimized subject to a quadratic constraint. Typically, such cost functions are non-differentiable, which makes them not amenable to the fast optimization methods used in practice. We propose two equivalent reformulations of the constrained LIP with improved convex regularity: (i) a smooth convex minimization problem, and (ii) a strongly convex min-max problem. These problems could be solved by applying existing acceleration-based convex optimization methods, which provide a better $O(1/k^2)$ theoretical convergence guarantee. However, to fully exploit the utility of these reformulations, we also provide a novel algorithm, which we refer to as the Fast Linear Inverse Problem Solver (FLIPS), that is tailored to solve the reformulation of the LIP. We demonstrate the performance of FLIPS on the sparse coding problem arising in image processing tasks. In this setting, we observe that FLIPS consistently outperforms the Chambolle-Pock and C-SALSA algorithms, two of the current best methods in the literature.
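For orientation, one common way to write a constrained LIP of this kind is shown below. The symbols are assumptions introduced here, not notation taken from the paper: $\Phi$ is the linear measurement operator, $y$ the observed data, $\epsilon$ the tolerance of the quadratic constraint, and $\|\cdot\|_{\mathcal{A}}$ the atomic norm.

```latex
\begin{equation*}
  \min_{x} \; \|x\|_{\mathcal{A}}
  \quad \text{subject to} \quad
  \|\Phi x - y\|_2^2 \le \epsilon
\end{equation*}
```

Choosing $\|\cdot\|_{\mathcal{A}} = \|\cdot\|_1$ gives a sparse recovery problem, while the nuclear norm gives a low-rank recovery problem. In both cases the objective is convex but not differentiable.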

Many solid mechanics problems on complex geometries are conventionally solved using discrete boundary methods. However, such an approach can be cumbersome for problems involving evolving domain boundaries due to the need to track boundaries and constant remeshing. In this work, we employ a robust smooth boundary method (SBM) that represents complex geometry implicitly, in a larger and simpler computational domain, as the support of a smooth indicator function. We present the resulting equations for mechanical equilibrium, in which inhomogeneous boundary conditions are replaced by source terms. The resulting mechanical equilibrium problem is semidefinite, making it difficult to solve. In this work, we present a computational strategy for efficiently solving near-singular SBM elasticity problems. We use the block-structured adaptive mesh refinement (BSAMR) method for resolving evolving boundaries appropriately, coupled with a geometric multigrid solver for an efficient solution of mechanical equilibrium. We discuss some of the practical numerical strategies for implementing this method, notably including the importance of grid versus node-centered fields. We demonstrate the solver's accuracy and performance for three representative examples: a) plastic strain evolution around a void, b) crack nucleation and propagation in brittle materials, and c) structural topology optimization. In each case, we show that very good convergence of the solver is achieved, even with large near-singular areas, and that any convergence issues arise from other complexities, such as stress concentrations. We present this framework as a versatile tool for studying a wide variety of solid mechanics problems involving variable geometry.

It is well known that cohomology has a richer structure than homology. However, so far, the practical use of cohomology in the persistence setting has been limited to speeding up barcode computations. Two recently introduced invariants, namely persistent cup-length and persistent Steenrod modules, fill this gap to some extent. When added to the standard persistence barcode, they lead to invariants that are more discriminative than the standard persistence barcode alone. In this work, we introduce (the persistent variants of) the order-$k$ cup product modules, which are images of maps from the $k$-fold tensor products of the cohomology vector space of a complex to the cohomology vector space of the complex itself. We devise an $O(d n^4)$ algorithm for computing the order-$k$ cup product persistent modules for all $k \in \{2, \dots, d\}$, where $d$ denotes the dimension of the filtered complex, and $n$ denotes its size. Furthermore, we show that these modules are stable for Čech and Rips filtrations. Finally, we note that the persistent cup length can be obtained as a byproduct of our computations, leading to a significantly faster algorithm for computing it.

Proximal splitting-based convex optimization is a promising approach to linear inverse problems because it allows prior knowledge of the unknown variables to be used explicitly. Understanding the behavior of these optimization algorithms is important for tuning their parameters and for developing new algorithms. In this paper, we first analyze the asymptotic property of the proximity operator for the squared loss function, which appears in the update equations of some proximal splitting methods for linear inverse problems. The analysis shows that the output of the proximity operator can be characterized with a scalar random variable in the large system limit. Moreover, we investigate the asymptotic behavior of the Douglas-Rachford algorithm, a well-known proximal splitting method. From the resultant conjecture, we can predict the evolution of the mean squared error (MSE) in the algorithm for large-scale linear inverse problems. Simulation results demonstrate that the MSE performance of the Douglas-Rachford algorithm can be well predicted by the analytical result in compressed sensing with the $\ell_{1}$ optimization.
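As a concrete reference point, the following is a minimal sketch of the Douglas-Rachford iteration for $\ell_1$-regularized least squares, $\min_x \tfrac{1}{2}\|y - Ax\|_2^2 + \lambda\|x\|_1$. It shows where the proximity operator of the squared loss enters the update. The function names, the step parameter `gamma`, and the small demo problem are assumptions made for illustration; the paper's exact formulation and parameterization may differ.

```python
import numpy as np


def soft_threshold(u, tau):
    """Proximity operator of tau * ||.||_1 (elementwise shrinkage)."""
    return np.sign(u) * np.maximum(np.abs(u) - tau, 0.0)


def douglas_rachford_l1(A, y, lam, gamma=1.0, n_iters=500):
    """Douglas-Rachford splitting for 0.5 * ||y - A x||^2 + lam * ||x||_1."""
    n = A.shape[1]
    # prox of gamma * 0.5 * ||y - A x||^2 at z solves
    # (I + gamma * A^T A) x = z + gamma * A^T y.
    M = np.eye(n) + gamma * (A.T @ A)
    Aty = A.T @ y
    z = np.zeros(n)
    x = np.zeros(n)
    for _ in range(n_iters):
        x = np.linalg.solve(M, z + gamma * Aty)       # prox of the squared loss
        v = soft_threshold(2.0 * x - z, gamma * lam)  # prox of the l1 term
        z = z + v - x                                 # update the auxiliary variable
    return x


# Hypothetical demo: with A = I the minimizer is soft_threshold(y, lam).
A_demo = np.eye(3)
y_demo = np.array([3.0, -0.5, 1.2])
x_hat = douglas_rachford_l1(A_demo, y_demo, lam=1.0)
print(x_hat)
```

With the identity as measurement matrix the problem decouples per coordinate, which gives a closed-form answer to check against. For a general `A`, the only change is the linear system solved inside the loop.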

Derived from spiking neuron models via the diffusion approximation, the moment activation (MA) faithfully captures the nonlinear coupling of correlated neural variability. However, numerical evaluation of the MA faces significant challenges due to a number of ill-conditioned Dawson-like functions. By deriving asymptotic expansions of these functions, we develop an efficient numerical algorithm for evaluating the MA and its derivatives ensuring reliability, speed, and accuracy. We also provide exact analytical expressions for the MA in the weak fluctuation limit. Powered by this efficient algorithm, the MA may serve as an effective tool for investigating the dynamics of correlated neural variability in large-scale spiking neural circuits.
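To illustrate why asymptotic expansions help with functions of this type, the sketch below uses the classical Dawson function $D(x) = e^{-x^2}\int_0^x e^{t^2}\,dt$ as a stand-in. The specific Dawson-like functions in the MA and the expansions derived in the paper are not reproduced here; the function names and the choice of quadrature are assumptions. Evaluating $e^{-x^2}$ and $\int_0^x e^{t^2}\,dt$ separately breaks down for large $x$ (in double precision, `math.exp(x * x)` overflows once $x$ exceeds roughly 26.6), whereas the large-$x$ series $D(x) \sim \frac{1}{2x}\sum_k \frac{(2k-1)!!}{(2x^2)^k}$ stays cheap and accurate.

```python
import math


def dawson_quadrature(x, n_panels=2000):
    """Reference value: integrate exp(t^2 - x^2) on [0, x] with Simpson's rule.

    Folding exp(-x^2) into the integrand keeps every term in [0, 1],
    so nothing overflows. n_panels must be even.
    """
    h = x / n_panels
    total = math.exp(-x * x) + 1.0  # endpoint values at t = 0 and t = x
    for i in range(1, n_panels):
        t = i * h
        weight = 4.0 if i % 2 else 2.0
        total += weight * math.exp(t * t - x * x)
    return total * h / 3.0


def dawson_asymptotic(x, n_terms=4):
    """Truncated large-x expansion: 1/(2x) * sum_k (2k-1)!! / (2 x^2)^k."""
    term = 1.0
    total = 0.0
    for k in range(n_terms):
        total += term
        term *= (2 * k + 1) / (2.0 * x * x)
    return total / (2.0 * x)


for x in (5.0, 10.0, 30.0):
    print(x, dawson_quadrature(x), dawson_asymptotic(x))
```

The series is asymptotic rather than convergent, so a practical implementation would switch to it only above some threshold and use a different representation for small arguments.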

Image reconstruction using deep learning algorithms offers improved reconstruction quality and lower reconstruction time than classical compressed sensing and model-based algorithms. Unfortunately, clean and fully sampled ground-truth data to train the deep networks is often unavailable in several applications, restricting the applicability of the above methods. We introduce a novel metric termed the ENsemble Stein's Unbiased Risk Estimate (ENSURE) framework, which can be used to train deep image reconstruction algorithms without fully sampled and noise-free images. The proposed framework is the generalization of the classical SURE and GSURE formulation to the setting where the images are sampled by different measurement operators, chosen randomly from a set. We evaluate the expectation of the GSURE loss functions over the sampling patterns to obtain the ENSURE loss function. We show that this loss is an unbiased estimate for the true mean-square error, which offers a better alternative to GSURE, which only offers an unbiased estimate for the projected error. Our experiments show that the networks trained with this loss function can offer reconstructions comparable to the supervised setting. While we demonstrate this framework in the context of MR image recovery, the ENSURE framework is generally applicable to arbitrary inverse problems.
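The idea that a loss can track the true error without access to clean data is easiest to see in the classical SURE setting that ENSURE generalizes. The sketch below covers only plain denoising with a soft-thresholding estimator under i.i.d. Gaussian noise of known standard deviation; it does not implement GSURE or ENSURE, which additionally handle measurement operators. The synthetic signal, the threshold `tau`, and all names are assumptions chosen for illustration.

```python
import random


def soft_threshold(value, tau):
    """Scalar soft-thresholding denoiser."""
    if value > tau:
        return value - tau
    if value < -tau:
        return value + tau
    return 0.0


def sure_soft_threshold(noisy, sigma, tau):
    """Per-sample SURE for soft thresholding; uses only the noisy data."""
    n = len(noisy)
    residual = sum((soft_threshold(y, tau) - y) ** 2 for y in noisy) / n
    # The divergence of soft thresholding is the number of samples it keeps.
    divergence = sum(1 for y in noisy if abs(y) > tau) / n
    return residual - sigma ** 2 + 2.0 * sigma ** 2 * divergence


# Hypothetical synthetic experiment: a sparse signal plus Gaussian noise.
rng = random.Random(0)
sigma = 1.0
tau = 1.5
clean = [5.0 if i % 10 == 0 else 0.0 for i in range(200000)]
noisy = [x + rng.gauss(0.0, sigma) for x in clean]

estimate = sure_soft_threshold(noisy, sigma, tau)
true_mse = sum(
    (soft_threshold(y, tau) - x) ** 2 for y, x in zip(noisy, clean)
) / len(clean)
print(estimate, true_mse)
```

Only `true_mse` touches the clean signal; `estimate` is computed from the noisy samples and the noise level alone, which is what makes SURE-type losses usable for training without ground truth.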

The compute-intensive nature of neural networks (NNs) limits their deployment in resource-constrained environments such as cell phones, drones, and autonomous robots. Hence, developing robust sparse models fit for safety-critical applications has been an issue of longstanding interest. Though adversarial training has been combined with model sparsification to attain this goal, conventional adversarial training approaches provide no formal guarantee that the models will be robust against any rogue samples in a restricted space around a benign sample. Recently proposed verified local robustness techniques provide such a guarantee. This is the first paper that combines the ideas from verified local robustness and dynamic sparse training to develop 'SparseVLR', a novel framework to search for verified locally robust sparse networks. The obtained sparse models exhibit accuracy and robustness comparable to their dense counterparts at sparsity as high as 99%. Furthermore, unlike most conventional sparsification techniques, SparseVLR does not require a pre-trained dense model, reducing the training time by 50%. We exhaustively investigated SparseVLR's efficacy and generalizability by evaluating various benchmark and application-specific datasets across several models.

Graph convolution networks (GCNs) are increasingly popular in many applications, yet remain notoriously hard to train over large graph datasets. They need to compute node representations recursively from their neighbors. Current GCN training algorithms suffer from either high computational costs that grow exponentially with the number of layers, or high memory usage for loading the entire graph and node embeddings. In this paper, we propose a novel efficient layer-wise training framework for GCNs (L-GCN) that disentangles feature aggregation and feature transformation during training, hence greatly reducing time and memory complexities. We present a theoretical analysis of L-GCN under the graph isomorphism framework, showing that, under mild conditions, L-GCN leads to GCNs as powerful as those produced by the more costly conventional training algorithm. We further propose L^2-GCN, which learns a controller for each layer that can automatically adjust the training epochs per layer in L-GCN. Experiments show that L-GCN is faster than state-of-the-art methods by at least an order of magnitude, with consistent memory usage that does not depend on dataset size, while maintaining comparable prediction performance. With the learned controller, L^2-GCN can further cut the training time in half. Our code is available at //github.com/Shen-Lab/L2-GCN.

Since deep neural networks were developed, they have made huge contributions to everyday lives. Machine learning provides more rational advice than humans are capable of in almost every aspect of daily life. However, despite this achievement, the design and training of neural networks are still challenging and unpredictable procedures. To lower the technical thresholds for common users, automated hyper-parameter optimization (HPO) has become a popular topic in both academic and industrial areas. This paper provides a review of the most essential topics on HPO. The first section introduces the key hyper-parameters related to model training and structure, and discusses their importance and methods to define the value range. Then, the research focuses on major optimization algorithms and their applicability, covering their efficiency and accuracy especially for deep learning networks. This study next reviews major services and toolkits for HPO, comparing their support for state-of-the-art searching algorithms, feasibility with major deep learning frameworks, and extensibility for new modules designed by users. The paper concludes with problems that exist when HPO is applied to deep learning, a comparison between optimization algorithms, and prominent approaches for model evaluation with limited computational resources.

Retrieving object instances among cluttered scenes efficiently requires compact yet comprehensive regional image representations. Intuitively, object semantics can help build the index that focuses on the most relevant regions. However, due to the lack of bounding-box datasets for objects of interest among retrieval benchmarks, most recent work on regional representations has focused on either uniform or class-agnostic region selection. In this paper, we first fill the void by providing a new dataset of landmark bounding boxes, based on the Google Landmarks dataset, that includes $94k$ images with manually curated boxes from $15k$ unique landmarks. Then, we demonstrate how a trained landmark detector, using our new dataset, can be leveraged to index image regions and improve retrieval accuracy while being much more efficient than existing regional methods. In addition, we further introduce a novel regional aggregated selective match kernel (R-ASMK) to effectively combine information from detected regions into an improved holistic image representation. R-ASMK boosts image retrieval accuracy substantially at no additional memory cost, while even outperforming systems that index image regions independently. Our complete image retrieval system improves upon the previous state-of-the-art by significant margins on the Revisited Oxford and Paris datasets. Code and data will be released.