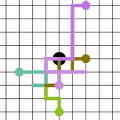

Hypothesis testing is a statistical method used to draw conclusions about populations from sample data, typically represented in tables. With the prevalence of graph representations in real-life applications, hypothesis testing in graphs is gaining importance. In this work, we formalize node, edge, and path hypotheses in attributed graphs. We develop a sampling-based hypothesis testing framework, which can accommodate existing hypothesis-agnostic graph sampling methods. To achieve accurate and efficient sampling, we then propose a Path-Hypothesis-Aware SamplEr, PHASE, an m-dimensional random walk that accounts for the paths specified in a hypothesis. We further optimize its time efficiency and propose PHASEopt. Experiments on real datasets demonstrate the ability of our framework to leverage common graph sampling methods for hypothesis testing, and the superiority of hypothesis-aware sampling in terms of accuracy and time efficiency.
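
To make the idea of sampling-based testing of a node hypothesis concrete, here is a minimal sketch. It is not PHASE or PHASEopt: it uses a plain random walk as the hypothesis-agnostic sampler and a one-sample t statistic as the test. The toy ring graph, the `age` attribute, and the function names are all assumptions made for illustration. Note that random-walk samples are correlated and, on non-regular graphs, biased toward high-degree nodes, so a real study would need to correct for both.

```python
import math
import random
import statistics


def random_walk_sample(adj, start, n_steps, rng):
    """Return the nodes visited by a simple random walk of n_steps steps."""
    node, visited = start, [start]
    for _ in range(n_steps):
        node = rng.choice(adj[node])  # move to a uniformly chosen neighbour
        visited.append(node)
    return visited


def one_sample_t(values, mu0):
    """t statistic for the null hypothesis 'the population mean equals mu0'."""
    n = len(values)
    mean = statistics.fmean(values)
    sd = statistics.stdev(values)  # sample standard deviation (n - 1)
    return (mean - mu0) / (sd / math.sqrt(n))


# Hypothetical attributed graph: a ring of 10 nodes with a numeric attribute.
adj = {i: [(i - 1) % 10, (i + 1) % 10] for i in range(10)}
age = {i: 20 + i for i in range(10)}

rng = random.Random(0)
sample = random_walk_sample(adj, start=0, n_steps=200, rng=rng)

# Node hypothesis (illustrative): "the average age of nodes is 24.5".
t_stat = one_sample_t([age[v] for v in sample], mu0=24.5)
```

A large absolute value of `t_stat` would be evidence against the hypothesis; on this toy graph the true mean is exactly 24.5, so the statistic only reflects sampling noise.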

Related content

Rate-splitting multiple access (RSMA) has been proven to be an effective communication scheme for 5G and beyond, especially in vehicular scenarios. However, RSMA requires complicated iterative algorithms for proper resource allocation, which cannot fulfill the stringent latency requirements of resource-constrained vehicles. Although data-driven approaches can alleviate this issue, they suffer from poor generalizability and scarce training data. In this paper, we propose a fractional programming (FP) based deep unfolding (DU) approach to address the resource allocation problem for weighted sum rate optimization in RSMA. By carefully designing the penalty function, we couple the variable update with the projected gradient descent (PGD) algorithm. Following the structure of PGD, we embed a few learnable parameters in each layer of the DU network. Through extensive simulation, we show that the proposed model-based neural networks achieve performance similar to the optimal results given by the traditional algorithm, but with much lower computational complexity, less training data, and higher resilience to test set data and out-of-distribution (OOD) data.
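
The structure that deep unfolding borrows from PGD can be sketched as follows. This is an illustration under stated assumptions, not the paper's network: the objective is a toy quadratic rather than the RSMA weighted sum rate, the feasible set is a simple box, and the per-layer step sizes are fixed constants standing in for the parameters that a DU network would learn. All names are hypothetical.

```python
def project_box(x, lo, hi):
    """Euclidean projection onto the box lo <= x_i <= hi (element-wise clip)."""
    return [min(max(v, lo), hi) for v in x]


def unfolded_pgd(x0, grad, project, step_sizes):
    """Run one PGD iteration per entry of step_sizes.

    Each iteration plays the role of one 'layer'; in a deep-unfolded network
    the step size of each layer would be a learnable parameter.
    """
    x = list(x0)
    for eta in step_sizes:
        g = grad(x)
        x = project([xi - eta * gi for xi, gi in zip(x, g)])
    return x


# Toy problem (assumption): minimise ||x - target||^2 subject to 0 <= x_i <= 1.
target = [1.5, 0.3, -0.2]


def grad(x):
    return [2.0 * (xi - ti) for xi, ti in zip(x, target)]


x_star = unfolded_pgd(
    x0=[0.5, 0.5, 0.5],
    grad=grad,
    project=lambda v: project_box(v, 0.0, 1.0),
    step_sizes=[0.4] * 20,  # 20 "layers" with a fixed step size
)
```

Here the iterates settle at the projection of `target` onto the box, approximately `[1.0, 0.3, 0.0]`. Fixing the number of layers is what bounds the inference cost, which is the motivation for unfolding an iterative solver.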

Economic policy sciences are constantly investigating the quality of well-being of broad sections of the population in order to describe the current interdependence between unequal living conditions, low levels of education and a lack of integration into society. Such studies are often carried out in the form of surveys, e.g. as part of the EU-SILC program. If the survey is designed at national or international level, the results of the study are often used as a reference by a broad range of public institutions. However, the sampling strategy per se may not capture enough information to provide an accurate representation of all population strata. Problems might arise from rare, or hard-to-sample, populations and the conclusion of the study may be compromised or unrealistic. We propose here a two-phase methodology to identify rare, poorly sampled populations and then resample the hard-to-sample strata. We focused our attention on the 2019 EU-SILC section concerning the Italian region of Liguria. Methods based on dispersion indices or deep learning were used to detect rare populations. A multi-frame survey was proposed as the sampling design. The results showed that factors such as citizenship, material deprivation and large families are still fundamental characteristics that are difficult to capture.

Existing methods for creating source-grounded information-seeking dialog datasets are often costly and hard to implement due to their sole reliance on human annotators. We propose combining large language model (LLM) prompting with human expertise for more efficient and reliable data generation. Instead of the labor-intensive Wizard-of-Oz (WOZ) method, where two annotators generate a dialog from scratch, role-playing agent and user, we use LLM generation to simulate the two roles. Annotators then verify the output and augment it with attribution data. We demonstrate our method by constructing MISeD -- Meeting Information Seeking Dialogs dataset -- the first information-seeking dialog dataset focused on meeting transcripts. Models finetuned with MISeD demonstrate superior performance on our test set, as well as on a novel fully-manual WOZ test set and an existing query-based summarization benchmark, suggesting the utility of our approach.

Hyperspectral Imaging (HSI) serves as an important technique in remote sensing. However, high dimensionality and data volume typically pose significant computational challenges. Band selection is essential for reducing spectral redundancy in hyperspectral imagery while retaining intrinsic critical information. In this work, we propose a novel hyperspectral band selection model by decomposing the data into a low-rank and smooth component and a sparse one. In particular, we develop a generalized 3D total variation (G3DTV) by applying the $\ell_1^p$-norm to derivatives to preserve spatial-spectral smoothness. By employing the alternating direction method of multipliers (ADMM), we derive an efficient algorithm, where the tensor low-rankness is implied by the tensor CUR decomposition. We demonstrate the effectiveness of the proposed approach through comparisons with various other state-of-the-art band selection techniques using two benchmark real-world datasets. In addition, we provide practical guidelines for parameter selection in both noise-free and noisy scenarios.

Incorporating human-perceptual intelligence into model training has been shown to increase the generalization capability of models in several difficult biometric tasks, such as presentation attack detection (PAD) and detection of synthetic samples. After the initial collection phase, human visual saliency (e.g., eye-tracking data, or handwritten annotations) can be integrated into model training through attention mechanisms, augmented training samples, or through human perception-related components of loss functions. Despite their successes, a vital, but seemingly neglected, aspect of any saliency-based training is the level of salience granularity (e.g., bounding boxes, single saliency maps, or saliency aggregated from multiple subjects) necessary to find a balance between reaping the full benefits of human saliency and the cost of its collection. In this paper, we explore several different levels of salience granularity and demonstrate that increased generalization capabilities of PAD and synthetic face detection can be achieved by using simple yet effective saliency post-processing techniques across several different CNNs.

Despite the impressive performance of biological and artificial networks, an intuitive understanding of how their local learning dynamics contribute to network-level task solutions remains a challenge to this date. Efforts to bring learning to a more local scale have indeed led to valuable insights; however, a general constructive approach to describe local learning goals that is both interpretable and adaptable across diverse tasks is still missing. We have previously formulated a local information processing goal that is highly adaptable and interpretable for a model neuron with compartmental structure. Building on recent advances in Partial Information Decomposition (PID), we here derive a corresponding parametric local learning rule, which allows us to introduce 'infomorphic' neural networks. We demonstrate the versatility of these networks to perform tasks from supervised, unsupervised and memory learning. By leveraging the interpretable nature of the PID framework, infomorphic networks represent a valuable tool to advance our understanding of the intricate structure of local learning.

Radio signal recognition is a crucial task in both civilian and military applications, as accurate and timely identification of unknown signals is an essential part of spectrum management and electronic warfare. The majority of research in this field has focused on applying deep learning for modulation classification, leaving the task of signal characterisation as an understudied area. This paper addresses this gap by presenting an approach for tackling radar signal classification and characterisation as a multi-task learning (MTL) problem. We propose the IQ Signal Transformer (IQST) among several reference architectures that allow for simultaneous optimisation of multiple regression and classification tasks. We demonstrate the performance of our proposed MTL model on a synthetic radar dataset, while also providing a first-of-its-kind benchmark for radar signal characterisation.

The success of AI models relies on the availability of large, diverse, and high-quality datasets, which can be challenging to obtain due to data scarcity, privacy concerns, and high costs. Synthetic data has emerged as a promising solution by generating artificial data that mimics real-world patterns. This paper provides an overview of synthetic data research, discussing its applications, challenges, and future directions. We present empirical evidence from prior art to demonstrate its effectiveness and highlight the importance of ensuring its factuality, fidelity, and unbiasedness. We emphasize the need for responsible use of synthetic data to build more powerful, inclusive, and trustworthy language models.

Most existing knowledge graphs suffer from incompleteness, which can be alleviated by inferring missing links based on known facts. One popular way to accomplish this is to generate low-dimensional embeddings of entities and relations, and use these to make inferences. ConvE, a recently proposed approach, applies convolutional filters on 2D reshapings of entity and relation embeddings in order to capture rich interactions between their components. However, the number of interactions that ConvE can capture is limited. In this paper, we analyze how increasing the number of these interactions affects link prediction performance, and utilize our observations to propose InteractE. InteractE is based on three key ideas -- feature permutation, a novel feature reshaping, and circular convolution. Through extensive experiments, we find that InteractE outperforms state-of-the-art convolutional link prediction baselines on FB15k-237. Further, InteractE achieves an MRR score that is 9%, 7.5%, and 23% better than ConvE on the FB15k-237, WN18RR and YAGO3-10 datasets respectively. The results validate our central hypothesis -- that increasing feature interaction is beneficial to link prediction performance. We make the source code of InteractE available to encourage reproducible research.
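
Of the three ideas named above, circular convolution is the easiest to illustrate in isolation. The sketch below is a plain-Python 2D convolution with wrap-around indexing, written as cross-correlation in the way CNN libraries usually define convolution. It is an assumption-laden illustration, not InteractE's released code: the function name, the centring of the kernel, and the toy input are all hypothetical.

```python
def circular_conv2d(x, k):
    """2D cross-correlation of x with kernel k using wrap-around (circular) padding.

    x: H x W list of lists, k: kh x kw list of lists (odd sizes assumed).
    The output has the same H x W shape as the input.
    """
    H, W = len(x), len(x[0])
    kh, kw = len(k), len(k[0])
    out = [[0.0] * W for _ in range(H)]
    for i in range(H):
        for j in range(W):
            s = 0.0
            for a in range(kh):
                for b in range(kw):
                    # Modulo indexing wraps past the border to the opposite edge,
                    # so border cells interact with cells on the far side.
                    s += x[(i + a - kh // 2) % H][(j + b - kw // 2) % W] * k[a][b]
            out[i][j] = s
    return out


# Toy 3 x 3 "reshaped embedding" (assumption, for illustration only).
x = [[1, 2, 3],
     [4, 5, 6],
     [7, 8, 9]]
ones = [[1, 1, 1]] * 3
y = circular_conv2d(x, ones)
```

With zero padding, a corner cell would see only four input values under a 3 x 3 kernel; with circular padding every output cell sees a full 3 x 3 neighbourhood, which is one way to read the claim that wrap-around increases the number of captured interactions.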

Recently, ensembles have been applied to deep metric learning to yield state-of-the-art results. Deep metric learning aims to learn deep neural networks for feature embeddings whose distances satisfy given constraints. In deep metric learning, an ensemble takes the average of the distances learned by multiple learners. As one important aspect of an ensemble, the learners should be diverse in their feature embeddings. To this end, we propose an attention-based ensemble, which uses multiple attention masks, so that each learner can attend to different parts of the object. We also propose a divergence loss, which encourages diversity among the learners. The proposed method is applied to the standard benchmarks of deep metric learning and experimental results show that it outperforms the state-of-the-art methods by a significant margin on image retrieval tasks.
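
The averaging step described above can be sketched in a few lines. This is only an illustration of "the ensemble distance is the mean of per-learner distances": the Euclidean metric, the function names, and the toy embeddings are assumptions, and the attention masks and divergence loss of the proposed method are not modelled here.

```python
import math


def euclidean(u, v):
    """Euclidean distance between two equal-length vectors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


def ensemble_distance(emb_a, emb_b):
    """Average the distances computed by each learner.

    emb_a, emb_b: lists with one embedding per learner for items a and b.
    """
    dists = [euclidean(u, v) for u, v in zip(emb_a, emb_b)]
    return sum(dists) / len(dists)


# Two hypothetical learners, each producing a 2-D embedding per item.
query = [[0.0, 0.0], [0.0, 0.0]]
gallery = {
    "near": [[3.0, 4.0], [0.0, 0.0]],   # per-learner distances 5 and 0
    "far": [[6.0, 8.0], [3.0, 4.0]],    # per-learner distances 10 and 5
}

# Rank gallery items for retrieval by ensemble distance (closest first).
ranking = sorted(gallery, key=lambda name: ensemble_distance(query, gallery[name]))
```

If all learners produced the same embedding, the average would add nothing over a single learner, which is why the abstract stresses diversity among learners.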