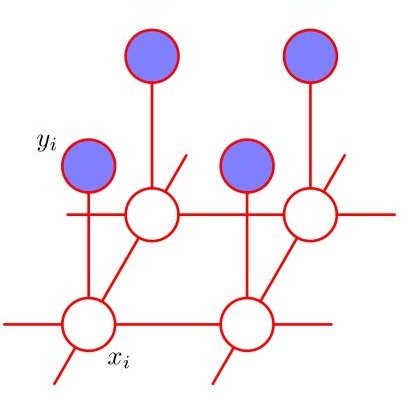

The theory of influences in product measures has profound applications in theoretical computer science, combinatorics, and discrete probability. This deep theory is intimately connected to functional inequalities and to the Fourier analysis of discrete groups. Originally, influences of functions were motivated by the study of social choice theory, wherein a Boolean function represents a voting scheme, its inputs represent the votes, and its output represents the outcome of the elections. Thus, product measures represent a scenario in which the votes of the parties are randomly and independently distributed, which is often far from the truth in real-life scenarios. We begin to develop the theory of influences for more general measures under mixing or correlation decay conditions. More specifically, we prove analogues of the KKL and Talagrand influence theorems for Markov Random Fields on bounded degree graphs with correlation decay. We show how some of the original applications of the theory of in terms of voting and coalitions extend to general measures with correlation decay. Our results thus shed light both on voting with correlated voters and on the behavior of general functions of Markov Random Fields (also called ``spin-systems") with correlation decay.

相關內容

An optimal delivery of arguments is key to persuasion in any debate, both for humans and for AI systems. This requires the use of clear and fluent claims relevant to the given debate. Prior work has studied the automatic assessment of argument quality extensively. Yet, no approach actually improves the quality so far. To fill this gap, this paper proposes the task of claim optimization: to rewrite argumentative claims in order to optimize their delivery. As multiple types of optimization are possible, we approach this task by first generating a diverse set of candidate claims using a large language model, such as BART, taking into account contextual information. Then, the best candidate is selected using various quality metrics. In automatic and human evaluation on an English-language corpus, our quality-based candidate selection outperforms several baselines, improving 60% of all claims (worsening 16% only). Follow-up analyses reveal that, beyond copy editing, our approach often specifies claims with details, whereas it adds less evidence than humans do. Moreover, its capabilities generalize well to other domains, such as instructional texts.

Behavioral testing in NLP allows fine-grained evaluation of systems by examining their linguistic capabilities through the analysis of input-output behavior. Unfortunately, existing work on behavioral testing in Machine Translation (MT) is currently restricted to largely handcrafted tests covering a limited range of capabilities and languages. To address this limitation, we propose to use Large Language Models (LLMs) to generate a diverse set of source sentences tailored to test the behavior of MT models in a range of situations. We can then verify whether the MT model exhibits the expected behavior through matching candidate sets that are also generated using LLMs. Our approach aims to make behavioral testing of MT systems practical while requiring only minimal human effort. In our experiments, we apply our proposed evaluation framework to assess multiple available MT systems, revealing that while in general pass-rates follow the trends observable from traditional accuracy-based metrics, our method was able to uncover several important differences and potential bugs that go unnoticed when relying only on accuracy.

The modelling of dynamical systems from discrete observations is a challenge faced by modern scientific and engineering data systems. Hamiltonian systems are one such fundamental and ubiquitous class of dynamical systems. Hamiltonian neural networks are state-of-the-art models that unsupervised-ly regress the Hamiltonian of a dynamical system from discrete observations of its vector field under the learning bias of Hamilton's equations. Yet Hamiltonian dynamics are often complicated, especially in higher dimensions where the state space of the Hamiltonian system is large relative to the number of samples. A recently discovered remedy to alleviate the complexity between state variables in the state space is to leverage the additive separability of the Hamiltonian system and embed that additive separability into the Hamiltonian neural network. Following the nomenclature of physics-informed machine learning, we propose three separable Hamiltonian neural networks. These models embed additive separability within Hamiltonian neural networks. The first model uses additive separability to quadratically scale the amount of data for training Hamiltonian neural networks. The second model embeds additive separability within the loss function of the Hamiltonian neural network. The third model embeds additive separability through the architecture of the Hamiltonian neural network using conjoined multilayer perceptions. We empirically compare the three models against state-of-the-art Hamiltonian neural networks, and demonstrate that the separable Hamiltonian neural networks, which alleviate complexity between the state variables, are more effective at regressing the Hamiltonian and its vector field.

We work towards a unifying paradigm for accelerating policy optimization methods in reinforcement learning (RL) by integrating foresight in the policy improvement step via optimistic and adaptive updates. Leveraging the connection between policy iteration and policy gradient methods, we view policy optimization algorithms as iteratively solving a sequence of surrogate objectives, local lower bounds on the original objective. We define optimism as predictive modelling of the future behavior of a policy, and adaptivity as taking immediate and anticipatory corrective actions to mitigate accumulating errors from overshooting predictions or delayed responses to change. We use this shared lens to jointly express other well-known algorithms, including model-based policy improvement based on forward search, and optimistic meta-learning algorithms. We analyze properties of this formulation, and show connections to other accelerated optimization algorithms. Then, we design an optimistic policy gradient algorithm, adaptive via meta-gradient learning, and empirically highlight several design choices pertaining to acceleration, in an illustrative task.

Regularized m-estimators are widely used due to their ability of recovering a low-dimensional model in high-dimensional scenarios. Some recent efforts on this subject focused on creating a unified framework for establishing oracle bounds, and deriving conditions for support recovery. Under this same framework, we propose a new Generalized Information Criteria (GIC) that takes into consideration the sparsity pattern one wishes to recover. We obtain non-asymptotic model selection bounds and sufficient conditions for model selection consistency of the GIC. Furthermore, we show that the GIC can also be used for selecting the regularization parameter within a regularized $m$-estimation framework, which allows practical use of the GIC for model selection in high-dimensional scenarios. We provide examples of group LASSO in the context of generalized linear regression and low rank matrix regression.

Cellwise outliers are widespread in data and traditional robust methods may fail when applied to datasets under such contamination. We propose a variable selection procedure, that uses a pairwise robust estimator to obtain an initial empirical covariance matrix among the response and potentially many predictors. Then we replace the primary design matrix and the response vector with their robust counterparts based on the estimated covariance matrix. Finally, we adopt the adaptive Lasso to obtain variable selection results. The proposed approach is robust to cellwise outliers in regular and high dimensional settings and empirical results show good performance in comparison with recently proposed alternative robust approaches, particularly in the challenging setting when contamination rates are high but the magnitude of outliers is moderate. Real data applications demonstrate the practical utility of the proposed method.

We present a new, efficient procedure to establish Markov equivalence between directed graphs that may or may not contain cycles under the \textit{d}-separation criterion. It is based on the Cyclic Equivalence Theorem (CET) in the seminal works on cyclic models by Thomas Richardson in the mid '90s, but now rephrased from an ancestral perspective. The resulting characterization leads to a procedure for establishing Markov equivalence between graphs that no longer requires tests for d-separation, leading to a significantly reduced algorithmic complexity. The conceptually simplified characterization may help to reinvigorate theoretical research towards sound and complete cyclic discovery in the presence of latent confounders. This version includes a correction to rule (iv) in Theorem 1, and the subsequent adjustment in part 2 of Algorithm 2.

The adaptive processing of structured data is a long-standing research topic in machine learning that investigates how to automatically learn a mapping from a structured input to outputs of various nature. Recently, there has been an increasing interest in the adaptive processing of graphs, which led to the development of different neural network-based methodologies. In this thesis, we take a different route and develop a Bayesian Deep Learning framework for graph learning. The dissertation begins with a review of the principles over which most of the methods in the field are built, followed by a study on graph classification reproducibility issues. We then proceed to bridge the basic ideas of deep learning for graphs with the Bayesian world, by building our deep architectures in an incremental fashion. This framework allows us to consider graphs with discrete and continuous edge features, producing unsupervised embeddings rich enough to reach the state of the art on several classification tasks. Our approach is also amenable to a Bayesian nonparametric extension that automatizes the choice of almost all model's hyper-parameters. Two real-world applications demonstrate the efficacy of deep learning for graphs. The first concerns the prediction of information-theoretic quantities for molecular simulations with supervised neural models. After that, we exploit our Bayesian models to solve a malware-classification task while being robust to intra-procedural code obfuscation techniques. We conclude the dissertation with an attempt to blend the best of the neural and Bayesian worlds together. The resulting hybrid model is able to predict multimodal distributions conditioned on input graphs, with the consequent ability to model stochasticity and uncertainty better than most works. Overall, we aim to provide a Bayesian perspective into the articulated research field of deep learning for graphs.

Humans perceive the world by concurrently processing and fusing high-dimensional inputs from multiple modalities such as vision and audio. Machine perception models, in stark contrast, are typically modality-specific and optimised for unimodal benchmarks, and hence late-stage fusion of final representations or predictions from each modality (`late-fusion') is still a dominant paradigm for multimodal video classification. Instead, we introduce a novel transformer based architecture that uses `fusion bottlenecks' for modality fusion at multiple layers. Compared to traditional pairwise self-attention, our model forces information between different modalities to pass through a small number of bottleneck latents, requiring the model to collate and condense the most relevant information in each modality and only share what is necessary. We find that such a strategy improves fusion performance, at the same time reducing computational cost. We conduct thorough ablation studies, and achieve state-of-the-art results on multiple audio-visual classification benchmarks including Audioset, Epic-Kitchens and VGGSound. All code and models will be released.

Benefit from the quick development of deep learning techniques, salient object detection has achieved remarkable progresses recently. However, there still exists following two major challenges that hinder its application in embedded devices, low resolution output and heavy model weight. To this end, this paper presents an accurate yet compact deep network for efficient salient object detection. More specifically, given a coarse saliency prediction in the deepest layer, we first employ residual learning to learn side-output residual features for saliency refinement, which can be achieved with very limited convolutional parameters while keep accuracy. Secondly, we further propose reverse attention to guide such side-output residual learning in a top-down manner. By erasing the current predicted salient regions from side-output features, the network can eventually explore the missing object parts and details which results in high resolution and accuracy. Experiments on six benchmark datasets demonstrate that the proposed approach compares favorably against state-of-the-art methods, and with advantages in terms of simplicity, efficiency (45 FPS) and model size (81 MB).