General purpose optimization techniques can be used to solve many problems in engineering computations, although their cost is often prohibitive when the number of degrees of freedom is very large. We describe a multilevel approach to speed up the computation of the solution of a large-scale optimization problem by a given optimization technique. By embedding the problem within Harten's Multiresolution Framework (MRF), we set up a procedure that leads to the desired solution, after the computation of a finite sequence of sub-optimal solutions, which solve auxiliary optimization problems involving a smaller number of variables. For convex optimization problems having smooth solutions, we prove that the distance between the optimal solution and each sub-optimal approximation is related to the accuracy of the interpolation technique used within the MRF and analyze its relation with the performance of the proposed algorithm. Several numerical experiments confirm that our technique provides a computationally efficient strategy that allows the end user to treat both the optimizer and the objective function as black boxes throughout the optimization process.
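As a rough illustration of the multilevel idea described above, the following minimal sketch solves a sequence of auxiliary problems with few variables and uses each sub-optimal solution as the starting point for the next finer level. It is not the authors' implementation. Everything in it is an assumption made for illustration: linear interpolation (`prolong`) stands in for the MRF prediction operator, `objective` is a hypothetical convex objective with a smooth minimizer, and `compass_search` is an arbitrary derivative-free optimizer that sees the objective only as a black box.

```python
import math

def prolong(coarse):
    """Linear interpolation from n to 2n-1 grid points.

    Assumption: this stands in for the MRF prediction operator.
    """
    fine = []
    for left, right in zip(coarse, coarse[1:]):
        fine.append(left)
        fine.append(0.5 * (left + right))
    fine.append(coarse[-1])
    return fine

def prolong_times(x, times):
    """Apply the interpolation `times` times to reach the finest grid."""
    for _ in range(times):
        x = prolong(x)
    return x

def objective(u):
    """Hypothetical convex objective on the finest grid (smooth minimizer)."""
    n = len(u)
    return sum((u[i] - math.sin(math.pi * i / (n - 1))) ** 2 for i in range(n))

def compass_search(f, x0, delta=0.5, tol=1e-4):
    """Derivative-free black-box optimizer (an arbitrary choice for this sketch)."""
    x = list(x0)
    fx = f(x)
    while delta > tol:
        improved = False
        for i in range(len(x)):
            for step in (delta, -delta):
                trial = list(x)
                trial[i] += step
                f_trial = f(trial)
                if f_trial < fx:
                    x, fx = trial, f_trial
                    improved = True
                    break
        if not improved:
            delta *= 0.5  # no poll point improved: refine the step size
    return x

def multilevel_minimize(f, coarse_size=3, levels=3):
    """Solve auxiliary problems from coarsest to finest level."""
    x = [0.0] * coarse_size
    for remaining in range(levels, -1, -1):
        # Auxiliary problem: optimize coarse variables, evaluate f on the finest grid.
        auxiliary = lambda y, r=remaining: f(prolong_times(y, r))
        x = compass_search(auxiliary, x)
        if remaining > 0:
            x = prolong(x)  # sub-optimal solution becomes the next initial guess
    return x

solution = multilevel_minimize(objective)
print(len(solution), objective(solution))
```

The grid sizes here are 3, 5, 9, and 17. Only the last call to the optimizer works with all 17 variables, and it starts from an interpolated guess rather than from scratch.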

Related content

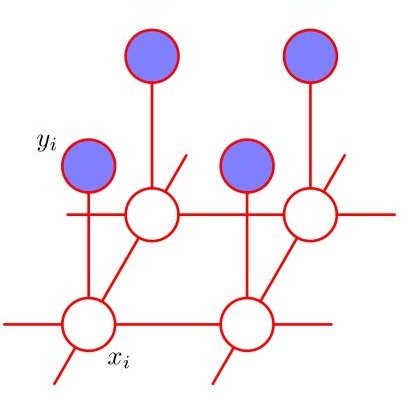

We study the complexity of classical constraint satisfaction problems on a 2D grid. Specifically, we consider the complexity of function versions of such problems, with the additional restriction that the constraints are translationally invariant, namely, the variables are located at the vertices of a 2D grid and the constraint between every pair of adjacent variables is the same in each dimension. The only input to the problem is thus the size of the grid. This problem is equivalent to one of the most interesting problems in classical physics, namely, computing the lowest energy of a classical system of particles on the grid. We provide a tight characterization of the complexity of this problem, and show that it is complete for the class $FP^{NEXP}$. Gottesman and Irani (FOCS 2009) also studied classical translationally-invariant constraint satisfaction problems; they show that the problem of deciding whether the cost of the optimal solution is below a given threshold is NEXP-complete. Our result is thus a strengthening of their result from the decision version to the function version of the problem. Our result can also be viewed as a generalization to the translationally invariant setting, of Krentel's famous result from 1988, showing that the function version of SAT is complete for the class $FP^{NP}$. An essential ingredient in the proof is a study of the complexity of a gapped variant of the problem. We show that it is NEXP-hard to approximate the cost of the optimal assignment to within an additive error of $\Omega(N^{1/4})$, for an $N \times N$ grid. To the best of our knowledge, no gapped result is known for CSPs on the grid, even in the non-translationally invariant case. As a byproduct of our results, we also show that a decision version of the optimization problem which asks whether the cost of the optimal assignment is odd or even is also complete for $P^{NEXP}$.

Bilevel optimization (BO) is useful for solving a variety of important machine learning problems including but not limited to hyperparameter optimization, meta-learning, continual learning, and reinforcement learning. Conventional BO methods need to differentiate through the low-level optimization process with implicit differentiation, which requires expensive calculations related to the Hessian matrix. There has been a recent quest for first-order methods for BO, but the methods proposed to date tend to be complicated and impractical for large-scale deep learning applications. In this work, we propose a simple first-order BO algorithm that depends only on first-order gradient information, requires no implicit differentiation, and is practical and efficient for large-scale non-convex functions in deep learning. We provide non-asymptotic convergence analysis of the proposed method to stationary points for non-convex objectives and present empirical results that show its superior practical performance.

A two-dimensional eigenvalue problem (2DEVP) of a Hermitian matrix pair $(A, C)$ is introduced in this paper. The 2DEVP can be viewed as a linear algebraic formulation of the well-known eigenvalue optimization problem of the parameter matrix $H(\mu) = A - \mu C$. We present fundamental properties of the 2DEVP, such as existence, the necessary and sufficient condition for a finite number of 2D-eigenvalues, and variational characterizations. We use eigenvalue optimization problems arising from the minmax of two Rayleigh quotients and from the computation of the distance to instability to show their connections with the 2DEVP, and we derive new insights into these problems from the properties of the 2DEVP.

We propose a symbolic execution method for programs that can draw random samples. In contrast to existing work, our method can verify randomized programs with unknown inputs and can prove probabilistic properties that universally quantify over all possible inputs. Our technique augments standard symbolic execution with a new class of \emph{probabilistic symbolic variables}, which represent the results of random draws, and computes symbolic expressions representing the probability of taking individual paths. We implement our method on top of the \textsc{KLEE} symbolic execution engine alongside multiple optimizations and use it to prove properties about probabilities and expected values for a range of challenging case studies written in C++, including Freivalds' algorithm, randomized quicksort, and a randomized property-testing algorithm for monotonicity. We evaluate our method against \textsc{Psi}, an exact probabilistic symbolic inference engine, and \textsc{Storm}, a probabilistic model checker, and show that our method significantly outperforms both tools.

In this paper, we consider decentralized optimization problems where agents have individual cost functions to minimize subject to subspace constraints that require the minimizers across the network to lie in low-dimensional subspaces. This constrained formulation includes consensus or single-task optimization as special cases, and allows for more general task relatedness models such as multitask smoothness and coupled optimization. In order to cope with communication constraints, we propose and study an adaptive decentralized strategy where the agents employ differential randomized quantizers to compress their estimates before communicating with their neighbors. The analysis shows that, under some general conditions on the quantization noise, and for sufficiently small step-sizes $\mu$, the strategy is stable both in terms of mean-square error and average bit rate: by reducing $\mu$, it is possible to keep the estimation errors small (on the order of $\mu$) without increasing indefinitely the bit rate as $\mu\rightarrow 0$. Simulations illustrate the theoretical findings and the effectiveness of the proposed approach, revealing that decentralized learning is achievable at the expense of only a few bits.

Multiple-objective optimization (MOO) aims to simultaneously optimize multiple conflicting objectives and has found important applications in machine learning, such as minimizing classification loss and discrepancy in treating different populations for fairness. At optimality, further optimizing one objective will necessarily harm at least one other objective, and decision-makers need to comprehensively explore multiple optima (called the Pareto front) to pinpoint one final solution. We address the efficiency of finding the Pareto front. First, finding the front from scratch using stochastic multi-gradient descent (SMGD) is expensive with large neural networks and datasets. We propose to explore the Pareto front as a manifold from a few initial optima, based on a predictor-corrector method. Second, for each exploration step, the predictor solves a large-scale linear system that scales quadratically in the number of model parameters and requires one backpropagation to evaluate a second-order Hessian-vector product per iteration of the solver. We propose a Gauss-Newton approximation that scales only linearly and requires only a first-order inner product per iteration. This also allows for a choice between the MINRES and conjugate gradient methods when approximately solving the linear system. These innovations make predictor-corrector possible for large networks. Experiments on multi-objective (fairness and accuracy) misinformation detection tasks show that 1) the predictor-corrector method can find Pareto fronts better than or similar to those found by SMGD in less time; and 2) the proposed first-order method does not harm the quality of the Pareto front identified by the second-order method, while further reducing running time.
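To make the notion of a Pareto front concrete, here is a minimal sketch of a non-dominated filter over a finite set of candidate solutions. It does not implement the predictor-corrector method from the abstract. The candidate values are made-up pairs of (classification loss, fairness discrepancy), both assumed to be minimized.

```python
def dominates(a, b):
    """True if a is no worse than b in every objective and strictly better in one."""
    no_worse = all(x <= y for x, y in zip(a, b))
    strictly_better = any(x < y for x, y in zip(a, b))
    return no_worse and strictly_better

def pareto_front(points):
    """Keep only the points that no other point dominates (minimization)."""
    return [p for p in points if not any(dominates(q, p) for q in points)]

# Hypothetical (loss, discrepancy) pairs for five trained models.
candidates = [(0.10, 0.30), (0.20, 0.10), (0.15, 0.35), (0.30, 0.05), (0.25, 0.20)]
front = pareto_front(candidates)
print(front)
```

On the remaining points, improving one objective always worsens the other, which is the trade-off decision-makers have to explore.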

The well-known complexity class NP contains many combinatorial problems whose optimization counterparts are important in many practical settings. Those problems usually assume full knowledge of the input and optimize on this specific input. In a practical setting, however, uncertainty in the input data is common, and it is normally not covered in optimization versions of NP problems. One concept to model the uncertainty in the input data is \textit{recoverable robustness}. In this setting, a solution on the input is calculated, whereby a possible recovery to a good solution should be guaranteed whenever uncertainty manifests itself. That is, a solution $\texttt{s}_0$ for the base scenario $\textsf{S}_0$ as well as a solution \texttt{s} for every possible scenario of scenario set \textsf{S} has to be calculated. In other words, not only is solution $\texttt{s}_0$ for instance $\textsf{S}_0$ calculated, but solutions \texttt{s} for all scenarios from \textsf{S} are prepared to correct possible errors caused by uncertainty. This paper introduces a specific concept of recoverable robust problems: Hamming Distance Recoverable Robust Problems. In this setting, solutions $\texttt{s}_0$ and \texttt{s} have to be calculated such that $\texttt{s}_0$ and \texttt{s} differ in at most $\kappa$ elements. That is, one can recover from a harmful scenario by choosing a different solution that is not too far away from the first solution. This paper surveys the complexity of Hamming distance recoverable robust versions of optimization problems typically found in NP, for different types of scenarios. The complexity is primarily situated in the lower levels of the polynomial hierarchy. The main contribution of the paper is that recoverable robust problems with compression-encoded scenarios and $m \in \mathbb{N}$ recoveries are $\Sigma^P_{2m+1}$-complete.
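The recovery constraint can be illustrated with a small check. This is a sketch under an assumption: solutions are treated as sets of chosen elements, and the Hamming distance is taken to be the size of their symmetric difference. The abstract itself only states that $\texttt{s}_0$ and \texttt{s} may differ in at most $\kappa$ elements. The function names are hypothetical.

```python
def hamming_distance(s0, s):
    """Number of elements that appear in exactly one of the two solutions."""
    return len(set(s0) ^ set(s))

def is_valid_recovery(s0, s, kappa):
    """True if the recovery solution s stays within distance kappa of s0."""
    return hamming_distance(s0, s) <= kappa

base_solution = {"a", "b", "c"}   # solution for the base scenario
recovery = {"a", "b", "d"}        # solution for some other scenario

print(hamming_distance(base_solution, recovery))
print(is_valid_recovery(base_solution, recovery, kappa=2))
print(is_valid_recovery(base_solution, recovery, kappa=1))
```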

Unsupervised domain adaptation has recently emerged as an effective paradigm for generalizing deep neural networks to new target domains. However, there is still enormous potential to be tapped to reach fully supervised performance. In this paper, we present a novel active learning strategy to assist knowledge transfer in the target domain, dubbed active domain adaptation. We start from the observation that energy-based models exhibit free energy biases when training (source) and test (target) data come from different distributions. Inspired by this inherent mechanism, we empirically show that a simple yet efficient energy-based sampling strategy is more effective at selecting the most valuable target samples than existing approaches that require particular architectures or the computation of distances. Our algorithm, Energy-based Active Domain Adaptation (EADA), queries groups of target data that incorporate both domain characteristics and instance uncertainty in every selection round. Meanwhile, by aligning the free energy of target data compactly around the source domain via a regularization term, the domain gap can be implicitly diminished. Through extensive experiments, we show that EADA surpasses state-of-the-art methods on well-known challenging benchmarks with substantial improvements, making it a useful option in the open world. Code is available at //github.com/BIT-DA/EADA.
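The following minimal sketch shows how a free-energy score could be computed from classifier logits and used to rank unlabeled target samples. It is not the EADA selection rule, which the abstract does not spell out and which also accounts for instance uncertainty. The sketch assumes the common energy-based definition $F(x) = -\log \sum_c \exp(f_c(x))$, and the helper names and logits are hypothetical.

```python
import math

def free_energy(logits):
    """Free energy -log(sum(exp(logits))), computed in a numerically stable way."""
    m = max(logits)
    return -(m + math.log(sum(math.exp(z - m) for z in logits)))

def select_highest_energy(batch_logits, k):
    """Indices of the k samples with the highest free energy (assumed query rule)."""
    ranked = sorted(
        range(len(batch_logits)),
        key=lambda i: free_energy(batch_logits[i]),
        reverse=True,
    )
    return ranked[:k]

# Hypothetical logits for three target samples and three classes.
target_logits = [
    [5.0, 0.0, 0.0],   # confident prediction, low free energy
    [0.1, 0.0, 0.2],   # flat logits, high free energy
    [2.0, 1.0, 0.0],
]
queried = select_highest_energy(target_logits, k=2)
print(queried)
```

Samples with flat, small logits receive higher free energy and are queried first in this sketch.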

Non-convex optimization is ubiquitous in modern machine learning. Researchers devise non-convex objective functions and optimize them using off-the-shelf optimizers such as stochastic gradient descent and its variants, which leverage the local geometry and update iteratively. Even though solving non-convex functions is NP-hard in the worst case, the optimization quality in practice is often not an issue -- optimizers are largely believed to find approximate global minima. Researchers hypothesize a unified explanation for this intriguing phenomenon: most of the local minima of the practically-used objectives are approximately global minima. We rigorously formalize it for concrete instances of machine learning problems.

Since deep neural networks were developed, they have made huge contributions to everyday lives. Machine learning provides more rational advice than humans are capable of in almost every aspect of daily life. However, despite this achievement, the design and training of neural networks are still challenging and unpredictable procedures. To lower the technical thresholds for common users, automated hyper-parameter optimization (HPO) has become a popular topic in both academic and industrial areas. This paper provides a review of the most essential topics on HPO. The first section introduces the key hyper-parameters related to model training and structure, and discusses their importance and methods to define the value range. Then, the research focuses on major optimization algorithms and their applicability, covering their efficiency and accuracy especially for deep learning networks. This study next reviews major services and toolkits for HPO, comparing their support for state-of-the-art searching algorithms, feasibility with major deep learning frameworks, and extensibility for new modules designed by users. The paper concludes with problems that exist when HPO is applied to deep learning, a comparison between optimization algorithms, and prominent approaches for model evaluation with limited computational resources.