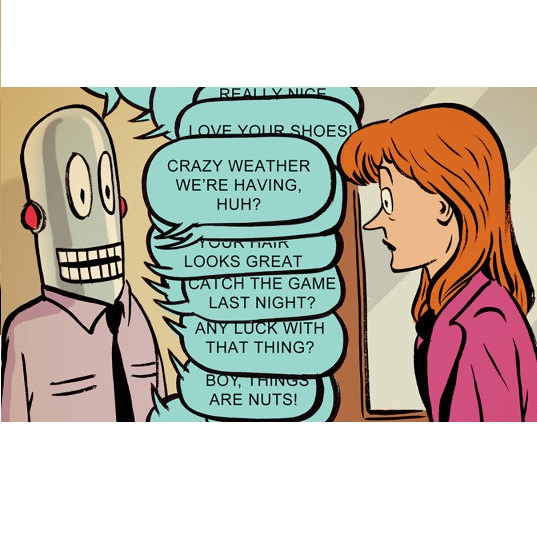

Current language processing technologies allow the creation of conversational chatbot platforms. Even though artificial intelligence is still too immature to support satisfactory user experience in many mass market domains, conversational interfaces have found their way into ad hoc applications such as call centres and online shopping assistants. However, they have not been applied so far to social inclusion of elderly people, who are particularly vulnerable to the digital divide. Many of them relieve their loneliness with traditional media such as TV and radio, which are known to create a feeling of companionship. In this paper we present the EBER chatbot, designed to reduce the digital gap for the elderly. EBER reads news in the background and adapts its responses to the user's mood. Its novelty lies in the concept of "intelligent radio", according to which, instead of simplifying a digital information system to make it accessible to the elderly, a traditional channel they find familiar -- background news -- is augmented with interactions via voice dialogues. We make it possible by combining Artificial Intelligence Modelling Language, automatic Natural Language Generation and Sentiment Analysis. The system allows accessing digital content of interest by combining words extracted from user answers to chatbot questions with keywords extracted from the news items. This approach permits defining metrics of the abstraction capabilities of the users depending on a spatial representation of the word space. To prove the suitability of the proposed solution we present results of real experiments conducted with elderly people that provided valuable insights. Our approach was considered satisfactory during the tests and improved the information search capabilities of the participants.
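As a rough illustration of combining words from user answers with keywords from news items, the sketch below ranks news items by set overlap. It is a minimal example under stated assumptions, not EBER's actual method: the Jaccard score, the function names `jaccard` and `rank_news`, and all sample data are hypothetical.

```python
def jaccard(a: set[str], b: set[str]) -> float:
    """Jaccard similarity between two word sets (0.0 when both are empty)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def rank_news(user_words: set[str], news_keywords: dict[str, set[str]]) -> list[tuple[str, float]]:
    """Rank news items by keyword overlap with the user's words, best first."""
    scores = [(title, jaccard(user_words, kws)) for title, kws in news_keywords.items()]
    return sorted(scores, key=lambda pair: pair[1], reverse=True)


# Hypothetical data for illustration only (not taken from the paper).
user_words = {"football", "match", "weekend"}
news_keywords = {
    "Local team wins cup": {"football", "match", "cup"},
    "Rain expected on Sunday": {"weather", "rain", "weekend"},
    "New tax rules announced": {"tax", "government"},
}
ranking = rank_news(user_words, news_keywords)
```

A real system would presumably normalise words (stemming, stop-word removal) before matching; that step is omitted here.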

Related content

Quantum computing has recently emerged as a transformative technology. Yet, its promised advantages rely on efficiently translating quantum operations into viable physical realizations. In this work, we use generative machine learning models, specifically denoising diffusion models (DMs), to facilitate this transformation. Leveraging text-conditioning, we steer the model to produce desired quantum operations within gate-based quantum circuits. Notably, DMs make it possible to sidestep, during training, the exponential overhead inherent in the classical simulation of quantum dynamics -- a consistent bottleneck in preceding ML techniques. We demonstrate the model's capabilities across two tasks: entanglement generation and unitary compilation. The model excels at generating new circuits and supports typical DM extensions such as masking and editing to, for instance, align the circuit generation to the constraints of the targeted quantum device. Given their flexibility and generalization abilities, we envision DMs as pivotal in quantum circuit synthesis, enhancing both practical applications and insights into theoretical quantum computation.

Citizen Science (CS) is related to public engagement in scientific research. The tasks in which citizens can be involved are diverse and range from data collection and image tagging to participation in planning and research design. However, little is known about the degree of citizen involvement in CS projects, or about the contribution of those projects to the advancement of knowledge (e.g. scientific outcomes). This study aims to gain a better understanding by analysing the SciStarter database. A total of 2,346 CS projects were identified, mainly from Ecology and Environmental Sciences. Of these projects, 91% show low citizen participation (Levels 1, "citizens as sensors", and 2, "citizens as interpreters", on Haklay's scale). In terms of scientific output, 918 papers indexed in the Web of Science (WoS) were identified. The most prolific projects were found to have lower levels of citizen involvement, specifically Levels 1 and 2.

The accelerated progress of artificial intelligence (AI) has popularized deep learning models across domains, yet their inherent opacity poses challenges, notably in critical fields like healthcare, medicine and the geosciences. Explainable AI (XAI) has emerged to shed light on these "black box" models, helping decipher their decision-making process. Nevertheless, different XAI methods yield highly different explanations. This inter-method variability increases uncertainty and lowers trust in deep networks' predictions. In this study, for the first time, we propose a novel framework designed to enhance the explainability of deep networks by maximizing both the accuracy and the comprehensibility of the explanations. Our framework integrates various explanations from established XAI methods and employs a non-linear "explanation optimizer" to construct a unique and optimal explanation. Through experiments on multi-class and binary classification tasks in 2D object and 3D neuroscience imaging, we validate the efficacy of our approach. Our explanation optimizer achieved superior faithfulness scores, averaging 155% and 63% higher than the best performing XAI method in the 3D and 2D applications, respectively. Additionally, our approach yielded lower complexity, increasing comprehensibility. Our results suggest that optimal explanations based on specific criteria are derivable and address the issue of inter-method variability in the current XAI literature.

How decisions are made is of utmost importance within organizations. The explicit representation of business logic facilitates identifying and adopting the criteria needed to make a particular decision and drives initiatives to automate repetitive decisions. The last decade has seen a surge in both the adoption of decision modeling standards such as DMN and the use of software tools such as chatbots, which seek to automate parts of the process by interacting with users to guide them in executing tasks or providing information. However, building a chatbot is not a trivial task, as it requires extensive knowledge of the business domain as well as technical knowledge for implementing the tool. In this paper, we build on these two requirements to propose an approach for the automatic generation of fully functional, ready-to-use decision-support chatbots based on a DMN decision model. With the aim of reducing chatbot development time and allowing non-technical users to develop chatbots specific to their domain, all necessary phases for the generation of the chatbot were implemented in the Demabot tool. The evaluation was conducted with potential developers and end users. The results showed that Demabot generates chatbots that are correct and allow for acceptably smooth communication with the user. Furthermore, Demabot's help and customization options are considered useful and correct, while the tool can also help to reduce development time and potential errors.

Due to the increasing need for effective security measures and the integration of cameras in commercial products, a huge amount of visual data is created today. Law enforcement agencies (LEAs) are inspecting images and videos to find radicalization, propaganda for terrorist organizations and illegal products on darknet markets. This is time consuming. Instead of an undirected search, LEAs would like to adapt to new crimes and threats, and focus only on data from specific locations, persons or objects, which requires flexible interpretation of image content. Visual concept detection with deep convolutional neural networks (CNNs) is a crucial component to understand the image content. This paper has five contributions. The first contribution allows image-based geo-localization to estimate the origin of an image. CNNs and geotagged images are used to create a model that determines the location of an image by its pixel values. The second contribution enables analysis of fine-grained concepts to distinguish sub-categories in a generic concept. The proposed method encompasses data acquisition and cleaning and concept hierarchies. The third contribution is the recognition of person attributes (e.g., glasses or moustache) to enable query by textual description for a person. The person-attribute problem is treated as a specific sub-task of concept classification. The fourth contribution is an intuitive image annotation tool based on active learning. Active learning allows users to define novel concepts flexibly and train CNNs with minimal annotation effort. The fifth contribution increases the flexibility for LEAs in the query definition by using query expansion. Query expansion maps user queries to known and detectable concepts. Therefore, no prior knowledge of the detectable concepts is required for the users. The methods are validated on data with varying locations (popular and non-touristic locations), varying person attributes (CelebA dataset), and varying number of annotations.

In causal inference, sensitivity models assess how unmeasured confounders could alter causal analyses. However, the sensitivity parameter in these models -- which quantifies the degree of unmeasured confounding -- is often difficult to interpret. For this reason, researchers will sometimes compare the magnitude of the sensitivity parameter to an estimate for measured confounding. This is known as calibration. We propose novel calibrated sensitivity models, which directly incorporate measured confounding, and bound the degree of unmeasured confounding by a multiple of measured confounding. We illustrate how to construct calibrated sensitivity models via several examples. We also demonstrate their advantages over standard sensitivity analyses and calibration; in particular, the calibrated sensitivity parameter is an intuitive unit-less ratio of unmeasured divided by measured confounding, unlike standard sensitivity parameters, and one can correctly incorporate uncertainty due to estimating measured confounding, which standard calibration methods fail to do. By incorporating uncertainty due to measured confounding, we observe that causal analyses can be less robust or more robust to unmeasured confounding than would have been shown with standard approaches. We develop efficient estimators and methods for inference for bounds on the average treatment effect with three calibrated sensitivity models, and establish that our estimators are doubly robust and attain parametric efficiency and asymptotic normality under nonparametric conditions on their nuisance function estimators. We illustrate our methods with data analyses on the effect of exposure to violence on attitudes towards peace in Darfur and the effect of mothers' smoking on infant birthweight.
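The calibration idea can be written schematically as follows. This is only a sketch based on the wording of the abstract: the symbols $U$ (a measure of unmeasured confounding), $M$ (a measure of measured confounding) and $\Gamma$ (the calibrated sensitivity parameter) are placeholder notation assumed here, not the paper's definitions.

$$
U \le \Gamma \cdot M, \qquad \Gamma \ge 0 .
$$

Under this reading, $\Gamma$ is a unit-less ratio: $\Gamma = 1$ allows unmeasured confounding as strong as the measured confounding, and with, say, $M = 0.1$ and $\Gamma = 2$ the model allows $U$ up to $0.2$. Because $M$ must itself be estimated from data, the resulting bound carries that estimation uncertainty.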

A large body of work in psycholinguistics has focused on the idea that online language comprehension can be shallow or 'good enough': given constraints on time or available computation, comprehenders may form interpretations of their input that are plausible but inaccurate. However, this idea has not yet been linked with formal theories of computation under resource constraints. Here we use information theory to formulate a model of language comprehension as an optimal trade-off between accuracy and processing depth, formalized as bits of information extracted from the input, which increases with processing time. The model provides a measure of processing effort as the change in processing depth, which we link to EEG signals and reading times. We validate our theory against a large-scale dataset of garden path sentence reading times, and EEG experiments featuring N400, P600 and biphasic ERP effects. By quantifying the timecourse of language processing as it proceeds from shallow to deep, our model provides a unified framework to explain behavioral and neural signatures of language comprehension.

Inferential models (IMs) represent a novel possibilistic approach for achieving provably valid statistical inference. This paper introduces a general framework for fusing independent IMs in a "black-box" manner, requiring no knowledge of the original IMs' construction details. The underlying logic of this framework mirrors that of the IMs approach. First, a fusing function for the initial IMs' possibility contours is selected. Because this function is not guaranteed to be calibrated for valid inference, a "validification" step is then performed. Finally, a straightforward normalization step ensures that the final output conforms to a possibility contour.

We explicitly construct zero loss neural network classifiers. We write the weight matrices and bias vectors in terms of cumulative parameters, which determine truncation maps acting recursively on input space. The configurations for the training data considered are (i) sufficiently small, well separated clusters corresponding to each class, and (ii) equivalence classes which are sequentially linearly separable. In the best case, for $Q$ classes of data in $\mathbb{R}^M$, global minimizers can be described with $Q(M+2)$ parameters.

In large-scale systems there are fundamental challenges when centralised techniques are used for task allocation. The number of interactions is limited by resource constraints such as those on computation, storage, and network communication. We can increase scalability by implementing the system as a distributed task-allocation system, sharing tasks across many agents. However, this also increases the resource cost of communications and synchronisation, and is difficult to scale. In this paper we present four algorithms to solve these problems. The combination of these algorithms enables each agent to improve its task allocation strategy through reinforcement learning, while changing how much it explores the system in response to how optimal it believes its current strategy is, given its past experience. We focus on distributed agent systems where the agents' behaviours are constrained by resource usage limits, limiting agents to local rather than system-wide knowledge. We evaluate these algorithms in a simulated environment where agents are given a task composed of multiple subtasks that must be allocated to other agents with differing capabilities, to then carry out those tasks. We also simulate real-life system effects such as networking instability. Our solution is shown to solve the task allocation problem to within 6.7% of the theoretical optimum within the system configurations considered. It provides 5x better performance recovery over no-knowledge retention approaches when system connectivity is impacted, and is tested against systems of up to 100 agents with less than a 9% impact on the algorithms' performance.
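The idea of an agent adjusting how much it explores according to how good it believes its current strategy is can be sketched with a simple epsilon-greedy learner. This is an illustrative stand-in, not one of the paper's four algorithms: the class `AdaptiveAgent`, the rule `epsilon = 1 - best estimate`, the 0.05 exploration floor, and the simulated success probabilities are all assumptions made here.

```python
import random


class AdaptiveAgent:
    """Epsilon-greedy allocator whose exploration rate shrinks as its best value estimate grows."""

    def __init__(self, n_peers: int, lr: float = 0.1, seed: int = 0):
        self.values = [0.0] * n_peers  # estimated reward for allocating a subtask to each peer
        self.lr = lr
        self.rng = random.Random(seed)

    def epsilon(self) -> float:
        # Assumption: rewards lie in [0, 1], so (1 - best estimate) is a crude proxy
        # for how far from optimal the agent believes its current strategy is.
        return min(1.0, max(0.05, 1.0 - max(self.values)))

    def choose(self) -> int:
        # Explore with probability epsilon, otherwise pick the best-known peer.
        if self.rng.random() < self.epsilon():
            return self.rng.randrange(len(self.values))
        return max(range(len(self.values)), key=self.values.__getitem__)

    def update(self, peer: int, reward: float) -> None:
        # Exponential moving average of observed rewards for this peer.
        self.values[peer] += self.lr * (reward - self.values[peer])


# Hypothetical environment: each peer completes a subtask with a fixed probability.
agent = AdaptiveAgent(n_peers=3)
success_prob = [0.2, 0.9, 0.5]
env = random.Random(1)
for _ in range(2000):
    peer = agent.choose()
    reward = 1.0 if env.random() < success_prob[peer] else 0.0
    agent.update(peer, reward)
```

The agent uses only its own reward history, which matches the local-knowledge constraint described above. Effects such as network instability and resource limits are not modelled.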