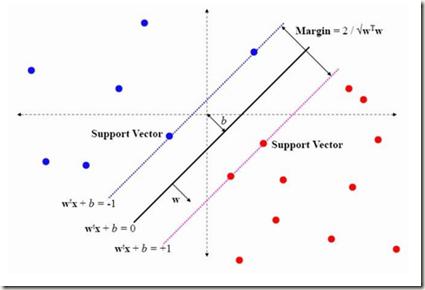

The support vector machine (SVM) is a supervised learning algorithm that finds a maximum-margin linear classifier, often after mapping the data to a high-dimensional feature space via the kernel trick. Recent work has demonstrated that in certain sufficiently overparameterized settings, the SVM decision function coincides exactly with the minimum-norm label interpolant. This phenomenon of support vector proliferation (SVP) is especially interesting because it allows us to understand SVM performance by leveraging recent analyses of harmless interpolation in linear and kernel models. However, previous work on SVP has made restrictive assumptions on the data/feature distribution and spectrum. In this paper, we present a new, flexible framework for proving SVP in an arbitrary reproducing kernel Hilbert space under a broad class of generative models for the labels. We give conditions for SVP for features in the families of general bounded orthonormal systems (e.g., Fourier features) and independent sub-Gaussian features. In both cases, we show that SVP occurs in many interesting settings not covered by prior work, and we leverage these results to prove novel generalization results for kernel SVM classification.
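The SVP phenomenon can be checked numerically. For the hard-margin SVM without a bias term, a known characterization is that every training point is a support vector (so the SVM and the minimum-norm interpolant coincide) exactly when y_i (K^{-1} y)_i > 0 for all i. The sketch below assumes that bias-free setting; the helper names `rbf_kernel` and `svp_holds` are hypothetical, and this is only an illustration of the condition, not the paper's analysis framework.

```python
import numpy as np


def rbf_kernel(X, Z, bandwidth=1.0):
    """Gaussian (RBF) kernel matrix k(x, z) = exp(-||x - z||^2 / (2 * bandwidth^2))."""
    sq = np.sum(X**2, axis=1)[:, None] + np.sum(Z**2, axis=1)[None, :] - 2 * X @ Z.T
    return np.exp(-np.maximum(sq, 0.0) / (2 * bandwidth**2))


def svp_holds(K, y):
    """Check whether the bias-free hard-margin kernel SVM equals the minimum-norm interpolant.

    The minimum-norm interpolant of the labels has coefficients beta = K^{-1} y;
    every point is a support vector exactly when y_i * beta_i > 0 for all i.
    """
    beta = np.linalg.solve(K, y)
    return bool(np.all(y * beta > 0)), beta


# Overparameterized linear kernel: d >> n random Gaussian features.
rng = np.random.default_rng(0)
n, d = 20, 5000
X = rng.standard_normal((n, d))
y = rng.choice([-1.0, 1.0], size=n)
print(svp_holds(X @ X.T / d, y)[0])

# Low-dimensional RBF example: three points on a line, labels (+1, +1, -1).
x1 = np.array([[0.0], [1.0], [2.0]])
print(svp_holds(rbf_kernel(x1, x1), np.array([1.0, 1.0, -1.0]))[0])
```

In the overparameterized example the Gram matrix is close to a multiple of the identity, the regime where proliferation is expected. In the three-point example the leftmost point lies far from the decision boundary, gets a negative y_i beta_i, and the condition fails.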

Related content

Learning the kernel parameters for Gaussian processes is often the computational bottleneck in applications such as online learning, Bayesian optimization, or active learning. Amortizing parameter inference over different datasets is a promising approach to dramatically speed up training. However, existing methods restrict the amortized inference procedure to a fixed kernel structure. The amortization network must be redesigned manually and retrained whenever a different kernel is employed, which leads to a large overhead in design time and training time. We propose amortizing kernel parameter inference over a complete kernel-structure family rather than a fixed kernel structure. We do so by defining an amortization network over pairs of datasets and kernel structures. This enables fast kernel inference for each element in the kernel family without retraining the amortization network. As a by-product, our amortization network is able to do fast ensembling over kernel structures. In our experiments, we show drastically reduced inference time combined with competitive test performance for a large set of kernels and datasets.
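For context, the per-dataset work that amortization is meant to replace is the optimization of a GP objective for one fixed kernel structure. The minimal sketch below assumes a common grammar in which a kernel structure is an expression tree of sums and products over base kernels (RBF, periodic, linear), and evaluates the standard GP negative log marginal likelihood for a given structure and hyperparameters. The amortization network itself, which would map a (dataset, structure) pair directly to hyperparameters, is not shown; `base_kernel`, `gram`, and `gp_nll` are hypothetical names.

```python
import numpy as np


def base_kernel(name, x, z, p):
    """Base kernels on 1-D inputs; p holds this leaf's hyperparameters."""
    diff = x[:, None] - z[None, :]
    if name == "RBF":
        return p["var"] * np.exp(-0.5 * diff**2 / p["ls"] ** 2)
    if name == "PER":
        return p["var"] * np.exp(-2.0 * np.sin(np.pi * np.abs(diff) / p["period"]) ** 2 / p["ls"] ** 2)
    if name == "LIN":
        return p["var"] * np.outer(x, z)
    raise ValueError(f"unknown base kernel {name!r}")


def gram(structure, x, z, params):
    """Evaluate a kernel structure: a base-kernel name, or a tuple (op, left, right)
    with op in {"+", "*"}. Leaf hyperparameters are consumed from params left to right."""
    leaves = iter(params)

    def rec(s):
        if isinstance(s, str):
            return base_kernel(s, x, z, next(leaves))
        op, left, right = s
        if op not in ("+", "*"):
            raise ValueError(f"unknown operator {op!r}")
        a = rec(left)
        b = rec(right)
        return a + b if op == "+" else a * b

    return rec(structure)


def gp_nll(structure, params, x, y, noise=0.1):
    """Negative log marginal likelihood of y under a zero-mean GP with Gaussian noise."""
    n = len(x)
    K = gram(structure, x, x, params) + noise**2 * np.eye(n)
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))  # K^{-1} y via the Cholesky factor
    # 0.5 * y^T K^{-1} y + 0.5 * log|K| + 0.5 * n * log(2 pi), with 0.5 * log|K| = sum(log diag L)
    return 0.5 * y @ alpha + np.sum(np.log(np.diag(L))) + 0.5 * n * np.log(2 * np.pi)


x = np.linspace(0, 5, 30)
y = np.sin(2 * x)
structure = ("+", "RBF", ("*", "PER", "LIN"))  # RBF + PER * LIN
params = [{"var": 1.0, "ls": 1.0}, {"var": 1.0, "ls": 1.0, "period": 3.0}, {"var": 0.5}]
print(gp_nll(structure, params, x, y))
```

Without amortization, each structure in the family would need its own optimization of `gp_nll` over `params`; an amortized model instead predicts `params` from the data and the structure in a single forward pass.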

Equivalence testing allows one to conclude that two characteristics are practically equivalent. We propose a framework for fast sample size determination with Bayesian equivalence tests facilitated via posterior probabilities. We assume that data are generated using statistical models with fixed parameters for the purposes of sample size determination. Our framework leverages an interval-based approach, which defines a distribution for the sample size to control the length of posterior highest density intervals (HDIs). We prove the normality of the limiting distribution for the sample size, and we consider the relationship between posterior HDI length and the statistical power of Bayesian equivalence tests. We introduce two novel approaches for estimating the distribution for the sample size, both of which are calibrated to align with targets for statistical power. Both approaches are much faster than traditional power calculations for Bayesian equivalence tests. Moreover, our method requires users to make fewer choices than traditional simulation-based methods for Bayesian sample size determination. It is therefore more accessible to users accustomed to frequentist methods.
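The link between HDI length, sample size, and power can be made concrete in the simplest conjugate case. The sketch below assumes two groups of size n with a known common standard deviation sigma and flat priors, so the posterior of the mean difference delta is Normal(ybar1 - ybar2, 2 sigma^2 / n) and its HDI has length 2 z sigma sqrt(2/n). Equivalence is declared when the posterior probability of -margin < delta < margin reaches a threshold. It does not reproduce the paper's estimated sample-size distribution or its calibration; all function names are hypothetical.

```python
import math
import random
from statistics import NormalDist


def n_for_hdi_length(sigma, target_length, cred=0.95):
    """Smallest per-group n whose posterior HDI for delta has length <= target_length."""
    z = NormalDist().inv_cdf(0.5 + cred / 2)
    # 2 * z * sigma * sqrt(2 / n) <= L  <=>  n >= 8 * (z * sigma / L)^2
    return math.ceil(8 * (z * sigma / target_length) ** 2)


def prob_equivalent(diff_mean, sigma, n, margin):
    """Posterior probability that -margin < delta < margin."""
    post = NormalDist(diff_mean, sigma * math.sqrt(2 / n))
    return post.cdf(margin) - post.cdf(-margin)


def simulated_power(true_delta, sigma, n, margin, threshold=0.95, reps=2000, seed=1):
    """Fraction of simulated studies in which equivalence is declared."""
    rng = random.Random(seed)
    sd_diff = sigma * math.sqrt(2 / n)  # sampling sd of ybar1 - ybar2
    hits = 0
    for _ in range(reps):
        observed_diff = rng.gauss(true_delta, sd_diff)
        hits += prob_equivalent(observed_diff, sigma, n, margin) >= threshold
    return hits / reps


n = n_for_hdi_length(sigma=1.0, target_length=0.5)  # → 123
print(n, simulated_power(true_delta=0.0, sigma=1.0, n=n, margin=0.5))
```

Shorter HDIs leave more room inside the equivalence interval (-margin, margin), which is why controlling HDI length also drives power in this toy model.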

The increasing complexity of data requires methods and models that can effectively handle intricate structures, as simplifying them would result in a loss of information. While several analytical tools have been developed to work with complex data objects in their original form, these tools are typically limited to single-type variables. In this work, we propose energy trees as a regression and classification model capable of accommodating structured covariates of various types. Energy trees leverage energy statistics to extend the capabilities of conditional inference trees, from which they inherit sound statistical foundations, interpretability, scale invariance, and freedom from distributional assumptions. We specifically focus on functional and graph-structured covariates, while also highlighting the model's flexibility in integrating other variable types. Extensive simulation studies demonstrate the model's competitive performance in terms of variable selection and robustness to overfitting. Finally, we assess the model's predictive ability through two empirical analyses involving human biological data. Energy trees are implemented in the R package etree.
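Energy statistics measure dependence through pairwise distances, which is what lets a tree compare covariates of different types on the same footing. As an illustration, the sketch below computes the sample distance correlation, one such energy statistic, using Euclidean distances only; for functional or graph covariates one would substitute a suitable distance (an assumption here). It is a standalone Python sketch, not the API of the R package etree, and the permutation tests used for split selection are omitted.

```python
import numpy as np


def _centered_distances(v):
    """Double-centred Euclidean distance matrix of the rows of v."""
    v = np.asarray(v, dtype=float).reshape(len(v), -1)
    d = np.sqrt(((v[:, None, :] - v[None, :, :]) ** 2).sum(axis=-1))
    return d - d.mean(axis=0, keepdims=True) - d.mean(axis=1, keepdims=True) + d.mean()


def distance_correlation(x, y):
    """Sample distance correlation (V-statistic form), a value in [0, 1]."""
    A, B = _centered_distances(x), _centered_distances(y)
    dcov2 = (A * B).mean()
    denom = np.sqrt((A * A).mean() * (B * B).mean())
    return 0.0 if denom == 0 else float(np.sqrt(max(dcov2, 0.0) / denom))


x = np.array([-1.0, 0.0, 1.0])
print(np.corrcoef(x, x**2)[0, 1], distance_correlation(x, x**2))
```

The example contrasts Pearson correlation, which is zero for this symmetric quadratic relationship, with distance correlation, which detects it (here exactly 10^(-1/4), about 0.562).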

We propose novel statistics which maximise the power of a two-sample test based on the Maximum Mean Discrepancy (MMD), by adapting over the set of kernels used in defining it. For finite sets, this reduces to combining (normalised) MMD values under each of these kernels via a weighted soft maximum. Exponential concentration bounds are proved for our proposed statistics under the null and alternative. We further show how these kernels can be chosen in a data-dependent but permutation-independent way, in a well-calibrated test, avoiding data splitting. This technique applies more broadly to general permutation-based MMD testing, and includes the use of deep kernels with features learnt using unsupervised models such as auto-encoders. We highlight the applicability of our MMD-FUSE test on both synthetic low-dimensional and real-world high-dimensional data, and compare its performance in terms of power against current state-of-the-art kernel tests.
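A minimal sketch of the fusing idea for a finite set of Gaussian kernels: compute the unbiased MMD^2 under each kernel, normalise it, combine the values with a soft maximum (1/lambda) log(mean_k exp(lambda * value_k)), and calibrate by permutation. Bandwidths are multiples of the pooled median distance, which is data-dependent but unchanged by permuting sample labels. The normaliser (root mean square of the off-diagonal pooled kernel values), the temperature lambda = sqrt(m n), and the function names are our own assumptions; the paper's exact choices may differ.

```python
import numpy as np


def sq_dists(Z):
    """Pairwise squared Euclidean distances between the rows of Z."""
    sq = np.sum(Z**2, axis=1)
    return np.maximum(sq[:, None] + sq[None, :] - 2 * Z @ Z.T, 0.0)


def mmd2_unbiased(K, in_x):
    """Unbiased MMD^2 estimate from a pooled kernel matrix; in_x marks first-sample rows."""
    Kxx, Kyy, Kxy = K[np.ix_(in_x, in_x)], K[np.ix_(~in_x, ~in_x)], K[np.ix_(in_x, ~in_x)]
    m, n = len(Kxx), len(Kyy)
    return ((Kxx.sum() - np.trace(Kxx)) / (m * (m - 1))
            + (Kyy.sum() - np.trace(Kyy)) / (n * (n - 1))
            - 2 * Kxy.mean())


def fused_statistic(kernels, norms, in_x, lam):
    """Soft maximum (1/lam) * log(mean_k exp(lam * MMD_k / norm_k)), computed stably."""
    vals = np.array([mmd2_unbiased(K, in_x) / s for K, s in zip(kernels, norms)])
    top = vals.max()
    return top + np.log(np.mean(np.exp(lam * (vals - top)))) / lam


def mmd_fuse_sketch(X, Y, scales=(0.25, 0.5, 1.0, 2.0, 4.0), n_perms=200, seed=0):
    """Return (fused statistic, permutation p-value)."""
    Z = np.vstack([X, Y])
    N = len(Z)
    D2 = sq_dists(Z)
    off_diag = ~np.eye(N, dtype=bool)
    median = np.sqrt(np.median(D2[off_diag]))  # permutation-invariant bandwidth reference
    kernels = [np.exp(-D2 / (2 * (c * median) ** 2)) for c in scales]
    norms = [np.sqrt(np.mean(K[off_diag] ** 2)) for K in kernels]  # assumed normaliser
    lam = np.sqrt(len(X) * len(Y))  # assumed soft-max temperature
    in_x = np.arange(N) < len(X)
    observed = fused_statistic(kernels, norms, in_x, lam)
    rng = np.random.default_rng(seed)
    exceed = sum(fused_statistic(kernels, norms, rng.permutation(in_x), lam) >= observed
                 for _ in range(n_perms))
    return observed, (1 + exceed) / (1 + n_perms)


rng = np.random.default_rng(1)
X = rng.standard_normal((50, 2))
Y = rng.standard_normal((50, 2)) + 1.5
print(mmd_fuse_sketch(X, Y))
```

Because neither the bandwidths nor the normalisers depend on which points are labelled X or Y, the kernel matrices are computed once and every permutation only re-indexes them, so no data splitting is needed.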

We study the Electrical Impedance Tomography Bayesian inverse problem for recovering the conductivity given noisy measurements of the voltage on some boundary surface electrodes. The uncertain conductivity depends linearly on a countable number of uniformly distributed random parameters in a compact interval, with the coefficient functions in the linear expansion decaying at an algebraic rate. We analyze the surrogate Markov Chain Monte Carlo (MCMC) approach for sampling the posterior probability measure, where multivariate sparse adaptive interpolation, with interpolating points chosen according to a lower index set, is used to approximate the forward map. The forward equation is approximated once, before running the MCMC, for all the realizations, using interpolation on the finite element (FE) approximation at the parametric interpolating points. When the solution is needed for a realization, we only need to evaluate a polynomial, which drastically cuts the computation time. We contribute a rigorous error estimate for the MCMC convergence. In particular, we show that there is a nested sequence of interpolating lower index sets for which we can derive an interpolation error estimate in terms of the cardinality of these sets, uniformly for all the parameter realizations. An explicit convergence rate for the MCMC sampling of the posterior expectation of the conductivity is rigorously derived, in terms of the number of interpolating points, the accuracy of the FE approximation of the forward equation, and the number of MCMC samples. We perform numerical experiments using an adaptive greedy approach to construct the sets of interpolation points. We show the benefits of this approach over plain MCMC, where the forward equation is solved anew for every sample, and over non-adaptive surrogate MCMC with an isotropic index set that treats all the random parameters equally.
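The surrogate idea (solve the forward problem at interpolation points once, then let MCMC evaluate only a polynomial) can be shown in one parameter dimension. In the sketch below, a hypothetical smooth scalar function stands in for the FE solve, Chebyshev interpolation replaces the paper's multivariate sparse adaptive interpolation on lower index sets, and a random-walk Metropolis sampler targets the posterior under a uniform prior on [-1, 1] with Gaussian observation noise. All names and parameter values are assumptions for illustration.

```python
import numpy as np
from numpy.polynomial import chebyshev as C


def expensive_forward(theta):
    """Hypothetical stand-in for a finite element solve: a smooth scalar map of one parameter."""
    return np.exp(theta) + 0.5 * np.sin(3 * theta)


def build_surrogate(forward, degree):
    """Interpolate forward at degree + 1 Chebyshev points on [-1, 1] (a one-off offline cost)."""
    k = np.arange(degree + 1)
    nodes = np.cos(np.pi * (2 * k + 1) / (2 * (degree + 1)))
    coeffs = C.chebfit(nodes, forward(nodes), degree)  # square system, so this interpolates
    return lambda t: C.chebval(t, coeffs)


def surrogate_mcmc(surrogate, y_obs, noise_sd, n_samples=20000, step=0.1, seed=0):
    """Random-walk Metropolis for theta ~ U[-1, 1]; the likelihood uses only the surrogate."""
    rng = np.random.default_rng(seed)

    def log_post(t):
        if abs(t) > 1.0:
            return -np.inf  # outside the uniform prior's support
        return -0.5 * ((y_obs - surrogate(t)) / noise_sd) ** 2

    theta, lp = 0.0, log_post(0.0)
    samples = np.empty(n_samples)
    for i in range(n_samples):
        proposal = theta + step * rng.standard_normal()
        lp_prop = log_post(proposal)
        if np.log(rng.uniform()) < lp_prop - lp:  # Metropolis acceptance
            theta, lp = proposal, lp_prop
        samples[i] = theta
    return samples


surrogate = build_surrogate(expensive_forward, degree=12)
samples = surrogate_mcmc(surrogate, y_obs=float(expensive_forward(0.3)), noise_sd=0.05)
print(samples[2000:].mean())  # posterior mean estimate after burn-in
```

Here the "expensive" map is called only 13 times, at the interpolation nodes; the 20000 MCMC steps evaluate the polynomial instead.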

Neural network compression has been an increasingly important subject, due to its practical implications in terms of reducing the computational requirements and its theoretical implications, as there is an explicit connection between compressibility and the generalization error. Recent studies have shown that the choice of the hyperparameters of stochastic gradient descent (SGD) can have an effect on the compressibility of the learned parameter vector. Even though these results have shed some light on the role of the training dynamics in compressibility, they relied on unverifiable assumptions, and the resulting theory does not provide a practical guideline due to its implicitness. In this study, we propose a simple modification of SGD such that the outputs of the algorithm will be provably compressible without making any nontrivial assumptions. We consider a one-hidden-layer neural network trained with SGD, and we inject additive heavy-tailed noise into the iterates at each iteration. We then show that, for any compression rate, there exists a level of overparametrization (i.e., the number of hidden units) such that the output of the algorithm will be compressible with high probability. To achieve this result, we make two main technical contributions: (i) we build on a recent study on stochastic analysis and prove a 'propagation of chaos' result with improved rates for a class of heavy-tailed stochastic differential equations, and (ii) we derive strong-error estimates for their Euler discretization. Finally, we illustrate our approach experimentally; the results suggest that the proposed approach achieves compressibility at the cost of a slight degradation in training and test error.
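A minimal sketch of the modification: plain mini-batch SGD on a one-hidden-layer tanh network, followed after every step by additive noise on the hidden weights. Student-t noise with 1.5 degrees of freedom is used here as a stand-in for the heavy-tailed (alpha-stable) noise of the paper, and compressibility is measured, by our own assumed choice, as the fraction of squared l2 norm carried by the largest 10% of weights. The mean-field output scaling, step size, and all names are assumptions.

```python
import numpy as np


def train_noisy_sgd(X, y, width=256, lr=0.05, noise_scale=0.01, heavy=True, df=1.5,
                    steps=2000, batch=32, seed=0):
    """SGD on f(x) = mean_j a_j tanh(w_j . x), with noise added to the hidden weights each step."""
    rng = np.random.default_rng(seed)
    n, d = X.shape
    W = rng.standard_normal((width, d)) / np.sqrt(d)
    a = rng.standard_normal(width)
    for _ in range(steps):
        idx = rng.integers(0, n, size=batch)
        xb, yb = X[idx], y[idx]
        h = np.tanh(xb @ W.T)       # hidden activations, shape (batch, width)
        resid = h @ a / width - yb  # prediction error on the mini-batch
        # Gradients of 0.5 * mean(resid^2), multiplied by width (mean-field step-size scaling).
        grad_a = h.T @ resid / batch
        grad_W = ((resid[:, None] * (1 - h**2)) * a[None, :]).T @ xb / batch
        a -= lr * grad_a
        W -= lr * grad_W
        noise = rng.standard_t(df, size=W.shape) if heavy else rng.standard_normal(W.shape)
        W += noise_scale * noise
    return W, a


def top_k_energy(W, keep_frac=0.1):
    """Fraction of squared l2 norm held by the largest keep_frac of entries (near 1 = compressible)."""
    mags = np.sort(np.abs(W).ravel())[::-1]
    k = max(1, int(keep_frac * mags.size))
    return float(np.sum(mags[:k] ** 2) / np.sum(mags**2))
```

For example, training twice on the same data with `heavy=True` and `heavy=False` and comparing `top_k_energy` of the two hidden-weight matrices shows how heavy-tailed injections concentrate the norm in a few large weights, so that pruning the rest loses little.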

Classic algorithms and machine learning systems like neural networks are both abundant in everyday life. While classic computer science algorithms are suitable for precise execution of exactly defined tasks such as finding the shortest path in a large graph, neural networks allow learning from data to predict the most likely answer in more complex tasks such as image classification, which cannot be reduced to an exact algorithm. To get the best of both worlds, this thesis explores combining both concepts leading to more robust, better performing, more interpretable, more computationally efficient, and more data efficient architectures. The thesis formalizes the idea of algorithmic supervision, which allows a neural network to learn from or in conjunction with an algorithm. When integrating an algorithm into a neural architecture, it is important that the algorithm is differentiable such that the architecture can be trained end-to-end and gradients can be propagated back through the algorithm in a meaningful way. To make algorithms differentiable, this thesis proposes a general method for continuously relaxing algorithms by perturbing variables and approximating the expectation value in closed form, i.e., without sampling. In addition, this thesis proposes differentiable algorithms, such as differentiable sorting networks, differentiable renderers, and differentiable logic gate networks. Finally, this thesis presents alternative training strategies for learning with algorithms.
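The relaxation principle (perturb a variable, then take the expectation in closed form) can be seen on the conditional swap, the building block of a sorting network. If the comparison a <= b is perturbed with logistic noise of scale tau, the probability that it holds is exactly sigmoid((b - a) / tau), with no sampling needed; blending the two orderings by this probability gives a smooth swap. The sketch applies that swap inside an odd-even transposition network. It illustrates the general idea under our own choice of logistic noise and network layout, not the thesis's specific differentiable sorting networks; function names are hypothetical.

```python
import numpy as np


def sigmoid(z):
    """Numerically stable logistic function."""
    return 0.5 * (1 + np.tanh(z / 2))


def soft_swap(a, b, tau):
    """Relaxed conditional swap: p = P(a <= b) under logistic perturbation of scale tau."""
    p = sigmoid((b - a) / tau)
    return p * a + (1 - p) * b, p * b + (1 - p) * a  # (soft min, soft max)


def soft_sort(x, tau=0.1):
    """Odd-even transposition network (n layers sort n elements) with relaxed swaps."""
    x = np.array(x, dtype=float)
    n = len(x)
    for layer in range(n):
        for i in range(layer % 2, n - 1, 2):
            x[i], x[i + 1] = soft_swap(x[i], x[i + 1], tau)
    return x


print(soft_sort([3.0, 1.0, 2.0], tau=1e-3))
print(soft_sort([3.0, 1.0, 2.0], tau=1.0))
```

As tau shrinks the output approaches the hard sort, while any tau > 0 keeps the map differentiable in its inputs; each soft swap also preserves a + b, so the sum of the inputs is preserved for every tau.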

Graph neural networks (GNNs) are a popular class of machine learning models whose major advantage is their ability to incorporate a sparse and discrete dependency structure between data points. Unfortunately, GNNs can only be used when such a graph structure is available. In practice, however, real-world graphs are often noisy and incomplete or might not be available at all. With this work, we propose to jointly learn the graph structure and the parameters of graph convolutional networks (GCNs) by approximately solving a bilevel program that learns a discrete probability distribution on the edges of the graph. This allows one to apply GCNs not only in scenarios where the given graph is incomplete or corrupted but also in those where a graph is not available. We conduct a series of experiments that analyze the behavior of the proposed method and demonstrate that it outperforms related methods by a significant margin.
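The inner model of such a scheme can be sketched as follows, assuming independent Bernoulli edges with probabilities theta_ij: sample a symmetric adjacency matrix, run a standard two-layer GCN with symmetric normalisation D^{-1/2}(A + I)D^{-1/2}, and average predictions over several sampled graphs. The outer level of the bilevel program (updating theta from a validation loss) and the training of the GCN weights are omitted; all names are hypothetical.

```python
import numpy as np


def sample_graph(theta, rng):
    """Symmetric adjacency with independent Bernoulli(theta_ij) edges for i < j, no self-loops."""
    upper = np.triu(rng.uniform(size=theta.shape) < theta, k=1)
    return (upper | upper.T).astype(float)


def gcn_forward(A, X, W1, W2):
    """Two-layer GCN: softmax(A_norm relu(A_norm X W1) W2), A_norm = D^{-1/2}(A + I)D^{-1/2}."""
    A_hat = A + np.eye(len(A))
    d_inv_sqrt = 1.0 / np.sqrt(A_hat.sum(axis=1))
    A_norm = d_inv_sqrt[:, None] * A_hat * d_inv_sqrt[None, :]
    H = np.maximum(A_norm @ X @ W1, 0.0)
    logits = A_norm @ H @ W2
    logits = logits - logits.max(axis=1, keepdims=True)  # stabilise the softmax
    P = np.exp(logits)
    return P / P.sum(axis=1, keepdims=True)


def expected_predictions(theta, X, W1, W2, n_samples=20, seed=0):
    """Monte Carlo estimate of E_{A ~ Bernoulli(theta)}[GCN(A, X)]."""
    rng = np.random.default_rng(seed)
    return np.mean([gcn_forward(sample_graph(theta, rng), X, W1, W2)
                    for _ in range(n_samples)], axis=0)
```

Because the graph is sampled rather than fixed, the same code applies whether theta is initialised from a noisy observed graph or, when no graph is available, from a uniform or kNN-based guess.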

In structure learning, the output is generally a structure that is used as supervision information to achieve good performance. Since the interpretability of deep learning models has attracted growing attention in recent years, it would be beneficial to learn an interpretable structure from deep learning models. In this paper, we focus on Recurrent Neural Networks (RNNs), whose inner mechanism is still not clearly understood. We find that a Finite State Automaton (FSA), which processes sequential data, has a more interpretable inner mechanism and can be learned from RNNs as the interpretable structure. We propose two methods to learn an FSA from an RNN, based on two different clustering methods. We first give a graphical illustration of the FSA that humans can follow, which demonstrates its interpretability. From the FSA's point of view, we then analyze how the performance of RNNs is affected by the number of gates, as well as the semantic meaning behind the transitions of numerical hidden states. Our results suggest that RNNs with a simple gated structure, such as the Minimal Gated Unit (MGU), are more desirable, and that the transitions in the FSA leading to a specific classification result are associated with corresponding words that humans can understand.
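The general recipe of learning an FSA from an RNN by clustering can be sketched as: run the RNN over input sequences, cluster all hidden states into k abstract states, and read off a transition table by majority vote over observed (state, token) to next-state transitions. The sketch uses plain k-means as the clustering method and an untrained vanilla tanh RNN with random weights as a placeholder for a trained gated RNN; both are our assumptions, not necessarily either of the paper's two methods, and all names are hypothetical.

```python
import numpy as np
from collections import Counter, defaultdict


def run_rnn(seq, Wx, Wh, b):
    """Hidden states h_0, ..., h_T of a vanilla tanh RNN over a token sequence."""
    h = np.zeros(Wh.shape[0])
    states = [h]
    for tok in seq:
        h = np.tanh(Wx[:, tok] + Wh @ h + b)
        states.append(h)
    return states


def kmeans(P, k, iters=50, seed=0):
    """Plain Lloyd's k-means; returns the k centroids."""
    rng = np.random.default_rng(seed)
    C = P[rng.choice(len(P), size=k, replace=False)]
    for _ in range(iters):
        labels = np.argmin(((P[:, None, :] - C[None, :, :]) ** 2).sum(axis=-1), axis=1)
        C = np.array([P[labels == j].mean(axis=0) if np.any(labels == j) else C[j]
                      for j in range(k)])
    return C


def extract_fsa(seqs, Wx, Wh, b, k):
    """Return (start state, delta) with delta[(state, token)] = next state by majority vote."""
    runs = [run_rnn(s, Wx, Wh, b) for s in seqs]
    C = kmeans(np.vstack([h for r in runs for h in r]), k)

    def state_of(h):
        return int(np.argmin(((C - h) ** 2).sum(axis=1)))

    votes = defaultdict(Counter)
    for s, r in zip(seqs, runs):
        for t, tok in enumerate(s):
            votes[(state_of(r[t]), int(tok))][state_of(r[t + 1])] += 1
    delta = {key: c.most_common(1)[0][0] for key, c in votes.items()}
    return state_of(np.zeros(Wh.shape[0])), delta
```

The resulting `delta` is a small table that can be drawn as a graph; the number of clusters k trades fidelity to the RNN against readability of the automaton.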

Dynamic programming (DP) solves a variety of structured combinatorial problems by iteratively breaking them down into smaller subproblems. In spite of their versatility, DP algorithms are usually non-differentiable, which hampers their use as a layer in neural networks trained by backpropagation. To address this issue, we propose to smooth the max operator in the dynamic programming recursion, using a strongly convex regularizer. This allows us to relax both the optimal value and the solution of the original combinatorial problem, and turns a broad class of DP algorithms into differentiable operators. Theoretically, we provide a new probabilistic perspective on backpropagating through these DP operators, and relate them to inference in graphical models. We derive two particular instantiations of our framework: a smoothed Viterbi algorithm for sequence prediction and a smoothed DTW algorithm for time-series alignment. We showcase these instantiations on two structured prediction tasks and on structured and sparse attention for neural machine translation.
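With the negative entropy as the strongly convex regularizer (one possible choice), the smoothed max becomes log-sum-exp, and the min used in DTW becomes min_gamma(v) = -gamma * log(sum_i exp(-v_i / gamma)). The sketch below plugs that smoothed min into the DTW recursion R[i, j] = cost(i, j) + min_gamma(R[i-1, j-1], R[i-1, j], R[i, j-1]), assuming 1-D sequences and a squared-difference cost; function names are hypothetical, and gradients (which would come from an autodiff framework or the backward recursion) are not shown.

```python
import numpy as np


def soft_min(values, gamma):
    """Negentropy-smoothed min: -gamma * log(sum(exp(-v / gamma))), computed stably."""
    v = np.asarray(values, dtype=float)
    m = v.min()
    return m - gamma * np.log(np.sum(np.exp(-(v - m) / gamma)))


def soft_dtw(x, y, gamma=1.0):
    """Smoothed DTW value between 1-D sequences x and y with squared-difference cost."""
    n, m = len(x), len(y)
    R = np.full((n + 1, m + 1), np.inf)
    R[0, 0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            cost = (x[i - 1] - y[j - 1]) ** 2
            R[i, j] = cost + soft_min([R[i - 1, j - 1], R[i - 1, j], R[i, j - 1]], gamma)
    return R[n, m]


x, y = [0.0, 1.0, 2.0], [0.0, 1.0, 1.0, 2.0]
print(soft_dtw(x, y, gamma=1e-3), soft_dtw(x, y, gamma=1.0))
```

As gamma approaches 0, the value approaches ordinary DTW (0 for this perfectly warpable pair); larger gamma gives a smoother, lower value, since the smoothed min never exceeds the hard min.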