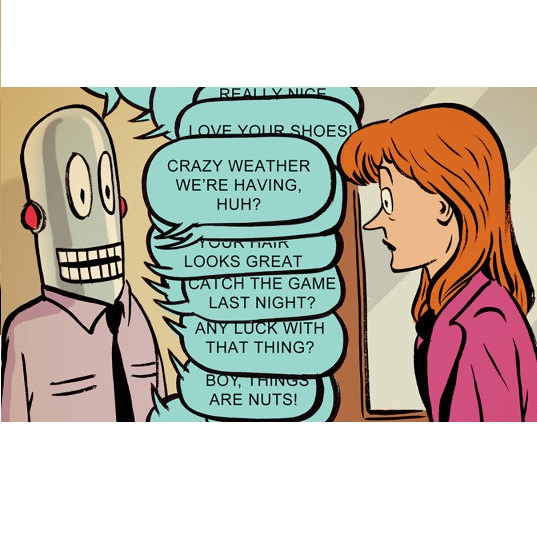

This research delves into the intersection of illustration art and artificial intelligence (AI), focusing on how illustrators engage with AI agents that embody their original characters (OCs). We introduce 'ORIBA', a customizable AI chatbot that enables illustrators to converse with their OCs. This approach allows artists to not only receive responses from their OCs but also to observe their inner monologues and behavior. Despite the existing tension between artists and AI, our study explores innovative collaboration methods that are inspiring to illustrators. By examining the impact of AI on the creative process and the boundaries of authorship, we aim to enhance human-AI interactions in creative fields, with potential applications extending beyond illustration to interactive storytelling and more.

相關內容

Causal inference is a crucial goal of science, enabling researchers to arrive at meaningful conclusions regarding the predictions of hypothetical interventions using observational data. Path models, Structural Equation Models (SEMs), and, more generally, Directed Acyclic Graphs (DAGs), provide a means to unambiguously specify assumptions regarding the causal structure underlying a phenomenon. Unlike DAGs, which make very few assumptions about the functional and parametric form, SEM assumes linearity. This can result in functional misspecification which prevents researchers from undertaking reliable effect size estimation. In contrast, we propose Super Learner Equation Modeling, a path modeling technique integrating machine learning Super Learner ensembles. We empirically demonstrate its ability to provide consistent and unbiased estimates of causal effects, its competitive performance for linear models when compared with SEM, and highlight its superiority over SEM when dealing with non-linear relationships. We provide open-source code, and a tutorial notebook with example usage, accentuating the easy-to-use nature of the method.

The advent of deep learning has brought a revolutionary transformation to image denoising techniques. However, the persistent challenge of acquiring noise-clean pairs for supervised methods in real-world scenarios remains formidable, necessitating the exploration of more practical self-supervised image denoising. This paper focuses on self-supervised image denoising methods that offer effective solutions to address this challenge. Our comprehensive review thoroughly analyzes the latest advancements in self-supervised image denoising approaches, categorizing them into three distinct classes: General methods, Blind Spot Network (BSN)-based methods, and Transformer-based methods. For each class, we provide a concise theoretical analysis along with their practical applications. To assess the effectiveness of these methods, we present both quantitative and qualitative experimental results on various datasets, utilizing classical algorithms as benchmarks. Additionally, we critically discuss the current limitations of these methods and propose promising directions for future research. By offering a detailed overview of recent developments in self-supervised image denoising, this review serves as an invaluable resource for researchers and practitioners in the field, facilitating a deeper understanding of this emerging domain and inspiring further advancements.

To use reinforcement learning from human feedback (RLHF) in practical applications, it is crucial to learn reward models from diverse sources of human feedback and to consider human factors involved in providing feedback of different types. However, the systematic study of learning from diverse types of feedback is held back by limited standardized tooling available to researchers. To bridge this gap, we propose RLHF-Blender, a configurable, interactive interface for learning from human feedback. RLHF-Blender provides a modular experimentation framework and implementation that enables researchers to systematically investigate the properties and qualities of human feedback for reward learning. The system facilitates the exploration of various feedback types, including demonstrations, rankings, comparisons, and natural language instructions, as well as studies considering the impact of human factors on their effectiveness. We discuss a set of concrete research opportunities enabled by RLHF-Blender. More information is available at //rlhfblender.info/.

Advanced image tampering techniques are increasingly challenging the trustworthiness of multimedia, leading to the development of Image Manipulation Localization (IML). But what makes a good IML model? The answer lies in the way to capture artifacts. Exploiting artifacts requires the model to extract non-semantic discrepancies between manipulated and authentic regions, necessitating explicit comparisons between the two areas. With the self-attention mechanism, naturally, the Transformer should be a better candidate to capture artifacts. However, due to limited datasets, there is currently no pure ViT-based approach for IML to serve as a benchmark, and CNNs dominate the entire task. Nevertheless, CNNs suffer from weak long-range and non-semantic modeling. To bridge this gap, based on the fact that artifacts are sensitive to image resolution, amplified under multi-scale features, and massive at the manipulation border, we formulate the answer to the former question as building a ViT with high-resolution capacity, multi-scale feature extraction capability, and manipulation edge supervision that could converge with a small amount of data. We term this simple but effective ViT paradigm IML-ViT, which has significant potential to become a new benchmark for IML. Extensive experiments on five benchmark datasets verified our model outperforms the state-of-the-art manipulation localization methods.Code and models are available at \url{//github.com/SunnyHaze/IML-ViT}.

In policy learning for robotic manipulation, sample efficiency is of paramount importance. Thus, learning and extracting more compact representations from camera observations is a promising avenue. However, current methods often assume full observability of the scene and struggle with scale invariance. In many tasks and settings, this assumption does not hold as objects in the scene are often occluded or lie outside the field of view of the camera, rendering the camera observation ambiguous with regard to their location. To tackle this problem, we present BASK, a Bayesian approach to tracking scale-invariant keypoints over time. Our approach successfully resolves inherent ambiguities in images, enabling keypoint tracking on symmetrical objects and occluded and out-of-view objects. We employ our method to learn challenging multi-object robot manipulation tasks from wrist camera observations and demonstrate superior utility for policy learning compared to other representation learning techniques. Furthermore, we show outstanding robustness towards disturbances such as clutter, occlusions, and noisy depth measurements, as well as generalization to unseen objects both in simulation and real-world robotic experiments.

In recent years, more and more researchers have reflected on the undervaluation of emotion in data visualization and highlighted the importance of considering human emotion in visualization design. Meanwhile, an increasing number of studies have been conducted to explore emotion-related factors. However, so far, this research area is still in its early stages and faces a set of challenges, such as the unclear definition of key concepts, the insufficient justification of why emotion is important in visualization design, and the lack of characterization of the design space of affective visualization design. To address these challenges, first, we conducted a literature review and identified three research lines that examined both emotion and data visualization. We clarified the differences between these research lines and kept 109 papers that studied or discussed how data visualization communicates and influences emotion. Then, we coded the 109 papers in terms of how they justified the legitimacy of considering emotion in visualization design (i.e., why emotion is important) and identified five argumentative perspectives. Based on these papers, we also identified 61 projects that practiced affective visualization design. We coded these design projects in three dimensions, including design fields (where), design tasks (what), and design methods (how), to explore the design space of affective visualization design.

In pace with developments in the research field of artificial intelligence, knowledge graphs (KGs) have attracted a surge of interest from both academia and industry. As a representation of semantic relations between entities, KGs have proven to be particularly relevant for natural language processing (NLP), experiencing a rapid spread and wide adoption within recent years. Given the increasing amount of research work in this area, several KG-related approaches have been surveyed in the NLP research community. However, a comprehensive study that categorizes established topics and reviews the maturity of individual research streams remains absent to this day. Contributing to closing this gap, we systematically analyzed 507 papers from the literature on KGs in NLP. Our survey encompasses a multifaceted review of tasks, research types, and contributions. As a result, we present a structured overview of the research landscape, provide a taxonomy of tasks, summarize our findings, and highlight directions for future work.

Over the past few years, the rapid development of deep learning technologies for computer vision has greatly promoted the performance of medical image segmentation (MedISeg). However, the recent MedISeg publications usually focus on presentations of the major contributions (e.g., network architectures, training strategies, and loss functions) while unwittingly ignoring some marginal implementation details (also known as "tricks"), leading to a potential problem of the unfair experimental result comparisons. In this paper, we collect a series of MedISeg tricks for different model implementation phases (i.e., pre-training model, data pre-processing, data augmentation, model implementation, model inference, and result post-processing), and experimentally explore the effectiveness of these tricks on the consistent baseline models. Compared to paper-driven surveys that only blandly focus on the advantages and limitation analyses of segmentation models, our work provides a large number of solid experiments and is more technically operable. With the extensive experimental results on both the representative 2D and 3D medical image datasets, we explicitly clarify the effect of these tricks. Moreover, based on the surveyed tricks, we also open-sourced a strong MedISeg repository, where each of its components has the advantage of plug-and-play. We believe that this milestone work not only completes a comprehensive and complementary survey of the state-of-the-art MedISeg approaches, but also offers a practical guide for addressing the future medical image processing challenges including but not limited to small dataset learning, class imbalance learning, multi-modality learning, and domain adaptation. The code has been released at: //github.com/hust-linyi/MedISeg

Influenced by the stunning success of deep learning in computer vision and language understanding, research in recommendation has shifted to inventing new recommender models based on neural networks. In recent years, we have witnessed significant progress in developing neural recommender models, which generalize and surpass traditional recommender models owing to the strong representation power of neural networks. In this survey paper, we conduct a systematic review on neural recommender models, aiming to summarize the field to facilitate future progress. Distinct from existing surveys that categorize existing methods based on the taxonomy of deep learning techniques, we instead summarize the field from the perspective of recommendation modeling, which could be more instructive to researchers and practitioners working on recommender systems. Specifically, we divide the work into three types based on the data they used for recommendation modeling: 1) collaborative filtering models, which leverage the key source of user-item interaction data; 2) content enriched models, which additionally utilize the side information associated with users and items, like user profile and item knowledge graph; and 3) context enriched models, which account for the contextual information associated with an interaction, such as time, location, and the past interactions. After reviewing representative works for each type, we finally discuss some promising directions in this field, including benchmarking recommender systems, graph reasoning based recommendation models, and explainable and fair recommendations for social good.

In order to answer natural language questions over knowledge graphs, most processing pipelines involve entity and relation linking. Traditionally, entity linking and relation linking has been performed either as dependent sequential tasks or independent parallel tasks. In this paper, we propose a framework called "EARL", which performs entity linking and relation linking as a joint single task. EARL uses a graph connection based solution to the problem. We model the linking task as an instance of the Generalised Travelling Salesman Problem (GTSP) and use GTSP approximate algorithm solutions. We later develop EARL which uses a pair-wise graph-distance based solution to the problem.The system determines the best semantic connection between all keywords of the question by referring to a knowledge graph. This is achieved by exploiting the "connection density" between entity candidates and relation candidates. The "connection density" based solution performs at par with the approximate GTSP solution.We have empirically evaluated the framework on a dataset with 5000 questions. Our system surpasses state-of-the-art scores for entity linking task by reporting an accuracy of 0.65 to 0.40 from the next best entity linker.