Deciding how to deploy sensors optimally on a large, complex, and spatially extended structure is critical to ensuring that the surface pressure field is captured accurately for subsequent analysis and design. In some cases, downstream tasks such as the development of digital twins also require reconstruction of missing data. This paper presents a data-driven sparse sensor selection algorithm that aims to extract the most information from as few sensors as possible when reconstructing the aerodynamic characteristics of wind pressures over tall building structures. The algorithm first fits a set of basis functions to the training data, then applies a computationally efficient QR algorithm that ranks existing pressure sensors in order of importance based on state reconstruction in this tailored basis. The findings show that the proposed algorithm successfully reconstructs the aerodynamic characteristics of tall buildings from sparse measurement locations, generating stable and optimal solutions across a range of conditions. As a result, this study serves as a promising first step toward leveraging the success of data-driven and machine learning algorithms to supplement the traditional genetic algorithms currently used in wind engineering.
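The two steps described above (fit a tailored basis, then rank sensors with a pivoted QR) can be illustrated with a minimal sketch. It assumes the basis is a proper orthogonal decomposition (POD) computed by SVD and that the ranking is a greedy column-pivoted QR on the transposed basis; the abstract does not state either choice, so both are assumptions. The function names, the synthetic rank-3 data, and the sizes `n`, `m`, `r` are hypothetical.

```python
import numpy as np

def pod_basis(X, r):
    """Return the first r left singular vectors of the mean-subtracted snapshots."""
    mean = X.mean(axis=1, keepdims=True)
    U, _, _ = np.linalg.svd(X - mean, full_matrices=False)
    return U[:, :r], mean

def qr_pivot_sensors(Psi, p):
    """Greedy column-pivoted QR on Psi.T; returns p row indices (sensors), p <= rank."""
    A = Psi.T.copy()                      # shape (r, n): one column per candidate sensor
    chosen = []
    for _ in range(p):
        norms = np.linalg.norm(A, axis=0)
        j = int(np.argmax(norms))         # pivot: column with the largest remaining norm
        chosen.append(j)
        q = A[:, j] / norms[j]
        A -= np.outer(q, q @ A)           # remove the chosen direction from every column
    return chosen

def reconstruct(Psi, mean, sensors, y):
    """Least-squares fit of the basis coefficients from the sensor readings y."""
    a, *_ = np.linalg.lstsq(Psi[sensors, :], y - mean[sensors, 0], rcond=None)
    return Psi @ a + mean[:, 0]

# Synthetic stand-in for pressure snapshots: n taps, m snapshots, exact rank r (assumed).
rng = np.random.default_rng(0)
n, m, r = 200, 60, 3
modes = rng.standard_normal((n, r))
X = modes @ rng.standard_normal((r, m))

Psi, mean = pod_basis(X, r)
sensors = qr_pivot_sensors(Psi, r)

# Reconstruct an unseen field that lies in the same subspace from r sensor readings.
x_true = modes @ rng.standard_normal(r)
x_hat = reconstruct(Psi, mean, sensors, x_true[sensors])
rel_err = np.linalg.norm(x_hat - x_true) / np.linalg.norm(x_true)
print("selected sensors:", sensors, "relative error:", rel_err)
```

Because the synthetic data are exactly low rank and noise free, the reconstruction here is exact up to round-off. Measured pressure data would not be, and the number of sensors would normally be chosen by trading reconstruction error against cost.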

Related content

In the design of offshore jacket foundations, fatigue life is crucial. Post-weld treatment has been proposed to enhance the fatigue performance of welded joints, and high-frequency mechanical impact (HFMI) treatment in particular has been shown to improve it significantly. Automated HFMI treatment has improved quality assurance and can lead to cost-effective design when combined with accurate fatigue life prediction. However, the finite element method (FEM), commonly used for predicting fatigue life in complex or multi-axial joints, relies on a basic CAD depiction of the weld and fails to consider the actual weld geometry and defects. Including the actual weld geometry in the FE model improves the prediction of fatigue life and of possible crack locations, but requires a digital reconstruction of the weld. Current digital reconstruction methods are time-consuming or require specialised scanning equipment and potentially relocation of the component. The proposed framework instead uses an industrial manipulator combined with a line scanner to integrate digital reconstruction into the automated HFMI treatment setup. This approach applies standard image processing, simple filtering techniques, and non-linear optimisation to align and merge overlapping scans. A screened Poisson surface reconstruction finalises the 3D model by creating a meshed surface. The outcome is a generic, cost-effective, flexible, and rapid method for digital reconstruction of welded parts, aiding in component design, overall quality assurance, and documentation of the HFMI treatment.

This study proposes a self-learning algorithm for closed-loop cylinder wake control that targets lower drag and lower lift fluctuations under the additional challenge of sparse sensor information, taking deep reinforcement learning (DRL) as the starting point. DRL performance is significantly improved by lifting the sensor signals to dynamic features (DF), which predict future flow states. The resulting dynamic feature-based DRL (DF-DRL) automatically learns a feedback control in the plant without a dynamic model. Results show that the drag coefficient of the DF-DRL model is 25% lower than that of the vanilla model based on direct sensor feedback. More importantly, using only one surface pressure sensor, DF-DRL can reduce the drag coefficient by about 8% at Re = 100, a state-of-the-art result, and significantly mitigate lift coefficient fluctuations. Hence, DF-DRL allows sparse sensing of the flow to be deployed without degrading control performance. The method also shows good robustness at higher Reynolds numbers, reducing the drag coefficient by 32.2% and 46.55% at Re = 500 and 1000, respectively, which indicates its broad applicability. Since surface pressure is more straightforward to measure in realistic scenarios than flow velocity, this study provides a valuable reference for experimentally designing active flow control of a circular cylinder based on wall pressure signals, which is an essential step toward further developing intelligent control in realistic multi-input multi-output (MIMO) systems.

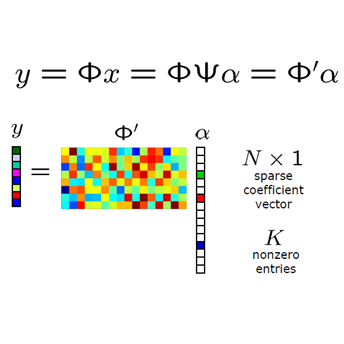

Linear inverse problems arise in diverse engineering fields, especially in signal and image reconstruction. The development of computational methods for linear inverse problems with sparsity is one of the recent trends in this field. The so-called optimal $k$-thresholding is a newly introduced method for sparse optimization and linear inverse problems. Compared to other sparsity-aware algorithms, the advantage of the optimal $k$-thresholding method is that it performs thresholding and error metric reduction simultaneously and thus works stably and robustly for solving medium-sized linear inverse problems. However, the runtime of this method is generally high when the problem is large. The purpose of this paper is to propose an acceleration strategy for this method. Specifically, we propose a heavy-ball-based optimal $k$-thresholding (HBOT) algorithm and its relaxed variants for sparse linear inverse problems. The convergence of these algorithms is shown under the restricted isometry property. In addition, the numerical performance of the heavy-ball-based relaxed optimal $k$-thresholding pursuit (HBROTP) has been evaluated, and simulations indicate that HBROTP is robust for signal and image reconstruction even in noisy environments.
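To make the heavy-ball idea concrete, the sketch below adds a momentum term to iterative hard thresholding. It is not the HBOT or HBROTP algorithm: the optimal $k$-thresholding step is replaced here by plain hard thresholding, which simply keeps the $k$ largest-magnitude entries. The step size `alpha`, the momentum weight `beta`, the iteration count, and the problem sizes are all assumed values chosen for illustration.

```python
import numpy as np

def hard_threshold(u, k):
    """Keep the k largest-magnitude entries of u and zero the rest."""
    out = np.zeros_like(u)
    keep = np.argsort(np.abs(u))[-k:]
    out[keep] = u[keep]
    return out

def heavy_ball_iht(A, y, k, alpha, beta, iters=200):
    """Hard thresholding of a gradient step plus a heavy-ball momentum term."""
    x_prev = np.zeros(A.shape[1])
    x = x_prev.copy()
    for _ in range(iters):
        grad_step = x + alpha * A.T @ (y - A @ x)   # gradient step on 0.5 * ||y - A x||^2
        momentum = beta * (x - x_prev)              # heavy-ball term from the last two iterates
        x_prev, x = x, hard_threshold(grad_step + momentum, k)
    return x

# Hypothetical test problem: a k-sparse vector observed through a Gaussian matrix.
rng = np.random.default_rng(0)
m, n, k = 60, 120, 4
A = rng.standard_normal((m, n)) / np.sqrt(m)
x_true = np.zeros(n)
x_true[rng.choice(n, size=k, replace=False)] = rng.standard_normal(k)
y = A @ x_true

alpha = 1.0 / np.linalg.norm(A, 2) ** 2   # conservative step size (assumed)
x_hat = heavy_ball_iht(A, y, k, alpha, beta=0.3)
print("nonzeros:", np.count_nonzero(x_hat),
      "relative error:", np.linalg.norm(x_hat - x_true) / np.linalg.norm(x_true))
```

Every iterate is $k$-sparse by construction. Whether the iteration recovers `x_true` depends on the matrix, the sparsity level, and the two parameters, so the printed error should be read as an experiment rather than a guarantee.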

Recently developed reduced-order modeling techniques aim to approximate nonlinear dynamical systems on low-dimensional manifolds learned from data. This is an effective approach for modeling dynamics in a post-transient regime where the effects of initial conditions and other disturbances have decayed. However, modeling transient dynamics near an underlying manifold, as needed for real-time control and forecasting applications, is complicated by the effects of fast dynamics and nonnormal sensitivity mechanisms. To begin to address these issues, we introduce a parametric class of nonlinear projections described by constrained autoencoder neural networks in which both the manifold and the projection fibers are learned from data. Our architecture uses invertible activation functions and biorthogonal weight matrices to ensure that the encoder is a left inverse of the decoder. We also introduce new dynamics-aware cost functions that promote learning of oblique projection fibers that account for fast dynamics and nonnormality. To demonstrate these methods and the specific challenges they address, we provide a detailed case study of a three-state model of vortex shedding in the wake of a bluff body immersed in a fluid, which has a two-dimensional slow manifold that can be computed analytically. In anticipation of future applications to high-dimensional systems, we also propose several techniques for constructing computationally efficient reduced-order models using our proposed nonlinear projection framework. This includes a novel sparsity-promoting penalty for the encoder that avoids detrimental weight matrix shrinkage via computation on the Grassmann manifold.
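The left-inverse property mentioned above can be checked numerically on a single layer. The sketch assumes a leaky ReLU as the invertible activation and a decoder layer of the form `Phi @ act(z) + b` paired with an encoder layer `act_inv(Psi.T @ (x - b))`. These forms, the slope value, and all names are illustrative assumptions rather than the paper's architecture. The only requirement used is biorthogonality, `Psi.T @ Phi = I`.

```python
import numpy as np

SLOPE = 0.5  # leaky-ReLU slope for negative inputs (assumed value)

def act(z):
    """Invertible activation: leaky ReLU."""
    return np.where(z >= 0, z, SLOPE * z)

def act_inv(y):
    """Exact inverse of the leaky ReLU above."""
    return np.where(y >= 0, y, y / SLOPE)

def biorthogonal_pair(n, r, rng):
    """Random decoder/encoder weights Phi, Psi with Psi.T @ Phi = I."""
    Phi = rng.standard_normal((n, r))
    Psi0 = rng.standard_normal((n, r))
    Psi = Psi0 @ np.linalg.inv(Phi.T @ Psi0)
    return Phi, Psi

def decoder(z, Phi, b):
    return Phi @ act(z) + b

def encoder(x, Psi, b):
    return act_inv(Psi.T @ (x - b))

rng = np.random.default_rng(0)
n, r = 6, 2
Phi, Psi = biorthogonal_pair(n, r, rng)
b = rng.standard_normal(n)

z = rng.standard_normal(r)
x = rng.standard_normal(n)

# Encoder is a left inverse of the decoder: encoding a decoded latent returns it.
z_back = encoder(decoder(z, Phi, b), Psi, b)

# Hence decoder(encoder(.)) is a projection: applying it twice changes nothing.
Px = decoder(encoder(x, Psi, b), Phi, b)
PPx = decoder(encoder(Px, Psi, b), Phi, b)
print(np.allclose(z_back, z), np.allclose(PPx, Px))
```

Since `Psi` need not equal `Phi`, the resulting projection is generally oblique: the direction along which a state is projected onto the decoder's image is set by `Psi`, which is what allows the projection fibers to be learned separately from the manifold.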

A sound field synthesis method that enhances perceptual quality is proposed. Sound field synthesis using multiple loudspeakers enables spatial audio reproduction over a broad listening area; however, synthesis errors at high frequencies, called spatial aliasing artifacts, are unavoidable. To minimize these artifacts, we propose a method based on the combination of pressure and amplitude matching. Given the properties of human hearing, synthesizing the amplitude distribution is sufficient for horizontal sound localization. Furthermore, a flat amplitude response should be synthesized as far as possible to avoid coloration. Therefore, we apply amplitude matching, a method that synthesizes the desired amplitude distribution with an arbitrary phase distribution, at high frequencies and conventional pressure matching at low frequencies. Results of numerical simulations and listening tests using a practical system indicate that the perceptual quality of the sound field synthesized by the proposed method is improved over that synthesized by pressure matching.
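The difference between the two matching criteria can be sketched at a single frequency. Pressure matching is written here as a regularized least-squares fit of the complex pressure at control points. Amplitude matching is approximated by a simple alternating scheme that fixes the current phase and re-solves the least-squares problem. The abstract does not specify its solver, so this scheme is an assumption, as are the free-field monopole model, the array geometry, the frequency, and the regularization weight `lam`.

```python
import numpy as np

def transfer_matrix(src, pts, k):
    """Free-field monopole transfer functions from sources to control points."""
    r = np.linalg.norm(pts[:, None, :] - src[None, :, :], axis=2)
    return np.exp(-1j * k * r) / (4 * np.pi * r)

def pressure_matching(G, p_des, lam):
    """Regularized least squares: match the complex pressure (amplitude and phase)."""
    A = G.conj().T @ G + lam * np.eye(G.shape[1])
    return np.linalg.solve(A, G.conj().T @ p_des)

def amplitude_matching(G, amp, lam, d0, iters=50):
    """Alternate between updating the free phase and re-solving the least-squares fit."""
    d = d0
    for _ in range(iters):
        phase = np.exp(1j * np.angle(G @ d))     # phase is unconstrained, take the current one
        d = pressure_matching(G, amp * phase, lam)
    return d

def amplitude_cost(G, d, amp, lam):
    """Amplitude-only error plus the same regularization term."""
    return np.sum((np.abs(G @ d) - amp) ** 2) + lam * np.sum(np.abs(d) ** 2)

# Hypothetical setup: 16 loudspeakers on a 1.5 m circle, 9 x 9 control points in a 1 m square.
angles = 2 * np.pi * np.arange(16) / 16
src = 1.5 * np.stack([np.cos(angles), np.sin(angles)], axis=1)
gx, gy = np.meshgrid(np.linspace(-0.5, 0.5, 9), np.linspace(-0.5, 0.5, 9))
pts = np.stack([gx.ravel(), gy.ravel()], axis=1)

k = 2 * np.pi * 2000.0 / 343.0                   # wavenumber at 2 kHz, c = 343 m/s
G = transfer_matrix(src, pts, k)
p_des = np.exp(-1j * k * pts[:, 0])              # desired field: unit-amplitude plane wave
amp, lam = np.abs(p_des), 1e-3

d_pm = pressure_matching(G, p_des, lam)
d_am = amplitude_matching(G, amp, lam, d0=d_pm)
print("amplitude cost, pressure matching: ", amplitude_cost(G, d_pm, amp, lam))
print("amplitude cost, amplitude matching:", amplitude_cost(G, d_am, amp, lam))
```

Each pass of the alternating scheme cannot increase the amplitude cost, so starting from the pressure-matching solution yields an amplitude error that is no worse than pressure matching alone. The price is that the synthesized phase is left uncontrolled, which is why the abstract restricts amplitude matching to high frequencies.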

Over the past decades, hemodynamics simulators have steadily evolved and have become tools of choice for studying cardiovascular systems in-silico. While such tools are routinely used to simulate whole-body hemodynamics from physiological parameters, solving the corresponding inverse problem of mapping waveforms back to plausible physiological parameters remains both promising and challenging. Motivated by advances in simulation-based inference (SBI), we cast this inverse problem as statistical inference. In contrast to alternative approaches, SBI provides *posterior distributions* for the parameters of interest, providing a *multi-dimensional* representation of uncertainty for *individual* measurements. We showcase this ability by performing an in-silico uncertainty analysis of five biomarkers of clinical interest comparing several measurement modalities. Beyond the corroboration of known facts, such as the feasibility of estimating heart rate, our study highlights the potential of estimating new biomarkers from standard-of-care measurements. SBI reveals practically relevant findings that cannot be captured by standard sensitivity analyses, such as the existence of sub-populations for which parameter estimation exhibits distinct uncertainty regimes. Finally, we study the gap between in-vivo and in-silico with the MIMIC-III waveform database and critically discuss how cardiovascular simulations can inform real-world data analysis.

Face recognition technology has advanced significantly in recent years, due largely to the availability of large and increasingly complex training datasets for use in deep learning models. These datasets, however, typically comprise images scraped from news sites or social media platforms and, therefore, have limited utility in more advanced security, forensics, and military applications. These applications require lower resolution, longer ranges, and elevated viewpoints. To meet these critical needs, we collected and curated the first and second subsets of a large multi-modal biometric dataset designed for use in the research and development (R&D) of biometric recognition technologies under extremely challenging conditions. Thus far, the dataset includes more than 350,000 still images and over 1,300 hours of video footage of approximately 1,000 subjects. To collect this data, we used Nikon DSLR cameras, a variety of commercial surveillance cameras, specialized long-range R&D cameras, and Group 1 and Group 2 UAV platforms. The goal is to support the development of algorithms capable of accurately recognizing people at ranges up to 1,000 m and from high angles of elevation. These advances will include improvements to the state of the art in face recognition and will support new research in the area of whole-body recognition using methods based on gait and anthropometry. This paper describes the methods used to collect and curate the dataset, and the dataset's characteristics at the current stage.

Explainable Artificial Intelligence (XAI) is transforming the field of Artificial Intelligence (AI) by enhancing the trust of end-users in machines. As the number of connected devices keeps growing, the Internet of Things (IoT) market needs to be trustworthy for end-users. However, the existing literature still lacks a systematic and comprehensive survey of the use of XAI for IoT. To bridge this gap, in this paper we review XAI frameworks with a focus on their characteristics and support for IoT. We illustrate the widely used XAI services for IoT applications, such as security enhancement, Internet of Medical Things (IoMT), Industrial IoT (IIoT), and Internet of City Things (IoCT). We also suggest implementation choices for XAI models over IoT systems in these applications with appropriate examples, and summarize the key inferences for future work. Moreover, we present cutting-edge developments in edge XAI structures and the support of sixth-generation (6G) communication services for IoT applications, along with key inferences. In a nutshell, this paper constitutes the first holistic compilation on the development of XAI-based frameworks tailored to the demands of future IoT use cases.

With the extremely rapid advances in remote sensing (RS) technology, a great quantity of Earth observation (EO) data featuring considerable and complicated heterogeneity is now readily available, which gives researchers an opportunity to tackle current geoscience applications in a fresh way. Research on multimodal RS data fusion, which uses EO data jointly, has made tremendous progress in recent years, yet the traditional algorithms developed so far inevitably hit a performance bottleneck because they cannot comprehensively analyse and interpret such strongly heterogeneous data. This limitation creates a strong demand for an alternative tool with more powerful processing capabilities. Deep learning (DL), as a cutting-edge technology, has achieved remarkable breakthroughs in numerous computer vision tasks owing to its impressive ability in data representation and reconstruction. Naturally, it has been successfully applied to multimodal RS data fusion, yielding great improvement compared with traditional methods. This survey aims to present a systematic overview of DL-based multimodal RS data fusion. More specifically, some essential knowledge about this topic is first given. Subsequently, a literature survey is conducted to analyse the trends of this field. Some prevalent sub-fields of multimodal RS data fusion are then reviewed in terms of the to-be-fused data modalities, i.e., spatiospectral, spatiotemporal, light detection and ranging-optical, synthetic aperture radar-optical, and RS-Geospatial Big Data fusion. Furthermore, we collect and summarize some valuable resources to support further development in multimodal RS data fusion. Finally, the remaining challenges and potential future directions are highlighted.

Multi-object tracking (MOT) is a crucial component of situational awareness in military defense applications. With the growing use of unmanned aerial systems (UASs), MOT methods for aerial surveillance are in high demand. Applying MOT in UASs presents specific challenges such as a moving sensor, changing zoom levels, dynamic backgrounds, illumination changes, obscurations, and small objects. In this work, we present a robust object tracking architecture designed to accommodate the noise encountered in real-time situations. We propose a kinematic prediction model, called Deep Extended Kalman Filter (DeepEKF), in which a sequence-to-sequence architecture is used to predict entity trajectories in latent space. DeepEKF utilizes a learned image embedding along with an attention mechanism trained to weight the importance of areas in an image to predict future states. For the visual scoring, we experiment with different similarity measures to calculate distance based on entity appearances, including a convolutional neural network (CNN) encoder pre-trained using Siamese networks. In initial evaluation experiments, we show that our method, which combines the scoring structures of the kinematic and visual models within an MHT framework, improves performance, especially in edge cases where entity motion is unpredictable or the data contain significant gaps between frames.