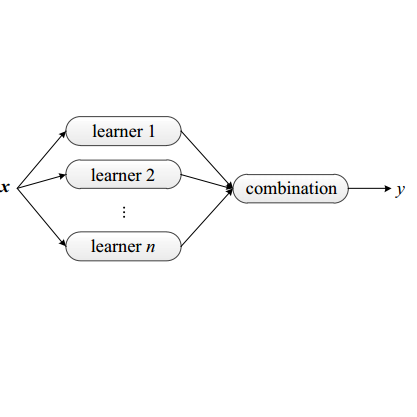

As an effective tool for eliciting the power of Large Language Models (LLMs), prompting has recently demonstrated unprecedented abilities across a variety of complex tasks. To further improve the performance, prompt ensemble has attracted substantial interest for tackling the hallucination and instability of LLMs. However, existing methods usually adopt a two-stage paradigm, which requires a pre-prepared set of prompts with substantial manual effort, and is unable to perform directed optimization for different weak learners. In this paper, we propose a simple, universal, and automatic method named PREFER (Pompt Ensemble learning via Feedback-Reflect-Refine) to address the stated limitations. Specifically, given the fact that weak learners are supposed to focus on hard examples during boosting, PREFER builds a feedback mechanism for reflecting on the inadequacies of existing weak learners. Based on this, the LLM is required to automatically synthesize new prompts for iterative refinement. Moreover, to enhance stability of the prompt effect evaluation, we propose a novel prompt bagging method involving forward and backward thinking, which is superior to majority voting and is beneficial for both feedback and weight calculation in boosting. Extensive experiments demonstrate that our PREFER achieves state-of-the-art performance in multiple types of tasks by a significant margin. We have made our code publicly available.

相關內容

Temporal motif mining is the task of finding the occurrences of subgraph patterns within a large input temporal graph that obey the specified structural and temporal constraints. Despite its utility in several critical application domains that demand high performance (e.g., detecting fraud in financial transaction graphs), the performance of existing software is limited on commercial hardware platforms, in that it runs for tens of hours. This paper presents Everest - a system that efficiently maps the workload of mining (supports both enumeration and counting) temporal motifs to the highly parallel GPU architecture. In particular, using an input temporal graph and a more expressive user-defined temporal motif query definition compared to prior works, Everest generates an execution plan and runtime primitives that optimize the workload execution by exploiting the high compute throughput of a GPU. Everest generates motif-specific mining code to reduce long-latency memory accesses and frequent thread divergence operations. Everest incorporates novel low-cost runtime mechanisms to enable load balancing to improve GPU hardware utilization. To support large graphs that do not fit on GPU memory, Everest also supports multi-GPU execution by intelligently partitioning the edge list that prevents inter-GPU communication. Everest hides the implementation complexity of presented optimizations away from the targeted system user for better usability. Our evaluation shows that, using proposed optimizations, Everest improves the performance of a baseline GPU implementation by 19x, on average.

In the realm of robotic intelligence, achieving efficient and precise RGB-D semantic segmentation is a key cornerstone. State-of-the-art multimodal semantic segmentation methods, primarily rooted in symmetrical skeleton networks, find it challenging to harmonize computational efficiency and precision. In this work, we propose AsymFormer, a novel network for real-time RGB-D semantic segmentation, which targets the minimization of superfluous parameters by optimizing the distribution of computational resources and introduces an asymmetrical backbone to allow for the effective fusion of multimodal features. Furthermore, we explore techniques to bolster network accuracy by redefining feature selection and extracting multi-modal self-similarity features without a substantial increase in the parameter count, thereby ensuring real-time execution on robotic platforms. Additionally, a Local Attention-Guided Feature Selection (LAFS) module is used to selectively fuse features from different modalities by leveraging their dependencies. Subsequently, a Cross-Modal Attention-Guided Feature Correlation Embedding (CMA) module is introduced to further extract cross-modal representations. This method is evaluated on NYUv2 and SUNRGBD datasets, with AsymFormer demonstrating competitive results with 52.0% mIoU on NYUv2 and 49.1% mIoU on SUNRGBD. Notably, AsymFormer achieves an inference speed of 65 FPS and after implementing mixed precision quantization, it attains an impressive inference speed of 79 FPS on RTX3090. This significantly outperforms existing multi-modal methods, thereby demonstrating that AsymFormer can strike a balance between high accuracy and efficiency for RGB-D semantic segmentation.

Integrated sensing and communication (ISAC) has the advantages of efficient spectrum utilization and low hardware cost. It is promising to be implemented in the fifth-generation-advanced (5G-A) and sixth-generation (6G) mobile communication systems, having the potential to be applied in intelligent applications requiring both communication and high-accurate sensing capabilities. As the fundamental technology of ISAC, ISAC signal directly impacts the performance of sensing and communication. This article systematically reviews the literature on ISAC signals from the perspective of mobile communication systems, including ISAC signal design, ISAC signal processing algorithms and ISAC signal optimization. We first review the ISAC signal design based on 5G, 5G-A and 6G mobile communication systems. Then, radar signal processing methods are reviewed for ISAC signals, mainly including the channel information matrix method, spectrum lines estimator method and super resolution method. In terms of signal optimization, we summarize peak-to-average power ratio (PAPR) optimization, interference management, and adaptive signal optimization for ISAC signals. This article may provide the guidelines for the research of ISAC signals in 5G-A and 6G mobile communication systems.

Language understanding is a multi-faceted cognitive capability, which the Natural Language Processing (NLP) community has striven to model computationally for decades. Traditionally, facets of linguistic intelligence have been compartmentalized into tasks with specialized model architectures and corresponding evaluation protocols. With the advent of large language models (LLMs) the community has witnessed a dramatic shift towards general purpose, task-agnostic approaches powered by generative models. As a consequence, the traditional compartmentalized notion of language tasks is breaking down, followed by an increasing challenge for evaluation and analysis. At the same time, LLMs are being deployed in more real-world scenarios, including previously unforeseen zero-shot setups, increasing the need for trustworthy and reliable systems. Therefore, we argue that it is time to rethink what constitutes tasks and model evaluation in NLP, and pursue a more holistic view on language, placing trustworthiness at the center. Towards this goal, we review existing compartmentalized approaches for understanding the origins of a model's functional capacity, and provide recommendations for more multi-faceted evaluation protocols.

Large Language Models (LLMs) have shown impressive abilities in various tasks. However, fundamentally improving them depends on high-quality datasets or computationally expensive fine-tuning. On the contrary, humans can easily improve themselves by self-thinking and memory, without external resources. In this paper, we propose a framework, MoT, to let the LLM self-improve through Memory-of-Thought, without annotated datasets and parameter updates. Specifically, MoT is divided into two stages: 1. before the test stage, the LLM pre-thinks on the unlabeled dataset and saves the high-confidence thoughts as external memory; 2. During the test stage, given a test question, the LLM recalls relevant memory to help itself reason and answer it. Experimental results show that MoT can help ChatGPT significantly improve its abilities in arithmetic reasoning, commonsense reasoning, factual reasoning, and natural language inference. Further analyses show that each component contributes critically to the improvements and MoT can lead to consistent improvements across various CoT methods and LLMs.

This paper pursues the insight that language models naturally enable an intelligent variation operator similar in spirit to evolutionary crossover. In particular, language models of sufficient scale demonstrate in-context learning, i.e. they can learn from associations between a small number of input patterns to generate outputs incorporating such associations (also called few-shot prompting). This ability can be leveraged to form a simple but powerful variation operator, i.e. to prompt a language model with a few text-based genotypes (such as code, plain-text sentences, or equations), and to parse its corresponding output as those genotypes' offspring. The promise of such language model crossover (which is simple to implement and can leverage many different open-source language models) is that it enables a simple mechanism to evolve semantically-rich text representations (with few domain-specific tweaks), and naturally benefits from current progress in language models. Experiments in this paper highlight the versatility of language-model crossover, through evolving binary bit-strings, sentences, equations, text-to-image prompts, and Python code. The conclusion is that language model crossover is a promising method for evolving genomes representable as text.

A typical neural speech enhancement (SE) approach mainly handles speech and noise mixtures, which is not optimal for singing voice enhancement scenarios. Music source separation (MSS) models treat vocals and various accompaniment components equally, which may reduce performance compared to the model that only considers vocal enhancement. In this paper, we propose a novel multi-band temporal-frequency neural network (MBTFNet) for singing voice enhancement, which particularly removes background music, noise and even backing vocals from singing recordings. MBTFNet combines inter and intra-band modeling for better processing of full-band signals. Dual-path modeling are introduced to expand the receptive field of the model. We propose an implicit personalized enhancement (IPE) stage based on signal-to-noise ratio (SNR) estimation, which further improves the performance of MBTFNet. Experiments show that our proposed model significantly outperforms several state-of-the-art SE and MSS models.

Neural Radiance Fields (NeRF) has received much attention recently due to its impressive capability to represent 3D scene and synthesize novel view images. Existing works usually assume that the input images are captured by a global shutter camera. Thus, rolling shutter (RS) images cannot be trivially applied to an off-the-shelf NeRF algorithm for novel view synthesis. Rolling shutter effect would also affect the accuracy of the camera pose estimation (e.g. via COLMAP), which further prevents the success of NeRF algorithm with RS images. In this paper, we propose Unrolling Shutter Bundle Adjusted Neural Radiance Fields (USB-NeRF). USB-NeRF is able to correct rolling shutter distortions and recover accurate camera motion trajectory simultaneously under the framework of NeRF, by modeling the physical image formation process of a RS camera. Experimental results demonstrate that USB-NeRF achieves better performance compared to prior works, in terms of RS effect removal, novel view image synthesis as well as camera motion estimation. Furthermore, our algorithm can also be used to recover high-fidelity high frame-rate global shutter video from a sequence of RS images.

Named entity recognition (NER) in Chinese is essential but difficult because of the lack of natural delimiters. Therefore, Chinese Word Segmentation (CWS) is usually considered as the first step for Chinese NER. However, models based on word-level embeddings and lexicon features often suffer from segmentation errors and out-of-vocabulary (OOV) words. In this paper, we investigate a Convolutional Attention Network called CAN for Chinese NER, which consists of a character-based convolutional neural network (CNN) with local-attention layer and a gated recurrent unit (GRU) with global self-attention layer to capture the information from adjacent characters and sentence contexts. Also, compared to other models, not depending on any external resources like lexicons and employing small size of char embeddings make our model more practical. Extensive experimental results show that our approach outperforms state-of-the-art methods without word embedding and external lexicon resources on different domain datasets including Weibo, MSRA and Chinese Resume NER dataset.

Recurrent neural nets (RNN) and convolutional neural nets (CNN) are widely used on NLP tasks to capture the long-term and local dependencies, respectively. Attention mechanisms have recently attracted enormous interest due to their highly parallelizable computation, significantly less training time, and flexibility in modeling dependencies. We propose a novel attention mechanism in which the attention between elements from input sequence(s) is directional and multi-dimensional (i.e., feature-wise). A light-weight neural net, "Directional Self-Attention Network (DiSAN)", is then proposed to learn sentence embedding, based solely on the proposed attention without any RNN/CNN structure. DiSAN is only composed of a directional self-attention with temporal order encoded, followed by a multi-dimensional attention that compresses the sequence into a vector representation. Despite its simple form, DiSAN outperforms complicated RNN models on both prediction quality and time efficiency. It achieves the best test accuracy among all sentence encoding methods and improves the most recent best result by 1.02% on the Stanford Natural Language Inference (SNLI) dataset, and shows state-of-the-art test accuracy on the Stanford Sentiment Treebank (SST), Multi-Genre natural language inference (MultiNLI), Sentences Involving Compositional Knowledge (SICK), Customer Review, MPQA, TREC question-type classification and Subjectivity (SUBJ) datasets.