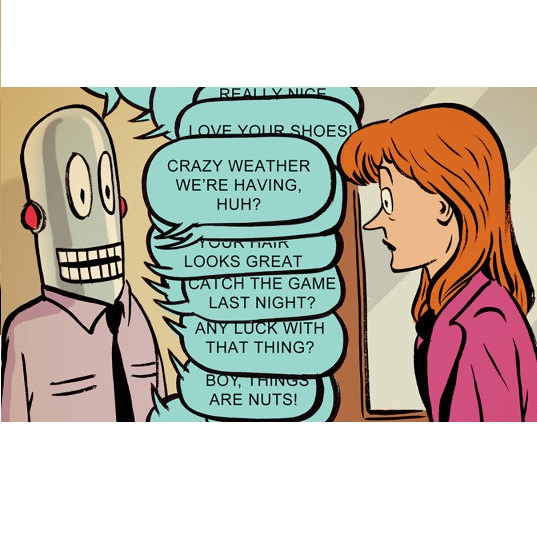

Artificial intelligence (AI) has the potential to transform education through its power to uncover insights from massive data about student learning patterns. However, concerns about the ethics and trustworthiness of AI have been raised and remain unresolved. Prominent ethical issues in high school AI education include data privacy, information leakage, abusive language, and fairness. This paper describes technological components that were built to address ethical and trustworthiness concerns in a multi-modal collaborative platform (called ALLURE chatbot) for high school students to collaborate with AI to solve the Rubik's cube. In data privacy, we want to ensure that the informed consent of children, parents, and teachers is at the center of any data that is managed. Since children are involved, we want to ensure that language, whether textual, audio, or visual, is acceptable from both users and the AI, and that the system can steer interaction away from dangerous situations. In information management, we also want to ensure that the system, while learning to improve over time, does not leak information about users from one group to another.

Related content

Adaptiveness is a key principle in information processing including statistics and machine learning. We investigate the usefulness of adaptive methods in the framework of asymptotic binary hypothesis testing, when each hypothesis represents asymptotically many independent instances of a quantum channel, and the tests are based on using the unknown channel and observing outputs. Unlike the familiar setting of quantum states as hypotheses, there is a fundamental distinction between adaptive and non-adaptive strategies with respect to the channel uses, and we introduce a number of further variants of the discrimination tasks by imposing different restrictions on the test strategies. The following results are obtained: (1) We prove that for classical-quantum channels, adaptive and non-adaptive strategies lead to the same error exponents both in the symmetric (Chernoff) and asymmetric (Hoeffding, Stein) settings. (2) We exhibit the first separation between adaptive and non-adaptive symmetric hypothesis testing exponents for quantum channels, which we derive from a general lower bound on the error probability for non-adaptive strategies; the concrete example we analyze is a pair of entanglement-breaking channels. (3) We prove, in some sense generalizing the previous statement, that for general channels adaptive strategies restricted to classical feed-forward and product state channel inputs are not superior in the asymptotic limit to non-adaptive product state strategies. (4) As an application of our findings, we address the discrimination power of an arbitrary quantum channel and show that adaptive strategies with classical feedback and no quantum memory at the input do not increase the discrimination power of the channel beyond non-adaptive tensor product input strategies.

Artificial intelligence (AI) models are increasingly used in the medical domain. However, as medical data is highly sensitive, special precautions to ensure its protection are required. The gold standard for privacy preservation is the introduction of differential privacy (DP) to model training. Prior work indicates that DP has negative implications on model accuracy and fairness, which are unacceptable in medicine and represent a main barrier to the widespread use of privacy-preserving techniques. In this work, we evaluated the effect of privacy-preserving training of AI models regarding accuracy and fairness compared to non-private training. For this, we used two datasets: (1) a large dataset (N=193,311) of high-quality clinical chest radiographs, and (2) a dataset (N=1,625) of 3D abdominal computed tomography (CT) images, with the task of classifying the presence of pancreatic ductal adenocarcinoma (PDAC). Both were retrospectively collected and manually labeled by experienced radiologists. We then compared non-private deep convolutional neural networks (CNNs) and privacy-preserving (DP) models with respect to privacy-utility trade-offs, measured as area under the receiver-operator-characteristic curve (AUROC), and privacy-fairness trade-offs, measured as Pearson's r or Statistical Parity Difference. We found that, while privacy-preserving training yielded lower accuracy, it largely did not amplify discrimination against age, sex or co-morbidity. Our study shows that -- under the challenging realistic circumstances of a real-life clinical dataset -- the privacy-preserving training of diagnostic deep learning models is possible with excellent diagnostic accuracy and fairness.
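As a rough illustration of the Statistical Parity Difference named above, the sketch below computes the gap in positive-prediction rates between two groups. It uses the common textbook definition, which may differ in detail from the one used in the study; the function name, the group labels, and the toy data are all hypothetical.

```python
def statistical_parity_difference(predictions, groups, protected, reference):
    """Positive-prediction rate of `protected` minus that of `reference`.

    A value of 0 means both groups receive positive predictions at the
    same rate. All names and the sign convention are assumptions.
    """
    def positive_rate(group):
        selected = [p for p, g in zip(predictions, groups) if g == group]
        if not selected:
            raise ValueError(f"no samples for group {group!r}")
        return sum(selected) / len(selected)

    return positive_rate(protected) - positive_rate(reference)


# Toy data (hypothetical): binary predictions and a binary group attribute.
preds = [1, 0, 1, 1, 0, 1, 0, 0]
groups = ["f", "f", "f", "f", "m", "m", "m", "m"]
spd = statistical_parity_difference(preds, groups, protected="f", reference="m")
print(spd)
```

A value near zero would indicate that the model assigns positive predictions to both groups at similar rates; the metric says nothing about whether those predictions are correct.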

Compared to other techniques, particle swarm optimization (PSO) is more frequently utilized because of its ease of use and low variability. However, it is complicated to find the best possible solution in the search space in large-scale optimization problems. Moreover, changing algorithm variables does not influence algorithm convergence much. The PSO algorithm can be combined with other algorithms and use their advantages and operators to solve this problem. Therefore, this paper proposes the onlooker multi-parent crossover discrete particle swarm optimization (OMPCDPSO). To improve the efficiency of the discrete PSO (DPSO) algorithm, we utilized multi-parent crossover on the best solutions. We performed an independent and intensive neighborhood search using the onlooker bees of the bee algorithm. The onlooker bees and the crossover perform local search (exploitation) and global search (exploration), and each of these searches is carried out among the best solutions (employed bees). The proposed algorithm was tested on the allocation problem, which is an NP-hard optimization problem. We also used two types of simulated data to test the scalability and complexity of the improved algorithm. In addition, fourteen 2D test functions, thirteen 30D test functions, and twenty IEEE CEC2005 benchmark functions were used to test the efficiency of OMPCDPSO. To test OMPCDPSO's performance, we compared it to four new binary optimization algorithms and three classic ones. The results show that OMPCDPSO had high capability and performed better than the other algorithms: it outperforms them on 36 of 47 test functions (76.60%). The onlooker bees and multi-parent operators significantly impact the algorithm's performance.

In this study, we tackle a growing concern around the safety and ethical use of large language models (LLMs). Despite their potential, these models can be tricked into producing harmful or unethical content through various sophisticated methods, including 'jailbreaking' techniques and targeted manipulation. Our work zeroes in on a specific issue: to what extent LLMs can be led astray by asking them to generate responses that are instruction-centric such as a pseudocode, a program or a software snippet as opposed to vanilla text. To investigate this question, we introduce TechHazardQA, a dataset containing complex queries which should be answered in both text and instruction-centric formats (e.g., pseudocodes), aimed at identifying triggers for unethical responses. We query a series of LLMs -- Llama-2-13b, Llama-2-7b, Mistral-V2 and Mistral 8X7B -- and ask them to generate both text and instruction-centric responses. For evaluation we report the harmfulness score metric as well as judgements from GPT-4 and humans. Overall, we observe that asking LLMs to produce instruction-centric responses enhances the unethical response generation by ~2-38% across the models. As an additional objective, we investigate the impact of model editing using the ROME technique, which further increases the propensity for generating undesirable content. In particular, asking edited LLMs to generate instruction-centric responses further increases the unethical response generation by ~3-16% across the different models.

In operations research (OR), predictive models often encounter out-of-distribution (OOD) scenarios where the data distribution differs from the training data distribution. In recent years, neural networks (NNs) have been gaining traction in OR for their exceptional performance in fields such as image classification. However, NNs tend to make confident yet incorrect predictions when confronted with OOD data. Uncertainty estimation offers a solution to overconfident models, communicating when the output should (not) be trusted. Hence, reliable uncertainty quantification in NNs is crucial in the OR domain. Deep ensembles, composed of multiple independent NNs, have emerged as a promising approach, offering not only strong predictive accuracy but also reliable uncertainty estimation. However, their deployment is challenging due to substantial computational demands. Recent fundamental research has proposed more efficient NN ensembles, namely the snapshot, batch, and multi-input multi-output ensemble. This study is the first to provide a comprehensive comparison of a single NN, a deep ensemble, and the three efficient NN ensembles. In addition, we propose a Diversity Quality metric to quantify the ensembles' performance on the in-distribution and OOD sets in one single metric. The OR case study discusses industrial parts classification to identify and manage spare parts, important for timely maintenance of industrial plants. The results highlight the batch ensemble as a cost-effective and competitive alternative to the deep ensemble. It outperforms the deep ensemble in both uncertainty and accuracy while exhibiting a training time speedup of 7x, a test time speedup of 8x, and 9x memory savings.
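To make the idea of ensemble-based uncertainty concrete, here is a minimal sketch of how a deep ensemble's prediction is commonly formed: the members' softmax outputs are averaged, and the entropy of the averaged distribution serves as an uncertainty score. This is the generic recipe, not the study's implementation or its Diversity Quality metric; the function names and the logits are made up for illustration.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    peak = max(logits)
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def ensemble_predict(member_logits):
    """Average member probabilities and return (mean_probs, entropy).

    `member_logits` holds one logit vector per ensemble member for a
    single input. Higher entropy means less confidence in the prediction.
    """
    member_probs = [softmax(logits) for logits in member_logits]
    n_members = len(member_probs)
    n_classes = len(member_probs[0])
    mean_probs = [
        sum(p[c] for p in member_probs) / n_members for c in range(n_classes)
    ]
    entropy = -sum(p * math.log(p) for p in mean_probs if p > 0)
    return mean_probs, entropy


# Hypothetical logits: members agree (in-distribution-like input) ...
agreeing = [[4.0, 0.0, 0.0], [3.5, 0.2, 0.1], [4.2, -0.1, 0.0]]
# ... versus members each confidently picking a different class (OOD-like input).
disagreeing = [[4.0, 0.0, 0.0], [0.0, 4.0, 0.0], [0.0, 0.0, 4.0]]

probs_agree, entropy_agree = ensemble_predict(agreeing)
probs_disagree, entropy_disagree = ensemble_predict(disagreeing)
print(entropy_agree, entropy_disagree)
```

In this toy case each disagreeing member is individually confident, yet the averaged distribution is uniform, so the ensemble reports high uncertainty where a single network would not.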

Clustering of publication networks is an efficient way to obtain classifications of large collections of research publications. Such classifications can be used to, e.g., detect research topics, normalize citation relations, or explore the publication output of a unit. Citation networks can be created using a variety of approaches. Best practices to obtain classifications using clustering have been investigated, in particular the performance of different publication-publication relatedness measures. However, different approaches to normalization of citation relations have not been evaluated to the same extent. In this paper, we evaluate five approaches to normalization of direct citation relations with respect to clustering solution quality in four data sets. A sixth approach is evaluated using no normalization. To assess the quality of clustering solutions, we use three measures. (1) We compare the clustering solution to the reference lists of a set of publications using the Adjusted Rand Index. (2) Using the Silhouette width measure, we quantify to which extent the publications have relations to other clusters than the one they have been assigned to. (3) We propose a measure that captures publications that have probably been inaccurately assigned. The results clearly show that normalization is preferred over unnormalized direct citation relations. Furthermore, the results indicate that the fractional normalization approach, which can be considered the standard approach, causes inaccurate assignments. The geometric normalization approach performs similarly to the fractional approach regarding Adjusted Rand Index and Silhouette width but leads to fewer inaccurate assignments. We therefore believe that the geometric approach may be preferred over the fractional approach.

In many communication contexts, the capabilities of the involved actors cannot be known beforehand, whether it is a cell, a plant, an insect, or even a life form unknown to Earth. Regardless of the recipient, the message space and time scale could be too fast, too slow, too large, or too small and may never be decoded. Therefore, it pays to devise a way to encode messages agnostic of space and time scales. We propose the use of fractal functions as self-executable infinite-frequency carriers for sending messages, given their properties of structural self-similarity and scale invariance. We call it 'fractal messaging'. Starting from a spatial embedding, we introduce a framework for a space-time scale-free messaging approach to this challenge. When considering a space and time-agnostic framework for message transmission, it would be interesting to encode a message such that it could be decoded at several spatio-temporal scales. Hence, the core idea of the framework proposed herein is to encode a binary message as waves along infinitely many frequencies (in power-like distributions) and amplitudes, transmit such a message, and then decode and reproduce it. To do so, the components of the Weierstrass function, a known fractal, are used as carriers of the message. Each component will have its amplitude modulated to embed the binary stream, allowing for a space-time-agnostic approach to messaging.
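The amplitude-modulation idea can be sketched with a truncated Weierstrass-type sum, W(x) = Σ aⁿ cos(bⁿ π x), in which bit n scales the amplitude of component n. Everything specific here is an assumption made for illustration and not taken from the paper: the parameters a = 0.5 and b = 3, the rule that a 0 bit halves the amplitude, the finite number of components, and decoding by projecting the sampled signal onto each cosine carrier over one fundamental period.

```python
import math

A, B = 0.5, 3  # assumed Weierstrass parameters (0 < A < 1, B an odd integer)


def encode(bits, num_samples=512):
    """Sample a truncated Weierstrass-type sum over x in [0, 2).

    Component n has amplitude A**n for a 1 bit and 0.5 * A**n for a 0 bit.
    num_samples must exceed twice the highest frequency B**(len(bits) - 1).
    """
    samples = []
    for j in range(num_samples):
        x = 2.0 * j / num_samples
        value = 0.0
        for n, bit in enumerate(bits):
            amplitude = A**n * (1.0 if bit else 0.5)
            value += amplitude * math.cos(B**n * math.pi * x)
        samples.append(value)
    return samples


def decode(samples, num_bits):
    """Recover each component's amplitude by projection onto its carrier."""
    m = len(samples)
    bits = []
    for n in range(num_bits):
        k = B**n  # integer frequency of component n
        projection = (2.0 / m) * sum(
            s * math.cos(k * math.pi * 2.0 * j / m) for j, s in enumerate(samples)
        )
        # Threshold halfway between the two assumed amplitude levels.
        bits.append(1 if projection > 0.75 * A**n else 0)
    return bits


message = [1, 0, 1, 1, 0, 1]
recovered = decode(encode(message), len(message))
print(recovered)
```

Because b is an integer, all carriers are harmonics of one fundamental period and are mutually orthogonal over it, which is what makes the projection step work in this sketch. A finite sum is only an approximation of the fractal carrier described above.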

Marginal structural models have been increasingly used by analysts in recent years to account for confounding bias in studies with time-varying treatments. The parameters of these models are often estimated using inverse probability of treatment weighting. To ensure that the estimated weights adequately control confounding, it is possible to check for residual imbalance between treatment groups in the weighted data. Several balance metrics have been developed and compared in the cross-sectional case but have not yet been evaluated and compared in longitudinal studies with time-varying treatment. We first extended the definition of several balance metrics to the case of a time-varying treatment, with or without censoring. We then compared the performance of these balance metrics in a simulation study by assessing the strength of the association between their estimated level of imbalance and bias. We found that the Mahalanobis balance performed best. Finally, the method was illustrated for estimating the cumulative effect of statin exposure over one year on the risk of cardiovascular disease or death in people aged 65 and over in population-wide administrative data. This illustration confirms the feasibility of employing our proposed metrics in large databases with multiple time-points.

Curiosity-driven learning has shown significant positive effects on students' learning experiences and outcomes. But despite this importance, reports show that children lack this skill, especially in formal educational settings. To address this challenge, we propose an 8-session workshop that aims to enhance children's curiosity through training a set of specific metacognitive skills we hypothesize are involved in its process. Our workshop contains animated videos presenting declarative knowledge about curiosity and the said metacognitive skills, as well as practice sessions to apply these skills (e.g. expressing uncertainty, formulating questions, etc.) during a reading-comprehension task, using a web platform designed for this study. We conduct a pilot study with 15 primary school students, aged between 8 and 10. Our first results show a positive impact on children's metacognitive efficiency and their ability to express their curiosity through question-asking behaviors.

Surrogate modelling techniques have seen growing attention in recent years when applied to both modelling and optimisation of industrial design problems. These techniques are highly relevant when assessing the performance of a particular design carries a high cost, as the overall cost can be mitigated via the construction of a model to be queried in lieu of the available high-cost source. The construction of these models can sometimes employ other sources of information which are both cheaper and less accurate. The existence of these sources however poses the question of which sources should be used when constructing a model. Recent studies have attempted to characterise harmful data sources to guide practitioners in choosing when to ignore a certain source. These studies have done so in a synthetic setting, characterising sources using a large amount of data that is not available in practice. Some of these studies have also been shown to potentially suffer from bias in the benchmarks used in the analysis. In this study, we present a characterisation of harmful low-fidelity sources using only the limited data available to train a surrogate model. We employ recently developed benchmark filtering techniques to conduct a bias-free assessment, providing objectively varied benchmark suites of different sizes for future research. Analysing one of these benchmark suites with the technique known as Instance Space Analysis, we provide an intuitive visualisation of when a low-fidelity source should be used and use this analysis to provide guidelines that can be used in an applied industrial setting.