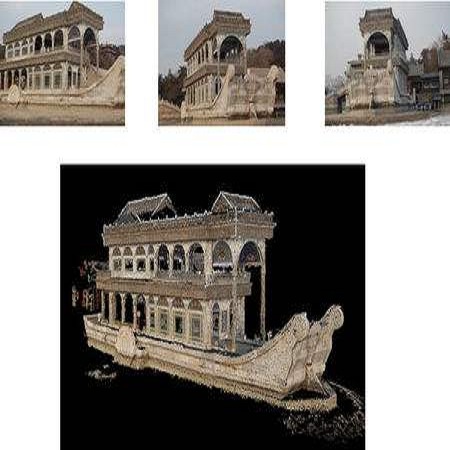

Reconstructing 3D shapes from single-view images has been a long-standing research problem. In this paper, we present DISN, a Deep Implicit Surface Network which can generate a high-quality, detail-rich 3D mesh from a 2D image by predicting the underlying signed distance fields. In addition to utilizing global image features, DISN predicts the projected location for each 3D point on the 2D image, and extracts local features from the image feature maps. Combining global and local features significantly improves the accuracy of the signed distance field prediction, especially for the detail-rich areas. To the best of our knowledge, DISN is the first method that consistently captures details such as holes and thin structures present in 3D shapes from single-view images. DISN achieves state-of-the-art single-view reconstruction performance on a variety of shape categories reconstructed from both synthetic and real images. Code is available at //github.com/xharlie/DISN. The supplementary material can be found at //xharlie.github.io/images/neurips_2019_supp.pdf
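The local-feature step described above (project each 3D point onto the image, then read a feature at that location) can be illustrated with a minimal sketch. It assumes a simple pinhole camera and a single-channel feature map stored as a list of rows; the function names and the parameters `focal`, `cx`, and `cy` are hypothetical and are not taken from the DISN code base, which predicts the camera pose with a network.

```python
import math


def project_point(point, focal, cx, cy):
    """Pinhole projection of a camera-space 3D point (x, y, z) to pixel (u, v)."""
    x, y, z = point
    if z <= 0:
        raise ValueError("point must lie in front of the camera (z > 0)")
    return focal * x / z + cx, focal * y / z + cy


def bilinear_sample(feature_map, u, v):
    """Bilinearly interpolate a 2D grid (list of rows) at continuous pixel (u, v)."""
    h, w = len(feature_map), len(feature_map[0])
    # Clamp to the grid so the four neighbouring cells always exist.
    u = min(max(u, 0.0), w - 1.0)
    v = min(max(v, 0.0), h - 1.0)
    x0, y0 = int(math.floor(u)), int(math.floor(v))
    x1, y1 = min(x0 + 1, w - 1), min(y0 + 1, h - 1)
    du, dv = u - x0, v - y0
    top = (1 - du) * feature_map[y0][x0] + du * feature_map[y0][x1]
    bottom = (1 - du) * feature_map[y1][x0] + du * feature_map[y1][x1]
    return (1 - dv) * top + dv * bottom


def local_feature(point, feature_map, focal, cx, cy):
    """Project a 3D point and read the interpolated feature at its pixel."""
    u, v = project_point(point, focal, cx, cy)
    return bilinear_sample(feature_map, u, v)
```

In a full pipeline this per-point feature would be combined with a global image feature before predicting the signed distance; that combination is not shown here.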

Related content

Feature extraction has always been a critical component of the computer vision field. More recently, state-of-the-art computer vision algorithms have incorporated Deep Neural Networks (DNN) in feature extracting roles, creating Deep Convolutional Activation Features (DeCAF). The transferability of DNN knowledge domains has enabled the wide use of pretrained DNN feature extraction for applications with novel object classes, especially those with limited training data. This study analyzes the general discriminability of novel object visual appearances encoded into the DeCAF space of six of the leading visual recognition DNN architectures. The results of this study characterize the Mahalanobis distances and cosine similarities between DeCAF object manifolds across two visual object tracking benchmark data sets. The backgrounds surrounding each object are also included as object classes in the manifold analysis, providing a wider range of novel classes. This study found that different network architectures led to different network feature focuses that must be considered in the network selection process. These results are generated from the VOT2015 and UAV123 benchmark data sets; however, the proposed methods can be applied to efficiently compare estimated network performance characteristics for any labeled visual data set.
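The two measures named above can be sketched on small feature vectors. This is an illustration only: the Mahalanobis distance here assumes a diagonal covariance (per-dimension variances), which is a simplification that may differ from the study's actual setup, and all function names are hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two feature vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def mean_and_variance(samples):
    """Per-dimension mean and (population) variance of a list of vectors."""
    n, dim = len(samples), len(samples[0])
    mean = [sum(s[i] for s in samples) / n for i in range(dim)]
    var = [sum((s[i] - mean[i]) ** 2 for s in samples) / n for i in range(dim)]
    return mean, var


def mahalanobis_diagonal(x, mean, var, eps=1e-12):
    """Mahalanobis distance from x to a class, ASSUMING a diagonal covariance."""
    return math.sqrt(
        sum((xi - mi) ** 2 / (vi + eps) for xi, mi, vi in zip(x, mean, var))
    )
```

A class whose features vary widely along one dimension will report a smaller Mahalanobis distance along that dimension than the Euclidean distance would, which is why the measure suits comparing manifolds of different spread.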

Existing works on motion deblurring either ignore the effects of depth-dependent blur or assume a multi-layered scene in which each layer is modeled as a fronto-parallel plane. In this work, we consider the case of 3D scenes with piecewise planar structure, i.e., a scene that can be modeled as a combination of multiple planes with arbitrary orientations. We first propose an approach for estimating the normal of a planar scene from a single motion-blurred observation. We then develop an algorithm for automatic recovery of the number of planes, the parameters corresponding to each plane, and the camera motion from a single motion-blurred image of a multiplanar 3D scene. Finally, we propose a first-of-its-kind approach to recover the planar geometry and latent image of the scene by adopting an alternating minimization framework built on our findings. Experiments on synthetic and real data reveal that our proposed method achieves state-of-the-art results.

Most current neural networks for reconstructing surfaces from point clouds ignore sensor poses and only operate on raw point locations. Sensor visibility, however, holds meaningful information regarding space occupancy and surface orientation. In this paper, we present two simple ways to augment raw point clouds with visibility information, so it can directly be leveraged by surface reconstruction networks with minimal adaptation. Our proposed modifications consistently improve the accuracy of generated surfaces as well as the generalization ability of the networks to unseen shape domains. Our code and data are available at //github.com/raphaelsulzer/dsrv-data.

3D Morphable Model (3DMM) based methods have achieved great success in recovering 3D face shapes from single-view images. However, the facial textures recovered by such methods lack the fidelity exhibited in the input images. Recent work demonstrates high-quality facial texture recovery with generative networks trained on a large-scale database of high-resolution UV maps of face textures, which is hard to prepare and not publicly available. In this paper, we introduce a method to reconstruct 3D facial shapes with high-fidelity textures from single-view images in-the-wild, without the need to capture a large-scale face texture database. The main idea is to refine the initial texture generated by a 3DMM based method with facial details from the input image. To this end, we propose to use graph convolutional networks to reconstruct the detailed colors for the mesh vertices instead of reconstructing the UV map. Experiments show that our method can generate high-quality results and outperforms state-of-the-art methods in both qualitative and quantitative comparisons.

Image captioning has attracted ever-increasing research attention in the multimedia community. To this end, most cutting-edge works rely on an encoder-decoder framework with attention mechanisms, which has achieved remarkable progress. However, such a framework does not consider scene concepts when attending to visual information, which leads to sentence bias in caption generation and degrades performance accordingly. We argue that such scene concepts capture higher-level visual semantics and serve as an important cue in describing images. In this paper, we propose a novel scene-based factored attention module for image captioning. Specifically, the proposed module first embeds the scene concepts into factored weights explicitly and attends to the visual information extracted from the input image. Then, an adaptive LSTM is used to generate captions for specific scene types. Experimental results on the Microsoft COCO benchmark show that the proposed scene-based attention module substantially improves model performance, outperforming state-of-the-art approaches under various evaluation metrics.

In this paper, we propose a novel generative adversarial network (GAN) for 3D point cloud generation, which is called tree-GAN. To achieve state-of-the-art performance for multi-class 3D point cloud generation, a tree-structured graph convolution network (TreeGCN) is introduced as a generator for tree-GAN. Because TreeGCN performs graph convolutions within a tree, it can use ancestor information to boost the representation power for features. To evaluate GANs for 3D point clouds accurately, we develop a novel evaluation metric called Frechet point cloud distance (FPD). Experimental results demonstrate that the proposed tree-GAN outperforms state-of-the-art GANs in terms of both conventional metrics and FPD, and can generate point clouds for different semantic parts without prior knowledge.
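The abstract does not give the FPD formula. As a rough illustration of the general idea behind Fréchet-style metrics, the sketch below computes the squared Fréchet distance between two Gaussians fitted to feature statistics, under the simplifying assumption that both covariances are diagonal. The function name is hypothetical, and the choice of feature extractor is left out entirely.

```python
import math


def frechet_distance_diagonal(mean1, var1, mean2, var2):
    """Squared Frechet distance between two Gaussians with DIAGONAL covariances.

    With diagonal covariances the trace term
    Tr(C1 + C2 - 2 (C1 C2)^(1/2)) reduces to sum((sqrt(v1) - sqrt(v2))^2).
    """
    mean_term = sum((a - b) ** 2 for a, b in zip(mean1, mean2))
    cov_term = sum(
        (math.sqrt(a) - math.sqrt(b)) ** 2 for a, b in zip(var1, var2)
    )
    return mean_term + cov_term
```

Identical statistics give a distance of zero, and the value grows as either the means or the spreads of the two feature distributions move apart.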

In this paper, we propose a new deep learning based dense monocular SLAM method. Compared to existing methods, the proposed framework constructs a dense 3D model via a sparse-to-dense mapping using learned surface normals. With single-view learned depth estimation as a prior for monocular visual odometry, we obtain both accurate positioning and high-quality depth reconstruction. The depth and normal are predicted by a single network trained in a tightly coupled manner. Experimental results show that our method significantly improves the performance of visual tracking and depth prediction in comparison to the state-of-the-art in deep monocular dense SLAM.

Single-image piece-wise planar 3D reconstruction aims to simultaneously segment plane instances and recover 3D plane parameters from an image. Most recent approaches leverage convolutional neural networks (CNNs) and achieve promising results. However, these methods are limited to detecting a fixed number of planes in a certain learned order. To tackle this problem, we propose a novel two-stage method based on associative embedding, inspired by its recent success in instance segmentation. In the first stage, we train a CNN to map each pixel to an embedding space where pixels from the same plane instance have similar embeddings. Then, the plane instances are obtained by grouping the embedding vectors in planar regions via an efficient mean shift clustering algorithm. In the second stage, we estimate the parameters for each plane instance by considering both pixel-level and instance-level consistencies. With the proposed method, we are able to detect an arbitrary number of planes. Extensive experiments on public datasets validate the effectiveness and efficiency of our method. Furthermore, our method runs at 30 fps at test time and could thus facilitate many real-time applications such as visual SLAM and human-robot interaction. Code is available at //github.com/svip-lab/PlanarReconstruction.
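The grouping step above can be illustrated with a plain mean shift on low-dimensional vectors. This is a minimal sketch with a flat kernel and a brute-force neighbour search, not the efficient variant the abstract refers to; the function name, the `bandwidth` parameter, and the mode-merging rule are assumptions made for illustration.

```python
import math


def mean_shift(points, bandwidth, iterations=50, tol=1e-6):
    """Flat-kernel mean shift: returns (labels, centres) for a list of points."""
    modes = [list(p) for p in points]
    for _ in range(iterations):
        max_shift = 0.0
        new_modes = []
        for m in modes:
            # Move each mode to the mean of the data points within the bandwidth.
            neighbours = [p for p in points if math.dist(m, p) <= bandwidth]
            if not neighbours:  # defensive guard; keep the mode where it is
                new_modes.append(m)
                continue
            centre = [sum(c) / len(neighbours) for c in zip(*neighbours)]
            max_shift = max(max_shift, math.dist(m, centre))
            new_modes.append(centre)
        modes = new_modes
        if max_shift < tol:
            break
    # Merge modes that converged to (nearly) the same location.
    centres, labels = [], []
    for m in modes:
        for idx, c in enumerate(centres):
            if math.dist(m, c) <= bandwidth / 2:
                labels.append(idx)
                break
        else:
            centres.append(m)
            labels.append(len(centres) - 1)
    return labels, centres
```

Because the number of clusters falls out of the data and the bandwidth, nothing in this procedure fixes the number of groups in advance, which matches the abstract's point about detecting an arbitrary number of planes.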

With the advent of deep neural networks, learning-based approaches for 3D reconstruction have gained popularity. However, unlike for images, in 3D there is no canonical representation which is both computationally and memory efficient yet allows for representing high-resolution geometry of arbitrary topology. Many of the state-of-the-art learning-based 3D reconstruction approaches can hence only represent very coarse 3D geometry or are limited to a restricted domain. In this paper, we propose occupancy networks, a new representation for learning-based 3D reconstruction methods. Occupancy networks implicitly represent the 3D surface as the continuous decision boundary of a deep neural network classifier. In contrast to existing approaches, our representation encodes a description of the 3D output at infinite resolution without excessive memory footprint. We validate that our representation can efficiently encode 3D structure and can be inferred from various kinds of input. Our experiments demonstrate competitive results, both qualitatively and quantitatively, for the challenging tasks of 3D reconstruction from single images, noisy point clouds and coarse discrete voxel grids. We believe that occupancy networks will become a useful tool in a wide variety of learning-based 3D tasks.
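To make the "surface as decision boundary" idea concrete, the sketch below uses an analytic occupancy function for a sphere as a stand-in for a trained classifier, then locates the surface along a ray by bisection on the 0.5 threshold. Everything here (the sphere, the `sharpness` parameter, the function names) is a hypothetical illustration and not the occupancy networks implementation.

```python
import math


def sphere_occupancy(point, radius=1.0, sharpness=10.0):
    """Stand-in for a learned classifier: probability that `point` is inside."""
    z = sharpness * (radius - math.sqrt(sum(c * c for c in point)))
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def find_surface_along_ray(occupancy_fn, origin, direction,
                           t_inside, t_outside, threshold=0.5, steps=40):
    """Bisect for the ray parameter t where occupancy crosses `threshold`.

    Assumes origin + t_inside * direction is occupied and
    origin + t_outside * direction is empty.
    """
    def at(t):
        return [o + t * d for o, d in zip(origin, direction)]

    lo, hi = t_inside, t_outside
    for _ in range(steps):
        mid = 0.5 * (lo + hi)
        if occupancy_fn(at(mid)) >= threshold:
            lo = mid  # still inside: the surface lies further along the ray
        else:
            hi = mid  # already outside: the surface lies closer
    return 0.5 * (lo + hi)
```

Since the function can be queried at any continuous location, the recovered surface position is limited only by the number of bisection steps rather than by a fixed grid resolution.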

In this paper, the problem of describing the visual contents of a video sequence with natural language is addressed. Unlike previous video captioning work, which mainly exploits the cues of video contents to make a language description, we propose a reconstruction network (RecNet) with a novel encoder-decoder-reconstructor architecture, which leverages both the forward (video to sentence) and backward (sentence to video) flows for video captioning. Specifically, the encoder-decoder makes use of the forward flow to produce the sentence description based on the encoded video semantic features. Two types of reconstructors are customized to employ the backward flow and reproduce the video features based on the hidden state sequence generated by the decoder. The generation loss yielded by the encoder-decoder and the reconstruction loss introduced by the reconstructor are used jointly to train the proposed RecNet in an end-to-end fashion. Experimental results on benchmark datasets demonstrate that the proposed reconstructor can boost the encoder-decoder models and lead to significant gains in video caption accuracy.