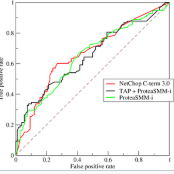

Distributed statistical analyses provide a promising approach for privacy protection when analysing data distributed over several databases. They bring the analysis to the data and not the data to the analysis. The analyst receives anonymous summary statistics, which are combined into an aggregated result. We are interested in calculating the AUC of a prediction score with a distributed approach, without getting to know the data of the individual subjects distributed over different databases. We use DataSHIELD as the technology to carry out distributed analyses and use newly developed algorithms to perform the validation of the prediction score. Calibration can easily be implemented in the distributed setting, but discrimination, represented by the ROC curve and its AUC, is challenging. We base our approach on the ROC-GLM algorithm as well as on ideas of differential privacy. The proposed algorithms are evaluated in a simulation study. A real-world application is described: the audit use case of DIFUTURE (Medical Informatics Initiative), with the goal of validating a treatment prediction rule for patients with newly diagnosed multiple sclerosis.
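For orientation, the quantity being validated can be written down for the pooled (non-distributed) case. The sketch below computes the empirical AUC as the Mann-Whitney statistic. It is not the ROC-GLM algorithm and involves neither DataSHIELD nor differential privacy; the function name `empirical_auc` is a hypothetical helper. It needs every individual score and label in one place, which is exactly what the distributed setting rules out.

```python
def empirical_auc(scores, labels):
    """Empirical AUC as the Mann-Whitney statistic.

    Returns the fraction of (positive, negative) pairs in which the
    positive case has the higher score; ties count as one half.
    Assumes labels are coded 1 (positive) and 0 (negative).
    """
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative case")

    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


if __name__ == "__main__":
    scores = [0.9, 0.4, 0.6, 0.1]
    labels = [1, 1, 0, 0]
    print(empirical_auc(scores, labels))
```

The pairwise comparison makes the privacy problem visible: each term depends on two individual-level scores, so the statistic cannot be assembled from simple per-site sums in the way a calibration summary can.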

Related content

Carbon futures have recently emerged as a novel financial asset in trading markets such as the European Union and China. Monitoring the trend of the carbon price has become critical for both national policy-making and industrial manufacturing planning. However, various geopolitical, social, and economic factors can exert substantial influence on the carbon price. Due to its volatility and non-linearity, accurately predicting carbon prices is generally a difficult task. In this study, we propose to improve carbon price forecasting with several novel practices. First, we collect various influencing factors, including commodity prices, export volumes such as oil and natural gas, and prosperity indices. Then we select the most significant factors and disclose their optimal grouping for explainability. Finally, we use the Sparse Quantile Group Lasso and Adaptive Sparse Quantile Group Lasso for robust price predictions. We demonstrate through extensive experimental studies that our proposed methods outperform existing ones. Also, our quantile predictions provide a complete profile of future prices at different levels, which better describes the distributions of the carbon market.
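Quantile predictions of this kind are usually fitted by minimizing the pinball (quantile) loss. The sketch below shows only that loss, as a minimal illustration; the sparse and group lasso penalties named in the abstract are omitted, and `pinball_loss` is a hypothetical helper name.

```python
def pinball_loss(y_true, y_pred, tau):
    """Mean pinball loss for quantile level tau in (0, 1).

    Under-prediction (y > q) is weighted by tau and over-prediction
    (y < q) by 1 - tau, so the minimizer is the tau-quantile.
    """
    if not 0.0 < tau < 1.0:
        raise ValueError("tau must lie strictly between 0 and 1")
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("inputs must be non-empty and of equal length")

    total = 0.0
    for y, q in zip(y_true, y_pred):
        diff = y - q
        total += tau * diff if diff >= 0 else (tau - 1.0) * diff
    return total / len(y_true)


if __name__ == "__main__":
    prices = [80.0, 82.5, 79.0]
    forecast_90 = [85.0, 85.0, 85.0]  # made-up forecasts of the 0.9 quantile
    print(pinball_loss(prices, forecast_90, tau=0.9))
```

Evaluating the loss at several values of `tau` gives one score per predicted quantile, which is how a profile of future prices at different levels can be assessed.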

Data sharing remains a major hindering factor when it comes to adopting emerging AI technologies in general, but particularly in the agri-food sector. Protectiveness of data is natural in this setting; data is a precious commodity for data owners, which, if used properly, can provide them with useful insights on operations and processes, leading to a competitive advantage. Unfortunately, novel AI technologies often require large amounts of training data in order to perform well, something that in many scenarios is unrealistic. However, recent machine learning advances, e.g. federated learning and privacy-preserving technologies, can offer a solution to this issue by providing the infrastructure and underpinning technologies needed to use data from various sources to train models without ever sharing the raw data themselves. In this paper, we propose a technical solution based on federated learning that uses decentralized data (i.e. data that are not exchanged or shared but remain with the owners) to develop a cross-silo machine learning model that facilitates data sharing across supply chains. We focus our data sharing proposition on improving production optimization through soybean yield prediction, and provide potential use-cases in which such methods can assist in other problem settings. Our results demonstrate that our approach not only performs better than each of the models trained on an individual data source, but also that data sharing in the agri-food sector can be enabled via alternatives to data exchange, whilst also helping to adopt emerging machine learning technologies to boost productivity.
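The abstract does not say which aggregation rule is used. As an illustration of how a cross-silo model can be built without exchanging raw data, the sketch below shows federated averaging (FedAvg), one common choice: each data owner trains locally and sends only model parameters, and the server averages them weighted by local sample counts. The function name and the toy numbers are assumptions.

```python
def federated_average(client_weights, client_sizes):
    """Sample-size-weighted average of client parameter vectors (FedAvg).

    client_weights: list of equal-length lists of floats, one per client.
    client_sizes:   number of local training examples held by each client.
    Only parameters and counts are shared, never the raw data.
    """
    if not client_weights or len(client_weights) != len(client_sizes):
        raise ValueError("need one size per client and at least one client")
    total = sum(client_sizes)
    if total <= 0:
        raise ValueError("total sample count must be positive")

    dim = len(client_weights[0])
    averaged = [0.0] * dim
    for weights, size in zip(client_weights, client_sizes):
        if len(weights) != dim:
            raise ValueError("all clients must share the same model shape")
        for i in range(dim):
            averaged[i] += weights[i] * size / total
    return averaged


if __name__ == "__main__":
    # Two hypothetical data owners with 1 and 3 local examples.
    print(federated_average([[1.0, 2.0], [3.0, 4.0]], [1, 3]))
```

In a full training loop this step would repeat each round: the server broadcasts the averaged parameters, each owner continues training on its own data, and the updated parameters are averaged again.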

Grey-box fuzzing is the lightweight approach of choice for finding bugs in sequential programs. It provides a balance between efficiency and effectiveness by conducting a biased random search over the domain of program inputs using a feedback function from observed test executions. For distributed system testing, however, the state of practice is represented today only by black-box tools that do not attempt to infer and exploit any knowledge of the system's past behaviours to guide the search for bugs. In this work, we present Mallory: the first framework for grey-box fuzz-testing of distributed systems. Unlike popular black-box distributed system fuzzers, such as Jepsen, that search for bugs by randomly injecting network partitions and node faults or by following human-defined schedules, Mallory is adaptive. It uses a novel metric to learn how to maximize the number of observed system behaviours by choosing different sequences of faults, thus increasing the likelihood of finding new bugs. The key enablers for our approach are the new ideas of timeline-driven testing and timeline abstraction that provide the feedback function guiding a biased random search for failures. Mallory dynamically constructs Lamport timelines of the system behaviour, abstracts these timelines into happens-before summaries, and introduces faults guided by its real-time observation of the summaries. We have evaluated Mallory on a diverse set of widely-used industrial distributed systems. Compared to the state-of-the-art black-box fuzzer Jepsen, Mallory explores more behaviours and takes less time to find bugs. Mallory discovered 22 zero-day bugs (of which 18 were confirmed by developers), including 10 new vulnerabilities, in rigorously-tested distributed systems such as Braft, Dqlite, and Redis. Six new CVEs have been assigned.

A number of information retrieval studies have been done to assess which statistical techniques are appropriate for comparing systems. However, these studies are focused on TREC-style experiments, which typically have fewer than 100 topics. There is no similar line of work for large search and recommendation experiments; such studies typically have thousands of topics or users and much sparser relevance judgements, so it is not clear if recommendations for analyzing traditional TREC experiments apply to these settings. In this paper, we empirically study the behavior of significance tests with large search and recommendation evaluation data. Our results show that the Wilcoxon and sign tests show significantly higher Type-1 error rates for large sample sizes than the bootstrap, randomization and t-tests, which were more consistent with the expected error rate. While the statistical tests displayed differences in their power for smaller sample sizes, they showed no difference in their power for large sample sizes. We recommend that the sign and Wilcoxon tests not be used to analyze large-scale evaluation results. Our results demonstrate that with Top-N recommendation and large search evaluation data, most tests would have a 100% chance of finding statistically significant results. Therefore, the effect size should be used to determine practical or scientific significance.
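One of the tests compared here, the paired randomization test, is simple to state in code. The sketch below is a generic Monte Carlo version for per-topic (or per-user) scores of two systems, not the study's own implementation. The function name, the default number of permutations, and the fixed seed are assumptions.

```python
import random


def paired_randomization_test(scores_a, scores_b, n_permutations=10000, seed=0):
    """Two-sided paired randomization (sign-flip) test on the mean difference.

    Under the null hypothesis the two systems are exchangeable per topic,
    so each paired difference is equally likely to carry either sign.
    Returns a Monte Carlo p-value with the usual +1 correction.
    """
    if len(scores_a) != len(scores_b) or not scores_a:
        raise ValueError("inputs must be non-empty and of equal length")

    rng = random.Random(seed)
    diffs = [a - b for a, b in zip(scores_a, scores_b)]
    n = len(diffs)
    observed = abs(sum(diffs) / n)

    extreme = 0
    for _ in range(n_permutations):
        # Flip the sign of each paired difference with probability 1/2.
        total = sum(d if rng.random() < 0.5 else -d for d in diffs)
        if abs(total / n) >= observed:
            extreme += 1
    return (extreme + 1) / (n_permutations + 1)


if __name__ == "__main__":
    system_a = [0.50 + 0.01 * i for i in range(30)]
    system_b = [x - 0.10 for x in system_a]  # made-up scores for illustration
    print(paired_randomization_test(system_a, system_b, n_permutations=2000))
```

With thousands of topics or users, even a tiny mean difference can drive this p-value toward its minimum, which is why the abstract points to effect size for judging practical significance.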

Accurately estimating the probability of failure for safety-critical systems is important for certification. Estimation is often challenging due to high-dimensional input spaces, dangerous test scenarios, and computationally expensive simulators; thus, efficient estimation techniques are important to study. This work reframes the problem of black-box safety validation as a Bayesian optimization problem and introduces an algorithm, Bayesian safety validation, that iteratively fits a probabilistic surrogate model to efficiently predict failures. The algorithm is designed to search for failures, compute the most-likely failure, and estimate the failure probability over an operating domain using importance sampling. We introduce a set of three acquisition functions that focus on reducing uncertainty by covering the design space, optimizing the analytically derived failure boundaries, and sampling the predicted failure regions. Mainly concerned with systems that only output a binary indication of failure, we show that our method also works well in cases where more output information is available. Results show that Bayesian safety validation achieves a better estimate of the probability of failure using orders of magnitude fewer samples and performs well across various safety validation metrics. We demonstrate the algorithm on three test problems with access to ground truth and on a real-world safety-critical subsystem common in autonomous flight: a neural network-based runway detection system. This work is open sourced and currently being used to supplement the FAA certification process of the machine learning components for an autonomous cargo aircraft.
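The importance-sampling step mentioned above can be illustrated on a toy problem. The sketch below estimates a rare failure probability for a one-dimensional standard normal input by sampling from a proposal shifted towards the failure region and reweighting by the density ratio. It does not include the surrogate model or the acquisition functions; the failure threshold, the proposal, and all function names are assumptions chosen for illustration.

```python
import math
import random


def normal_pdf(x, mean, std):
    """Density of a normal distribution with the given mean and std."""
    z = (x - mean) / std
    return math.exp(-0.5 * z * z) / (std * math.sqrt(2.0 * math.pi))


def failure_probability_is(is_failure, n_samples=20000, proposal_mean=3.0, seed=0):
    """Importance-sampling estimate of P(failure) for x ~ N(0, 1).

    Samples are drawn from the proposal N(proposal_mean, 1), which puts
    more mass where failures occur; each failing sample is weighted by
    the ratio of the nominal density to the proposal density.
    """
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_samples):
        x = rng.gauss(proposal_mean, 1.0)
        if is_failure(x):
            total += normal_pdf(x, 0.0, 1.0) / normal_pdf(x, proposal_mean, 1.0)
    return total / n_samples


if __name__ == "__main__":
    estimate = failure_probability_is(lambda x: x > 3.0)
    exact = 0.5 * math.erfc(3.0 / math.sqrt(2.0))  # closed form for this toy case
    print(estimate, exact)
```

Plain Monte Carlo with the same number of samples would see only a few dozen failures at this probability level, so the reweighted proposal gives a far more stable estimate for the same budget.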

With the breakthrough of AlphaGo, deep reinforcement learning has become a recognized technique for solving sequential decision-making problems. Despite its reputation, the data inefficiency caused by its trial-and-error learning mechanism makes deep reinforcement learning hard to apply in practice across a wide range of areas. Plenty of methods have been developed for sample-efficient deep reinforcement learning, such as environment modeling, experience transfer, and distributed modifications, amongst which distributed deep reinforcement learning has shown its potential in various applications, such as human-computer gaming and intelligent transportation. In this paper, we summarize the state of this exciting field by comparing the classical distributed deep reinforcement learning methods and studying important components to achieve efficient distributed learning, covering single-player single-agent distributed deep reinforcement learning to the most complex multiple-player multiple-agent distributed deep reinforcement learning. Furthermore, we review recently released toolboxes that help to realize distributed deep reinforcement learning without many modifications of their non-distributed versions. By analyzing their strengths and weaknesses, a multi-player multi-agent distributed deep reinforcement learning toolbox is developed and released, which is further validated on Wargame, a complex environment, showing the usability of the proposed toolbox for multiple-player and multiple-agent distributed deep reinforcement learning under complex games. Finally, we try to point out challenges and future trends, hoping this brief review can provide a guide or a spark for researchers who are interested in distributed deep reinforcement learning.

In large-scale systems there are fundamental challenges when centralised techniques are used for task allocation. The number of interactions is limited by resource constraints such as those on computation, storage, and network communication. We can increase scalability by implementing the system as a distributed task-allocation system, sharing tasks across many agents. However, this also increases the resource cost of communications and synchronisation, and is difficult to scale. In this paper we present four algorithms to solve these problems. The combination of these algorithms enables each agent to improve its task allocation strategy through reinforcement learning, while changing how much it explores the system in response to how optimal it believes its current strategy is, given its past experience. We focus on distributed agent systems where the agents' behaviours are constrained by resource usage limits, limiting agents to local rather than system-wide knowledge. We evaluate these algorithms in a simulated environment where agents are given a task composed of multiple subtasks that must be allocated to other agents with differing capabilities, which then carry out those tasks. We also simulate real-life system effects such as networking instability. Our solution is shown to solve the task allocation problem to within 6.7% of the theoretical optimum within the system configurations considered. It provides 5x better performance recovery over no-knowledge retention approaches when system connectivity is impacted, and is tested against systems of up to 100 agents with less than a 9% impact on the algorithms' performance.

This paper aims to mitigate straggler effects in synchronous distributed learning for multi-agent reinforcement learning (MARL) problems. Stragglers arise frequently in a distributed learning system, due to the existence of various system disturbances such as slow-downs or failures of compute nodes and communication bottlenecks. To resolve this issue, we propose a coded distributed learning framework, which speeds up the training of MARL algorithms in the presence of stragglers, while maintaining the same accuracy as the centralized approach. As an illustration, a coded distributed version of the multi-agent deep deterministic policy gradient (MADDPG) algorithm is developed and evaluated. Different coding schemes, including maximum distance separable (MDS) code, random sparse code, replication-based code, and regular low density parity check (LDPC) code, are also investigated. Simulations in several multi-robot problems demonstrate the promising performance of the proposed framework.

This paper focuses on the expected difference in a borrower's repayment when there is a change in the lender's credit decisions. Classical estimators overlook the confounding effects, and hence the estimation error can be substantial. As such, we propose another approach to construct the estimators such that the error can be greatly reduced. The proposed estimators are shown to be unbiased, consistent, and robust through a combination of theoretical analysis and numerical testing. Moreover, we compare the power of estimating the causal quantities between the classical estimators and the proposed estimators. The comparison is tested across a wide range of models, including linear regression models, tree-based models, and neural network-based models, under different simulated datasets that exhibit different levels of causality, different degrees of nonlinearity, and different distributional properties. Most importantly, we apply our approaches to a large observational dataset provided by a global technology firm that operates in both the e-commerce and the lending business. We find that the relative reduction of estimation error is strikingly large if the causal effects are accounted for correctly.

The demand for artificial intelligence has grown significantly over the last decade and this growth has been fueled by advances in machine learning techniques and the ability to leverage hardware acceleration. However, in order to increase the quality of predictions and render machine learning solutions feasible for more complex applications, a substantial amount of training data is required. Although small machine learning models can be trained with modest amounts of data, the input for training larger models such as neural networks grows exponentially with the number of parameters. Since the demand for processing training data has outpaced the increase in computation power of computing machinery, there is a need for distributing the machine learning workload across multiple machines, and turning the centralized into a distributed system. These distributed systems present new challenges, first and foremost the efficient parallelization of the training process and the creation of a coherent model. This article provides an extensive overview of the current state-of-the-art in the field by outlining the challenges and opportunities of distributed machine learning over conventional (centralized) machine learning, discussing the techniques used for distributed machine learning, and providing an overview of the systems that are available.