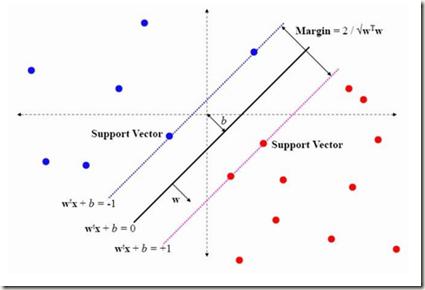

Emotional voice conversion (EVC) aims to convert the emotional state of an utterance from one emotion to another while preserving the linguistic content and speaker identity. Current studies mostly focus on modelling the conversion between several specific emotion types. Synthesizing mixed effects of emotions could help us to better imitate human emotions, and facilitate more natural human-computer interaction. In this research, for the first time, we formulate and study the research problem of mixed emotion synthesis for EVC. We regard emotional styles as a series of emotion attributes that are learnt from a ranking-based support vector machine (SVM). Each attribute measures the degree of the relevance between the speech recordings belonging to different emotion types. We then incorporate those attributes into a sequence-to-sequence (seq2seq) emotional voice conversion framework. During the training, the framework not only learns to characterize the input emotional style, but also quantifies its relevance with other emotion types. At run-time, various emotional mixtures can be produced by manually defining the attributes. We conduct objective and subjective evaluations to validate our idea in terms of mixed emotion synthesis. We further build an emotion triangle as an application of emotion transition. Codes and speech samples are publicly available.

相關內容

The underlying dynamics and patterns of 3D surface meshes deforming over time can be discovered by unsupervised learning, especially autoencoders, which calculate low-dimensional embeddings of the surfaces. To study the deformation patterns of unseen shapes by transfer learning, we want to train an autoencoder that can analyze new surface meshes without training a new network. Here, most state-of-the-art autoencoders cannot handle meshes of different connectivity and therefore have limited to no generalization capacities to new meshes. Also, reconstruction errors strongly increase in comparison to the errors for the training shapes. To address this, we propose a novel spectral CoSMA (Convolutional Semi-Regular Mesh Autoencoder) network. This patch-based approach is combined with a surface-aware training. It reconstructs surfaces not presented during training and generalizes the deformation behavior of the surfaces' patches. The novel approach reconstructs unseen meshes from different datasets in superior quality compared to state-of-the-art autoencoders that have been trained on these shapes. Our transfer learning errors on unseen shapes are 40% lower than those from models learned directly on the data. Furthermore, baseline autoencoders detect deformation patterns of unseen mesh sequences only for the whole shape. In contrast, due to the employed regional patches and stable reconstruction quality, we can localize where on the surfaces these deformation patterns manifest.

We propose to use L\'evy {\alpha}-stable distributions for constructing priors for Bayesian inverse problems. The construction is based on Markov fields with stable-distributed increments. Special cases include the Cauchy and Gaussian distributions, with stability indices {\alpha} = 1, and {\alpha} = 2, respectively. Our target is to show that these priors provide a rich class of priors for modelling rough features. The main technical issue is that the {\alpha}-stable probability density functions do not have closed-form expressions in general, and this limits their applicability. For practical purposes, we need to approximate probability density functions through numerical integration or series expansions. Current available approximation methods are either too time-consuming or do not function within the range of stability and radius arguments needed in Bayesian inversion. To address the issue, we propose a new hybrid approximation method for symmetric univariate and bivariate {\alpha}-stable distributions, which is both fast to evaluate, and accurate enough from a practical viewpoint. Then we use approximation method in the numerical implementation of {\alpha}-stable random field priors. We demonstrate the applicability of the constructed priors on selected Bayesian inverse problems which include the deconvolution problem, and the inversion of a function governed by an elliptic partial differential equation. We also demonstrate hierarchical {\alpha}-stable priors in the one-dimensional deconvolution problem. We employ maximum-a-posterior-based estimation at all the numerical examples. To that end, we exploit the limited-memory BFGS and its bounded variant for the estimator.

In the cybersecurity setting, defenders are often at the mercy of their detection technologies and subject to the information and experiences that individual analysts have. In order to give defenders an advantage, it is important to understand an attacker's motivation and their likely next best action. As a first step in modeling this behavior, we introduce a security game framework that simulates interplay between attackers and defenders in a noisy environment, focusing on the factors that drive decision making for attackers and defenders in the variants of the game with full knowledge and observability, knowledge of the parameters but no observability of the state (``partial knowledge''), and zero knowledge or observability (``zero knowledge''). We demonstrate the importance of making the right assumptions about attackers, given significant differences in outcomes. Furthermore, there is a measurable trade-off between false-positives and true-positives in terms of attacker outcomes, suggesting that a more false-positive prone environment may be acceptable under conditions where true-positives are also higher.

Intelligent agents have great potential as facilitators of group conversation among older adults. However, little is known about how to design agents for this purpose and user group, especially in terms of agent embodiment. To this end, we conducted a mixed methods study of older adults' reactions to voice and body in a group conversation facilitation agent. Two agent forms with the same underlying artificial intelligence (AI) and voice system were compared: a humanoid robot and a voice assistant. One preliminary study (total n=24) and one experimental study comparing voice and body morphologies (n=36) were conducted with older adults and an experienced human facilitator. Findings revealed that the artificiality of the agent, regardless of its form, was beneficial for the socially uncomfortable task of conversation facilitation. Even so, talkative personality types had a poorer experience with the "bodied" robot version. Design implications and supplementary reactions, especially to agent voice, are also discussed.

The task of emotion recognition in conversations (ERC) benefits from the availability of multiple modalities, as offered, for example, in the video-based MELD dataset. However, only a few research approaches use both acoustic and visual information from the MELD videos. There are two reasons for this: First, label-to-video alignments in MELD are noisy, making those videos an unreliable source of emotional speech data. Second, conversations can involve several people in the same scene, which requires the detection of the person speaking the utterance. In this paper we demonstrate that by using recent automatic speech recognition and active speaker detection models, we are able to realign the videos of MELD, and capture the facial expressions from uttering speakers in 96.92% of the utterances provided in MELD. Experiments with a self-supervised voice recognition model indicate that the realigned MELD videos more closely match the corresponding utterances offered in the dataset. Finally, we devise a model for emotion recognition in conversations trained on the face and audio information of the MELD realigned videos, which outperforms state-of-the-art models for ERC based on vision alone. This indicates that active speaker detection is indeed effective for extracting facial expressions from the uttering speakers, and that faces provide more informative visual cues than the visual features state-of-the-art models have been using so far.

Attention-based arbitrary style transfer studies have shown promising performance in synthesizing vivid local style details. They typically use the all-to-all attention mechanism: each position of content features is fully matched to all positions of style features. However, all-to-all attention tends to generate distorted style patterns and has quadratic complexity. It virtually limits both the effectiveness and efficiency of arbitrary style transfer. In this paper, we rethink what kind of attention mechanism is more appropriate for arbitrary style transfer. Our answer is a novel all-to-key attention mechanism: each position of content features is matched to key positions of style features. Specifically, it integrates two newly proposed attention forms: distributed and progressive attention. Distributed attention assigns attention to multiple key positions; Progressive attention pays attention from coarse to fine. All-to-key attention promotes the matching of diverse and reasonable style patterns and has linear complexity. The resultant module, dubbed StyA2K, has fine properties in rendering reasonable style textures and maintaining consistent local structure. Qualitative and quantitative experiments demonstrate that our method achieves superior results than state-of-the-art approaches.

Emotion recognition in conversation (ERC) aims to detect the emotion label for each utterance. Motivated by recent studies which have proven that feeding training examples in a meaningful order rather than considering them randomly can boost the performance of models, we propose an ERC-oriented hybrid curriculum learning framework. Our framework consists of two curricula: (1) conversation-level curriculum (CC); and (2) utterance-level curriculum (UC). In CC, we construct a difficulty measurer based on "emotion shift" frequency within a conversation, then the conversations are scheduled in an "easy to hard" schema according to the difficulty score returned by the difficulty measurer. For UC, it is implemented from an emotion-similarity perspective, which progressively strengthens the model's ability in identifying the confusing emotions. With the proposed model-agnostic hybrid curriculum learning strategy, we observe significant performance boosts over a wide range of existing ERC models and we are able to achieve new state-of-the-art results on four public ERC datasets.

We address the task of automatically scoring the competency of candidates based on textual features, from the automatic speech recognition (ASR) transcriptions in the asynchronous video job interview (AVI). The key challenge is how to construct the dependency relation between questions and answers, and conduct the semantic level interaction for each question-answer (QA) pair. However, most of the recent studies in AVI focus on how to represent questions and answers better, but ignore the dependency information and interaction between them, which is critical for QA evaluation. In this work, we propose a Hierarchical Reasoning Graph Neural Network (HRGNN) for the automatic assessment of question-answer pairs. Specifically, we construct a sentence-level relational graph neural network to capture the dependency information of sentences in or between the question and the answer. Based on these graphs, we employ a semantic-level reasoning graph attention network to model the interaction states of the current QA session. Finally, we propose a gated recurrent unit encoder to represent the temporal question-answer pairs for the final prediction. Empirical results conducted on CHNAT (a real-world dataset) validate that our proposed model significantly outperforms text-matching based benchmark models. Ablation studies and experimental results with 10 random seeds also show the effectiveness and stability of our models.

We propose a new method for event extraction (EE) task based on an imitation learning framework, specifically, inverse reinforcement learning (IRL) via generative adversarial network (GAN). The GAN estimates proper rewards according to the difference between the actions committed by the expert (or ground truth) and the agent among complicated states in the environment. EE task benefits from these dynamic rewards because instances and labels yield to various extents of difficulty and the gains are expected to be diverse -- e.g., an ambiguous but correctly detected trigger or argument should receive high gains -- while the traditional RL models usually neglect such differences and pay equal attention on all instances. Moreover, our experiments also demonstrate that the proposed framework outperforms state-of-the-art methods, without explicit feature engineering.

To quickly obtain new labeled data, we can choose crowdsourcing as an alternative way at lower cost in a short time. But as an exchange, crowd annotations from non-experts may be of lower quality than those from experts. In this paper, we propose an approach to performing crowd annotation learning for Chinese Named Entity Recognition (NER) to make full use of the noisy sequence labels from multiple annotators. Inspired by adversarial learning, our approach uses a common Bi-LSTM and a private Bi-LSTM for representing annotator-generic and -specific information. The annotator-generic information is the common knowledge for entities easily mastered by the crowd. Finally, we build our Chinese NE tagger based on the LSTM-CRF model. In our experiments, we create two data sets for Chinese NER tasks from two domains. The experimental results show that our system achieves better scores than strong baseline systems.