Data association-based multiple object tracking (MOT) involves multiple separate modules that are processed or optimized differently, which results in complex method design and requires non-trivial parameter tuning. In this paper, we present an end-to-end model, named FAMNet, where Feature extraction, Affinity estimation and Multi-dimensional assignment are refined in a single network. All layers in FAMNet are designed to be differentiable and thus can be optimized jointly to learn discriminative features and a higher-order affinity model for robust MOT, supervised by a loss computed directly from the assignment ground truth. We also integrate a single object tracking technique and a dedicated target management scheme into the FAMNet-based tracking system to further recover false negatives and suppress noisy target candidates generated by the external detector. The proposed method is evaluated on a diverse set of benchmarks including MOT2015, MOT2017, KITTI-Car and UA-DETRAC, and achieves promising performance on all of them in comparison with state-of-the-art methods.

Related content

Person re-identification (PReID) has received increasing attention because it is an important part of intelligent surveillance. Recently, many state-of-the-art PReID methods are part-based deep models. Most of them focus on learning part feature representations of the human body in the horizontal direction, while the feature representation of the body in the vertical direction is usually ignored. Moreover, the spatial information between these part features and the relationships between different feature channels are not considered. In this study, we introduce a multi-branch deep model for PReID. Specifically, the model consists of five branches. Two of them learn local features with spatial information from the horizontal and vertical orientations, respectively. A third branch learns the interdependencies between the different feature channels generated by the last convolution layer. The remaining two branches are identification and triplet sub-networks, in which a discriminative global feature and a corresponding similarity measure are learned simultaneously. All five branches contribute to improved representation learning. We conduct extensive comparative experiments on three PReID benchmarks: CUHK03, Market-1501 and DukeMTMC-reID. The proposed deep framework outperforms many state-of-the-art methods in most cases.

This paper addresses the problem of head detection in crowded environments. Our detection is based entirely on the geometric consistency across cameras with overlapping fields of view, and no additional learning process is required. We propose a fully unsupervised method for inferring scene and camera geometry, in contrast to existing algorithms which require specific calibration procedures. Moreover, we avoid relying on the presence of body parts other than heads or on background subtraction, which have limited effectiveness under heavy clutter. We cast the head detection problem as a stereo MRF-based optimization of a dense pedestrian height map, and we introduce a constraint which aligns the height gradient according to the vertical vanishing point direction. We validate the method in an outdoor setting with varying pedestrian density levels. With only three views, our approach is able to detect simultaneously tens of heavily occluded pedestrians across a large, homogeneous area.

We propose an algorithm for real-time 6DOF pose tracking of rigid 3D objects using a monocular RGB camera. The key idea is to derive a region-based cost function using temporally consistent local color histograms. While such region-based cost functions are commonly optimized using first-order gradient descent techniques, we systematically derive a Gauss-Newton optimization scheme which gives rise to drastically faster convergence and highly accurate and robust tracking performance. We furthermore propose a novel complex dataset dedicated to the task of monocular object pose tracking and make it publicly available to the community. To our knowledge, it is the first to address the common and important scenario in which both the camera and the objects are moving simultaneously in cluttered scenes. In numerous experiments - including our own proposed dataset - we demonstrate that the proposed Gauss-Newton approach outperforms existing approaches, in particular in the presence of cluttered backgrounds, heterogeneous objects and partial occlusions.
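To illustrate the general idea of a Gauss-Newton update, the sketch below fits a single parameter of a toy least-squares problem. It is not the region-based pose cost from the abstract: the model `y = exp(a * x)`, the function name `gauss_newton_fit`, and the sample data are all assumptions chosen only to keep the example small.

```python
import math


def gauss_newton_fit(xs, ys, a0=0.0, iters=20):
    """Fit y = exp(a * x) for a scalar a with Gauss-Newton steps.

    Toy model (an assumption for illustration), not the pose-tracking cost.
    """
    a = a0
    for _ in range(iters):
        preds = [math.exp(a * x) for x in xs]
        residuals = [y - p for y, p in zip(ys, preds)]
        # Jacobian of the prediction with respect to a, one entry per sample.
        jac = [x * p for x, p in zip(xs, preds)]
        jtj = sum(j * j for j in jac)
        if jtj == 0.0:
            break  # no curvature information, stop rather than divide by zero
        # Gauss-Newton step: solve (J^T J) * delta = J^T r for the scalar case.
        a += sum(j * r for j, r in zip(jac, residuals)) / jtj
    return a


# Synthetic, noise-free data generated with a = 0.5.
xs = [0.0, 1.0, 2.0, 3.0]
ys = [math.exp(0.5 * x) for x in xs]
a_hat = gauss_newton_fit(xs, ys)
```

Unlike a first-order gradient step, the update is scaled by the approximate curvature `J^T J`, so no learning rate has to be tuned by hand in this toy setting.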

Tracking by detection is a common approach to solving the Multiple Object Tracking problem. In this paper we show how deep metric learning can be used to improve three aspects of tracking by detection. We train a convolutional neural network offline, in a Siamese configuration, to learn an embedding function on a large person re-identification dataset. It is then used to improve the online performance of tracking while retaining a high frame rate. We use this learned appearance metric to robustly build estimates of pedestrians' trajectories in the MOT16 dataset. In breaking with the tracking by detection model, we use our appearance metric to propose detections using the predicted state of a tracklet as a prior in the case where the detector fails. This method achieves competitive results in evaluation, especially among online, real-time approaches. We present an ablative study showing the impact of each of the three uses of our deep appearance metric.
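A minimal sketch of how a learned appearance embedding could drive data association is given below. It assumes the embeddings are already computed as plain lists of floats, uses cosine distance as the metric, and matches tracks to detections greedily; the function names, the `max_dist` threshold, and the greedy strategy are illustrative assumptions, not the method described in the abstract.

```python
import math


def cosine_distance(a, b):
    """Return 1 - cosine similarity; 1.0 if either vector has zero norm."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 1.0
    return 1.0 - dot / (norm_a * norm_b)


def greedy_associate(track_embs, det_embs, max_dist=0.3):
    """Greedily pair tracks with detections in order of increasing distance.

    max_dist is a hypothetical gating threshold: pairs farther apart than
    this are never matched, leaving the track or detection unassigned.
    """
    candidates = sorted(
        (cosine_distance(t, d), ti, di)
        for ti, t in enumerate(track_embs)
        for di, d in enumerate(det_embs)
    )
    used_tracks, used_dets, matches = set(), set(), []
    for dist, ti, di in candidates:
        if dist > max_dist:
            break  # all remaining candidates are even farther apart
        if ti in used_tracks or di in used_dets:
            continue
        matches.append((ti, di))
        used_tracks.add(ti)
        used_dets.add(di)
    return sorted(matches)


tracks = [[1.0, 0.0], [0.0, 1.0]]
detections = [[0.1, 0.9], [0.9, 0.1], [-1.0, -1.0]]
matches = greedy_associate(tracks, detections)
```

Here the third detection stays unmatched because it is far from both tracks; in a full tracker such a detection might start a new tracklet.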

We study active object tracking, where a tracker takes as input the visual observation (i.e., frame sequence) and produces the camera control signal (e.g., move forward, turn left, etc.). Conventional methods tackle the tracking and the camera control separately, which makes them challenging to tune jointly. This also requires considerable human effort for labeling and much expensive trial and error in the real world. To address these issues, we propose, in this paper, an end-to-end solution via deep reinforcement learning, where a ConvNet-LSTM function approximator is adopted for direct frame-to-action prediction. We further propose an environment augmentation technique and a customized reward function, which are crucial for successful training. The tracker trained in simulators (ViZDoom, Unreal Engine) shows good generalization to unseen object moving paths, unseen object appearances, unseen backgrounds, and distracting objects. It can restore tracking after occasionally losing the target. With experiments on the VOT dataset, we also find that the tracking ability, obtained solely from simulators, can potentially transfer to real-world scenarios.

While generic object detection has achieved large improvements with rich feature hierarchies from deep nets, detecting small objects with poor visual cues remains challenging. Motion cues from multiple frames may be more informative for detecting such hard-to-distinguish objects in each frame. However, how to encode discriminative motion patterns, such as deformations and pose changes that characterize objects, has remained an open question. To learn them and thereby realize small object detection, we present a neural model called the Recurrent Correlational Network, where detection and tracking are jointly performed over a multi-frame representation learned through a single, trainable, and end-to-end network. A convolutional long short-term memory network is utilized for learning informative appearance changes for detection, while the learned representation is shared with tracking to enhance its performance. In experiments with datasets containing images of scenes with small flying objects, such as birds and unmanned aerial vehicles, the proposed method yielded consistent improvements in detection performance over deep single-frame detectors and existing motion-based detectors. Furthermore, our network performs as well as state-of-the-art generic object trackers when evaluated as a tracker on the bird dataset.

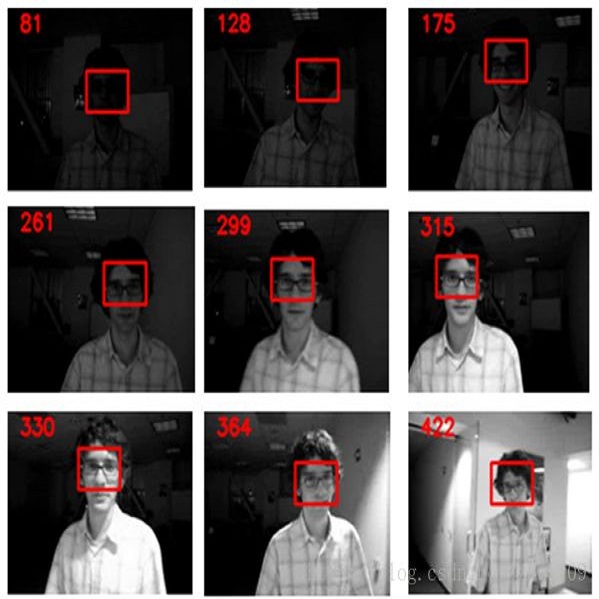

Existing visual tracking methods usually localize a target object with a bounding box, in which the performance of the foreground object trackers or detectors is often affected by the inclusion of background clutter. To handle this problem, we learn a patch-based graph representation for visual tracking. The tracked object is modeled with a graph that takes a set of non-overlapping image patches as nodes, in which the weight of each node indicates how likely it is to belong to the foreground, and edges are weighted to indicate the appearance compatibility of two neighboring nodes. This graph is dynamically learned and applied in object tracking and model updating. During the tracking process, the proposed algorithm performs three main steps in each frame. First, the graph is initialized by assigning binary weights to some image patches to indicate the object and background patches according to the predicted bounding box. Second, the graph is optimized to refine the patch weights by using a novel alternating direction method of multipliers. Third, the object feature representation is updated by imposing the weights of patches on the extracted image features. The object location is predicted by maximizing the classification score in the structured support vector machine. Extensive experiments show that the proposed tracking algorithm performs well against the state-of-the-art methods on large-scale benchmark datasets.

Template-matching methods for visual tracking have gained popularity recently due to their comparable performance and fast speed. However, they lack effective ways to adapt to changes in the target object's appearance, making their tracking accuracy still far from state-of-the-art. In this paper, we propose a dynamic memory network to adapt the template to the target's appearance variations during tracking. An LSTM is used as a memory controller, where the input is the search feature map and the outputs are the control signals for the reading and writing process of the memory block. As the location of the target is at first unknown in the search feature map, an attention mechanism is applied to concentrate the LSTM input on the potential target. To prevent aggressive model adaptivity, we apply gated residual template learning to control the amount of retrieved memory that is combined with the initial template. Unlike tracking-by-detection methods where the object's information is maintained by the weight parameters of neural networks, which requires expensive online fine-tuning to be adaptable, our tracker runs completely feed-forward and adapts to the target's appearance changes by updating the external memory. Moreover, the capacity of our model is not determined by the network size as with other trackers: the capacity can be easily enlarged as the memory requirements of a task increase, which is favorable for memorizing long-term object information. Extensive experiments on OTB and VOT demonstrate that our tracker MemTrack performs favorably against state-of-the-art tracking methods while retaining a real-time speed of 50 fps.
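The gated residual idea can be sketched in a few lines. The version below assumes the template, the retrieved memory, and the gate are flat lists of equal length, that the gate is obtained by applying a sigmoid to controller outputs, and that the combination is an element-wise addition; the names `sigmoid` and `gated_residual_template` and this exact form are illustrative assumptions rather than the paper's implementation.

```python
import math


def sigmoid(z):
    """Logistic function mapping a real value to the interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def gated_residual_template(initial, retrieved, gate):
    """Combine the initial template with gated retrieved memory.

    Each output element is initial + gate * retrieved, so a gate of 0
    keeps the initial template and a gate of 1 adds the full residual.
    """
    if not (len(initial) == len(retrieved) == len(gate)):
        raise ValueError("initial, retrieved and gate must have equal length")
    return [t0 + g * r for t0, r, g in zip(initial, retrieved, gate)]


# Hypothetical values: gate logits would come from the memory controller.
gate_logits = [-2.0, 0.0, 2.0]
gate = [sigmoid(z) for z in gate_logits]
template = gated_residual_template([1.0, 1.0, 1.0], [0.5, 0.5, 0.5], gate)
```

Keeping the initial template as the base and adding only a gated residual means that a poorly retrieved memory can be suppressed without discarding the reliable first-frame appearance.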

While most steps in modern object detection methods are learnable, the region feature extraction step remains largely hand-crafted, as typified by RoI pooling methods. This work proposes a general viewpoint that unifies existing region feature extraction methods, and a novel method that is end-to-end learnable. The proposed method removes most heuristic choices and outperforms its RoI pooling counterparts. It moves further towards fully learnable object detection.

In this work, we present a method for tracking and learning the dynamics of all objects in a large scale robot environment. A mobile robot patrols the environment and visits the different locations one by one. Movable objects are discovered by change detection, and tracked throughout the robot deployment. For tracking, we extend the Rao-Blackwellized particle filter of previous work with birth and death processes, enabling the method to handle an arbitrary number of objects. Target births and associations are sampled using Gibbs sampling. The parameters of the system are then learnt using the Expectation Maximization algorithm in an unsupervised fashion. The system therefore enables learning of the dynamics of one particular environment, and of its objects. The algorithm is evaluated on data collected autonomously by a mobile robot in an office environment during a real-world deployment. We show that the algorithm automatically identifies and tracks the moving objects within 3D maps and infers plausible dynamics models, significantly decreasing the modeling bias of our previous work. The proposed method represents an improvement over previous methods for environment dynamics learning as it allows for learning of fine grained processes.