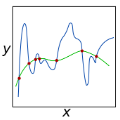

Recent strides in model predictive control (MPC) underscore a dependence on numerical advances to solve large-scale problems efficiently and accurately. Typical whole-body optimal control (OC) problems involve a substantial number of variables, often in the thousands. Meeting computational budgets, typically a few milliseconds, therefore requires exploiting the sparse structure of the numerical problem. A fundamental building block for computing Newton or Sequential Quadratic Programming (SQP) steps in direct optimal control methods is solving the linear quadratic regulator (LQR) problem. This paper concentrates on equality-constrained problems featuring implicit system dynamics and dual regularization, a characteristic found in advanced interior-point or augmented Lagrangian solvers. Here, we introduce a parallel algorithm designed for solving an LQR problem with dual regularization. We rewrite the LQR recursion through block elimination and use this rewriting in two ways. First, we enhance the efficiency of the serial algorithm. Second, we generalize it to handle parametric problems. This extension enables us to split decision variables and solve multiple subproblems concurrently. Our algorithm is implemented in our nonlinear numerical optimal control library ALIGATOR. It shows improved performance over previous serial formulations, and we validate its efficacy by deploying it in the model predictive control of a real quadruped robot. This paper follows up on our prior work on augmented Lagrangian methods for numerical optimal control with implicit dynamics and constraints.
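
The LQR subproblem mentioned above is classically solved by a backward Riccati recursion. The following is a minimal sketch of only that textbook baseline. It assumes a scalar system with explicit dynamics x[t+1] = a*x[t] + b*u[t], with no equality constraints and no dual regularization. It is not the paper's parallel, block-elimination algorithm. The names `lqr_backward` and `rollout_cost` are hypothetical.

```python
from typing import List, Sequence, Tuple


def lqr_backward(a: float, b: float, q: float, r: float, qf: float,
                 horizon: int) -> Tuple[List[float], List[float]]:
    """Backward Riccati recursion for a scalar, unconstrained LQR problem.

    Dynamics: x[t+1] = a * x[t] + b * u[t]
    Cost:     sum_t (q * x[t]**2 + r * u[t]**2) + qf * x[T]**2
    Returns gains K (u[t] = -K[t] * x[t]) and value coefficients P
    (optimal cost-to-go from step t is P[t] * x[t]**2).
    """
    P = [0.0] * (horizon + 1)
    K = [0.0] * horizon
    P[horizon] = qf  # terminal cost-to-go
    for t in reversed(range(horizon)):
        p_next = P[t + 1]
        # Minimizing q x^2 + r u^2 + p_next (a x + b u)^2 over u gives u = -K x.
        K[t] = (b * p_next * a) / (r + b * b * p_next)
        # Riccati update: q + a^2 p - (a b p)^2 / (r + b^2 p).
        P[t] = q + a * a * p_next - a * b * p_next * K[t]
    return K, P


def rollout_cost(a: float, b: float, q: float, r: float, qf: float,
                 K: Sequence[float], x0: float) -> float:
    """Simulate the closed loop u = -K x and accumulate the quadratic cost."""
    x, cost = x0, 0.0
    for k in K:
        u = -k * x
        cost += q * x * x + r * u * u
        x = a * x + b * u
    return cost + qf * x * x
```

Take a = b = q = r = qf = 1 with a one-step horizon. The recursion gives K[0] = 0.5 and P[0] = 1.5. This matches the cost 1 + 0.25 + 0.25 of applying u = -0.5 from x = 1.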

Related content

We present Direct Reward Fine-Tuning (DRaFT), a simple and effective method for fine-tuning diffusion models to maximize differentiable reward functions, such as scores from human preference models. We first show that it is possible to backpropagate the reward function gradient through the full sampling procedure, and that doing so achieves strong performance on a variety of rewards, outperforming reinforcement learning-based approaches. We then propose more efficient variants of DRaFT: DRaFT-K, which truncates backpropagation to only the last K steps of sampling, and DRaFT-LV, which obtains lower-variance gradient estimates for the case when K=1. We show that our methods work well for a variety of reward functions and can be used to substantially improve the aesthetic quality of images generated by Stable Diffusion 1.4. Finally, we draw connections between our approach and prior work, providing a unifying perspective on the design space of gradient-based fine-tuning algorithms.
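
A toy problem can illustrate how DRaFT-K truncates backpropagation to the last K sampling steps. This sketch makes several assumptions:

- A hypothetical one-parameter linear sampler x <- alpha * x + theta replaces the diffusion model.
- r(x0) = -(x0 - target)**2 replaces the reward model.
- The derivative is tracked in forward mode, which yields the same gradient as backpropagating through the retained steps.

The actual method backpropagates through a diffusion model's sampler with respect to its network parameters. All names here are illustrative.

```python
from typing import Tuple


def sample_with_grad(theta: float, x_init: float, alpha: float,
                     num_steps: int, k: int) -> Tuple[float, float]:
    """Run a toy sampler and return (x0, d x0 / d theta), keeping gradient
    contributions from only the last k steps (k = num_steps is full backprop)."""
    x, dx = x_init, 0.0  # value and forward-mode derivative w.r.t. theta
    for step in range(num_steps):
        x = alpha * x + theta
        if step >= num_steps - k:
            dx = alpha * dx + 1.0  # gradient flows through this step
        # Earlier steps act like a no-grad region: dx stays 0 there.
    return x, dx


def reward(x0: float, target: float) -> float:
    """Hypothetical differentiable reward: peaks when x0 equals target."""
    return -(x0 - target) ** 2


def draft_k(theta: float, x_init: float, alpha: float, num_steps: int,
            k: int, target: float, lr: float, iters: int) -> float:
    """Gradient ascent on the reward using the truncated gradient."""
    for _ in range(iters):
        x0, dx0 = sample_with_grad(theta, x_init, alpha, num_steps, k)
        grad = -2.0 * (x0 - target) * dx0  # chain rule: dr/dx0 * dx0/dtheta
        theta += lr * grad
    return theta
```

In this toy, every retained step contributes a positive derivative. The K = 1 gradient therefore has the same sign as the full gradient but a smaller magnitude, so the truncated update still moves theta toward higher reward.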

Hierarchical Reinforcement Learning (HRL) approaches have shown successful results in solving a large variety of complex, structured, long-horizon problems. Nevertheless, a full theoretical understanding of this empirical evidence is currently missing. In the context of the *option* framework, prior research has devised efficient algorithms for scenarios where the options are fixed and only the high-level policy selecting among options has to be learned. However, the fully realistic scenario in which both the high-level and the low-level policies are learned has surprisingly been overlooked from a theoretical perspective. This work takes a step towards understanding this latter scenario. Focusing on the finite-horizon problem, we present a meta-algorithm that alternates between regret minimization algorithms instantiated at different (high and low) temporal abstractions. At the higher level, we treat the problem as a Semi-Markov Decision Process (SMDP) with fixed low-level policies. At the lower level, inner option policies are learned with a fixed high-level policy. We compare the derived bounds with the lower bound for non-hierarchical finite-horizon problems. This comparison characterizes when a hierarchical approach is provably preferable, even without pre-trained options.

We propose a new method for combining in situ buoy measurements with Earth system models (ESMs) to improve the accuracy of ocean temperature predictions. The technique uses the dynamics *and* modes identified in ESMs alongside buoy measurements to improve accuracy while preserving features such as seasonality. We call this technique Dynamic Basis Function Interpolation. We apply it to the Global Drifter Program's in situ ocean buoy dataset to correct errors in localized temperature predictions made by the Model for Prediction Across Scales Ocean component (MPAS-O).
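
A generic way to combine sparse observations with model-derived modes has two steps. First, fit mode coefficients to the model-minus-observation residuals by least squares. Second, add the fitted correction across the whole grid. The sketch below does this for a single snapshot and is only an assumption-laden illustration. It is not the authors' Dynamic Basis Function Interpolation and ignores the time dynamics the paper exploits. The basis vectors stand in for modes that might be extracted from ESM output. The function names and the small ridge term are hypothetical.

```python
from typing import List, Sequence


def solve(A: List[List[float]], b: List[float]) -> List[float]:
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda i: abs(M[i][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for i in range(col + 1, n):
            f = M[i][col] / M[col][col]
            for j in range(col, n + 1):
                M[i][j] -= f * M[col][j]
    x = [0.0] * n
    for i in reversed(range(n)):  # back substitution
        s = sum(M[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (M[i][n] - s) / M[i][i]
    return x


def correct_with_basis(model: Sequence[float], basis: Sequence[Sequence[float]],
                       obs_idx: Sequence[int], obs_val: Sequence[float],
                       ridge: float = 1e-8) -> List[float]:
    """Fit basis coefficients to residuals at observed grid points, then add
    the fitted correction to the model prediction everywhere on the grid."""
    k = len(basis)
    resid = [obs_val[m] - model[i] for m, i in enumerate(obs_idx)]
    # Normal equations (Phi^T Phi + ridge I) c = Phi^T r over observed points only.
    A = [[sum(basis[p][i] * basis[q][i] for i in obs_idx) + (ridge if p == q else 0.0)
          for q in range(k)] for p in range(k)]
    rhs = [sum(basis[p][i] * r for i, r in zip(obs_idx, resid)) for p in range(k)]
    c = solve(A, rhs)
    return [model[i] + sum(c[p] * basis[p][i] for p in range(k))
            for i in range(len(model))]
```

The least-squares fit touches only the observed points. However, the correction extends to every grid point through the shape of the basis vectors.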

Cloth-changing person Re-IDentification (Re-ID) is a particularly challenging task that suffers from two limitations: weakly discriminative features and limited training samples. Existing methods mainly leverage auxiliary information to facilitate identity-relevant feature learning. Such information includes soft-biometric features such as body shape or gait, as well as additional clothing labels. However, this information may be unavailable in real-world applications. In this paper, we propose a novel FIne-grained Representation and Recomposition (FIRe²) framework that tackles both limitations without any auxiliary annotation or data. Specifically, we first design a Fine-grained Feature Mining (FFM) module that clusters the images of each person separately. Images with similar so-called fine-grained attributes (e.g., clothes and viewpoints) are encouraged to cluster together. We introduce an attribute-aware classification loss that performs fine-grained learning based on the cluster labels. Because these labels are not shared among different people, the loss encourages the model to learn identity-relevant features. Furthermore, to take full advantage of fine-grained attributes, we present a Fine-grained Attribute Recomposition (FAR) module that recomposes image features with different attributes in the latent space. This module significantly enhances robust feature learning. Extensive experiments demonstrate that FIRe² achieves state-of-the-art performance on five widely used cloth-changing person Re-ID benchmarks. The code is available at //github.com/QizaoWang/FIRe-CCReID.
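
The labeling idea behind the attribute-aware classification loss is that cluster labels are not shared among different people. This can be sketched as a mapping from (person, within-person cluster) pairs to distinct class indices. The sketch assumes the per-person clustering has already been run, whether by the FFM module or by some other method. The function name is hypothetical, and the loss itself is omitted.

```python
from typing import Dict, List, Sequence, Tuple


def fine_grained_labels(person_ids: Sequence[int],
                        cluster_ids: Sequence[int]
                        ) -> Tuple[List[int], Dict[Tuple[int, int], int]]:
    """Map each (person, within-person cluster) pair to its own class index.

    Cluster ids are assumed to come from clustering each person's images
    separately, so cluster 0 of person A and cluster 0 of person B become
    different classes."""
    mapping: Dict[Tuple[int, int], int] = {}
    labels: List[int] = []
    for pid, cid in zip(person_ids, cluster_ids):
        key = (pid, cid)
        if key not in mapping:
            mapping[key] = len(mapping)  # assign the next unused class index
        labels.append(mapping[key])
    return labels, mapping
```

For example, consider person ids [7, 7, 7, 9, 9] with cluster ids [0, 1, 0, 0, 0]. These produce labels [0, 1, 0, 2, 2]. Cluster 0 of person 7 and cluster 0 of person 9 land in different classes. The classifier must therefore still separate identities while distinguishing fine-grained attributes within each identity.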

This paper introduces DiffMix, a new self-supervised learning (SSL) pre-training framework that combines real and synthetic images. Traditional SSL methods predominantly use real images. In contrast, DiffMix uses a variant of Stable Diffusion to replace an augmented instance of a real image, which facilitates learning representations that span real and synthetic images. The key insight is that SSL methods trained solely on synthetic images underperform those trained on real images. Yet a blended training approach using both real and synthetic images leads to more robust and adaptable representations. Experiments demonstrate that DiffMix enhances the SSL methods SimCLR, BarlowTwins, and DINO across various robustness datasets and domain transfer tasks. DiffMix boosts SimCLR's accuracy on ImageNet-1K by 4.56%. These results challenge the notion that high-quality real images are crucial for SSL pre-training by showing that lower-quality synthetic images can also produce strong representations. DiffMix also reduces the need for image augmentations in SSL, offering new optimization strategies.

The widely adopted Business Process Model and Notation (BPMN) is a cornerstone of industry standards for business process modeling. However, its ambiguous execution semantics often result in inconsistent interpretations, depending on the software used for implementation. In response, the Process Specification Language (PASS) provides formally defined semantics to overcome these interpretational challenges. Despite its clear advantages, PASS has not reached the same level of industry penetration as BPMN. This feasibility study proposes using PASS as an intermediary framework to translate and execute BPMN models. It describes the development of a prototype translator that converts specific BPMN elements into a format compatible with PASS. These models are then transformed into source code and executed in a bespoke workflow environment, marking a departure from traditional BPMN implementations. Our findings suggest that integrating PASS enhances compatibility across different modeling and execution tools and offers a more robust methodology for implementing business processes across organizations. This study lays the groundwork for more accurate and unified business process model executions, potentially transforming industry standards for process modeling and execution.

Recent research in speaker verification has increasingly focused on achieving robust and reliable recognition under challenging channel conditions and in noisy environments. Identifying speakers in radio communications is particularly difficult due to inherent limitations such as constrained bandwidth and pervasive noise interference. To address this issue, we present a Channel Robust Speaker Learning (CRSL) framework that enhances the robustness of the current speaker verification pipeline. The framework targets three aspects: the data source, data augmentation, and the efficiency of the model transfer process. Our framework introduces an augmentation module that mitigates bandwidth variations in radio speech datasets by manipulating the bandwidth of training inputs. It also addresses unknown noise by introducing noise within the manifold space. Additionally, we propose an efficient fine-tuning method that reduces the need for extensive additional training time and large amounts of data. Moreover, we develop a toolkit for assembling a large-scale radio speech corpus and establish a benchmark specifically tailored to speaker verification in radio scenarios. Experimental results demonstrate that our proposed methodology effectively enhances performance and mitigates the degradation caused by radio transmission in speaker verification tasks. The code will be available on GitHub.
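
The abstract does not specify how the bandwidth of training inputs is manipulated. One common approach is to low-pass filter each waveform at a randomly drawn cutoff, so the model sees varying effective bandwidths. The sketch below shows that approach as an assumption, not the CRSL module itself. The filter is a standard Hamming-windowed sinc FIR. The function names, tap count, and cutoff range are hypothetical.

```python
import math
import random
from typing import List, Sequence


def lowpass_kernel(cutoff: float, taps: int = 31) -> List[float]:
    """Hamming-windowed sinc low-pass FIR; cutoff in cycles/sample, 0 < cutoff < 0.5."""
    mid = (taps - 1) / 2
    h = []
    for n in range(taps):
        x = n - mid
        ideal = 2 * cutoff if x == 0 else math.sin(2 * math.pi * cutoff * x) / (math.pi * x)
        window = 0.54 - 0.46 * math.cos(2 * math.pi * n / (taps - 1))
        h.append(ideal * window)
    total = sum(h)
    return [v / total for v in h]  # normalize to unit gain at DC


def random_bandwidth(signal: Sequence[float], sample_rate: int,
                     min_cut_hz: float, max_cut_hz: float,
                     rng: random.Random) -> List[float]:
    """Band-limit a waveform to a randomly drawn cutoff (zero-padded edges)."""
    cut_hz = rng.uniform(min_cut_hz, max_cut_hz)
    h = lowpass_kernel(cut_hz / sample_rate)
    half = len(h) // 2
    out = []
    for i in range(len(signal)):
        acc = 0.0
        for k, hk in enumerate(h):
            j = i + half - k  # centered ("same"-length) convolution
            if 0 <= j < len(signal):
                acc += hk * signal[j]
        out.append(acc)
    return out
```

Drawing a fresh cutoff per utterance exposes the model to many bandwidths rather than a single fixed channel. The pure-Python convolution is for clarity only; a real pipeline would use a vectorized filter.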

Bayesian Image-on-Scalar Regression (ISR) offers significant advantages for neuroimaging data analysis, including flexibility and the ability to quantify uncertainty. However, two obstacles hinder its application to large-scale imaging datasets, such as those in the UK Biobank. The first is the computational demand of traditional posterior computation methods. The second is individual-specific brain masks that deviate from the common mask typically used in standard ISR approaches. To address these challenges, we introduce a novel Bayesian ISR model that is scalable and accommodates inconsistent brain masks across subjects in large-scale imaging studies. Our model leverages Gaussian process priors and integrates salience area indicators to facilitate ISR. We develop a scalable posterior computation algorithm that employs stochastic gradient Langevin dynamics coupled with memory mapping techniques. With this algorithm, computation time scales linearly with subsample size, and memory usage is constrained only by the batch size. Our approach uniquely enables direct spatial posterior inference on brain activation regions. We demonstrate the efficacy of our method through simulations and an analysis of the UK Biobank task fMRI data, encompassing 38,639 subjects and over 120,000 voxels per image. Compared with traditional Gibbs sampling with zero-imputation in various simulation scenarios, it achieves a 4- to 11-fold speedup and improves statistical power by 8% to 18%.
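
Stochastic gradient Langevin dynamics (SGLD) replaces the full-data gradient of the log posterior with a rescaled minibatch estimate. It also adds Gaussian noise whose variance equals the step size ε:

θ ← θ + (ε/2)(∇log p(θ) + (N/n) Σ_{i in batch} ∇log p(x_i | θ)) + η, with η ~ N(0, ε).

The sketch below applies this update to a deliberately simple model: a scalar Gaussian mean with a Gaussian prior. It does not use the paper's Gaussian-process ISR model and omits memory mapping. The function name and hyperparameters are hypothetical.

```python
import random
from typing import List, Sequence


def sgld_gaussian_mean(data: Sequence[float], batch_size: int, step: float,
                       n_iter: int, prior_var: float = 10.0,
                       seed: int = 0) -> List[float]:
    """SGLD samples of theta for x_i ~ N(theta, 1) with prior theta ~ N(0, prior_var)."""
    rng = random.Random(seed)
    data = list(data)
    n = len(data)
    theta = 0.0
    samples = []
    for _ in range(n_iter):
        batch = rng.sample(data, batch_size)  # per-step cost depends only on batch_size
        grad_prior = -theta / prior_var
        # Minibatch log-likelihood gradient, rescaled by N / n to be unbiased.
        grad_lik = (n / batch_size) * sum(x - theta for x in batch)
        noise = rng.gauss(0.0, step ** 0.5)  # injected noise with variance = step
        theta += 0.5 * step * (grad_prior + grad_lik) + noise
        samples.append(theta)
    return samples
```

The step size must be small relative to 2/N for the update to remain stable. Early samples should be discarded as burn-in before averaging.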

Interest in bilevel optimization has grown in recent years, partially due to its applications to challenging machine-learning problems. Several recent works have focused on developing efficient gradient-based algorithms that solve bilevel optimization problems with provable guarantees. However, the existing literature mainly focuses on bilevel problems that either have no constraints or have only simple constraints that do not couple variables across the upper and lower levels. This focus excludes a range of complex applications. Our paper studies this challenging but less explored scenario and develops a (fully) first-order algorithm, which we term BLOCC, to tackle BiLevel Optimization problems with Coupled Constraints. We establish rigorous convergence theory for the proposed algorithm and demonstrate its effectiveness on two well-known real-world applications: hyperparameter selection in support vector machines (SVMs), and infrastructure planning in transportation networks using real data from the city of Seville.

Multi-relation question answering is a challenging task because it requires detailed analysis of questions and reasoning over multiple fact triples in a knowledge base. In this paper, we present a novel model called the Interpretable Reasoning Network, which employs an interpretable, hop-by-hop reasoning process for question answering. At each hop, the model does the following:

- It dynamically decides which part of the input question should be analyzed.
- It predicts a relation that corresponds to the current parsed results.
- It uses the predicted relation to update the question representation and the state of the reasoning process.
- It then drives the next-hop reasoning.

Experiments show that our model yields state-of-the-art results on two datasets. More interestingly, the model offers traceable and observable intermediate predictions for reasoning analysis and failure diagnosis, thereby allowing manual manipulation in predicting the final answer.
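
A toy traversal over knowledge-base triples can illustrate hop-by-hop reasoning. In this sketch, the relation for each hop is supplied directly, standing in for the relation the model would predict from the question. The triples and function names are hypothetical. Recording the entities reached after each hop mirrors the traceable intermediate predictions.

```python
from typing import Dict, List, Sequence, Set, Tuple

Triple = Tuple[str, str, str]


def build_index(triples: Sequence[Triple]) -> Dict[Tuple[str, str], Set[str]]:
    """Index (subject, relation) -> set of objects."""
    index: Dict[Tuple[str, str], Set[str]] = {}
    for s, r, o in triples:
        index.setdefault((s, r), set()).add(o)
    return index


def hop_by_hop(topic: str, relations: Sequence[str],
               index: Dict[Tuple[str, str], Set[str]]
               ) -> Tuple[Set[str], List[Tuple[str, Set[str]]]]:
    """Follow one relation per hop, recording the entities reached after each hop."""
    frontier = {topic}
    trace: List[Tuple[str, Set[str]]] = []
    for rel in relations:
        frontier = {o for e in frontier for o in index.get((e, rel), set())}
        trace.append((rel, set(frontier)))  # intermediate result, kept for inspection
    return frontier, trace
```

For example, take the triples (film_a, directed_by, person_b) and (person_b, born_in, city_c). Following `directed_by` and then `born_in` from film_a reaches {city_c}. The trace exposes person_b as the intermediate entity.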