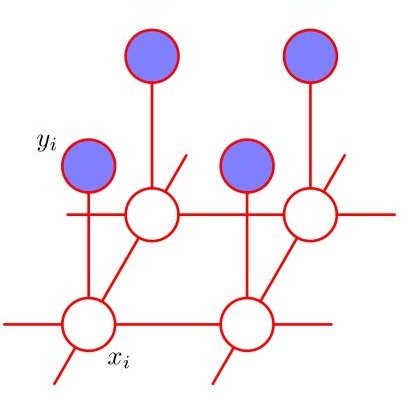

The distributed flocking control of collective aerial vehicles has extraordinary advantages in scalability and reliability, \emph{etc.} However, it is still challenging to design a reliable, efficient, and responsive flocking algorithm. In this paper, a distributed predictive flocking framework is presented based on a Markov random field (MRF). The MRF is used to characterize the optimization problem that is eventually resolved by discretizing the input space. Potential functions are employed to describe the interactions between aerial vehicles and as indicators of flight performance. The dynamic constraints are taken into account in the candidate feasible trajectories which correspond to random variables. Numerical simulation shows that compared with some existing latest methods, the proposed algorithm has better-flocking cohesion and control efficiency performances. Experiments are also conducted to demonstrate the feasibility of the proposed algorithm.

相關內容

Trajectory optimization is a powerful tool for robot motion planning and control. State-of-the-art general-purpose nonlinear programming solvers are versatile, handle constraints effectively and provide a high numerical robustness, but they are slow because they do not fully exploit the optimal control problem structure at hand. Existing structure-exploiting solvers are fast, but they often lack techniques to deal with nonlinearity or rely on penalty methods to enforce (equality or inequality) path constraints. This work presents Fatrop: a trajectory optimization solver that is fast and benefits from the salient features of general-purpose nonlinear optimization solvers. The speed-up is mainly achieved through the integration of a specialized linear solver, based on a Riccati recursion that is generalized to also support stagewise equality constraints. To demonstrate the algorithm's potential, it is benchmarked on a set of robot problems that are challenging from a numerical perspective, including problems with a minimum-time objective and no-collision constraints. The solver is shown to solve problems for trajectory generation of a quadrotor, a robot manipulator and a truck-trailer problem in a few tens of milliseconds. The algorithm's C++-code implementation accompanies this work as open source software, released under the GNU Lesser General Public License (LGPL). This software framework may encourage and enable the robotics community to use trajectory optimization in more challenging applications.

This paper presents a novel approach for generating 3D talking heads from raw audio inputs. Our method grounds on the idea that speech related movements can be comprehensively and efficiently described by the motion of a few control points located on the movable parts of the face, i.e., landmarks. The underlying musculoskeletal structure then allows us to learn how their motion influences the geometrical deformations of the whole face. The proposed method employs two distinct models to this aim: the first one learns to generate the motion of a sparse set of landmarks from the given audio. The second model expands such landmarks motion to a dense motion field, which is utilized to animate a given 3D mesh in neutral state. Additionally, we introduce a novel loss function, named Cosine Loss, which minimizes the angle between the generated motion vectors and the ground truth ones. Using landmarks in 3D talking head generation offers various advantages such as consistency, reliability, and obviating the need for manual-annotation. Our approach is designed to be identity-agnostic, enabling high-quality facial animations for any users without additional data or training.

The growing demand for accurate control in varying and unknown environments has sparked a corresponding increase in the requirements for power supply components, including permanent magnet synchronous motors (PMSMs). To infer the unknown part of the system, machine learning techniques are widely employed, especially Gaussian process regression (GPR) due to its flexibility of continuous system modeling and its guaranteed performance. For practical implementation, distributed GPR is adopted to alleviate the high computational complexity. However, the study of distributed GPR from a control perspective remains an open problem. In this paper, a control-aware optimal aggregation strategy of distributed GPR for PMSMs is proposed based on the Lyapunov stability theory. This strategy exclusively leverages the posterior mean, thereby obviating the need for computationally intensive calculations associated with posterior variance in alternative approaches. Moreover, the straightforward calculation process of our proposed strategy lends itself to seamless implementation in high-frequency PMSM control. The effectiveness of the proposed strategy is demonstrated in the simulations.

A sound field synthesis method enhancing perceptual quality is proposed. Sound field synthesis using multiple loudspeakers enables spatial audio reproduction with a broad listening area; however, synthesis errors at high frequencies called spatial aliasing artifacts are unavoidable. To minimize these artifacts, we propose a method based on the combination of pressure and amplitude matching. On the basis of the human's auditory properties, synthesizing the amplitude distribution will be sufficient for horizontal sound localization. Furthermore, a flat amplitude response should be synthesized as much as possible to avoid coloration. Therefore, we apply amplitude matching, which is a method to synthesize the desired amplitude distribution with arbitrary phase distribution, for high frequencies and conventional pressure matching for low frequencies. Experimental results of numerical simulations and listening tests using a practical system indicated that the perceptual quality of the sound field synthesized by the proposed method was improved from that synthesized by pressure matching.

We consider best arm identification in the multi-armed bandit problem. Assuming certain continuity conditions of the prior, we characterize the rate of the Bayesian simple regret. Differing from Bayesian regret minimization (Lai, 1987), the leading term in the Bayesian simple regret derives from the region where the gap between optimal and suboptimal arms is smaller than $\sqrt{\frac{\log T}{T}}$. We propose a simple and easy-to-compute algorithm with its leading term matching with the lower bound up to a constant factor; simulation results support our theoretical findings.

The rapid expansion of global cloud wide-area networks (WANs) has posed a challenge for commercial optimization engines to efficiently solve network traffic engineering (TE) problems at scale. Existing acceleration strategies decompose TE optimization into concurrent subproblems but realize limited parallelism due to an inherent tradeoff between run time and allocation performance. We present Teal, a learning-based TE algorithm that leverages the parallel processing power of GPUs to accelerate TE control. First, Teal designs a flow-centric graph neural network (GNN) to capture WAN connectivity and network flows, learning flow features as inputs to downstream allocation. Second, to reduce the problem scale and make learning tractable, Teal employs a multi-agent reinforcement learning (RL) algorithm to independently allocate each traffic demand while optimizing a central TE objective. Finally, Teal fine-tunes allocations with ADMM (Alternating Direction Method of Multipliers), a highly parallelizable optimization algorithm for reducing constraint violations such as overutilized links. We evaluate Teal using traffic matrices from Microsoft's WAN. On a large WAN topology with >1,700 nodes, Teal generates near-optimal flow allocations while running several orders of magnitude faster than the production optimization engine. Compared with other TE acceleration schemes, Teal satisfies 6--32% more traffic demand and yields 197--625x speedups.

The selection of Gaussian kernel parameters plays an important role in the applications of support vector classification (SVC). A commonly used method is the k-fold cross validation with grid search (CV), which is extremely time-consuming because it needs to train a large number of SVC models. In this paper, a new approach is proposed to train SVC and optimize the selection of Gaussian kernel parameters. We first formulate the training and parameter selection of SVC as a minimax optimization problem named as MaxMin-L2-SVC-NCH, in which the minimization problem is an optimization problem of finding the closest points between two normal convex hulls (L2-SVC-NCH) while the maximization problem is an optimization problem of finding the optimal Gaussian kernel parameters. A lower time complexity can be expected in MaxMin-L2-SVC-NCH because CV is not needed. We then propose a projected gradient algorithm (PGA) for training L2-SVC-NCH. The famous sequential minimal optimization (SMO) algorithm is a special case of the PGA. Thus, the PGA can provide more flexibility than the SMO. Furthermore, the solution of the maximization problem is done by a gradient ascent algorithm with dynamic learning rate. The comparative experiments between MaxMin-L2-SVC-NCH and the previous best approaches on public datasets show that MaxMin-L2-SVC-NCH greatly reduces the number of models to be trained while maintaining competitive test accuracy. These findings indicate that MaxMin-L2-SVC-NCH is a better choice for SVC tasks.

The core challenge of high-dimensional and expensive black-box optimization (BBO) is how to obtain better performance faster with little function evaluation cost. The essence of the problem is how to design an efficient optimization strategy tailored to the target task. This paper designs a powerful optimization framework to automatically learn the optimization strategies from the target or cheap surrogate task without human intervention. However, current methods are weak for this due to poor representation of optimization strategy. To achieve this, 1) drawing on the mechanism of genetic algorithm, we propose a deep neural network framework called B2Opt, which has a stronger representation of optimization strategies based on survival of the fittest; 2) B2Opt can utilize the cheap surrogate functions of the target task to guide the design of the efficient optimization strategies. Compared to the state-of-the-art BBO baselines, B2Opt can achieve multiple orders of magnitude performance improvement with less function evaluation cost. We validate our proposal on high-dimensional synthetic functions and two real-world applications. We also find that deep B2Opt performs better than shallow ones.

This paper presents a deep reinforcement learning solution for optimizing multi-UAV cell-association decisions and their moving velocity on a 3D aerial highway. The objective is to enhance transportation and communication performance, including collision avoidance, connectivity, and handovers. The problem is formulated as a Markov decision process (MDP) with UAVs' states defined by velocities and communication data rates. We propose a neural architecture with a shared decision module and multiple network branches, each dedicated to a specific action dimension in a 2D transportation-communication space. This design efficiently handles the multi-dimensional action space, allowing independence for individual action dimensions. We introduce two models, Branching Dueling Q-Network (BDQ) and Branching Dueling Double Deep Q-Network (Dueling DDQN), to demonstrate the approach. Simulation results show a significant improvement of 18.32% compared to existing benchmarks.

With the rapid increase of large-scale, real-world datasets, it becomes critical to address the problem of long-tailed data distribution (i.e., a few classes account for most of the data, while most classes are under-represented). Existing solutions typically adopt class re-balancing strategies such as re-sampling and re-weighting based on the number of observations for each class. In this work, we argue that as the number of samples increases, the additional benefit of a newly added data point will diminish. We introduce a novel theoretical framework to measure data overlap by associating with each sample a small neighboring region rather than a single point. The effective number of samples is defined as the volume of samples and can be calculated by a simple formula $(1-\beta^{n})/(1-\beta)$, where $n$ is the number of samples and $\beta \in [0,1)$ is a hyperparameter. We design a re-weighting scheme that uses the effective number of samples for each class to re-balance the loss, thereby yielding a class-balanced loss. Comprehensive experiments are conducted on artificially induced long-tailed CIFAR datasets and large-scale datasets including ImageNet and iNaturalist. Our results show that when trained with the proposed class-balanced loss, the network is able to achieve significant performance gains on long-tailed datasets.