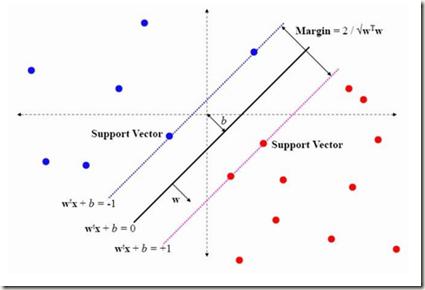

The support vector machine (SVM) is a classical tool for classification problems. It is widely used in biology, statistics and machine learning, and performs well in small-sample, high-dimensional settings. This paper proposes a model averaging method, called SVMMA, to address the uncertainty about which covariates should be included in an SVM and to improve its prediction ability. We offer a criterion for choosing the weights that combine many candidate models, each built from a different subset of the covariates. To build the candidate model set, we suggest a screening-averaging approach in practice. In particular, the model averaging estimator is proved to be asymptotically optimal in the sense of achieving the lowest hinge risk among all possible combinations. Finally, we conduct simulations to compare the proposed model averaging method with several other model selection/averaging and ensemble learning methods, and apply it to four real datasets.
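As a rough illustration of combining candidate models by weights, the sketch below picks non-negative weights summing to one that minimise the hinge loss of the averaged decision scores on held-out data. It is not the paper's criterion, which the abstract does not spell out: the coarse grid search, the function names and the toy scores are all assumptions made for illustration.

```python
from itertools import product

def hinge_loss(y, scores):
    """Mean hinge loss for labels in {-1, +1} and real-valued decision scores."""
    return sum(max(0.0, 1.0 - yi * si) for yi, si in zip(y, scores)) / len(y)

def combine(weights, candidate_scores):
    """Weighted average of the candidate models' decision scores, per sample."""
    n = len(candidate_scores[0])
    return [sum(w * s[i] for w, s in zip(weights, candidate_scores)) for i in range(n)]

def simplex_grid(n_models, steps):
    """Yield weight vectors with entries k/steps that are >= 0 and sum to 1."""
    for ks in product(range(steps + 1), repeat=n_models):
        if sum(ks) == steps:
            yield tuple(k / steps for k in ks)

def search_weights(y, candidate_scores, steps=10):
    """Return the grid point on the simplex with the lowest held-out hinge loss."""
    return min(
        simplex_grid(len(candidate_scores), steps),
        key=lambda w: hinge_loss(y, combine(w, candidate_scores)),
    )

# Hypothetical held-out labels and decision scores from three candidate SVMs,
# each imagined to be fitted on a different subset of the covariates.
y_val = [1, 1, -1, -1, 1, -1]
scores = [
    [0.9, -0.2, -1.1, 0.3, 1.2, -0.8],
    [0.4, 1.1, 0.2, -1.3, 0.1, -0.9],
    [-0.5, 0.3, -0.4, -0.2, 0.6, 0.1],
]
weights = search_weights(y_val, scores)
averaged_loss = hinge_loss(y_val, combine(weights, scores))
```

Because the grid contains the vertices of the simplex, the averaged model is never worse on the held-out data than the best single candidate. A real implementation would replace the grid search with a convex solver once there are more than a handful of candidates.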

Related content

The practical importance of coherent forecasts in hierarchical forecasting has inspired many studies on forecast reconciliation. Under this approach, so-called base forecasts are produced for every series in the hierarchy and are subsequently adjusted to be coherent in a second reconciliation step. Reconciliation methods have been shown to improve forecast accuracy, but will, in general, adjust the base forecast of every series. However, in an operational context, it is sometimes necessary or beneficial to keep forecasts of some variables unchanged after forecast reconciliation. In this paper, we formulate reconciliation methodology that keeps forecasts of a pre-specified subset of variables unchanged or "immutable". In contrast to existing approaches, these immutable forecasts need not all come from the same level of a hierarchy, and our method can also be applied to grouped hierarchies. We prove that our approach preserves unbiasedness in base forecasts. Our method can also account for correlations between base forecasting errors and ensure non-negativity of forecasts. We also perform empirical experiments, including an application to sales of a large scale online retailer, to assess the impacts of our proposed methodology.
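To make the idea of immutable forecasts concrete, here is a toy sketch for a two-level hierarchy (one total, several bottom-level series) in which the total and optionally some bottom-level forecasts are held fixed. It is only a least-squares adjustment under a single summing constraint; it does not model error correlations, non-negativity or grouped hierarchies, and the function name and numbers are hypothetical rather than taken from the paper.

```python
def reconcile_two_level(total, bottoms, immutable_bottoms=()):
    """Adjust mutable bottom-level forecasts so that they sum to the fixed total.

    The gap between the total and the bottom-level sum is spread equally over
    the mutable series, which is the least-squares adjustment under the single
    constraint sum(bottoms) == total.
    """
    mutable = [i for i in range(len(bottoms)) if i not in immutable_bottoms]
    if not mutable:
        raise ValueError("at least one bottom-level forecast must be mutable")
    shift = (total - sum(bottoms)) / len(mutable)
    return [b + shift if i in mutable else b for i, b in enumerate(bottoms)]

# Hypothetical base forecasts: the bottom level sums to 94, not 100.
base_total = 100.0
base_bottoms = [30.0, 45.0, 19.0]

# Keep the total and the second bottom-level series unchanged.
reconciled = reconcile_two_level(base_total, base_bottoms, immutable_bottoms={1})
```

Here the gap of 6 is shared between the two mutable series, while the total and the second series keep their base forecasts.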

In this paper, we propose a novel mutual consistency network (MC-Net+) to effectively exploit the unlabeled data for semi-supervised medical image segmentation. The MC-Net+ model is motivated by the observation that deep models trained with limited annotations are prone to output highly uncertain and easily mis-classified predictions in ambiguous regions (e.g., adhesive edges or thin branches) for medical image segmentation. Leveraging these region-level challenging samples can make the semi-supervised segmentation model training more effective. Therefore, our proposed MC-Net+ model consists of two new designs. First, the model contains one shared encoder and multiple slightly different decoders (i.e., using different up-sampling strategies). The statistical discrepancy of multiple decoders' outputs is computed to denote the model's uncertainty, which indicates the unlabeled hard regions. Second, we apply a novel mutual consistency constraint between one decoder's probability output and other decoders' soft pseudo labels. In this way, we minimize the discrepancy of multiple outputs (i.e., the model uncertainty) during training and force the model to generate invariant results in such challenging regions, aiming at capturing more useful features. We compared the segmentation results of our MC-Net+ with five state-of-the-art semi-supervised approaches on three public medical datasets. Extension experiments with two common semi-supervised settings demonstrate the superior performance of our model over other existing methods, which sets a new state of the art for semi-supervised medical image segmentation.

Applications of Reinforcement Learning (RL), in which agents learn to make a sequence of decisions despite lacking complete information about the latent states of the controlled system, that is, they act under partial observability of the states, are ubiquitous. Partially observable RL can be notoriously difficult -- well-known information-theoretic results show that learning partially observable Markov decision processes (POMDPs) requires an exponential number of samples in the worst case. Yet, this does not rule out the existence of large subclasses of POMDPs over which learning is tractable. In this paper we identify such a subclass, which we call weakly revealing POMDPs. This family rules out the pathological instances of POMDPs where observations are uninformative to a degree that makes learning hard. We prove that for weakly revealing POMDPs, a simple algorithm combining optimism and Maximum Likelihood Estimation (MLE) is sufficient to guarantee polynomial sample complexity. To the best of our knowledge, this is the first provably sample-efficient result for learning from interactions in overcomplete POMDPs, where the number of latent states can be larger than the number of observations.

Adverse events are a serious issue in drug development and many prediction methods using machine learning have been developed. The random split cross-validation is the de facto standard for model building and evaluation in machine learning, but care should be taken in adverse event prediction because this approach tends to be overoptimistic compared with the real-world situation. The time split, which uses the time axis, is considered suitable for real-world prediction. However, the differences in model performance obtained using the time and random splits are not fully understood. To understand the differences, we compared the model performance between the time and random splits using eight types of compound information as input, eight adverse events as targets, and six machine learning algorithms. The random split showed higher area under the curve values than did the time split for six of eight targets. The chemical spaces of the training and test datasets of the time split were similar, suggesting that the concept of applicability domain is insufficient to explain the differences derived from the splitting. The area under the curve differences were smaller for the protein interaction than for the other datasets. Subsequent detailed analyses suggested the danger of confounding in the use of knowledge-based information in the time split. These findings indicate the importance of understanding the differences between the time and random splits in adverse event prediction and suggest that appropriate use of the splitting strategies and interpretation of results are necessary for the real-world prediction of adverse events.
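The difference between the two splitting strategies can be sketched as follows. The record layout (a `year` field per compound) and the 25% test fraction are assumptions for illustration; the abstract does not describe how the study's datasets are structured.

```python
import random

def random_split(records, test_fraction=0.25, seed=0):
    """Shuffle the records and hold out a random fraction for testing."""
    shuffled = records[:]
    random.Random(seed).shuffle(shuffled)
    n_test = int(round(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]

def time_split(records, test_fraction=0.25, key=lambda r: r["year"]):
    """Train on the oldest records and test on the most recent ones."""
    ordered = sorted(records, key=key)
    n_test = int(round(len(ordered) * test_fraction))
    cut = len(ordered) - n_test
    return ordered[:cut], ordered[cut:]

# Hypothetical compounds, one per year.
compounds = [{"id": i, "year": 2000 + i} for i in range(12)]

train_random, test_random = random_split(compounds)
train_time, test_time = time_split(compounds)
```

With the time split, every test record is newer than every training record, which mimics predicting for compounds that did not exist when the model was built. The random split mixes old and new records in both sets.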

While the use of digital agents to support crucial decision making is increasing, trust in the suggestions made by these agents is hard to achieve. Such trust is, however, essential to profit from their application, resulting in a need for explanations of both the decision making process and the model. For many systems, such as common black-box models, achieving at least some explainability requires complex post-processing, while other systems profit from being, to a reasonable extent, inherently interpretable. We propose a rule-based learning system specifically conceptualised for, and thus especially suited to, these scenarios. Its models are inherently transparent and easily interpretable by design. One key innovation of our system is that the rules' conditions and the choice of which rules compose a problem's solution are evolved separately. We utilise independent rule fitnesses, which allow users to tailor their model structure to the given requirements for explainability.

Existing inferential methods for small area data involve a trade-off between maintaining area-level frequentist coverage rates and improving inferential precision via the incorporation of indirect information. In this article, we propose a method to obtain an area-level prediction region for a future observation which mitigates this trade-off. The proposed method takes a conformal prediction approach in which the conformity measure is the posterior predictive density of a working model that incorporates indirect information. The resulting prediction region has guaranteed frequentist coverage regardless of the working model, and, if the working model assumptions are accurate, the region has minimum expected volume compared to other regions with the same coverage rate. When constructed under a normal working model, we prove such a prediction region is an interval and construct an efficient algorithm to obtain the exact interval. We illustrate the performance of our method through simulation studies and an application to EPA radon survey data.
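For readers unfamiliar with conformal prediction, the sketch below shows the simpler split-conformal variant with absolute residuals as the conformity score. This is a swapped-in technique, not the method of the abstract, which uses the posterior predictive density of a working model as the conformity measure. The residuals and the point prediction are made-up numbers.

```python
import math

def split_conformal_interval(calibration_residuals, point_prediction, alpha=0.1):
    """Prediction interval with marginal coverage of at least 1 - alpha.

    Uses the ceil((n + 1) * (1 - alpha))-th smallest absolute calibration
    residual as the half-width, assuming exchangeable data.
    """
    scores = sorted(abs(r) for r in calibration_residuals)
    n = len(scores)
    rank = math.ceil((n + 1) * (1 - alpha))
    if rank > n:
        # Too few calibration points for the requested coverage level.
        return (-math.inf, math.inf)
    half_width = scores[rank - 1]
    return (point_prediction - half_width, point_prediction + half_width)

# Hypothetical residuals (observed minus predicted) on a calibration set.
residuals = [0.3, -1.2, 0.8, -0.1, 0.5, -0.7, 1.5, 0.2, -0.4, 0.9]
low, high = split_conformal_interval(residuals, point_prediction=2.0, alpha=0.2)
```

The coverage guarantee does not depend on how good the point predictor is; a better predictor only makes the interval narrower, which parallels the abstract's point that coverage holds regardless of the working model.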

Recently, the use of machine learning in meteorology has increased greatly. While many machine learning methods are not new, university classes on machine learning are largely unavailable to meteorology students and are not required to become a meteorologist. The lack of formal instruction has contributed to the perception that machine learning methods are 'black boxes', and thus end-users are hesitant to apply machine learning methods in their everyday workflow. To reduce the opaqueness of machine learning methods and lower hesitancy towards machine learning in meteorology, this paper provides a survey of some of the most common machine learning methods. A familiar meteorological example is used to contextualize the machine learning methods while also discussing machine learning topics using plain language. The following machine learning methods are demonstrated: linear regression; logistic regression; decision trees; random forest; gradient boosted decision trees; naive Bayes; and support vector machines. Beyond discussing the different methods, the paper also contains discussions on the general machine learning process as well as best practices to enable readers to apply machine learning to their own datasets. Furthermore, all code (in the form of Jupyter notebooks and Google Colaboratory notebooks) used to make the examples in the paper is provided in an effort to catalyse the use of machine learning in meteorology.

In variable selection, a selection rule that prescribes the permissible sets of selected variables (called a "selection dictionary") is desirable due to the inherent structural constraints among the candidate variables. The methods that can incorporate such restrictions can improve model interpretability and prediction accuracy. Penalized regression can integrate selection rules by assigning the coefficients to different groups and then applying penalties to the groups. However, no general framework has been proposed to formalize selection rules and their applications. In this work, we establish a framework for structured variable selection that can incorporate universal structural constraints. We develop a mathematical language for constructing arbitrary selection rules, where the selection dictionary is formally defined. We show that all selection rules can be represented as a combination of operations on constructs, which can be used to identify the related selection dictionary. One may then apply some criteria to select the best model. We show that the theoretical framework can help to identify the grouping structure in existing penalized regression methods. In addition, we formulate structured variable selection into mixed-integer optimization problems which can be solved by existing software. Finally, we discuss the significance of the framework in the context of statistics.

Models for dependent data are distinguished by their targets of inference. Marginal models are useful when interest lies in quantifying associations averaged across a population of clusters. When the functional form of a covariate-outcome association is unknown, flexible regression methods are needed to allow for potentially non-linear relationships. We propose a novel marginal additive model (MAM) for modelling cluster-correlated data with non-linear population-averaged associations. The proposed MAM is a unified framework for estimation and uncertainty quantification of a marginal mean model, combined with inference for between-cluster variability and cluster-specific prediction. We propose a fitting algorithm that enables efficient computation of standard errors and corrects for estimation of penalty terms. We demonstrate the proposed methods in simulations and in application to (i) a longitudinal study of beaver foraging behaviour, and (ii) a spatial analysis of Loa loa infection in West Africa. R code for implementing the proposed methodology is available at //github.com/awstringer1/mam.

Click-through rate (CTR) prediction plays a critical role in recommender systems and online advertising. The data used in these applications are multi-field categorical data, where each feature belongs to one field. Field information has proved to be important, and several works consider fields in their models. In this paper, we propose a novel approach to model the field information effectively and efficiently. The proposed approach is a direct improvement of FwFM, and is named Field-matrixed Factorization Machines (FmFM, or $FM^2$). We also propose a new explanation of FM and FwFM within the FmFM framework, and compare it with the FFM. Besides pruning the cross terms, our model supports field-specific variable dimensions of embedding vectors, which acts as soft pruning. We also propose an efficient way to minimize the dimension while keeping the model performance. The FmFM model can also be optimized further by caching the intermediate vectors, and it only takes thousands of floating-point operations (FLOPs) to make a prediction. Our experimental results show that it can outperform the FFM, which is more complex. The FmFM model's performance is also comparable to that of DNN models, which require many more FLOPs at runtime.
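A minimal sketch of a field-matrixed interaction term is given below, assuming the pairwise form in which one feature's embedding is multiplied by a matrix specific to the pair of fields and then dotted with the other feature's embedding. The function names, the dictionary keyed by field pairs and the toy numbers are illustrative assumptions; linear terms, bias and the caching optimisation are left out.

```python
def fmfm_pair(v_i, matrix, v_j):
    """Compute (v_i @ matrix) . v_j for a len(v_i) x len(v_j) field-pair matrix."""
    projected = [
        sum(v_i[r] * matrix[r][c] for r in range(len(v_i)))
        for c in range(len(v_j))
    ]
    return sum(p * q for p, q in zip(projected, v_j))

def fmfm_interactions(embeddings, fields, field_matrices):
    """Sum the pairwise interaction terms over all pairs of active features."""
    total = 0.0
    for i in range(len(embeddings)):
        for j in range(i + 1, len(embeddings)):
            matrix = field_matrices[(fields[i], fields[j])]
            total += fmfm_pair(embeddings[i], matrix, embeddings[j])
    return total

# Three active features, one per field, all with 2-dimensional embeddings.
embeddings = [[1.0, 2.0], [0.5, -1.0], [2.0, 0.0]]
fields = [0, 1, 2]
identity = [[1.0, 0.0], [0.0, 1.0]]
identity_matrices = {(0, 1): identity, (0, 2): identity, (1, 2): identity}
fm_like = fmfm_interactions(embeddings, fields, identity_matrices)

# A rectangular matrix lets the two fields use different embedding sizes.
rectangular = fmfm_pair([1.0, 2.0], [[1.0, 0.0, 1.0], [0.0, 1.0, 1.0]], [1.0, 1.0, 1.0])
```

With identity matrices the term reduces to the plain dot products of a factorization machine, which is one way to read the claim that FM fits within the FmFM framework. The rectangular case shows how field-specific embedding dimensions can be supported.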