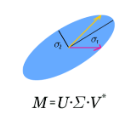

In strong line-of-sight millimeter-wave (mmWave) wireless systems, the rank-deficient channel severely hampers spatial multiplexing. To address this inherent deficiency, multiple reconfigurable-intelligent-surfaces (RISs) are introduced in this study to customize the wireless channel. Utilizing the RIS to reshape electromagnetic waves, we theoretically show that a favorable channel with an arbitrary tunable rank and a minimized truncated condition number can be established by elaborately designing the placement and reflection matrix of RISs. Different from existing works on multi-RISs, the number of elements needed for each RIS to combat the path loss and the limited phase control is also considered. On the basis of the proposed channel customization, a joint transmitter-RISs-receiver (Tx-RISs-Rx) design under a hybrid mmWave system is investigated to maximize the spectral efficiency. Using the proposed scheme, the optimal singular value decomposition-based hybrid beamforming at the Tx and Rx can be obtained without matrix decomposition for the digital and analog beamforming. The bottoms of the sub-channel mode in the water-filling algorithm, which are conventionally uncontrollable, are proven to be independently adjustable by RISs. Moreover, the transmit power required for realizing multi-stream transmission is derived. Numerical results are presented to verify our theoretical analysis and exhibit substantial gains over systems without RISs.
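To make the water-filling remark concrete, the following is a minimal sketch of the classical water-filling power allocation, not the paper's RIS design. It assumes each sub-channel is described by a normalized gain (squared singular value divided by noise power), so the "bottom" of sub-channel i is `1 / gains[i]`. The function name `water_filling` and the example numbers are illustrative assumptions.

```python
def water_filling(gains, total_power):
    """Classical water-filling over parallel sub-channels.

    gains[i] is an assumed normalized gain (squared singular value over
    noise power); the bottom of sub-channel i is 1 / gains[i].
    Returns (powers, water_level).
    """
    # Sort sub-channels by bottom height, lowest (strongest) first.
    bottoms = sorted((1.0 / g, i) for i, g in enumerate(gains))
    powers = [0.0] * len(gains)
    level = 0.0
    # Try to fill the k strongest sub-channels, dropping the weakest one
    # until the water level sits above every bottom that is being filled.
    for k in range(len(bottoms), 0, -1):
        level = (total_power + sum(b for b, _ in bottoms[:k])) / k
        if level > bottoms[k - 1][0]:
            for b, i in bottoms[:k]:
                powers[i] = level - b
            break
    return powers, level


# Hypothetical example: three sub-channels, unit power budget.
powers, level = water_filling([4.0, 1.0, 0.25], total_power=1.0)
```

In this example the weakest sub-channel, whose bottom is 4, receives no power, so only two streams are active. Lowering a bottom or raising the power budget activates more streams, which is the sense in which the bottoms and the transmit power determine multi-stream transmission.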

Related content

Eye blinking detection in the wild plays an essential role in deception detection, driving fatigue detection, etc. Although numerous attempts have been made, most of them have encountered difficulties: the derived eye images have different resolutions as the distance between the face and the camera changes, and a lightweight detection model is required to achieve an inference time short enough for real-time operation. In this research, two problems are addressed: how the eye blinking detection model can learn efficiently from eye images of different resolutions in diverse conditions; and how to reduce the size of the detection model for faster inference. For the first problem, we propose upsampling and downsampling the input eye images to the same resolution, and then determine which interpolation method yields the highest performance of the detection model. For the second problem, although a recent spatiotemporal convolutional neural network used for eye blinking detection has a strong capacity to extract both spatial and temporal characteristics, it still has a large number of network parameters, leading to high inference time. Therefore, using Depth-wise Separable Convolution rather than conventional convolution layers inside each branch is considered in this paper as a feasible solution.
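The parameter saving behind depth-wise separable convolution can be checked with simple arithmetic. The sketch below counts weights only (biases ignored) for a standard convolution and for a depth-wise convolution followed by a point-wise (1x1) convolution. The function names, kernel size, and channel counts are illustrative assumptions and are not taken from the paper's network.

```python
from math import prod


def conv_params(kernel, c_in, c_out):
    """Weights in a standard convolution: one full kernel per (input, output) channel pair."""
    return prod(kernel) * c_in * c_out


def separable_conv_params(kernel, c_in, c_out):
    """Weights in a depth-wise separable convolution.

    Depth-wise step: one kernel per input channel.
    Point-wise step: a 1x1 convolution that mixes c_in channels into c_out.
    """
    return prod(kernel) * c_in + c_in * c_out


# Hypothetical layer: 3x3 kernel, 64 input channels, 128 output channels.
standard = conv_params((3, 3), 64, 128)             # 73728
separable = separable_conv_params((3, 3), 64, 128)  # 8768
ratio = separable / standard                        # equals 1/128 + 1/9
```

The ratio equals `1 / c_out + 1 / prod(kernel)`, so the saving grows with larger kernels, including the 3D kernels used in spatiotemporal networks, for which `kernel` would be a 3-tuple.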

The increasingly crowded spectrum has spurred the design of joint radar-communications systems that share hardware resources and efficiently use the radio frequency spectrum. We study a general spectral coexistence scenario, wherein the channels and transmit signals of both radar and communications systems are unknown at the receiver. In this dual-blind deconvolution (DBD) problem, a common receiver admits a multi-carrier wireless communications signal that is overlaid with the radar signal reflected off multiple targets. The communications and radar channels are represented by continuous-valued range-time and Doppler velocities of multiple transmission paths and multiple targets. We exploit the sparsity of both channels to solve the highly ill-posed DBD problem by casting it into a sum of multivariate atomic norms (SoMAN) minimization. We devise a semidefinite program to estimate the unknown target and communications parameters using the theories of positive-hyperoctant trigonometric polynomials (PhTP). Our theoretical analyses show that the minimum number of samples required for near-perfect recovery depends on the logarithm of the maximum of the number of radar targets and the number of communications paths, rather than on their sum. We show that our SoMAN method and PhTP formulations are also applicable to more general scenarios such as unsynchronized transmission, the presence of noise, and multiple emitters. Numerical experiments demonstrate substantial performance improvements in parameter recovery under different scenarios.

We study a wireless jamming problem consisting of the competition between a legitimate receiver and a jammer, as a zero-sum game with the value to maximize/minimize being the channel capacity at the receiver's side. Most of the approaches found in the literature consider the two players to be stationary nodes. Instead, we investigate what happens when they can change location, specifically moving along a linear geometry. We frame this at first as a static game, which can be solved in closed form, and subsequently we extend it to a dynamic game, under three different versions for what concerns completeness/perfection of mutual information about the adversary's position, corresponding to different assumptions of concealment/sequentiality of the moves, respectively. We first provide some theoretical conditions that hold for the static game and also help identify good strategies valid under any setup, including dynamic games. Since dynamic games, although more realistic, are characterized by an exploding strategy space, we exploit reinforcement learning to obtain efficient strategies leading to equilibrium outcomes. We show how theoretical findings can be used to train smart agents to play the game, and validate our approach in practical setups.

Emerging connected vehicle (CV) data sets have recently become commercially available. This paper presents several tools using CV data to evaluate traffic progression quality along a signalized corridor. These include both performance measures for high-level analysis and visualizations to examine details of the coordinated operation. With the use of CV data, it is possible not only to assess the movement of traffic on the corridor but also to consider its origin-destination (OD) path through the corridor. Results for the real-world operation of an eight-intersection signalized arterial are presented. A series of high-level performance measures are used to evaluate overall performance by time of day, with differing results by metric. Next, the details of the operation are examined with the use of two visualization tools: a cyclic time-space diagram (TSD) and an empirical platoon progression diagram (PPD). Comparing flow visualizations developed with different included OD paths reveals several features. In addition, speed heat maps are generated, showing speed performance along the corridor. The proposed visualization tools portray the corridor performance holistically instead of combining individual signal performance metrics. The techniques exhibited in this study are useful for identifying locations where engineering solutions are required. The recent progress in infrastructure-free sensing technology has significantly increased the scope of CV data-based traffic management systems. The study demonstrates the utility of CV trajectory data for obtaining high-level details of the corridor performance and drilling down into the minute specifics.

Full-duplex (FD) technology has the potential to radically evolve wireless systems, facilitating the integration of both communications and radar functionalities into a single device, thus enabling joint communication and sensing (JCAS). In this paper, we present a novel approach for JCAS that incorporates a reconfigurable intelligent surface (RIS) in the near-field of an FD multiple-input multiple-output (MIMO) node, which is jointly optimized with the digital beamformers to enable JCAS and efficiently handle self-interference (SI). We propose a novel problem formulation for FD MIMO JCAS systems to jointly minimize the total received power at the FD node's radar receiver while maximizing the sum rate of downlink communications subject to a Cramér-Rao bound (CRB) constraint. In contrast to the typically used CRB in the relevant literature, we derive a novel, more accurate, target estimation bound that fully takes into account the RIS deployment. The considered problem is solved using alternating optimization, which is guaranteed to converge to a local optimum. The simulation results demonstrate that the proposed scheme achieves significant performance improvement both for communications and sensing. It is shown that jointly designing the FD MIMO beamformers and the RIS phase configuration to be SI-aware can significantly loosen the requirement for additional SI cancellation.

Blockchain systems suffer from high storage costs as every node needs to store and maintain the entire blockchain data. After investigating Ethereum's storage, we find that the storage cost mostly comes from the index, i.e., Merkle Patricia Trie (MPT), that is used to guarantee data integrity and support provenance queries. To reduce the index storage overhead, an initial idea is to leverage the emerging learned index technique, which has been shown to have a smaller index size and more efficient query performance. However, directly applying it to the blockchain storage results in even higher overhead owing to the blockchain's persistence requirement and the learned index's large node size. Meanwhile, existing learned indexes are designed for in-memory databases, whereas blockchain systems require disk-based storage and feature frequent data updates. To address these challenges, we propose COLE, a novel column-based learned storage for blockchain systems. We follow the column-based database design to contiguously store each state's historical values, which are indexed by learned models to facilitate efficient data retrieval and provenance queries. We develop a series of write-optimized strategies to realize COLE in disk environments. Extensive experiments are conducted to validate the performance of the proposed COLE system. Compared with MPT, COLE reduces the storage size by up to 94% while improving the system throughput by 1.4X-5.4X.
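For readers unfamiliar with the learned index technique mentioned above, the following is a minimal in-memory sketch of the general idea: a model predicts the position of a key in a sorted array, and a recorded maximum error bounds the final search. It is a generic illustration, not COLE's column-based, disk-oriented design. The class name `LinearLearnedIndex`, the single linear model, and the assumption of distinct numeric keys are all illustrative.

```python
import bisect


class LinearLearnedIndex:
    """Toy learned index: position ~ slope * key + intercept, with an error bound."""

    def __init__(self, keys):
        # Assumes distinct numeric keys.
        self.keys = sorted(keys)
        n = len(self.keys)
        mean_k = sum(self.keys) / n
        mean_p = (n - 1) / 2
        var = sum((k - mean_k) ** 2 for k in self.keys)
        cov = sum((k - mean_k) * (p - mean_p) for p, k in enumerate(self.keys))
        # Least-squares fit of position against key.
        self.slope = cov / var if var else 0.0
        self.intercept = mean_p - self.slope * mean_k
        # Largest prediction error seen on the indexed keys.
        self.max_err = max(abs(p - self._predict(k)) for p, k in enumerate(self.keys))

    def _predict(self, key):
        return round(self.slope * key + self.intercept)

    def lookup(self, key):
        """Return the position of key in the sorted array, or None if absent."""
        n = len(self.keys)
        guess = self._predict(key)
        # Search only inside the window guaranteed to contain any indexed key.
        lo = min(max(0, guess - self.max_err), n)
        hi = max(lo, min(n, guess + self.max_err + 1))
        i = bisect.bisect_left(self.keys, key, lo, hi)
        if i < n and self.keys[i] == key:
            return i
        return None


# Hypothetical usage with a handful of keys.
index = LinearLearnedIndex([3, 8, 15, 21, 40, 41, 77])
position = index.lookup(21)
```

The index stores only two model coefficients and one error bound, which is why learned indexes can be much smaller than tree-based ones. The sketch has to be refit on every update, which hints at why frequent updates and disk-based storage, as noted above, call for write-optimized strategies.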

How can we tell whether two neural networks are utilizing the same internal processes for a particular computation? This question is pertinent for multiple subfields of both neuroscience and machine learning, including neuroAI, mechanistic interpretability, and brain-machine interfaces. Standard approaches for comparing neural networks focus on the spatial geometry of latent states. Yet in recurrent networks, computations are implemented at the level of neural dynamics, which do not have a simple one-to-one mapping with geometry. To bridge this gap, we introduce a novel similarity metric that compares two systems at the level of their dynamics. Our method incorporates two components: Using recent advances in data-driven dynamical systems theory, we learn a high-dimensional linear system that accurately captures core features of the original nonlinear dynamics. Next, we compare these linear approximations via a novel extension of Procrustes Analysis that accounts for how vector fields change under orthogonal transformation. Via four case studies, we demonstrate that our method effectively identifies and distinguishes dynamic structure in recurrent neural networks (RNNs), whereas geometric methods fall short. We additionally show that our method can distinguish learning rules in an unsupervised manner. Our method therefore opens the door to novel data-driven analyses of the temporal structure of neural computation, and to more rigorous testing of RNNs as models of the brain.

Two numerical schemes are proposed and investigated for the Yang--Mills equations, which can be seen as a nonlinear generalisation of the Maxwell equations set on Lie algebra-valued functions, with similarities to certain formulations of General Relativity. Both schemes are built on the Discrete de Rham (DDR) method, and inherit its main features: an arbitrary order of accuracy, and applicability to generic polyhedral meshes. They make use of the complex property of the DDR, together with a Lagrange-multiplier approach, to preserve, at the discrete level, a nonlinear constraint associated with the Yang--Mills equations. We also show that the schemes satisfy a discrete energy dissipation (the dissipation coming solely from the implicit time stepping). Issues around the practical implementation of the schemes are discussed; in particular, the assembly of the local contributions in a way that minimises the cost of dealing with nonlinear terms, in conjunction with the tensorisation coming from the Lie algebra. Numerical tests are provided using a manufactured solution, and show that both schemes display a convergence in $L^2$-norm of the potential and electric fields in $\mathcal O(h^{k+1})$ (provided that the time step is of that order), where $k$ is the polynomial degree chosen for the DDR complex. We also numerically demonstrate the preservation of the constraint.

Existing traffic signal control systems rely on oversimplified rule-based methods, and even RL-based methods are often suboptimal and unstable. To address this, we propose a cooperative multi-objective architecture called Multi-Objective Multi-Agent Deep Deterministic Policy Gradient (MOMA-DDPG), which estimates multiple reward terms for traffic signal control optimization using age-decaying weights. Our approach involves two types of agents: one focuses on optimizing local traffic at each intersection, while the other aims to optimize global traffic throughput. We evaluate our method using real-world traffic data collected from an Asian country's traffic cameras. Despite the inclusion of a global agent, our solution remains decentralized as this agent is no longer necessary during the inference stage. Our results demonstrate the effectiveness of MOMA-DDPG, outperforming state-of-the-art methods across all performance metrics. Additionally, our proposed system minimizes both waiting time and carbon emissions. Notably, this paper is the first to link carbon emissions and global agents in traffic signal control.

Detection and recognition of text in natural images are two main problems in the field of computer vision that have a wide variety of applications in analysis of sports videos, autonomous driving, and industrial automation, to name a few. They face common challenges arising from how text is represented and how it is affected by several environmental conditions. The current state-of-the-art scene text detection and/or recognition methods have exploited recent advances in deep learning architectures and reported superior accuracy on benchmark datasets when tackling multi-resolution and multi-oriented text. However, several remaining challenges affecting text in wild images still cause existing methods to underperform, because their models are not able to generalize to unseen data and because labeled data are insufficient. Thus, unlike previous surveys in this field, the objectives of this survey are as follows. First, it offers the reader not only a review of recent advances in scene text detection and recognition, but also the results of extensive experiments using a unified evaluation framework that assesses pre-trained models of the selected methods on challenging cases and applies the same evaluation criteria to these techniques. Second, it identifies several existing challenges for detecting or recognizing text in wild images, namely in-plane rotation, multi-oriented and multi-resolution text, perspective distortion, illumination reflection, partial occlusion, complex fonts, and special characters. Finally, the paper presents insight into potential research directions in this field to address some of the mentioned challenges that scene text detection and recognition techniques still face.