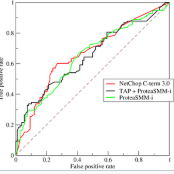

In this paper, we present three estimators of the ROC curve when missing observations arise among the biomarkers. Two of the procedures assume that we have covariates that allow us to estimate the propensity, and the estimators are obtained using an inverse probability weighting method or a smoothed version of it. The third assumes that the covariates are related to the biomarkers through a regression model, which enables us to construct convolution-based estimators of the distribution and quantile functions. Consistency results are obtained under mild conditions. Through a numerical study, we evaluate the finite-sample performance of the different proposals. A real data set is also analysed.
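To make the inverse probability weighting idea concrete, here is a minimal sketch and not the authors' implementation. It assumes the propensities (the probabilities of observing each biomarker) are already known, whereas the abstract describes estimating them from covariates. The function names `weighted_cdf`, `weighted_quantile`, and `ipw_roc` are hypothetical, and missing biomarkers are encoded as `None`. Each observed value is weighted by the inverse of its propensity, and the ROC curve at false positive rate `p` is taken as one minus the weighted distribution function of the diseased group, evaluated at the weighted `(1 - p)` quantile of the healthy group.

```python
from typing import List, Optional, Sequence, Tuple


def weighted_cdf(values: Sequence[float], weights: Sequence[float], t: float) -> float:
    """Weighted empirical distribution function evaluated at t."""
    total = sum(weights)
    return sum(w for v, w in zip(values, weights) if v <= t) / total


def weighted_quantile(values: Sequence[float], weights: Sequence[float], q: float) -> float:
    """Smallest observed value whose weighted CDF is at least q."""
    pairs = sorted(zip(values, weights))
    total = sum(w for _, w in pairs)
    acc = 0.0
    for v, w in pairs:
        acc += w
        if acc / total >= q:
            return v
    return pairs[-1][0]


def _observed(sample: Sequence[Optional[float]],
              propensity: Sequence[float]) -> Tuple[List[float], List[float]]:
    """Keep observed values and attach inverse-propensity weights (assumed known)."""
    vals = [x for x in sample if x is not None]
    wts = [1.0 / pi for x, pi in zip(sample, propensity) if x is not None]
    return vals, wts


def ipw_roc(healthy: Sequence[Optional[float]], healthy_prop: Sequence[float],
            diseased: Sequence[Optional[float]], diseased_prop: Sequence[float],
            p: float) -> float:
    """Sketch of an IPW ROC estimate at false positive rate p."""
    hv, hw = _observed(healthy, healthy_prop)
    dv, dw = _observed(diseased, diseased_prop)
    threshold = weighted_quantile(hv, hw, 1.0 - p)
    return 1.0 - weighted_cdf(dv, dw, threshold)


# Toy data: one missing biomarker in each group, constant propensity 0.5.
roc_half = ipw_roc([1.0, 2.0, None, 4.0], [0.5] * 4,
                   [3.0, None, 5.0, 1.5], [0.5] * 4, p=0.5)
```

With constant propensities the weights cancel and the estimate reduces to the complete-case empirical ROC. The weighting only changes the result when the probability of being observed varies across subjects.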

Related content

Covariance estimation for matrix-valued data has received increasing interest in applications. Unlike previous works that rely heavily on the matrix normal distribution assumption and the requirement of fixed matrix size, we propose a class of distribution-free regularized covariance estimation methods for high-dimensional matrix data under a separability condition and a bandable covariance structure. Under these conditions, the original covariance matrix is decomposed into a Kronecker product of two bandable small covariance matrices representing the variability over row and column directions. We formulate a unified framework for estimating bandable covariance, and introduce an efficient algorithm based on rank-one unconstrained Kronecker product approximation. The convergence rates of the proposed estimators are established, and the derived minimax lower bound shows our proposed estimator is rate-optimal under certain divergence regimes of matrix size. We further introduce a class of robust covariance estimators and provide theoretical guarantees to deal with heavy-tailed data. We demonstrate the superior finite-sample performance of our methods using simulations and real applications from a gridded temperature anomalies dataset and an S&P 500 stock data analysis.

We investigate the feature compression of high-dimensional ridge regression using the optimal subsampling technique. Specifically, based on the basic framework of a random sampling algorithm over features for ridge regression and the A-optimal design criterion, we first obtain a set of optimal subsampling probabilities. Since the obtained probabilities are uneconomical to compute, we then propose nearly optimal ones. With these probabilities, a two-step iterative algorithm is established which has lower computational cost and higher accuracy. We provide theoretical analysis and numerical experiments to support the proposed methods. Numerical results demonstrate the decent performance of our methods.

Existing inferential methods for small area data involve a trade-off between maintaining area-level frequentist coverage rates and improving inferential precision via the incorporation of indirect information. In this article, we propose a method to obtain an area-level prediction region for a future observation which mitigates this trade-off. The proposed method takes a conformal prediction approach in which the conformity measure is the posterior predictive density of a working model that incorporates indirect information. The resulting prediction region has guaranteed frequentist coverage regardless of the working model, and, if the working model assumptions are accurate, the region has minimum expected volume compared to other regions with the same coverage rate. When constructed under a normal working model, we prove such a prediction region is an interval and construct an efficient algorithm to obtain the exact interval. We illustrate the performance of our method through simulation studies and an application to EPA radon survey data.

This paper proposes a numerical method based on the Adomian decomposition approach for the time discretization, applied to the Euler equations. A recursive property is demonstrated that allows the method to be formulated in an appropriate and efficient way. To obtain a fully numerical scheme, the space discretization is achieved using classical DG techniques. The efficiency of the resulting numerical scheme is demonstrated through numerical tests by comparison with the exact solution and with results from the popular Runge-Kutta DG method.

An important challenge in statistical analysis lies in controlling the estimation bias when handling the ever-increasing data size and model complexity. For example, approximate methods are increasingly used to address the analytical and/or computational challenges when implementing standard estimators, but they often lead to inconsistent estimators. Consistent estimators can therefore be difficult to obtain, especially for complex models and/or in settings where the number of parameters diverges with the sample size. We propose a general simulation-based estimation framework that allows us to construct consistent and bias-corrected estimators for parameters of increasing dimensions. The key advantage of the proposed framework is that it only requires computing a simple inconsistent estimator multiple times. The resulting Just Identified iNdirect Inference estimator (JINI) enjoys nice properties, including consistency, asymptotic normality, and finite-sample bias correction better than alternative methods. We further provide a simple algorithm to construct the JINI in a computationally efficient manner. Therefore, the JINI is especially useful in settings where standard methods may be challenging to apply, for example, in the presence of misclassification and rounding. We consider comprehensive simulation studies and analyze an alcohol consumption data example to illustrate the excellent performance and usefulness of the method.

A high-dimensional and sparse (HiDS) matrix is frequently encountered in big data-related applications such as e-commerce systems or social network services. Performing highly accurate representation learning on such a matrix is of great significance, given the strong demand for extracting latent knowledge and patterns from it. Latent factor analysis (LFA), which represents an HiDS matrix by learning low-rank embeddings based on its observed entries only, is one of the most effective and efficient approaches to this issue. However, most existing LFA-based models perform such embeddings on an HiDS matrix directly without exploiting its hidden graph structures, thereby resulting in accuracy loss. To address this issue, this paper proposes a graph-incorporated latent factor analysis (GLFA) model. It adopts two ideas: 1) a graph is constructed for identifying the hidden high-order interaction (HOI) among nodes described by an HiDS matrix, and 2) a recurrent LFA structure is carefully designed with the incorporation of HOI, thereby improving the representation learning ability of the resultant model. Experimental results on three real-world datasets demonstrate that GLFA outperforms six state-of-the-art models in predicting the missing data of an HiDS matrix, which supports its strong representation learning ability on HiDS data.

In this paper we study the finite sample and asymptotic properties of various weighting estimators of the local average treatment effect (LATE), several of which are based on Abadie (2003)'s kappa theorem. Our framework presumes a binary endogenous explanatory variable ("treatment") and a binary instrumental variable, which may only be valid after conditioning on additional covariates. We argue that one of the Abadie estimators, which we show is weight normalized, is likely to dominate the others in many contexts. A notable exception is in settings with one-sided noncompliance, where certain unnormalized estimators have the advantage of being based on a denominator that is bounded away from zero. We use a simulation study and three empirical applications to illustrate our findings. In applications to causal effects of college education using the college proximity instrument (Card, 1995) and causal effects of childbearing using the sibling sex composition instrument (Angrist and Evans, 1998), the unnormalized estimates are clearly unreasonable, with "incorrect" signs, magnitudes, or both. Overall, our results suggest that (i) the relative performance of different kappa weighting estimators varies with features of the data-generating process; and that (ii) the normalized version of Tan (2006)'s estimator may be an attractive alternative in many contexts. Applied researchers with access to a binary instrumental variable should also consider covariate balancing or doubly robust estimators of the LATE.

Policy gradient (PG) estimation becomes a challenge when we are not allowed to sample with the target policy but only have access to a dataset generated by some unknown behavior policy. Conventional methods for off-policy PG estimation often suffer from either significant bias or exponentially large variance. In this paper, we propose the double Fitted PG estimation (FPG) algorithm. FPG can work with an arbitrary policy parameterization, assuming access to a Bellman-complete value function class. In the case of linear value function approximation, we provide a tight finite-sample upper bound on the policy gradient estimation error, which is governed by the amount of distribution mismatch measured in feature space. We also establish the asymptotic normality of the FPG estimation error with a precise covariance characterization, which is further shown to be statistically optimal with a matching Cramer-Rao lower bound. Empirically, we evaluate the performance of FPG on both policy gradient estimation and policy optimization, using either softmax tabular or ReLU policy networks. Under various metrics, our results show that FPG significantly outperforms existing off-policy PG estimation methods based on importance sampling and variance reduction techniques.

The inverse probability weighting (IPW) and doubly robust (DR) estimators are often used to estimate the average causal effect (ATE), but are vulnerable to outliers. The IPW/DR median can be used for outlier-resistant estimation of the ATE, but the outlier resistance of the median is limited and it is not resistant enough for heavy contamination. We propose extensions of the IPW/DR estimators with density power weighting, which can eliminate the influence of outliers almost completely. The outlier resistance of the proposed estimators is evaluated through the unbiasedness of the estimating equations. Unlike the median-based methods, our estimators are resistant to outliers even under heavy contamination. Interestingly, the naive extension of the DR estimator requires bias correction to keep the double robustness even under the most tractable form of contamination. In addition, the proposed estimators are found to be highly resistant to outliers in more difficult settings where the contamination ratio depends on the covariates. The outlier resistance of our estimators from the viewpoint of the influence function is also favorable. Our theoretical results are verified via Monte Carlo simulations and real data analysis. The proposed methods were found to be more resistant to outliers than the median-based methods and estimated the potential mean with a smaller error.
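The vulnerability of the standard IPW estimator to outliers can be illustrated with a small sketch. This is the ordinary weight-normalized IPW estimator of the ATE, not the density power weighted extension proposed in the abstract. The function name `ipw_ate` and the toy data are hypothetical, and the propensity scores are assumed known rather than estimated.

```python
from typing import Sequence


def ipw_ate(outcomes: Sequence[float], treated: Sequence[int],
            propensity: Sequence[float]) -> float:
    """Weight-normalized IPW estimate of the average causal effect.

    treated[i] is 1 for treated units and 0 for controls;
    propensity[i] is the (assumed known) probability of treatment.
    """
    w1 = [t / e for t, e in zip(treated, propensity)]
    w0 = [(1 - t) / (1.0 - e) for t, e in zip(treated, propensity)]
    mu1 = sum(w * y for w, y in zip(w1, outcomes)) / sum(w1)
    mu0 = sum(w * y for w, y in zip(w0, outcomes)) / sum(w0)
    return mu1 - mu0


treated = [1, 1, 0, 0]
propensity = [0.5, 0.5, 0.5, 0.5]

clean = ipw_ate([3.0, 5.0, 1.0, 1.0], treated, propensity)
# Replace one treated outcome with a gross outlier.
contaminated = ipw_ate([3.0, 1000.0, 1.0, 1.0], treated, propensity)

print(clean, contaminated)
```

Because the estimator is a weighted mean, a single contaminated outcome moves the estimate without bound, which is the behaviour the median-based and density power weighted variants are designed to limit.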

Models for dependent data are distinguished by their targets of inference. Marginal models are useful when interest lies in quantifying associations averaged across a population of clusters. When the functional form of a covariate-outcome association is unknown, flexible regression methods are needed to allow for potentially non-linear relationships. We propose a novel marginal additive model (MAM) for modelling cluster-correlated data with non-linear population-averaged associations. The proposed MAM is a unified framework for estimation and uncertainty quantification of a marginal mean model, combined with inference for between-cluster variability and cluster-specific prediction. We propose a fitting algorithm that enables efficient computation of standard errors and corrects for estimation of penalty terms. We demonstrate the proposed methods in simulations and in application to (i) a longitudinal study of beaver foraging behaviour, and (ii) a spatial analysis of Loa loa infection in West Africa. R code for implementing the proposed methodology is available at //github.com/awstringer1/mam.